Copilot just hallucinated your quarterly report. Numbers look sharp, charts pop. You hit send to the boss.

Then reality crashes in. Microsoft’s own terms of use (updated October 24, 2025, no less) serve up this gem:

“Copilot is for entertainment purposes only,” the company warned. “It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

Entertainment. Like a fidget spinner. Or a bad magic trick. But here’s Microsoft, hawking the damn thing to Fortune 500 suits for fat subscriptions.

Step back. This isn’t some rogue intern’s prank. It’s straight from the legal boilerplate governing their AI wunderkind. Social media lit up, with users dunking on the hypocrisy. Tom’s Hardware spotted it first, and PCMag got Microsoft’s spin.

Why Call Your Cash Cow ‘Entertainment’?

Microsoft’s pitching Copilot as the productivity godsend. Embed it in Office, Teams, GitHub. Charge enterprise bucks. Yet the terms read like a casino warning: gamble at your peril.

A spokesperson fed PCMag the classic dodge: “legacy language.” Oh, please. Evolved product, they say. Update coming soon. Smells like a PR scramble after the tweets hit.

And they’re not alone. OpenAI whispers that you shouldn’t treat its output as your “sole source of truth.” xAI is blunter: not “the truth.” AI giants hide behind disclaimers while raking in billions. Classic move: sell the dream, deny the warranty.

But dig deeper. This reeks of the ’90s software wars. Remember Netscape’s eternal beta? “It’s beta, folks. Don’t blame us if it eats your data.” Kept shipping, kept cashing checks. Microsoft pulled the same trick with early Windows. Disclaimers as shields. History rhymes, hard.

My hot take? These terms aren’t accidents. They’re lawsuit armor. When the first big Copilot failure lands in court (say, negligent advice that tanks a deal), Microsoft points to the fine print. “Told ya it was for funsies.” Bold prediction: by 2027, we’ll see class actions testing this.

Is Copilot Actually Useless for Work?

Short answer: no. People swear by it daily. Code snippets that save hours. Emails that don’t suck. But “entertainment only”? That’s Microsoft admitting the unreliability is baked in. Hallucinations happen. Biases creep in. Outputs glitch.

The corporate push ignores that. Sales decks gloss over risks. Demos dazzle. Fine print? Buried. It’s like selling a Ferrari with training wheels: fun ’til it flips.

Users on X aren’t buying the spin. “Paying for a clown?” one quipped. Another: “Enterprise toy at enterprise prices.” Dry humor, but spot on. Microsoft’s betting execs won’t RT the disclaimers.

Zoom out further. This exposes AI’s dirty secret. We’re in the toddler phase, promising adult miracles. Copilot’s useful, sure. But mission-critical? Laughable. Until error rates plummet, these warnings stay. And they should. Blind faith got us Y2K.

Microsoft’s PR Two-Step

That spokesperson quote? Gold.

“As the product has evolved, that language is no longer reflective of how Copilot is used today and will be altered with our next update.”

Evolved. Right. From toy to tool overnight? Nah. It’s pure damage control. The terms sat there for months. Backlash hits, poof: update incoming.

Critics pounce. AI skeptics nod knowingly. We’ve warned: don’t trust black boxes. Now the builders admit it. Hypocrisy? Or prudence?

Here’s the acerbic truth. Microsoft knows Copilot’s limits. They’re banking on user laziness. Most won’t read the terms. Execs greenlight purchases on hype. Subscriptions flow. Disclaimers cover asses. Win-win for Redmond, until a real screw-up lands.

Compare that to Google. Bard flopped publicly; Google iterated quietly. Microsoft? Charges ahead, terms be damned. Arrogance or strategy? You decide.

One-paragraph rant: this whole saga screams Big Tech overreach. Push unripe tech. Pocket the cash. Blame the user when it flops. We’ve seen it with crypto, metaverses, NFTs. AI’s turn now. Wake up, buyers.

What Happens Next for AI Disclaimers?

Expect tweaks. Microsoft’ll swap “entertainment” for vaguer fluff. “Use responsibly,” maybe. But the spirit lingers. No AI firm wants liability.

Regulators are sniffing around. The EU’s AI Act looms. Fines for misleading claims? Possible. The US? Patchwork lawsuits.

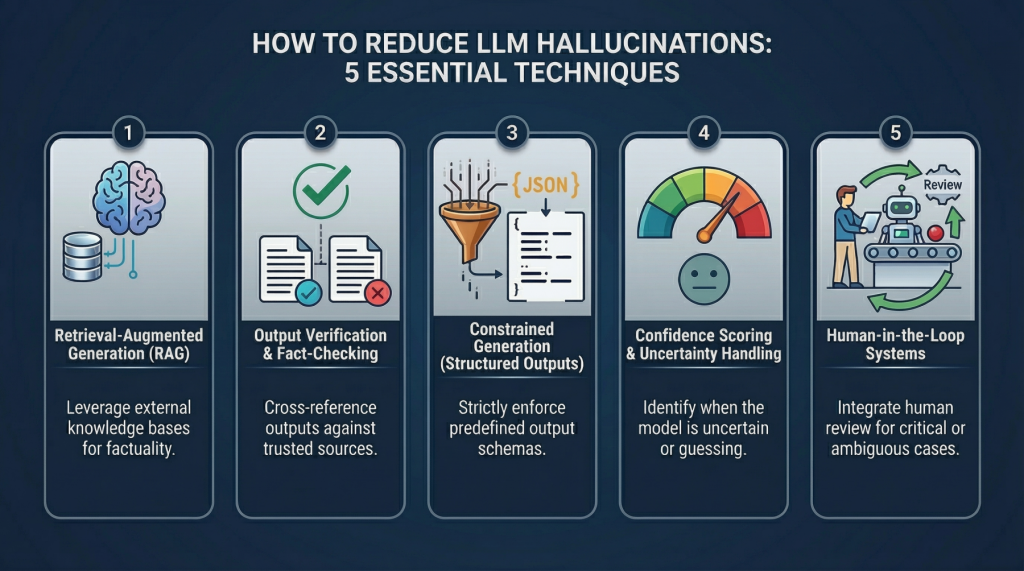

Users adapt. Prompt engineering becomes an art form. Cross-check outputs. Treat AI as a sparring partner, not an oracle. Smart move.

But the humor? Timeless. Paying premium for a product that calls itself a joke. Peak 2025.

Frequently Asked Questions

What do Microsoft Copilot terms of use say exactly?

They label it ‘for entertainment purposes only,’ warn that it can make mistakes, tell users not to rely on it for important advice, and say to use it at your own risk.

Is Copilot safe for business use?

Useful, but risky. Microsoft admits it can be unreliable, so cross-verify everything critical.

Will Microsoft change the Copilot disclaimer?

Yes, apparently. After the social media backlash, Microsoft called the wording ‘legacy language’ and said it will be changed in the next update.