Gemma3 chokes on legal docs. Twenty-seven billion parameters, and it barely extracts a usable knowledge graph.

But swap in Gemma4’s MoE setup—a model that’s a sliver of the size—and boom, graphs pop out crisp, connected, ready for prime time. That’s the hook from this Towards AI piece, anyway. Twenty-one models, chained in one pipeline, supposedly driving knowledge graph quality through the roof.

I’ve seen this movie before. Back in the ’00s, ensembles ruled machine learning—bagging, boosting, random forests stacking weak learners into something punchy. Now it’s LLMs doing the same dance, but with fancier clothes and billion-dollar valuations.

Why Does Legal Text Break Single LLMs?

Legal prose? It’s a nightmare. Dense clauses tangled like Christmas lights, nested definitions, archaic phrasing that makes even humans squint. Feed it to a solo LLM, and yeah, you get entities—names, dates, maybe a court ruling—but relations? Those edges linking ‘plaintiff’ to ‘breach of contract’? Forget it. The model hallucinates half the time, or worse, misses the hierarchy entirely.

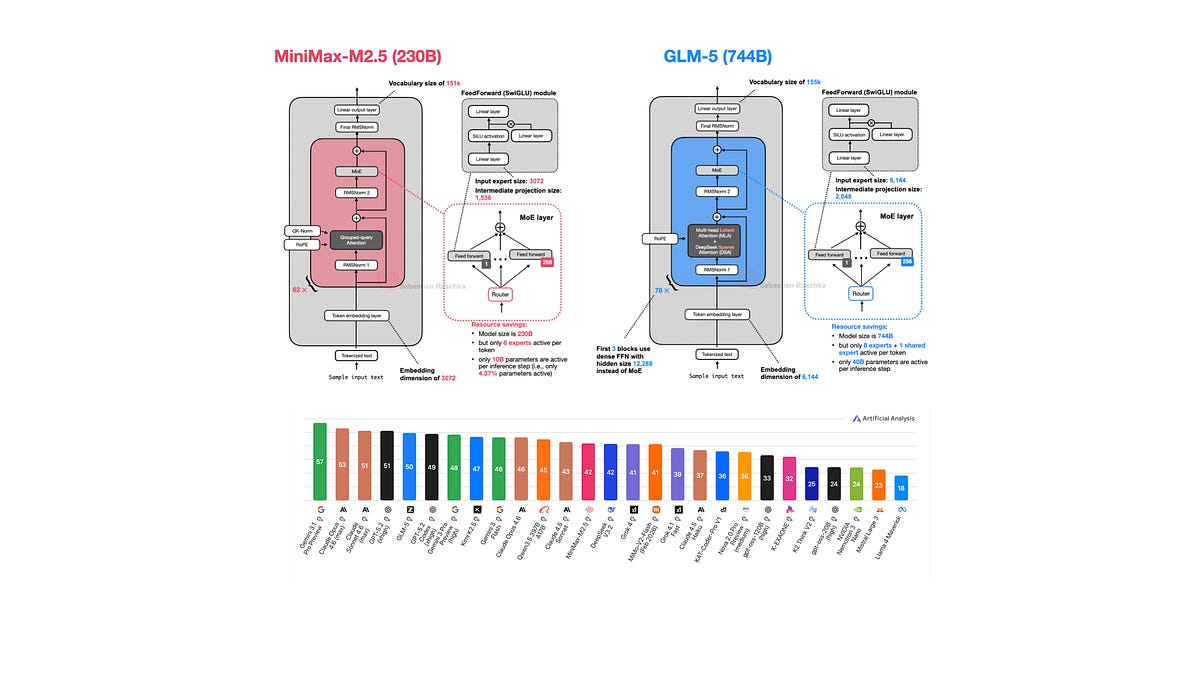

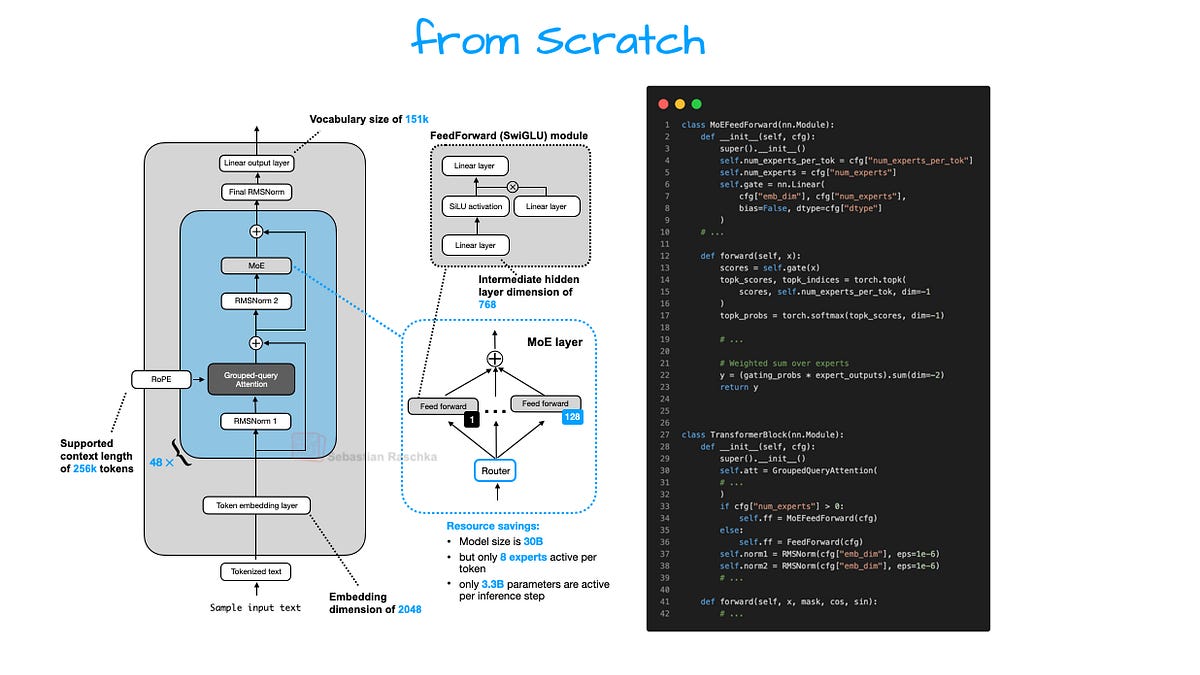

Gemma3 needed 27B parameters to extract a usable graph from legal text. With Gemma4’s MoE architecture, a model a fraction of that size…

That’s the money quote. MoE—Mixture of Experts—lets the model route inputs to specialized sub-models, activating only what’s needed. Efficient, sure. But here’s my unique twist: this isn’t revolution; it’s regression to the mean. Remember XGBoost? Stacking gradient-boosted trees crushed single models on tabular data. Same principle—divide labor, specialize, aggregate. LLMs just needed GPU prices to crash before rediscovering it.

And the pipeline? Twenty-one models. Not one mega-brain, but a relay race: one spots entities, another infers relations, a third prunes noise, and so on. Quality skyrockets because each step’s laser-focused, not some generalist fumbling everything.

But. Who’s paying for the orchestration?

Knowledge graph quality isn’t magic—it’s engineering grunt work. That pipeline demands data pipelines, error propagation fixes, human-in-the-loop tweaks (they don’t mention that part). I’ve covered graph databases since Neo4j was a startup pitch; quality always boiled down to iteration, not parameters.

Is a 21-Model Pipeline Scalable—or a Compute Trap?

Scale it up. Say, enterprise contracts, not just legal snippets. Your 21 models multiply compute by 21, right? Nope—MoE sparsity means only fractions fire per token. Gemma4 sips power compared to Gemma3’s guzzle.

Yet, cynicism kicks in. Who makes bank? Not the open-source tinkerers. It’s vendors like Neo4j, TigerGraph, or the new AI graph startups (Stardog? Anyone?). They’ll charge premium for ‘production-ready’ pipelines wrapping this. And the models? Google’s Gemma family—free-ish, but hook you on their ecosystem, Vertex AI, whatever. Classic freemium trap.

Prediction time: this peaks at niche verticals—legal, pharma, finance—where graphs shine for compliance, discovery. General text? LLMs hallucinate less with RAG anyway. No one’s rebuilding Wikipedia this way.

Look, I’ve grilled execs at graph summits. They admit: raw LLM graphs suck without post-processing. This pipeline’s just admitting the emperor has no clothes—knowledge graph quality demands humility, not hubris.

A single sentence: Ensembles win. Always have.

Who Actually Profits from Knowledge Graph Hype?

Follow the money. Towards AI post hints at efficiency gains, but skips the business angle. Knowledge graphs? Hot again thanks to LLMs exposing retrieval limits. Pinecone, Weaviate pivot to hybrid vector+graph stores. Millions in funding.

But quality? That’s the moat. Your graph’s only as good as its recall-precision balance. Twenty-one models nail that by specialization—one for temporal relations (laws evolve), one for ontologies (legal thesauruses are gold). Result: downstream apps—query engines, recommendation—hum.

Skeptical vet take: PR spin calls it ‘breakthrough.’ Nah. It’s cost optimization. Train 21 tiny experts cheaper than one giant. Deploy modular—swap a relation extractor without retraining all. Smart, if you’re not chasing AGI dreams.

Historical parallel? 2010s deep learning shift from hand-crafted features to end-to-end. Graphs resisted because structure matters. Now, pipelines bridge that—weak learners for strong structure. Old school vindicated.

And the compute savings? Real. But watch: as MoE scales (Mixtral, anyone?), single models creep back. Pipeline overhead—latency in chaining—bites at inference time. Enterprises hate that.

One punchy para: Hype cycles end. Graphs endure for reasoning tasks LLMs botch.

Why Should Developers Care About This Pipeline?

You’re building agents? RAG pipelines? Graphs fix hallucination by grounding in structured facts. This 21-model setup delivers that without 100B param monsters.

Tinker it: open Gemma weights, LangChain for orchestration, Neo4j sink. Boom—prototype. But production? Label data. Legal’s annotated goldmines exist (Caselaw Access Project). Generalize? Tough.

Cynical advice: don’t bet the farm. Test on your domain. Quality metrics—node accuracy, edge F1—rule. Buzzword ‘knowledge graph’? Yawn. Measurable recall does.

🧬 Related Insights

- Read more: Appeals Court Slaps Down Anthropic’s Bid to Ditch Pentagon Blacklist

- Read more: Sam Altman’s OpenAI Circus: Can We Trust Him with Our Future?

Frequently Asked Questions

What actually drives knowledge graph quality?

It’s not model size—it’s specialized pipelines chaining 21 models for entities, relations, pruning. Ensembles beat generalists.

Does MoE architecture fix LLM graph extraction?

For legal text, yes—Gemma4 crushes Gemma3 on efficiency. But needs pipeline glue; solo MoE still wanders.

Is a 21-model pipeline better than one big LLM for knowledge graphs?

Usually. Modular, cheaper, precise. Scales poorly without orchestration tools, though.