What if your AI agent’s biggest enemy isn’t the LLM, but the framework trapping it?

John Nichev slammed into this wall hard. Building production agents for actual customers—not demos—he found LangChain’s promises crumbling under real loads. Five packages for one job. Hidden protocols nuking debuggers. Paid logs to trace your own code. LangGraph restarting nodes on human pauses. Brutal.

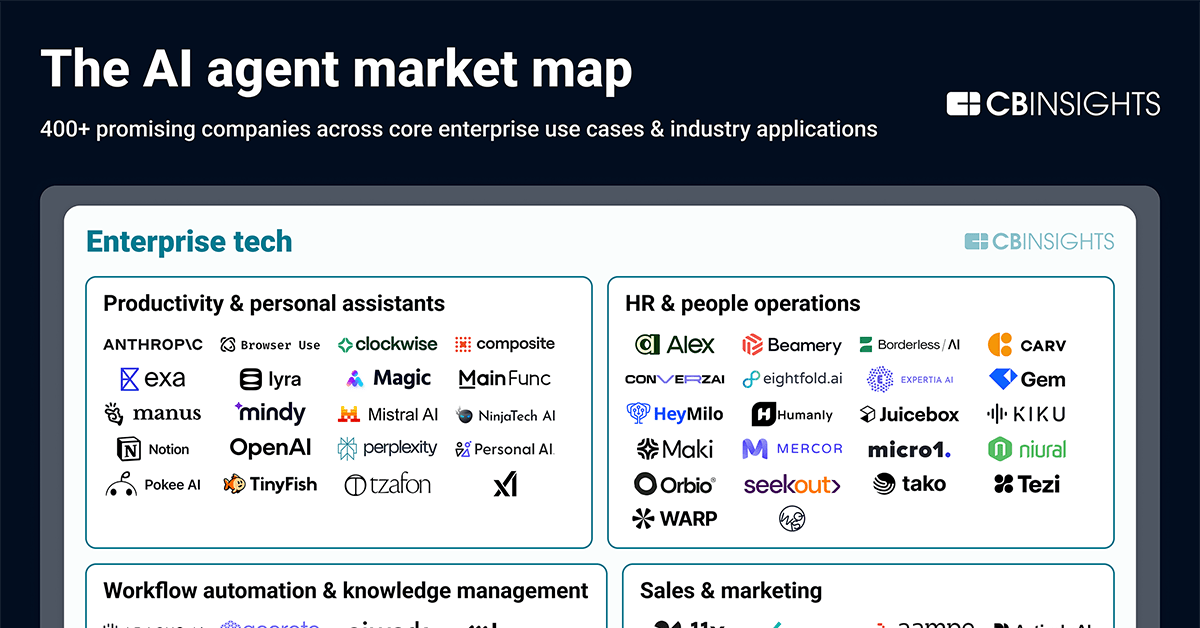

And here’s the market truth: AI agent frameworks are exploding. Gartner pegs agentic AI at $47 billion by 2027, up from near-zero today. But adoption stalls at prototypes. Why? Frameworks like LangChain (1.2 million weekly PyPI downloads) prioritize integrations over simplicity. They’re Swiss Army knives—until you need a scalpel.

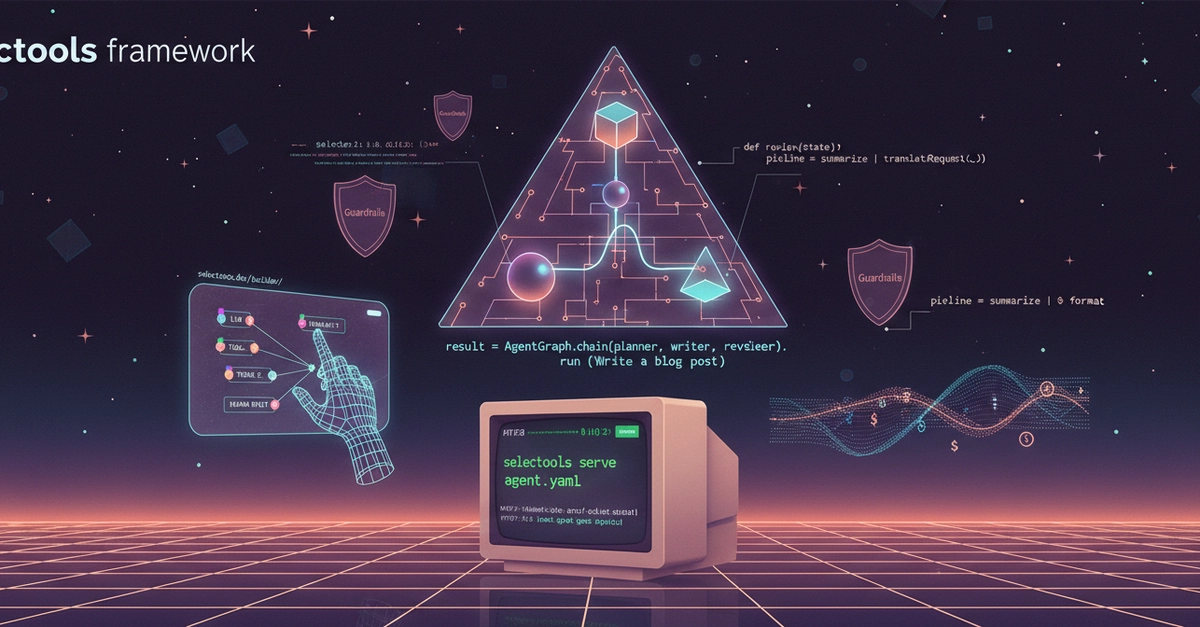

Enter Selectools. Nichev’s brainchild. Pip-installable, Apache-2.0 licensed, with 4,612 tests at 95% coverage. It’s tiny compared to LangChain’s behemoth.

Tool calling that just works. Define a function, the LLM calls it. No adapter layers, no schema gymnastics. Works the same across OpenAI, Anthropic, Gemini, and Ollama.

That’s from the announcement. No fluff.

Look, I’ve seen this movie before. Back in 2008, Flask launched as a ‘micro’ web framework. Django ruled with batteries-included glory, but Flask said: write Python, not XML configs. Result? Flask hit 100k stars on GitHub; it powers Pinterest, Netflix backends. Selectools feels like that pivot for agents—pure Python routing, no DSLs, no Pregel nonsense.

Why Does LangChain Feel Like Enterprise Bloat?

LangChain’s LCEL pipes look elegant. Until they don’t. That | operator? Masks a Runnable protocol. Debug it? Good luck. LangSmith? Pay to peek at your traces. Production agents need human-in-the-loop without restarts—LangGraph chokes there.

Nichev’s team hit these at work. Real customer requests. Not hackathons. Selectools flips it: traces baked in, free. Every run() spits timings, costs, tool calls. Guardrails? PII detection, injection blocks—shipped, not bolted-on.

Multi-agent? AgentGraph.chain(planner, writer, reviewer). One line. Pipelines? summarize | translate | format. Human pauses? Yield InterruptRequest, resume exactly. Deploy? selectools serve agent.yaml. Endpoints, SSE, playground—instant.

Data backs the skepticism. LangChain’s GitHub issues: 2,500 open. Selectools? Pre-launch audit fixed 9 critical bugs via 5-agent hunts. 152 model defs with pricing. 50 evaluators, no SaaS.

But my unique angle: this isn’t just dev relief. It’s a bet against vendor lock-in. OpenAI’s Swarm, Anthropic’s APIs—they’re pushing agents, but frameworks glue them. Selectools stays agnostic, local-first with Ollama. In a world where 70% of AI projects fail (Forrester), neutrality wins.

Is Selectools Production-Ready—or Just a Prototype Killer?

Short answer: yes, for most. 76 runnable examples. 44 interactive docs with stability badges. Visual builder? Browser-based, GitHub Pages, drag-drop to YAML/Python. Zero install.

Caveats. Community’s young—fewer integrations than LangChain’s 50+. If you crave managed platforms, stick with LC. But for Python purists? It’s liberating. Errors as tracebacks, not abstractions. Routing as functions, not graphs.

Benchmarks? 40 real-API evals vs. OpenAI/Anthropic/Gemini. Costs tracked natively. Python 3.9-3.13.

Nichev admits: LangChain safer for massive scales today. Fair. But trends favor lean: FastAPI dethroned Flask for APIs. Selectools could do that for agents.

Prediction—and here’s my edge: by Q2 2025, Selectools forks 10k+ stars. Why? Agent fatigue. Devs want tools that vanish, not frameworks that star. Pip install selectools. Test it.

The builder demo? Game-changer. https://selectools.dev/builder/ —wire agents visually, export code. No subscriptions. Smells like Cursor’s rise: make AI dev visual, accessible.

Critique the spin? None here. Nichev calls his shots straight—no ‘revolutionary’ hype. Refreshing in AI’s buzzword swamp.

So, market dynamics: agents need simplicity to hit production. LangChain’s 80% prototype share drops as Selectools-like challengers rise. Watch PyPI downloads.

What Happens When Agents Go Local-First?

Ollama support shines. Run agents offline, cheap. Costs? Tracked per model—152 defs. No cloud bills sneaking up.

Production story: one-command serve. Handles parallel 5-agent bugs fixed pre-launch. Security audit: 56 findings squashed.

Wander a bit—I’ve built agents. Human loops killed me in LC. Selectools’ yield? Resumes precisely. Huge for workflows.

🧬 Related Insights

- Read more: Stripe’s AI Billing Hack: Token Costs Become Startup Cash Machines

- Read more: Python 3.15’s JIT Surges Ahead—Early Wins Rewrite the Script

Frequently Asked Questions

What is Selectools?

Selectools is a lightweight Python library for building, tracing, and deploying AI agents with simple tool calling, guardrails, and multi-agent orchestration—no bloat.

Selectools vs LangChain?

Selectools prioritizes simplicity and production readiness with free tracing and pure Python; LangChain offers more integrations but adds complexity and costs for logs.

How do I get started with Selectools?

pip install selectools, then define agents in Python or YAML, run with .run(), deploy via selectools serve agent.yaml. Docs at https://selectools.dev.