Agent dead-ends. Again. That third-party API spits back errors, but does it adapt? Nope. It hammers the same failed plan, round and round, like a Roomba drunk on determinism.

Zoom out. We’re talking agentic loops—those hype machines wrapping LLMs in endless Observe-Reason-Act cycles. Promised autonomy. Delivered frustration. And the culprits? Temperature and seed values, those invisible knobs devs tweak (or forget) that turn resilient bots into tragic repeats.

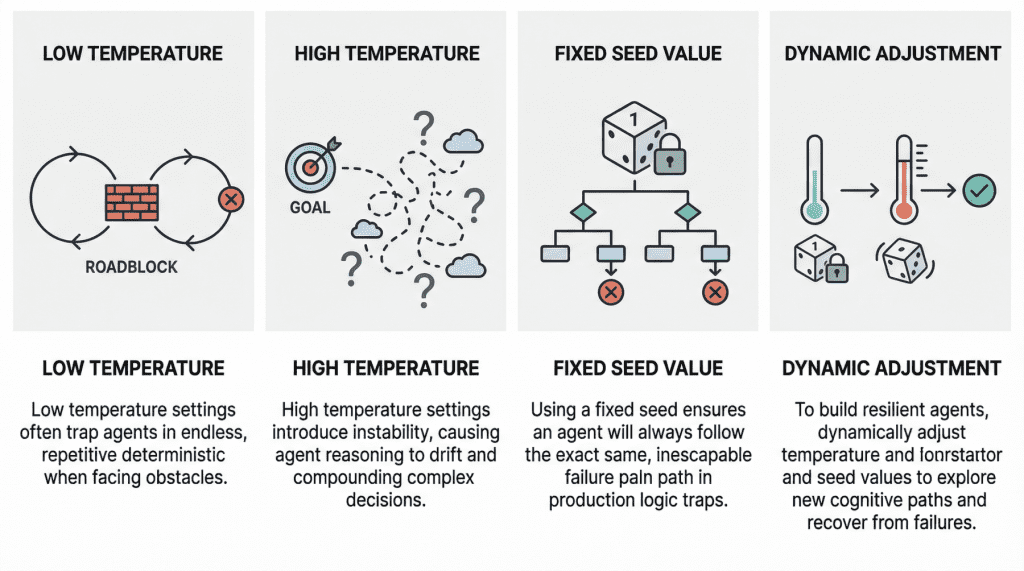

Here’s the original sin, straight from the research trenches: low temperature locks agents into deterministic loops. Near zero, sampling collapses toward greedy decoding: the LLM grabs its single most likely token nearly every time, like a robot on rails. No randomness, no escape hatches.

“With a low temperature and exceedingly deterministic behavior, it lacks the kind of cognitive randomness or exploration needed to pivot.”

Spot on. Studies nail it: when roadblocks hit, agents bail early or loop through identical flops. Flip to high temp? Chaos reigns in the form of reasoning drift. Outputs veer wild, hallucinations sprout, goals evaporate in probabilistic fog. Multi-step hell.
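Under the hood, temperature T divides the model’s raw scores (logits) before the softmax, so each token’s probability is exp(z_i / T) / Σ_j exp(z_j / T). Small T sharpens the distribution toward the top token; large T flattens it. A minimal Python sketch, using made-up logits for three hypothetical candidate actions (real samplers do this across the whole vocabulary, often combined with top-p or top-k filtering):

```python
import math

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw logits into sampling probabilities at the given temperature."""
    if temperature <= 0:
        # The T -> 0 limit is greedy argmax decoding, which has no probabilities to sample.
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for three candidate next actions (illustrative only).
logits = [2.0, 1.0, 0.5]
for t in (0.1, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: " + ", ".join(f"{p:.3f}" for p in probs))
```

At T=0.1 the first action soaks up nearly all the probability mass; at T=1.5 it drops to roughly half. Same logits, very different agent.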

Why Do Agentic Loops Implode on Low Temperature?

Think of a casino die weighted to one face. That’s your low-temp agent. Rigid. Predictable. Fine for one-shot prompts. Disaster in loops.

Real-world gut punch: debugging deploys. Logs scream failure. The agent reads ’em the same way every time and picks the same dud fix. No variety. No win. I’ve seen prod systems burn cash retrying the exact wrong tool call a dozen times. Brutal.

But crank temp to 0.8-plus? Welcome drift. Each reasoning step samples broadly, and the small deviations compound into madness. The agent forgets the brief. Invents steps. Suddenly, your email agent’s drafting poetry instead of replies. Funny once. Costly forever.
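Why does per-step drift snowball? A back-of-envelope Python sketch, assuming each step independently stays on track with a fixed probability. That is a simplification (real steps are correlated), and the per-step rates below are made up for illustration. The chance that a whole run stays clean is that probability raised to the number of steps:

```python
def clean_run_probability(per_step: float, steps: int) -> float:
    """Probability that every step stays on track, assuming independent steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability")
    return per_step ** steps

# Illustrative per-step success rates, not measurements.
for per_step in (0.99, 0.95, 0.90):
    print(f"{per_step:.2f} per step -> {clean_run_probability(per_step, 20):.3f} over 20 steps")
```

Even a 95% per-step rate leaves only about a 36% chance of a clean 20-step run. Shave a few points off every step with a hot sampler and the odds crater.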

Production war story: fixed seeds. They’re for dev reproducibility, sure. But slip ’em live? Kiss robustness goodbye. Same seed plus same prompt means the same pseudo-random path. Recovery loops? Doomed reruns. The agent inspects logs and botches the parse identically, every. Single. Retry.

Fixed Seeds: Dev Crutch or Prod Poison?

Seeds initialize the RNG. Fixed one? Replays the flop tape. Dynamic seeds (or none at all) let fresh rolls happen. Obvious fix. Yet so many pipelines ship with ’em baked in. Laziness? Oversight? Pick your poison.
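To see the replay effect without an LLM in the loop, here’s a toy Python sketch in which a seeded RNG stands in for the model’s sampler. `pick_fix` and `CANDIDATE_FIXES` are hypothetical stand-ins for one sampled recovery decision. A fixed seed returns the same “random” fix on every retry; a fresh seed per attempt gives each retry a new roll. Some hosted LLM APIs expose a `seed` request parameter; where yours does, the same rule applies: pin it in tests, not in production retry paths.

```python
import random
import secrets

# Hypothetical menu of recovery actions an agent might sample from.
CANDIDATE_FIXES = ["retry_same_call", "refresh_token", "switch_endpoint", "backoff_and_retry"]

def pick_fix(seed: int) -> str:
    """Toy stand-in for one sampled decision: a seeded RNG plays the LLM sampler."""
    rng = random.Random(seed)
    return rng.choice(CANDIDATE_FIXES)

# Fixed seed: every retry replays the exact same choice.
fixed_seed_attempts = [pick_fix(42) for _ in range(5)]

# Fresh seed per retry: each attempt gets an independent roll.
fresh_seed_attempts = [pick_fix(secrets.randbits(32)) for _ in range(5)]

print("fixed:", fixed_seed_attempts)
print("fresh:", fresh_seed_attempts)
```

The fixed list is one fix repeated five times. The fresh list usually mixes it up, though with only four options a repeat can still happen by chance.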

Corporate spin screams “agents revolutionize workflows!” Yeah, if you tune the basics. Here’s my hot take, absent from the research echo chamber: this echoes the agent and robotics work of the late 80s and 90s. Remember Brooks’ subsumption architecture? Overly deterministic bots flailing in real worlds. We laughed then. Now we’re building the same traps, with billion-param LLMs. History’s loop, unlearned.

Tuning time. Low temp for precision tasks, but sprinkle in randomness via temperature schedules. Start deterministic, ramp up on stalls. Detect loops? Jack temp, reseed. High temp baseline? Anchor it with system prompts that restate the goal. Frameworks like LangChain and LlamaIndex let you set temperature per model, so use ’em.
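A minimal sketch of that schedule in Python, assuming your model client accepts a temperature and, optionally, a seed on each call. `SamplingParams` and `next_sampling_params` are hypothetical names. The 0.2 starting point matches the low end suggested in this piece; the 0.2 step and the 0.8 cap are illustrative guesses meant to stop short of the drift zone, not tuned values.

```python
import secrets
from dataclasses import dataclass

@dataclass(frozen=True)
class SamplingParams:
    temperature: float
    seed: int

def next_sampling_params(stalled_attempts: int,
                         base_temp: float = 0.2,
                         step: float = 0.2,
                         max_temp: float = 0.8) -> SamplingParams:
    """Start near-deterministic, raise temperature on each consecutive stall, reseed every call.

    stalled_attempts: how many consecutive cycles made no progress (0 = healthy).
    """
    # round() keeps float noise like 0.8000000000000002 out of the schedule
    temperature = min(round(base_temp + step * stalled_attempts, 2), max_temp)
    # A fresh seed per call, so retries never replay the same sampling path.
    return SamplingParams(temperature=temperature, seed=secrets.randbits(32))

for stalls in range(5):
    params = next_sampling_params(stalls)
    print(stalls, params.temperature)
```

Reset the stall counter to zero once the agent makes progress, so temperature drops back to precise, low-variance sampling.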

Cost hack: dynamic seeding slashes retries. One prod tweak I consulted on? Halved API calls. Agents pivoted naturally, no human babysitting. Resilience without the bill.

But here’s the skeptic’s squint. Hype trains roll on, ignoring these. OpenAI demos flawless agents with zero mention of temp fiddles. Anthropic papers gloss over failures. Devs chase shiny ReAct variants, blind to parameter plumbing. Wake up. Agents aren’t magic. They’re tunable machines that fail as badly as you configure ’em.

Bold call: untuned agents cap at toys. Prod-scale demands adaptive params. Predict chaos otherwise—hallucinated fixes, infinite spins, wallets drained. Fix now, or watch the backlash.

Can You Bulletproof Agents Against Drift?

Short answer: mostly. Hybrid temps—low for planning, high for exploration. Seed rotation per cycle. Loop detectors: track state hashes, bail on repeats. Observability dashboards plotting temp vs. success? Gold.
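The state-hash loop detector takes only a few lines. A Python sketch, where `LoopDetector` is a hypothetical helper and the definition of “state” (tool name, arguments, and the observation the agent got back) is an assumption; pick whatever fields actually capture progress in your agent. When it fires, raise temperature and reseed, or bail to a human.

```python
import hashlib
import json

class LoopDetector:
    """Flags an agent that keeps revisiting the same (tool, args, observation) state.

    Hypothetical helper: what counts as 'state' is an assumption, adjust to taste.
    """

    def __init__(self, max_repeats: int = 2):
        self.max_repeats = max_repeats
        self.counts: dict[str, int] = {}

    @staticmethod
    def state_hash(tool: str, args: dict, observation: str) -> str:
        # sort_keys makes the hash independent of argument ordering
        payload = json.dumps({"tool": tool, "args": args, "obs": observation}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def record(self, tool: str, args: dict, observation: str) -> bool:
        """Record one step; return True once this state has been seen more than max_repeats times."""
        h = self.state_hash(tool, args, observation)
        self.counts[h] = self.counts.get(h, 0) + 1
        return self.counts[h] > self.max_repeats

detector = LoopDetector(max_repeats=2)
for attempt in range(5):
    if detector.record("deploy", {"env": "prod"}, "HTTP 500"):
        print(f"loop detected on attempt {attempt}")  # reseed, raise temp, or escalate
        break
```

Run as-is, the third identical failure (attempt 2) trips the detector.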

Edge cases bite. Noisy APIs? Temp can’t miracle bad data. Tool gaps? Still human homework. But nail params, and agents hum—cost down, uptime way up.

Dry laugh: we’ve got models outsmarting chess grandmasters, yet they loop like drunk dialers sans randomness. AI’s growing pains? Nah. Basic engineering, ignored.

Frequently Asked Questions

What causes deterministic loops in AI agents?

Low temperature kills randomness, so the agent blindly repeats failed paths when errors hit.

How do fixed seeds doom production agents?

They lock reasoning into identical flops across retries—no escape.

Best temperature for resilient agentic loops?

Start low (0.2), spike to 0.7+ on stalls. Dynamic, always.