What if the smartest AI isn’t locked behind a paywall anymore?

Open models. They’ve crossed a threshold. And it’s not hype – it’s evals screaming it. We’ve got GLM-5 from Z.ai and MiniMax M2.7 posting scores that rival closed frontier darlings like Claude Opus on agent tasks. File ops? Nailed. Tool use? Solid. Instructions? Followed without the drama.

But here’s the kicker – they’re dirt cheap. Slash that budget.

Why Chase Open Models When Closed Ones Promise ‘Frontier’ Magic?

Cost. Latency. Performance. That’s the holy trinity builders chase. Closed models? Dreamy on paper. Hellish in the wallet. Run Claude Opus at scale, and you’re bleeding $25 per million output tokens. GPT-5.4? Still $15. Ouch.

Open alternatives laugh. GLM-5 at $0.95 input, $3.15 output. MiniMax M2.7? $0.30 in, $1.20 out. Scale to 10 million output tokens a day – Opus devours $250 daily. MiniMax? Twelve bucks. That’s roughly $87k a year you’d pocket instead of torching.
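Want to check the math? Here is a minimal sketch. It assumes all 10 million daily tokens are output tokens (input costs are ignored) and uses the output prices from the pricing table in this article. The function names are ours, purely for illustration.

```python
# Output prices in $ per million tokens, copied from the article's pricing table.
OUTPUT_PRICE_PER_M = {
    "Claude Opus 4.6": 25.00,
    "GPT-5.4": 15.00,
    "GLM-5": 3.15,
    "MiniMax M2.7": 1.20,
}


def daily_output_cost(model: str, output_tokens_per_day: int) -> float:
    """Dollar cost per day, counting output tokens only (an assumption)."""
    return OUTPUT_PRICE_PER_M[model] * output_tokens_per_day / 1_000_000


def yearly_savings(from_model: str, to_model: str,
                   output_tokens_per_day: int, days: int = 365) -> float:
    """Dollars saved per year by switching from one model to another."""
    delta = (daily_output_cost(from_model, output_tokens_per_day)
             - daily_output_cost(to_model, output_tokens_per_day))
    return delta * days


if __name__ == "__main__":
    tokens = 10_000_000  # 10 million output tokens a day
    for name in OUTPUT_PRICE_PER_M:
        print(f"{name}: ${daily_output_cost(name, tokens):,.2f}/day")
    saved = yearly_savings("Claude Opus 4.6", "MiniMax M2.7", tokens)
    print(f"Opus -> MiniMax saves about ${saved:,.0f}/year")
```

Opus minus MiniMax is $238 a day, which works out to $86,870 over 365 days. Plug in your own token volume; add input pricing if your workload is prompt-heavy.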

And speed? No contest. Open models served on Groq or Baseten zip along at 70 tokens per second with 0.65s latency. Opus? Snoozes at 34 t/s, 2.56s. Users tap out waiting.
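What do those numbers mean for a real response? A rough sketch, assuming the latency figure is time to first token and that throughput stays constant for the whole generation. Both are simplifications, and the 500-token response length is a made-up example.

```python
def response_time_seconds(output_tokens: int,
                          first_token_latency_s: float,
                          tokens_per_second: float) -> float:
    """Estimated wall-clock time: wait for the first token, then stream the rest.

    Assumes constant throughput, which real serving stacks only approximate.
    """
    return first_token_latency_s + output_tokens / tokens_per_second


if __name__ == "__main__":
    n = 500  # hypothetical response length in tokens
    open_model = response_time_seconds(n, 0.65, 70)
    opus = response_time_seconds(n, 2.56, 34)
    print(f"open model: {open_model:.1f}s, Opus: {opus:.1f}s")
```

For that 500-token reply, the estimate comes to about 7.8 seconds for the open model versus about 17.3 seconds for Opus. Multiply by the number of steps in an agent loop and the gap compounds.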

Closed vendors spin tales of unmatched smarts. But for agents – real workflows – open’s predictability wins. No black-box surprises. Deploy without fear.

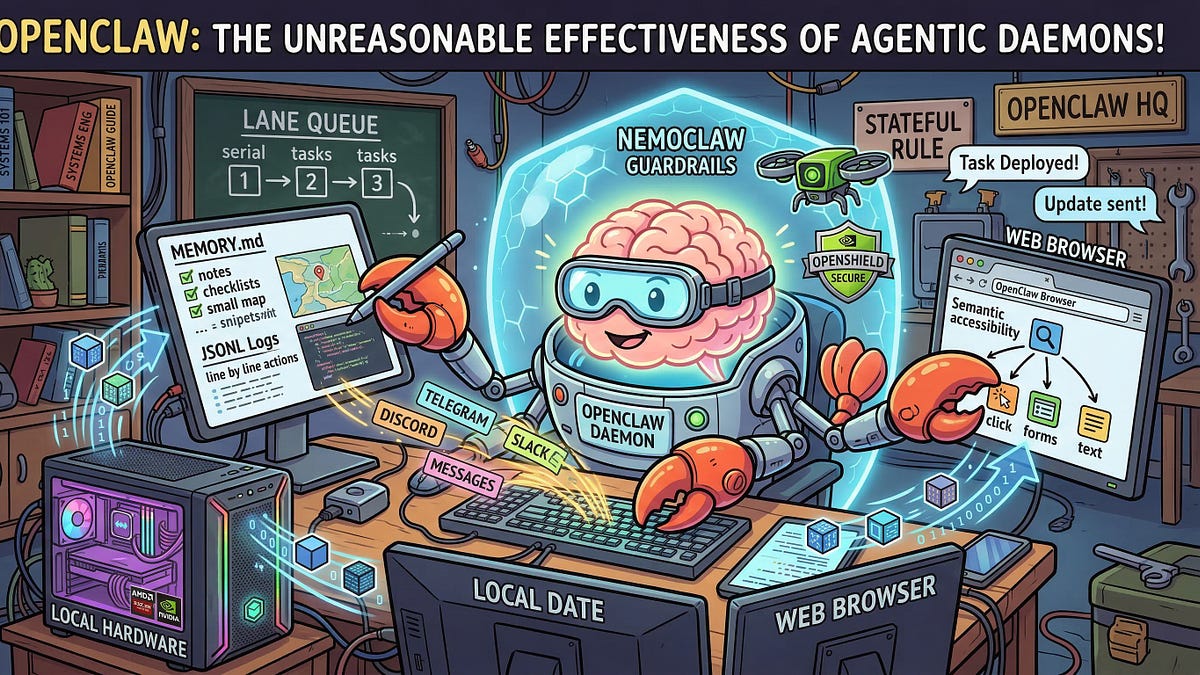

Have Open Models Actually Crossed the Agent Threshold?

Deep Agents evals don’t lie. Seven categories: files, tools, retrieval, chat, memory, summaries, unit tests. Hard passes or fails. No fluff.

GLM-5: 0.64 correctness. 94 of 138 passed. MiniMax: 0.57, 85 passed. Closed frontiers? Similar ballpark. But open’s efficient – step ratios near 1.0, tool calls on budget.

“GLM-5 (z.ai) and MiniMax M2.7 each score similarly to closed frontier models on core agent tasks such as file operations, tool use, and instruction following.”

That’s the original verdict. Punchy. True.

Per category? GLM-5 aces file ops (1.0), retrieval (1.0), unit tests (1.0). Tool use at 0.82. Weak on convo (0.38), but agents aren’t chatbots.

Skeptical? Check the GitHub. LangSmith traces. Real-time runs. No smoke.

It’s like Linux in the ’90s – open source ate proprietary Unix’s market. Clunky at first. Then unstoppable. Open models? Same script. Bold call: in 12 months, 70% of agent deploys run open weights. Closed? Niche luxury.

The Eval Guts – No Magic, Just Math

Success assertions. Efficiency checks. Correctness: tests passed over total. Solve rate: accuracy combined with speed. Step ratio: actual steps over expected steps. Tool call ratio: the same, for tool calls.

A model nails a task in two steps, not five? Gold. GLM-5’s 1.02 step ratio means it’s lean. No wandering.
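To make the metrics concrete, here is a small sketch of how correctness, step ratio, and tool call ratio could be computed from per-task results. The `RunResult` record and the choice to aggregate ratios as total actual over total expected are our assumptions, not the Deep Agents eval code. Solve rate is left out because its exact formula isn't given here.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """One eval task outcome (hypothetical record shape)."""
    passed: bool
    steps: int
    expected_steps: int
    tool_calls: int
    expected_tool_calls: int


def correctness(results: list[RunResult]) -> float:
    """Fraction of tasks that passed their success assertions."""
    return sum(r.passed for r in results) / len(results)


def step_ratio(results: list[RunResult]) -> float:
    """Total actual steps over total expected steps; 1.0 means on budget."""
    return sum(r.steps for r in results) / sum(r.expected_steps for r in results)


def tool_call_ratio(results: list[RunResult]) -> float:
    """Total actual tool calls over total expected tool calls."""
    return (sum(r.tool_calls for r in results)
            / sum(r.expected_tool_calls for r in results))


if __name__ == "__main__":
    # Made-up results for three tasks, for illustration only.
    sample = [
        RunResult(True, 2, 2, 1, 1),
        RunResult(False, 6, 5, 3, 2),
        RunResult(True, 3, 3, 2, 2),
    ]
    print(f"correctness={correctness(sample):.2f}")
    print(f"step_ratio={step_ratio(sample):.2f}")
    print(f"tool_call_ratio={tool_call_ratio(sample):.2f}")
```

A ratio above 1.0 means the model took more steps or tool calls than the task budgeted; below 1.0 means it found a shortcut.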

Hosted on Baseten, Ollama. Local via vLLM. Private. Self-host and your prompts stay on your own infrastructure. With a closed API? Every request goes to the vendor.

Critics whine: open lacks ‘reasoning depth.’ Bull. For production agents, reliability trumps PhD-level puzzles. And costs? Closed’s $87k hole per app? That’s the real reasoning fail.

Providers like Fireworks optimize inference. Throughput soars. Most teams can’t match that on their own. OpenRouter logs prove it.

Open’s Edge – Predictability Over Hype

Benchmarks screamed it: SWE-Rebench, Terminal Bench 2.0. Tool calling? Reliable. Instructions? Consistent.

Production agents need that. No 50% flake rate. Open delivers.

Unique twist: closed giants’ PR spins ‘frontier’ as god-mode. Reality? Diminishing returns. Each IQ point costs exponentially. Open hits 90% there – for 10% price. Smart money shifts.

Builders: test it. GLM-5 on Baseten. MiniMax local. Threshold crossed. Party’s open.
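Testing locally can be as small as one HTTP call. The sketch below assumes you are running vLLM's OpenAI-compatible server on its default port (8000). The model name is a hypothetical placeholder, so swap in whatever name your server reports. It uses only the standard library.

```python
import json
import urllib.request

# Assumption: a local vLLM OpenAI-compatible server on its default port.
BASE_URL = "http://localhost:8000/v1"
# Hypothetical placeholder; replace with the model name your server exposes.
MODEL_NAME = "your-open-model"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,  # keep runs as repeatable as possible for evals
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the reply text. Needs a running server."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Call `ask("List the files you would touch to rename a module.")` once the server is up. Hosted providers that speak the same API shape usually just need a different `base_url` plus an authorization header, but check your provider's docs for the specifics.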

But don’t sleep. Evals expand. Early days. Still – viable now.

The Cost Massacre Table

| Model | Type | Input ($/M tokens) | Output ($/M tokens) |
|---|---|---|---|
| Claude Opus 4.6 (Anthropic) | Closed | $5.00 | $25.00 |
| Claude Sonnet 4.6 (Anthropic) | Closed | $3.00 | $15.00 |
| GPT-5.4 (OpenAI) | Closed | $2.50 | $15.00 |
| GLM-5 (Baseten) | Open | $0.95 | $3.15 |
| MiniMax M2.7 (OpenRouter) | Open | $0.30 | $1.20 |

Stare. Weep if you’re on closed.

Frequently Asked Questions

What are the best open models for AI agents right now?

GLM-5 and MiniMax M2.7 top evals – match closed on tasks, crush costs.

Do open LLMs perform as well as Claude or GPT for production?

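On agent benchmarks? Close. GLM-5 scores 0.64 correctness and MiniMax M2.7 scores 0.57, in the same ballpark as closed frontier models. Plus output tokens run roughly 8x to 20x cheaper than Opus, with about double the throughput.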

How much can I save switching to open models?

About $87k/year for an app pushing 10 million output tokens a day, going from Opus to MiniMax M2.7. Latency drops too: 0.65s versus 2.56s.