Toucan’s multi-agent LLM architecture just delivered a 40% drop in query failures on their data platform — that’s the stat stopping me cold.

And here’s why it matters: we’ve all seen the hype around god-like LLMs handling everything from chit-chat to SQL sorcery. But Toucan, builders of a slick BI tool, slammed into reality. One model doing it all? Crashes and burns.

They rebuilt around specialists. Picture this: not a lone genius juggling chainsaws, but a pit crew, each member wrenching one bolt with laser focus. That’s the vibe. The result is a system that’s predictable, zippy, and maintainable.

Why Single LLMs Fail Spectacularly

But let’s rewind. Early days, Toucan fed every user request — “sales by region,” schema hunts, chart wizardry — straight to one bloated LLM. Sounds clean on paper. Reality? A tangled mess.

Inconsistent outputs everywhere. Fix query-building, and viz logic shattered. Tool calls looped endlessly while users stared at spinners. Iteration ground to a halt; one tweak rippled chaos through everything else.

“One change to fix exploration could break how we handled chart requests. Everything was tangled.”

That’s Toucan’s CTO laying it bare. Brutal honesty — rare in AI land, where companies polish turds into “breakthroughs.”

Their fix? A hierarchical multi-agent setup. A central orchestrator triages, plans, and delegates. Specialists grind through narrow tasks and report back. They never talk to the user directly, which keeps latency low and the assistant’s voice consistent.

Simple hellos? The orchestrator handles them solo, with no specialist call. Boom: costs drop.
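To make the triage idea concrete, here is a minimal sketch of an orchestrator that answers greetings itself and delegates everything else. Everything in it is an assumption for illustration: the `Orchestrator` class, the keyword-based `classify` method, and the stubbed specialists are hypothetical, and a real system would most likely use an LLM call for triage rather than keywords.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A specialist takes the user's message and returns a raw result string.
Handler = Callable[[str], str]


@dataclass
class Orchestrator:
    specialists: Dict[str, Handler]

    def classify(self, message: str) -> str:
        """Toy keyword triage (assumption: stands in for an LLM-based router)."""
        text = message.lower()
        if any(word in text for word in ("chart", "plot", "graph")):
            return "chart"
        if any(word in text for word in ("schema", "tables", "columns")):
            return "explore"
        if any(word in text for word in ("sales", "revenue", "count")):
            return "query"
        return "smalltalk"

    def handle(self, message: str) -> str:
        intent = self.classify(message)
        if intent == "smalltalk":
            # Greetings never reach a specialist, which saves a model call.
            return "Hi! Ask me about your data."
        result = self.specialists[intent](message)
        # Only the orchestrator words the final reply; specialists just report back.
        return f"Here is what I found: {result}"


# Stub specialists named after the agents in the article.
orchestrator = Orchestrator(
    specialists={
        "query": lambda message: "[QueryBuilder result]",
        "explore": lambda message: "[DataExplorer summary]",
        "chart": lambda message: "[Chart Agent config]",
    }
)
```

Calling `orchestrator.handle("hello")` returns the canned greeting without touching any specialist, while `orchestrator.handle("plot revenue")` goes through the chart stub.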

How Toucan’s Agent Swarm Actually Works

QueryBuilder: Schema whisperer. Grabs intent, crafts SQL, executes it, and classifies any error. Fatal? Abort. Fixable? Retry with a correction. No hamster-wheel loops.
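The fatal-versus-fixable split is easy to sketch. The version below is a guess at the shape, not Toucan's code: the `Severity` enum, the marker strings in `classify_error`, and the `repair` callback (a stand-in for an LLM rewriting the query) are all hypothetical. It runs against an in-memory SQLite database so the retry path is real.

```python
import sqlite3
from enum import Enum
from typing import Callable, List, Tuple


class Severity(Enum):
    FATAL = "fatal"
    FIXABLE = "fixable"


def classify_error(message: str) -> Severity:
    """Hypothetical taxonomy; the article does not list the actual rules."""
    fixable_markers = ("no such column", "syntax error", "ambiguous column")
    lowered = message.lower()
    if any(marker in lowered for marker in fixable_markers):
        return Severity.FIXABLE
    return Severity.FATAL


def run_with_retries(
    sql: str,
    execute: Callable[[str], List[Tuple]],
    repair: Callable[[str, str], str],
    max_attempts: int = 3,
) -> List[Tuple]:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return execute(sql)
        except sqlite3.Error as exc:
            fatal = classify_error(str(exc)) is Severity.FATAL
            if fatal or attempt == max_attempts:
                raise  # abort: fatal error, or the retry budget is spent
            sql = repair(sql, str(exc))  # fixable: try a corrected query
    raise AssertionError("unreachable: the loop returns or raises")


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.execute("INSERT INTO sales VALUES ('EMEA', 10.0), ('APAC', 5.0)")


def execute(sql: str) -> List[Tuple]:
    return conn.execute(sql).fetchall()


def repair(sql: str, error: str) -> str:
    # Stand-in for an LLM fix: swap the wrong column name for the real one.
    return sql.replace("revenue", "amount")


rows = run_with_retries(
    "SELECT region, SUM(revenue) FROM sales GROUP BY region ORDER BY region",
    execute,
    repair,
)
```

The first attempt fails on the unknown `revenue` column, gets classified as fixable, and succeeds after one repair. A query against a missing table is classified as fatal and raises immediately, with no retries burned.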

DataExplorer: Schema tour guide. “What’s in my DB?” Instant summaries, smart metric nudges. Follow-ups drill deeper, so the conversation flows naturally.

Chart Agent: Viz virtuoso. Sniffs intent, picks bar vs. line (pure reasoning, no tool calls). Then it configures the chart and validates that it fits the schema via tools. Splitting the two duties lets each one be optimized on its own.
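Here is one way the pick-then-validate split could look. Treat it as a sketch under loud assumptions: `ChartConfig`, the column-kind labels, and the temporal-means-line heuristic are all invented for illustration. In the article the chart choice comes from model reasoning, so `pick_chart_type` is only a deterministic stand-in.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ChartConfig:
    chart_type: str
    x: str
    y: str


def pick_chart_type(x_kind: str) -> str:
    """Stand-in heuristic: a temporal x-axis gets a line chart, anything else a bar chart."""
    return "line" if x_kind == "temporal" else "bar"


def validate_config(config: ChartConfig, columns: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means the config fits the schema."""
    problems = []
    for axis in (config.x, config.y):
        if axis not in columns:
            problems.append(f"unknown column: {axis}")
    if config.y in columns and columns[config.y] != "numeric":
        problems.append(f"y-axis column must be numeric: {config.y}")
    return problems


# Hypothetical result-set schema: column name -> kind.
columns = {"month": "temporal", "region": "categorical", "revenue": "numeric"}
config = ChartConfig(pick_chart_type(columns["month"]), x="month", y="revenue")
problems = validate_config(config, columns)
```

Keeping validation as a plain function means it can be tested without a model in the loop, which is the kind of payoff you get from separating the two duties.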

Orchestrator: The boss. Plans in structured steps: create, update, track. Synthesizes outputs into human-friendly responses. Clean lines of responsibility make debugging a dream.
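What might "create, update, track" look like as data? A rough sketch follows, with the caveat that the `Step` and `Plan` classes and the three status values are my own invention; the article names the operations but not their shape.

```python
from dataclasses import dataclass, field
from typing import List

VALID_STATUSES = {"pending", "done", "failed"}


@dataclass
class Step:
    agent: str
    task: str
    status: str = "pending"


@dataclass
class Plan:
    steps: List[Step] = field(default_factory=list)

    def create(self, agent: str, task: str) -> int:
        """Append a step and return its index so it can be updated later."""
        self.steps.append(Step(agent, task))
        return len(self.steps) - 1

    def update(self, index: int, status: str) -> None:
        if status not in VALID_STATUSES:
            raise ValueError(f"unknown status: {status}")
        self.steps[index].status = status

    def track(self) -> str:
        """Summarize progress in a form that is easy to log or show."""
        done = sum(step.status == "done" for step in self.steps)
        return f"{done}/{len(self.steps)} steps done"


plan = Plan()
first = plan.create("Chart Agent", "pick chart type")
plan.create("QueryBuilder", "fetch monthly revenue by region")
plan.update(first, "done")
progress = plan.track()
```

Because the plan is plain data, a failed run leaves behind a readable record of which step broke and which agent owned it.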

Chaining magic: “Monthly revenue by region.” Orchestrator flags it as a chart request. Chart Agent picks a line chart and hints at what the query needs. QueryBuilder delivers the data. Chart Agent configures and validates. Final polish: boom, viz served.
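The chain above can be traced end to end with stubs. This is a toy walk-through, not Toucan's pipeline: every function name is hypothetical, the data rows are made up, and each stub returns a fixed value where a real agent would call a model or a database. The point is the order of handoffs, recorded in `trace`.

```python
from typing import Dict, List, Tuple

trace: List[str] = []  # records which agent ran, in order


def chart_agent_plan(request: str) -> Dict[str, object]:
    trace.append("chart_agent.plan")
    # Stub: picks a line chart and hints at the columns the query must return.
    return {"chart_type": "line", "needs": ["month", "region", "revenue"]}


def query_builder(needs: List[str]) -> List[Tuple]:
    trace.append("query_builder.run")
    # Stub: made-up rows in place of a real SQL result.
    return [("2024-01", "EMEA", 120.0), ("2024-02", "EMEA", 135.0)]


def chart_agent_configure(plan: Dict[str, object], rows: List[Tuple]) -> Dict[str, object]:
    trace.append("chart_agent.configure")
    needs = plan["needs"]
    # Validation: every row must carry exactly the requested columns.
    if not rows or any(len(row) != len(needs) for row in rows):
        raise ValueError("data does not match the requested columns")
    return {"type": plan["chart_type"], "columns": needs, "points": len(rows)}


def orchestrate(request: str) -> str:
    trace.append("orchestrator.route")
    plan = chart_agent_plan(request)
    rows = query_builder(plan["needs"])
    config = chart_agent_configure(plan, rows)
    trace.append("orchestrator.respond")
    return f"Here is a {config['type']} chart with {config['points']} points."


reply = orchestrate("Monthly revenue by region")
```

After the call, `trace` lists the five hops in order, which doubles as a cheap debugging aid when a chain misbehaves.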

It’s like an assembly line on steroids: Ford’s factories meet neural nets. Except agents “talk” via structured handoffs, with no crosstalk noise.

Toucan’s not hyping vaporware. They’re shipping. Predictability skyrockets; maintenance eases. The CTO gold, as I read it: the architecture scales with the humans building it, not against them.

Is Multi-Agent the New Microservices for AI?

Here’s my hot take, unspooled from Toucan’s playbook but zooming out. Remember software’s monolith-to-microservices pivot? In the 2010s, giants like Netflix split their apps into services. Why? Same pains: tangled deploys, slow fixes, scale walls.

AI’s there now. LLMs as monoliths? Cute for toys. Enterprise data wrangling? Nope. Multi-agent is the microservices analog — composable, observable, evolvable.

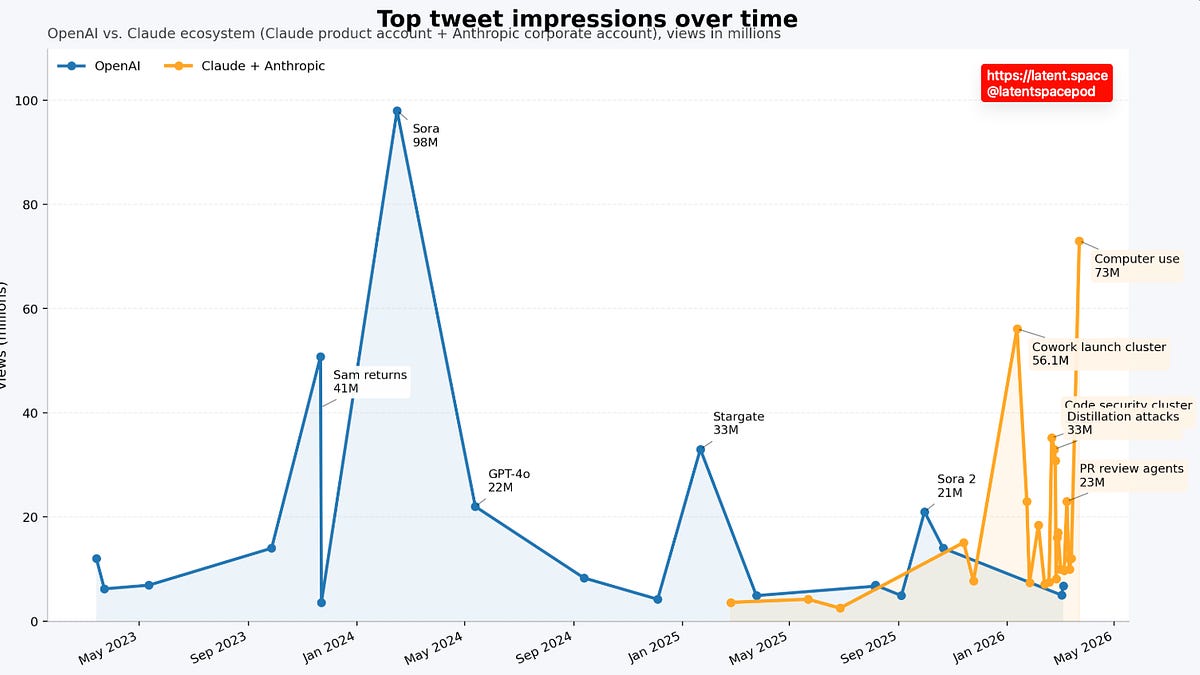

Bold call: By 2026, agent marketplaces explode. Pick ‘n’ mix specialists like AWS Lambda functions. Toucan is the proto-example. They’ll inspire hordes to ditch prompt-fragility for agent-legos.

But skepticism lingers. Agents add latency hops and orchestration overhead. Toucan mitigates this with hierarchy and a direct path for simple requests. Smart. Still, watch for real-world scale: with thousands of concurrent users, tooling matters.

Critique their spin? Nah. Toucan’s write-up screams authenticity. No “revolutionary” fluff. Just war stories, diagrams, code-ish logic. Refreshing.

Deeper wonder: This unlocks AI as a platform shift. Not chatbots, but orchestras conducting business symphonies. Data teams? Empowered. Decisions? Lightning. Toucan’s proving the playbook.

And velocity? They iterate agents independently now. Swap Chart Agent for fancier viz? No sweat. Single-LLM hellfire avoided.

Why Does Multi-Agent LLM Matter for Your Stack?

Dev teams grinding AI assistants — listen up. If loops plague you, outputs flake, costs balloon on retries — pivot time.

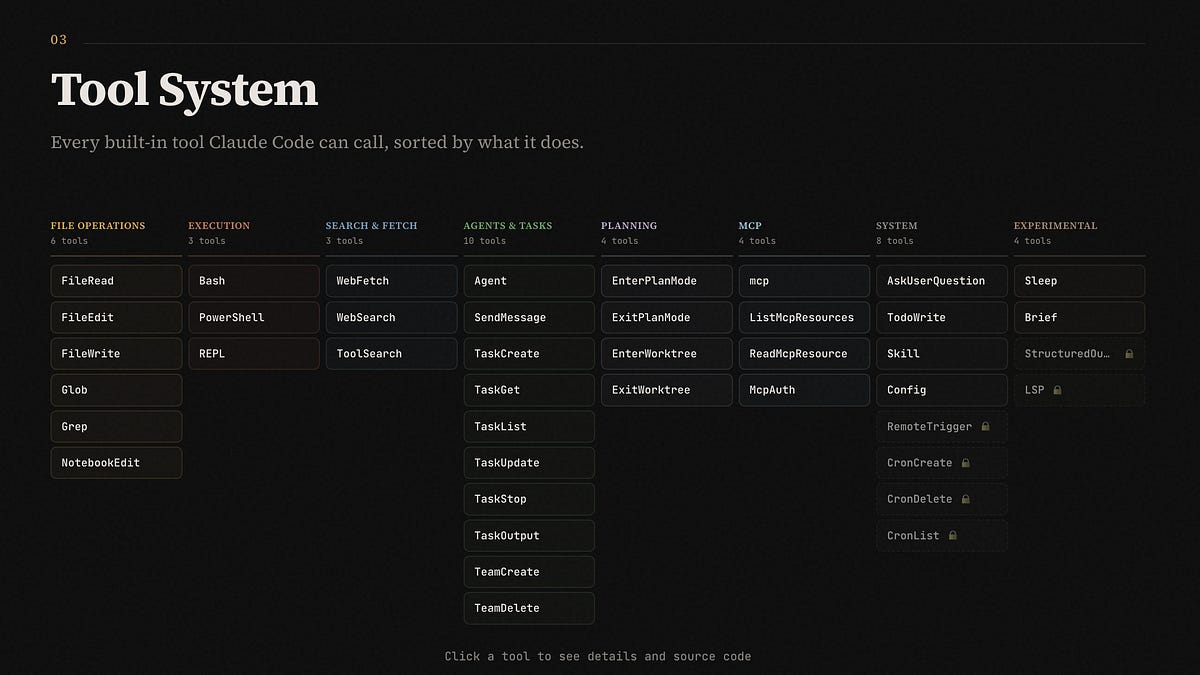

Start small: an orchestrator plus 2-3 specialists. Use structured handoffs between them (JSON schemas rock). For observability, log agent traces and error taxonomies.
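A starter kit for handoffs and traces can fit in the standard library. The sketch below is an assumption-heavy illustration: the three required fields, `parse_handoff`, and `trace_line` are names I made up, and the hand-rolled type check is a lightweight substitute for a full JSON Schema validator.

```python
import json
from typing import Optional

# Hypothetical handoff contract: field name -> required Python type.
REQUIRED_FIELDS = {"agent": str, "task": str, "payload": dict}


def parse_handoff(raw: str) -> dict:
    """Parse a JSON handoff and reject anything that breaks the contract."""
    message = json.loads(raw)
    if not isinstance(message, dict):
        raise ValueError("handoff must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(message.get(name), expected):
            raise ValueError(f"handoff field {name!r} must be {expected.__name__}")
    return message


def trace_line(message: dict, status: str, error_class: Optional[str] = None) -> str:
    """Build one structured log line per agent call, ready for any log sink."""
    return json.dumps(
        {
            "agent": message["agent"],
            "task": message["task"],
            "status": status,
            "error_class": error_class,  # e.g. "fatal" or "fixable"
        },
        sort_keys=True,
    )


raw = json.dumps(
    {"agent": "QueryBuilder", "task": "run_sql", "payload": {"sql": "SELECT 1"}}
)
handoff = parse_handoff(raw)
log_line = trace_line(handoff, status="failed", error_class="fixable")
```

Rejecting malformed handoffs at the boundary keeps one agent's bad output from quietly becoming the next agent's confusing input.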

Toucan’s edge? Error severity tiers in QueryBuilder. Genius — tunes retries, skips dead-ends.

Future-proofing: As models evolve (hello, o1-preview reasoning), slot ‘em into agents. Best-tool-for-job, always.

We’re witnessing the birth of AI’s OS layer. Agents as processes, orchestrators as kernels. Mind-blowing.

Toucan scaled their LLM architecture not with bigger models, but with smarter teams. That’s the futurist thrill. Monoliths fade; swarms rise.

Frequently Asked Questions

What is Toucan’s multi-agent LLM architecture?

Toucan’s setup uses a central orchestrator delegating to specialists like QueryBuilder, DataExplorer, and Chart Agent for reliable data queries and viz.

How does multi-agent fix single LLM problems?

It breaks tasks into focused roles, cuts loops, boosts consistency, and speeds iteration — no more tangled prompt hell.

Will multi-agent architectures replace single LLMs?

For complex apps like BI tools, yes — they’re the scalable path forward, much like microservices conquered software.