Everyone’s eyes were glued to OpenAI today—their monster fundraise, $24B ARR boasts, that weird ‘soft IPO’ with ARK ETFs. Stagnant ChatGPT weekly actives? Yawn. No new Codex milestone? Whatever. We figured Anthropic might counter with Claude 4 whispers or some agent flex. Instead? Boom. Claude Code’s source code leaks across GitHub forks, npm traps, and Twitter threads. 500K lines of their crown-jewel coding agent, out in the wild. Changes everything—suddenly, we’re dissecting state-of-the-art agent guts, not just hype decks.

Look, I’ve covered enough Valley oopsies to know: leaks like this aren’t just PR nightmares. They’re cheat codes. Remember the early Cursor betas? Folks reverse-engineered those into Frankenstein forks before official release. This? Bigger. Claude Code’s architecture—memory layers, subagents, fork-join KV caches—now anyone’s playground. Anthropic’s scrambling with DMCA takedowns, but the cat’s long gone. (And yeah, shady npm packages like color-diff-napi popped up to snag the curious. Classy.)

What the Hell is Claude Code, Anyway?

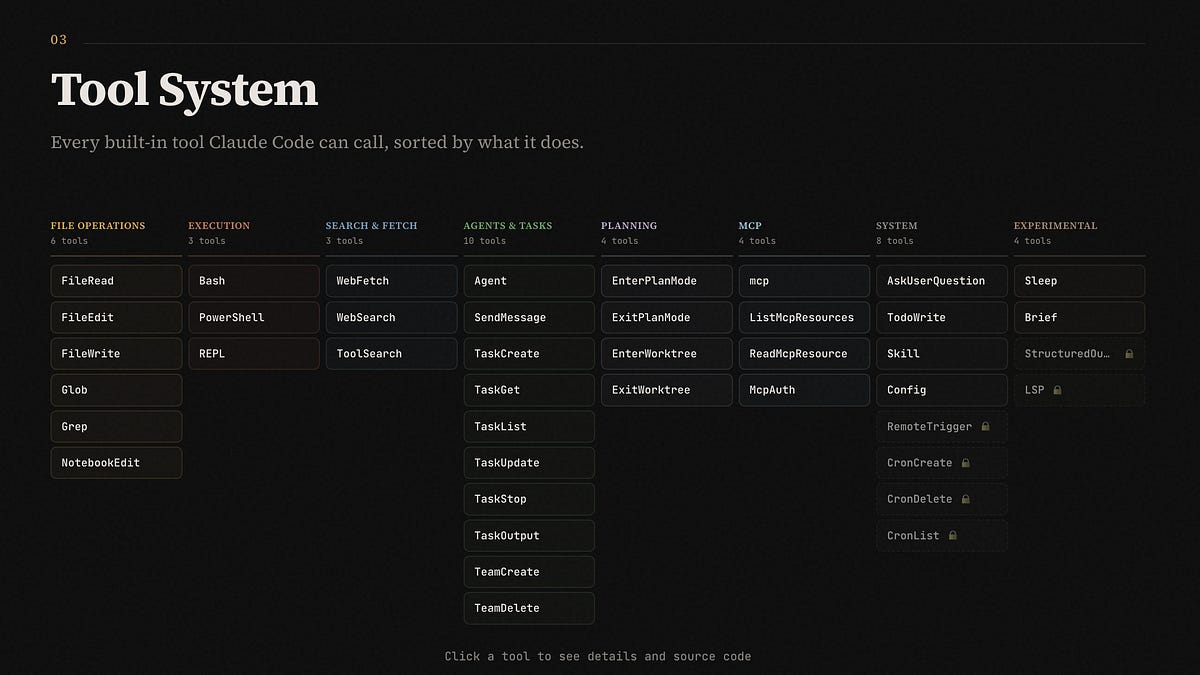

Short answer: Anthropic’s beast-mode coding agent. Not some toy autocomplete—think autonomous repo wrangler. It plans, edits files, runs bash, searches web, all with guardrails. The leak? Christmas for agent nerds. Sebastian Raschka nailed the highlights:

- Putting repo state in context (e.g. recent commits, git branch info)
- Aggressive cache reuse
- Custom Grep/Glob/LSP (standard in industry)
- Claude Code has less than 20 tools default on (up to 60+ total)
- File read deduplication/tool result sampling
- Structured session memory
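
File read deduplication is the easiest item on that list to picture. Here's a minimal sketch of the idea, not code from the leak: the `ReadCache` class, its method names, and the stub message are all my own assumptions. The harness hashes each file it shows the model, and if the same path comes back unchanged it sends a one-line stub instead of the full text.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class ReadCache:
    """Hypothetical helper: tracks which file contents the model has already seen."""

    seen: dict = field(default_factory=dict)  # path -> sha256 of last content shown

    def read(self, path: str, content: str) -> str:
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        if self.seen.get(path) == digest:
            # Unchanged since the last read: return a short stub, not the full text.
            return f"[{path} unchanged since last read]"
        # First read, or the file changed: record the new hash and return everything.
        self.seen[path] = digest
        return content


cache = ReadCache()
first = cache.read("app.py", "x = 1\n")    # full content
second = cache.read("app.py", "x = 1\n")   # stub, nothing changed
third = cache.read("app.py", "x = 2\n")    # full content again, file was edited
```

The payoff is fewer tokens burned on re-reading the same file during a long session. A real harness would also have to decide when a stub is unsafe, for example after the earlier read has been compacted out of context.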

That’s table stakes now. But dig deeper.

Memory’s the star—three layers deep. MEMORY.md indexes everything, topic files load on-demand, full transcripts searchable. Then ‘autoDream’ mode: it ‘sleeps,’ merges memories, prunes junk, fixes contradictions. Mem0.ai broke it into eight phases, five compaction types. Subagents? Genius hack—they fork the KV cache for parallelism. No repeated context. Free speed.
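
To make the three layers concrete, here's a rough sketch under my own assumptions. The `LayeredMemory` class and its method names are hypothetical, and the real thing reportedly lives in files like MEMORY.md rather than in-process dicts. The shape is what matters: a small index that is always in context, topic notes pulled in only when asked for, and raw transcripts that get searched but never preloaded.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LayeredMemory:
    """Hypothetical three-layer memory; names are illustrative, not from the leak."""

    index: dict = field(default_factory=dict)        # layer 1: topic -> one-line summary
    topics: dict = field(default_factory=dict)       # layer 2: topic -> full notes
    transcripts: list = field(default_factory=list)  # layer 3: raw session history

    def remember(self, topic: str, summary: str, notes: str) -> None:
        self.index[topic] = summary
        self.topics[topic] = notes

    def context_header(self) -> str:
        # Only the compact index is injected into every prompt.
        return "\n".join(f"- {t}: {s}" for t, s in sorted(self.index.items()))

    def load_topic(self, topic: str) -> Optional[str]:
        # Full notes are fetched on demand, one topic at a time.
        return self.topics.get(topic)

    def search_transcripts(self, needle: str) -> list:
        # Transcripts are searched (case-insensitively here), never loaded wholesale.
        n = needle.lower()
        return [line for line in self.transcripts if n in line.lower()]


memory = LayeredMemory()
memory.remember("build", "use make test", "Run `make test` from the repo root.")
memory.transcripts.append("User: the Build failed on CI")
header = memory.context_header()
```

The design choice worth noticing: the always-loaded layer stays tiny, so adding more memories costs search time, not context window.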

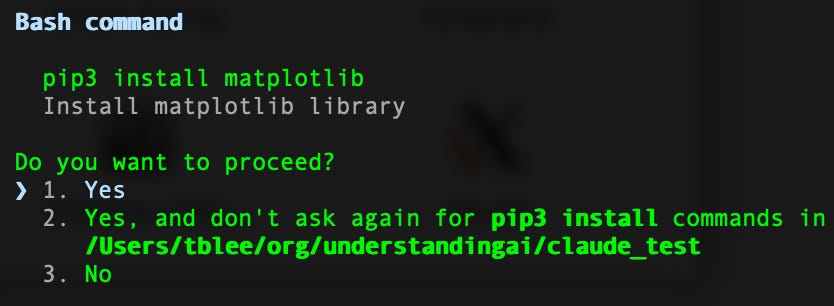

Five-level permissions. Dual plan modes. Resilience retries. Unreleased gems like ULTRAPLAN, KAIROS, MAGIC DOCS. Employee TUIs. Even a ‘/buddy’ April Fools gag and Boris’ WTF counter. (Boris? Internal lore, I guess.)


But here’s my spin, after 20 years chasing these cycles: this leak echoes what followed Google’s TensorFlow release in late 2015. That one was a deliberate open-sourcing, not an accident, but the aftermath is the parallel: it sparked the PyTorch rivalry and commoditized training stacks. Anthropic’s handing rivals (Cursor, Devin, Replit) their playbook, only without choosing to. Prediction? Open agent harnesses go viral by summer. Who profits? Not Anthropic; their moat crumbles. Vibe like early Kubernetes forks: everyone builds better on your bones.

Why Does the Claude Code Leak Hurt Anthropic?

Embarrassing? Sure. A Claude teamer drops ‘/web-setup’ mid-chaos—like, business as usual, folks. But security holes galore. Attackers baited compiles with malicious packages. Forks hit 32K stars before Python ports fled legal heat. DMCA waves incoming.

Cynical me asks: who’s really winning? OpenAI’s ARR flex feels hollow with WAUs stuck below the 1B target. Anthropic? The leak spotlights that they’re ahead on agents, but now everyone’s copying. No new revenue model exposed, just smarter orchestration. Tools list: AgentTool, BashTool, FileEditTool, etc. (Fewer than 20 on by default, 60+ total.) Deduped reads, session memory. Fork-join subagents mean parallelism’s cheap.

And that permission system? Layers from read-only to god-mode edits. Plan modes toggle autonomy. It’s polished. Too polished for a leak.
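
What does a layered permission check look like in practice? Here's a hedged sketch. The article only tells us there are five levels running from read-only up to unrestricted edits, so the level names, the action names, and the action-to-level mapping below are all invented for illustration.

```python
from enum import IntEnum


class Permission(IntEnum):
    """Five hypothetical levels, lowest to highest; names are assumptions."""

    READ_ONLY = 0
    PLAN = 1
    EDIT_WITH_APPROVAL = 2
    EDIT = 3
    FULL_ACCESS = 4


# Assumed mapping from action to the minimum level it needs.
REQUIRED = {
    "read_file": Permission.READ_ONLY,
    "write_plan": Permission.PLAN,
    "propose_edit": Permission.EDIT_WITH_APPROVAL,
    "edit_file": Permission.EDIT,
    "run_bash": Permission.FULL_ACCESS,
}


def is_allowed(action: str, granted: Permission) -> bool:
    required = REQUIRED.get(action)
    if required is None:
        # Unknown actions are denied by default.
        return False
    return granted >= required


can_read = is_allowed("read_file", Permission.READ_ONLY)  # True
can_bash = is_allowed("run_bash", Permission.EDIT)        # False
```

Ordered levels keep the check to a single comparison, and deny-by-default means a new tool does nothing until someone assigns it a level.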

Here’s the thing—Anthropic’s PR spin’ll be ‘nothing critical lost, no weights exposed.’ True-ish. But orchestration logic? That’s the secret sauce. Competitors wake up with blueprints. Devin folks probably popping champagne.

Is This the Blueprint for Next-Gen Coding Agents?

Damn right it might be. Cache reuse aggressive as hell. Custom LSP/grep? Industry standard, but integrated tight. WebFetch, TodoWrite, SkillTool—ecosystem hints at extensibility.

Deeper: structured memory isn’t vaporware. Index + on-demand + transcripts. AutoDream compacts it smart. Subagents parallelize sans cost. That’s efficiency OpenAI’s chasing in o1 previews.
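
The fork-join shape is worth sketching, with a big caveat: real KV-cache sharing happens inside the inference stack, and nothing below touches a model. This is only the orchestration pattern as I understand it, with made-up function names. Every subagent starts from the same immutable prefix, adds its own task, runs in parallel, and the parent joins the results in order.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subagent(shared_prefix: tuple, task: str) -> dict:
    # Stand-in for a model call. The prefix is reused as-is and only the
    # task-specific suffix is new for each subagent.
    context = shared_prefix + (f"task: {task}",)
    return {
        "task": task,
        "context_len": len(context),
        "prefix_id": id(shared_prefix),  # same object for every fork
    }


def fork_join(shared_prefix: tuple, tasks: list) -> list:
    # Fork: one worker per task. Join: pool.map returns results in task order.
    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        return list(pool.map(lambda t: run_subagent(shared_prefix, t), tasks))


prefix = ("system prompt", "repo state")
results = fork_join(prefix, ["fix tests", "update docs"])
```

In a real serving stack, the win would come from the shared prefix hitting a cache instead of being recomputed per subagent. Here the shared tuple just stands in for that idea.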

Skeptical pause. Does it work? Leaks don’t ship. But forks are live—nerds compiling, porting. Yuchenj_UW’s Python conversion? Early sign agents democratize.


We’re at a tipping point: coding agents are shifting from hype to harnesses. Claude Code proves it: less LLM magic, more systems engineering. Memory pruning? Contradiction resolution? That’s reliability, not buzz. I’ve seen the Valley chase moonshots; this feels grounded. Bold call: by EOY, open-source Claude Code forks outperform closed betas. Money trail? Startups forking this into niches like enterprise repos and solo devs. Anthropic? Back to black-box drawing boards.

Unreleased stuff teases more: employee gates, TUI, ULTRAPLAN (mega-planning?), KAIROS (time magic?). MAGIC DOCS—internal wiki? Roadmap gold.

Frequently Asked Questions

What caused the Claude Code source leak?

Shipped source maps and package guts exposed 500K lines; public forks exploded before DMCA hits.

What features stand out in the leaked Claude Code?

Three-layer memory, KV-cache subagents for free parallelism, autoDream compaction, 5-level permissions.

Will the Claude Code leak speed up AI coding agents?

Yes—blueprints for rivals; expect open forks to iterate faster than Anthropic’s secrecy.