Qwen’s tiny 0.5B model spits out ‘C’ for that tricky MMLU biology question – correct, again. Accuracy: 72%. Not bad for something that fits in your laptop’s RAM. But as I watch the numbers rack up, I can’t shake the feeling we’ve been here before.

Twenty years chasing AI benchmarks that vanish like smoke when real work hits. Back in the 90s, ELIZA fooled folks into thinking it understood therapy; today, it’s MMLU fooling us into thinking LLMs grok the world.

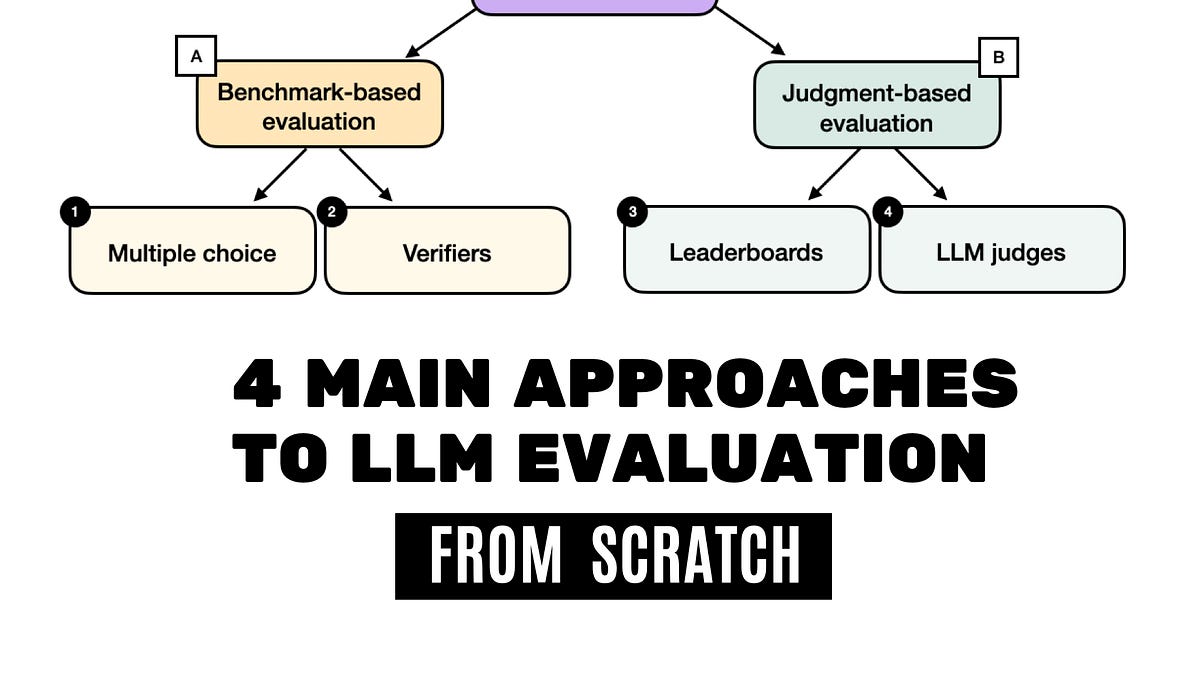

LLM evaluation. That’s the buzzphrase du jour, the four main approaches everyone’s hawking to prove their model’s god-tier. Multiple-choice benchmarks. Verifiers. Leaderboards. LLM judges. Sound rigorous? Pull up a chair – let’s gut this fish.

Multiple-Choice Benchmarks: Easy Wins, Narrow Minds

MMLU. Massive Multitask Language Understanding. Fourteen thousand questions across 57 subjects, from high-school trig to virology. Model picks A, B, C, or D; we tally accuracy. Simple as a SAT scantron.

“The complete MMLU dataset consists of 57 subjects… with about 16 thousand multiple-choice questions in total, and performance is measured in terms of accuracy.”

That’s straight from the playbook. Load your PyTorch model – say, that from-scratch Qwen implementation needing just 1.5GB RAM – feed it prompts, argmax the logits, match to ground truth. Boom, 87%? You’re a hero.

But here’s the cynical vet’s whisper: these tests reward memorization, not reasoning. Models hoover up internet scraps; they regurgitate. Remember GLUE benchmarks? Hype train until models cheated the format. MMLU’s next – companies fine-tune just for it, scores inflate, real-world tasks flop.

Code’s trivial. Tokenize question plus options, generate, extract letter. GitHub’s littered with it. Yet execs parade 90% scores like Nobel prizes. Who’s paying? Benchmark creators selling consulting. Dataset curators licensing data.

Short para for punch: It’s quantifiable. That’s the trap.

Now, dig deeper. Log-prob scoring – sum probs of correct tokens – dodges generation errors. Or exact-match on full answer. Cute tweaks, but still multiple-choice theater.

Verifiers: The Cop on the Beat

Shift gears. Benchmarks test raw recall; verifiers judge outputs. Train a side-model to score if an answer’s right. Think process supervision – reward chains of thought that lead true.

Author’s book pushes this hard: Build a Reasoning Model (From Scratch). Fair play – verifiers catch reasoning slips multiple-choice misses. But training them? Data-hungry beasts. And bias creeps in; your verifier’s only as smart as its training set.

I’ve covered verifier hype since o1-preview. OpenAI swears by them internally. Externally? Opaque black boxes. Who audits? Nobody. Prediction: by 2026, verifier arms race – companies gaming datasets, scores decoupling from truth like Theranos blood tests.

Code sketch: Fine-tune a small LLM on (question, answer, score) triples. Inference: score candidate against gold. Elegant, until your verifier hallucinates.

Are Leaderboards Actually Rigged?

Arena-style battlegrounds. LMSYS Chatbot Arena, Hugging Face Open LLM Leaderboard. Users blind-vote model outputs. Elo scores emerge. Dynamic, human-ish.

Qwen tops one today; tomorrow, Claude surges. “Crowd-sourced truth,” they claim. Laughable. Vote brigades from China pump local models. VPN farms game it. And prompts? Leaked, optimized, eval-hacked.

Historical parallel no one mentions: ImageNet leaderboards in 2015. Everyone clustered at 95% accuracy – then the bust when adversarial examples shredded them. LLM leaderboards? Same script. Real money’s in the hosting: chips rented, APIs queried, tab picked up by hopeful startups.

“Research papers, marketing materials… often include results from two or more of these categories.”

Translation: cherry-pick your best two. Leaderboard warriors flaunt it; laggards bury it.

One-line zinger: Leaderboards measure popularity contests, not intelligence.

LLM-as-Judge: AI Judging AI, What Could Go Wrong?

Final boss. Use a beefy LLM – GPT-4o, Claude – to rate outputs. “Which answer’s better?” Scale 1-10. Cheap, scalable, no humans needed.

Promising? Sure, until positional bias: judge favors longer answers, first-listed ones. Alignment flukes make GPT-4o simp for OpenAI models. Studies show 10-20% disagreement with humans.

From-scratch code: Prompt judge with pair of responses, parse rationale + score. Parse fails? Retry. It’s meta-fragile.

Cynic’s take: Infinite regress. Judge an LLM with an LLM judged by… what? Bootstraps to nowhere. Money trail? API giants billing judgment cycles by the billion.

Why Does LLM Evaluation Still Matter for Devs?

You’re fine-tuning Llama-3.1. Benchmarks say it’s meh. Leaderboard tanks. Switch to verifiers? Costs skyrocket. Judges? Inconsistent.

Real talk: Mix ‘em. Use MMLU for baselines, verifiers for reasoning, custom evals for your app. But distrust absolutes. Progress? Track your own suite – domain-specific, contamination-free.

Unique insight: This mirrors 2000s search eval. PageRank leaderboards ruled until personalized search nuked them. LLMs go same way: evals personalize or die.

And the book plug? Build a Reasoning Model (From Scratch) – early access humming, PyTorch purity. Respect the grind; beats vaporware.

But PS from the frontlines: Papers stack like cordwood. o1’s test-time compute? Game-changer or gimmick? Next column.

🧬 Related Insights

- Read more: Voice AI’s Ambient Computing Surge: 2027’s Real Breakthrough or Hype?

- Read more: Anthropic’s Revenue Rocket: Set to Eclipse OpenAI Before the IPO Circus

Frequently Asked Questions

What are the 4 main approaches to LLM evaluation?

Multiple-choice benchmarks like MMLU, verifiers for output scoring, leaderboards for blind battles, and LLM judges for automated ratings.

How do you evaluate LLMs from scratch with code?

Load PyTorch model, tokenize MMLU prompts, argmax predictions, compute accuracy – GitHub repos abound for verifiers and judges too.

Are LLM benchmarks reliable?

They’re quick baselines but prone to gaming; combine with custom tests for real insight.

Who makes money from LLM evaluation?

Dataset owners, API providers, leaderboard hosts – follow the compute bills.