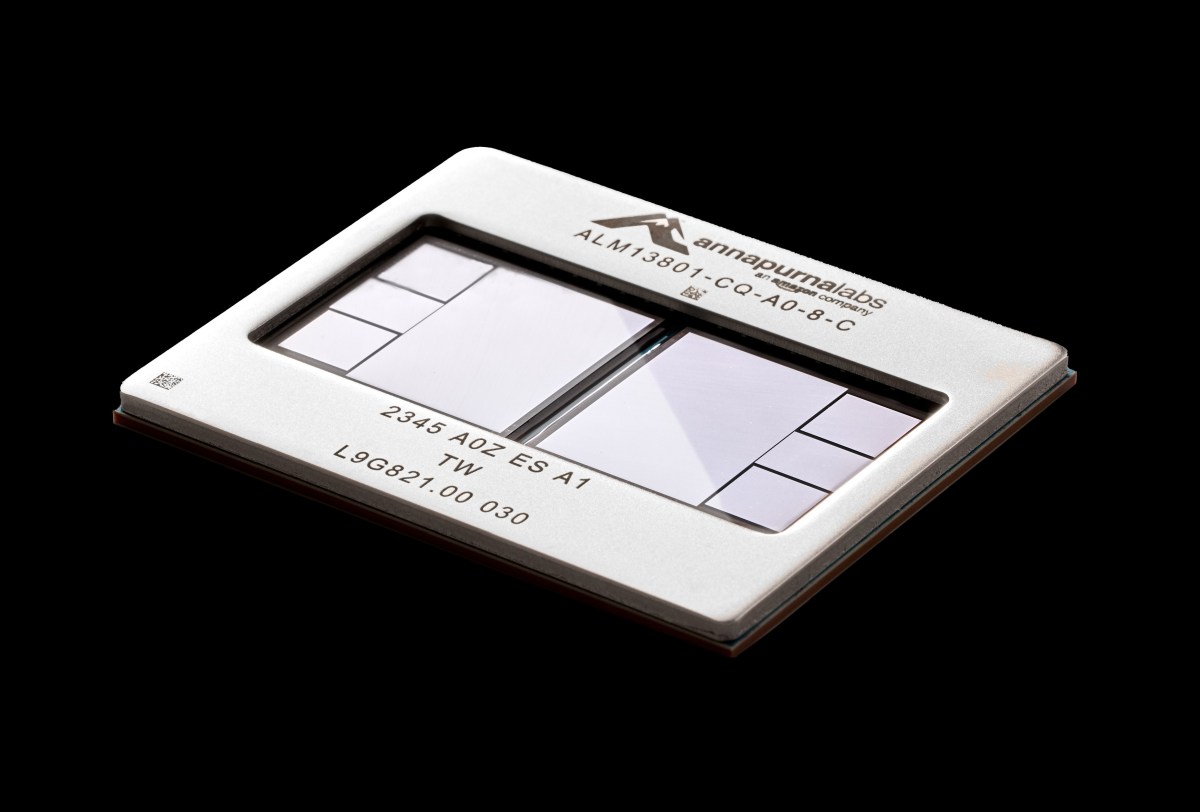

Uber’s engineers are firing up Amazon’s Trainium3 chips right now, testing the limits of AWS’s latest Nvidia challenger for ride-matching magic. And just like that, one of cloud computing’s biggest migrations hits a sharp U-turn.

Zoom out: this isn’t a minor contract tweak. It’s Amazon flexing its in-house silicon muscle, poaching Uber from Oracle and Google’s turf after the ride-hailer swore multi-year loyalty to those clouds in 2023. Amazon’s homegrown chips are the bait: Graviton, an Arm CPU for everyday grunt work, and Trainium3 for the heavy AI lifting.

Here’s the thing. Uber started ditching its own data centers in 2023, splitting its workloads across Oracle Cloud Infrastructure (OCI) and Google Cloud. They bragged about it, too. Check this from their December blog post:

“In February 2023, Uber began transitioning from on-premise data centers to the cloud using OCI and Google Cloud Platform, taking on the dual challenge of shifting massive workloads and introducing Arm-powered compute instances into a previously x86-dominated environment.”

Arm chips from Ampere, hosted on Oracle, were the stars back then. Uber loved the power sip, the cost crunch—perfect for scaling maps, predictions, all that real-time jazz without melting servers.

But Ampere? That’s a Silicon Valley soap opera wrapped in one startup.

How Ampere’s Fall Hands AWS the Win

Renee James, the ex-Intel exec who got passed over for CEO, launched Ampere with Oracle cash. She used her Carlyle investor gig and her Oracle board seat (had to quit for it), with Larry Ellison’s empire ending up holding about a third of the company. Oracle pushed Ampere hard, especially those Arm beasts for Uber’s shift.

Fast-forward: SoftBank snaps up Ampere in December. Oracle cashes out $2.7 billion pre-tax, a nice payday, and Ellison declares custom chips ain’t their game anymore. Why design when you can buy Nvidia’s fire-breathing GPUs by the truckload? Oracle’s now all-in on data centers for OpenAI, Stargate, the whole frontier-model frenzy.

James bounces from Oracle’s board end of 2024. Ampere’s toast as an independent player.

Uber watches this circus and… pivots to AWS. Graviton expansion for core ride-sharing. Trainium3 trial for AI workloads. Joins Anthropic, OpenAI, Apple—big names chasing Amazon’s chip savings.

Andy Jassy called Trainium a multibillion-dollar business last December. No hype there; AWS prints its own money-makers.

Why Uber’s Switch Screams Architectural Shift

Look, power-hungry Nvidia GPUs dominate training massive models—fair. But inference? Running those models in production, like Uber’s demand predictions or route optimization? That’s where custom silicon shines. Trainium3 promises Nvidia H100-level performance at lower watts, cheaper racks.

Uber’s not alone craving this. Ride-sharing chews data: billions of pings, traffic flows, ETAs. x86 was king forever—Intel’s moat. Then Arm snuck in, Graviton-style, slashing costs 20-40%. Now AWS iterates: Trainium2 crushed benchmarks; 3 ups the ante with faster interconnects, better scaling.

Architecturally? It’s the inference revolution. Nvidia owns training (for now), but clouds want lock-in via cheaper ops. Google has TPUs, but AWS’s open-ish ecosystem, with EC2’s flexibility, pulls in heavyweight customers like Uber.

This echoes the 2000s server wars. AMD cracked Intel’s x86 stranglehold with cheaper Opterons; clouds exploded on those gains. AWS Trainium could do the same to Nvidia’s CUDA empire: not kill it, but force price wars and open alternatives. Prediction: by 2027, 30% of inference shifts to custom chips like these. Uber’s just the canary.

Is Amazon’s Chip Play a Nvidia Killer?

Not yet. Nvidia’s moat—software stack, ecosystem—is nuts. But Amazon’s thumbing its nose at everyone: Google (TPUs), Oracle (now Nvidia simp), even Microsoft (NDv4 instances).

Uber’s move? Pure economics. Post-Ampere implosion, Oracle hikes prices or lags on Arm? AWS steps in with proven Graviton fleets, Trainium trials showing 50% cost cuts on ML inference (AWS claims, but benchmarks back it).
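To see how a headline figure like "50% cheaper inference" falls out of the arithmetic, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `InstanceOption` type, both instance names, and the prices and throughput numbers are placeholders, not AWS list prices or published benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceOption:
    """One hypothetical instance type for serving a model."""
    name: str
    hourly_price_usd: float        # assumed price, not a real quote
    inferences_per_second: float   # assumed sustained throughput per instance


def cost_per_million(option: InstanceOption) -> float:
    """USD needed to serve one million inferences on a fully used instance."""
    inferences_per_hour = option.inferences_per_second * 3600
    return option.hourly_price_usd / inferences_per_hour * 1_000_000


def savings_fraction(baseline: InstanceOption, candidate: InstanceOption) -> float:
    """Fraction of per-inference cost saved by moving from baseline to candidate."""
    return 1 - cost_per_million(candidate) / cost_per_million(baseline)


# Placeholder numbers chosen only to make the arithmetic easy to follow.
gpu = InstanceOption("gpu-baseline", hourly_price_usd=40.0, inferences_per_second=2000.0)
custom = InstanceOption("custom-accelerator", hourly_price_usd=18.0, inferences_per_second=1800.0)

print(f"{gpu.name}: ${cost_per_million(gpu):.2f} per million inferences")
print(f"{custom.name}: ${cost_per_million(custom):.2f} per million inferences")
print(f"savings: {savings_fraction(gpu, custom):.0%}")
```

The takeaway: a chip can be slower per instance and still win, as long as the price drops faster than the throughput does. Swap in real quotes and measured throughput before trusting any percentage.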

Corporate spin? Amazon touts this as ‘innovation leadership.’ Please—it’s survival. Without chips, AWS bleeds to rivals on AI margins. Jassy’s squad toured their labs (exclusive peek sometime); it’s Intel-level R&D, not garage hacking.

But Oracle? They’re all-in Nvidia now, funding OpenAI circles with SoftBank. Circular deals everywhere—Nvidia chips to Oracle to OpenAI back to AWS rivals? Wait, no: Uber’s exit stings.

Clouds are chipping away, literally.

Think bigger. This multi-cloud dream Uber chased? Dead. Loyalty’s to the best silicon. Developers rejoice: pick Graviton for scale, Trainium for AI, no vendor lock except physics. But why does it matter? Uber’s 150 million monthly users feel it: faster matches, lower fares? Or just AWS margins fattening.

Uber’s blog lauded OCI/Google; now crickets. Hypocrisy? Nah, business. But it exposes cloud fragility: migrations ain’t one-way.

Why Does This Shake Up Developers and Startups?

You’re building the next Uber clone? Ditch GPU bills. Trainium’s Neuron SDK rivals CUDA—compile once, run AWS-cheap. Startups snag reserved instances, scale without bankruptcy.

Historical parallel: EC2 launch killed on-prem for many. This? AI cloud 2.0—custom accel everywhere.

Ignore at your peril.

Frequently Asked Questions

What are Amazon Trainium3 chips?

AWS’s latest AI accelerator, built to rival Nvidia for training and inference, with a heavy focus on cost-per-FLOP efficiency.

Why did Uber expand AWS over Oracle and Google?

Cheaper Arm-based Graviton for core workloads, plus Trainium trials promising Nvidia-beating AI performance at lower power.

Does Uber’s AWS deal threaten Nvidia?

Not dominance, but nibbles at inference margins—custom chips like Trainium could claim 20-30% market share by decade’s end.