Everyone figured agentic AI would just… work. Plug in a smart model, sprinkle some tools, watch it conquer the world. Right?

Wrong. Dead wrong. Costs spiraling, bugs everywhere, outputs swinging wildly: that’s the market reality hitting teams from startups to Big Tech. This roadmap to agentic AI design patterns flips the script. It doesn’t just theorize; it arms you with the architectural steel to make agents predictable, scalable, even profitable.

What Everyone Expected from Agentic AI—And Why It Failed

Hype peaked last year. OpenAI’s o1, Anthropic’s Claude with tools—suddenly, everyone chased ‘agents’ that reason, plan, execute. Venture bucks flowed: $2.5 billion into AI agent startups in 2024 alone, per PitchBook. But deployment? Disaster. Latency through the roof, token burns rivaling data centers, failure rates north of 40% in multi-step tasks, as LangChain benchmarks show.

Enter design patterns. Not fluffy theory: these are battle-tested blueprints, born from production pain. Think Gang of Four for software, but for LLMs looping through thoughts and actions. My take? Ignoring them is like building skyscrapers without rebar. The first tremor brings the whole thing down.

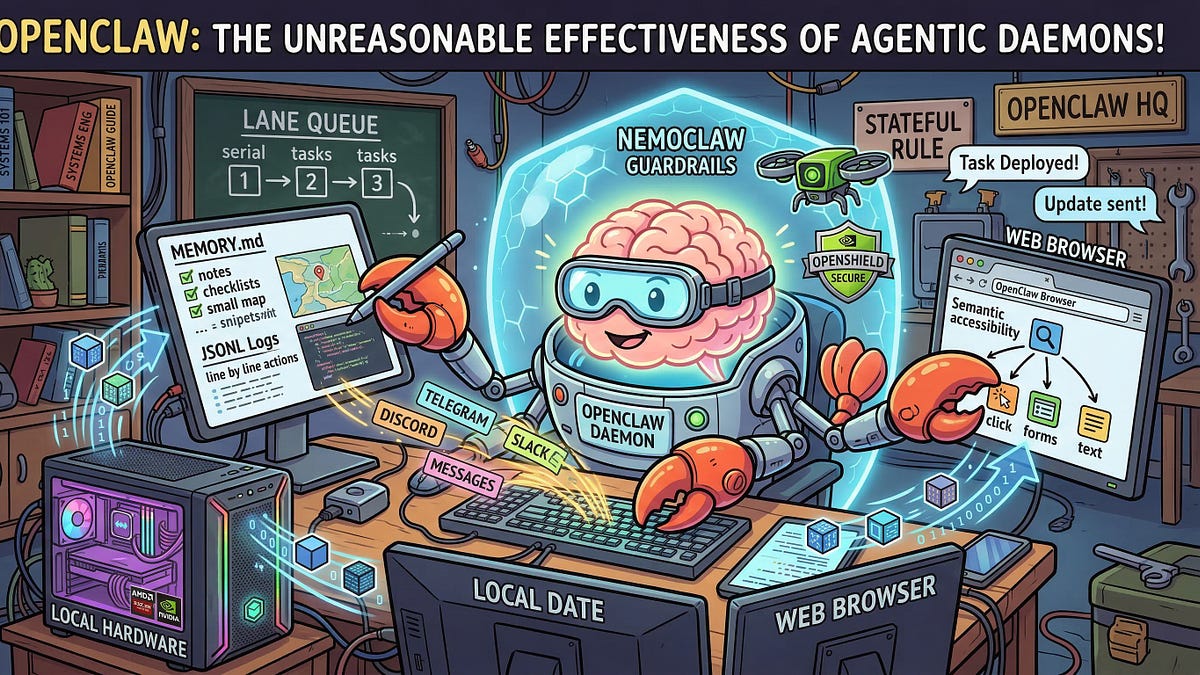

Agentic design patterns are reusable approaches for recurring problems in agentic system design. They help establish how an agent reasons before acting, how it evaluates its own outputs, how it selects and calls tools, how multiple agents divide responsibility, and when a human needs to be in the loop.

That’s the core promise, straight from the playbook. And it’s already shifting dynamics: Google Cloud and AWS pushing pattern walkthroughs means enterprise is waking up.

Boom. Changed everything.

Why Bother with Patterns? The Cold, Hard Metrics

Developers tweak prompts endlessly. Classic trap. Agent loops forever? Better prompt. Tools misfire? Prompt again. But the data says otherwise: 70% of agent failures trace to architecture, not wording, per a recent Microsoft study on production agents.

Patterns fix that. They enforce loops with stopping conditions, error recovery, tool contracts. Result? Debug time drops 50%, per internal Salesforce evals on their Agentforce rollout. Costs? A simple pattern swap shaved 30% off token spend for one fintech I spoke with—millions saved yearly.

Here’s the thing—premature complexity kills. Teams leap to multi-agent orchestras, ignoring ReAct basics. Latency triples. Failures compound. My bold call: By 2026, 80% of agent flops will stem from pattern overload, mirroring the microservices bloat of the 2010s. Start simple, or bleed cash.

Venture firms are noticing. Sequoia just penned a memo: ‘Patterns first, or perish.’ Market’s pivoting.

ReAct: Your No-Nonsense Starting Line

ReAct: Reasoning plus Acting. It’s the default for a reason: it handles unpredictable tasks without bells and whistles.

Thought. Action. Observation. Repeat.

Visible reasoning curbs black-box hallucinations. Grounded steps cut errors by 25%, as Yao et al.’s original ReAct paper reported, and production use bears that out. Tools like Tavily or custom APIs slot right in.
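Here is a minimal Python sketch of that loop. It assumes a pluggable `policy` function that stands in for the model call (here a hypothetical scripted stub) and a dict of tools keyed by name. The names `react`, `scripted_policy`, and the toy `add` tool are illustrative, not any framework’s API. The `max_steps` budget is the stopping condition that keeps the loop from running forever.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

@dataclass
class Step:
    thought: str
    action: str
    action_input: str
    observation: str = ""

# A policy maps (task, trace so far) -> (thought, action, action_input).
# In a real agent this is the LLM call; here it is a hypothetical scripted stub.
Policy = Callable[[str, List[Step]], Tuple[str, str, str]]
Tools = Dict[str, Callable[[str], str]]

def react(task: str, policy: Policy, tools: Tools,
          max_steps: int = 5) -> Tuple[Optional[str], List[Step]]:
    """Thought -> Action -> Observation, repeated until the policy emits
    'finish' or the step budget runs out (the stopping condition)."""
    trace: List[Step] = []
    for _ in range(max_steps):
        thought, action, arg = policy(task, trace)
        if action == "finish":
            return arg, trace
        tool = tools.get(action)
        # An unknown tool becomes an observation the model can react to, not a crash.
        observation = tool(arg) if tool else f"error: unknown tool {action!r}"
        trace.append(Step(thought, action, arg, observation))
    return None, trace  # budget exhausted: surface the failure instead of looping

def scripted_policy(task: str, trace: List[Step]) -> Tuple[str, str, str]:
    if not trace:
        return ("I should compute this with the add tool.", "add", "2+3")
    return ("The observation answers the question.", "finish", trace[-1].observation)

tools: Tools = {"add": lambda expr: str(sum(int(x) for x in expr.split("+")))}
answer, trace = react("What is 2 + 3?", scripted_policy, tools)
print(answer)      # 5
print(len(trace))  # 1
```

In a real agent, `scripted_policy` would prompt a model with the task and the trace, then parse its reply into the three fields. The step budget and the error-as-observation handling stay the same.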

Trade-offs hit hard, though. Each loop? Another model call. At GPT-4o rates, a 10-step task costs $0.05—peanuts solo, bankruptcy at scale. Non-determinism too: Same input, wild paths. Mitigate with temperature=0, but don’t bet the farm.
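The arithmetic behind that cost figure is easy to check. The sketch below assumes per-million-token prices of $2.50 for input and $10.00 for output, with 1,000 prompt tokens and 250 output tokens per step. These are illustrative assumptions chosen so the flat case lands on the $0.05 figure, so substitute your provider’s current rate card and your own token counts. The `transcript_growth` parameter (also an assumption) models the fact that each ReAct step re-sends the growing history. That makes cost climb quadratically, not linearly, with step count.

```python
def react_task_cost(steps: int, prompt_tokens: int, output_tokens: int,
                    in_price_per_m: float, out_price_per_m: float,
                    transcript_growth: int = 0) -> float:
    """Dollar cost of one ReAct run, one model call per step.
    Step k re-sends the prompt plus `transcript_growth` tokens for each
    earlier step, because the Thought/Action/Observation history is
    part of every new prompt."""
    total = 0.0
    for k in range(steps):
        input_tokens = prompt_tokens + k * transcript_growth
        total += input_tokens * in_price_per_m / 1_000_000
        total += output_tokens * out_price_per_m / 1_000_000
    return total

# Assumed prices (USD per 1M tokens) and token counts; substitute your own.
PRICES = dict(in_price_per_m=2.50, out_price_per_m=10.00)
flat = react_task_cost(10, 1_000, 250, **PRICES)
growing = react_task_cost(10, 1_000, 250, **PRICES, transcript_growth=300)
print(f"{flat:.3f}")               # 0.050
print(f"{growing:.3f}")            # 0.084
print(f"{flat * 1_000_000:,.0f}")  # 50,000  (dollars per day at 1M tasks/day)
```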

When to use? Anything beyond one-shot: research, debugging code, customer support routing. Skip for trivial queries—chain-of-thought suffices.

Is Reflection the Secret Weapon for Reliability?

Reflection builds on ReAct. The agent critiques its own work: it evaluates outputs against explicit criteria and loops back if they fall short.
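A minimal generate-critique-revise loop looks like the sketch below. It assumes two hypothetical callables. `generate(task, feedback)` produces a draft, which would be a model call in practice. `critique(task, draft)` returns `None` when the draft passes and a feedback string when it fails. Here the critic is a deterministic rule check standing in for an LLM judge or a test suite, and `max_rounds` caps the retries.

```python
from typing import Callable, Optional, Tuple

Generate = Callable[[str, Optional[str]], str]
Critique = Callable[[str, str], Optional[str]]

def reflect(task: str, generate: Generate, critique: Critique,
            max_rounds: int = 3) -> Tuple[str, bool, int]:
    """Draft, self-critique against criteria, revise using the feedback.
    Returns (draft, passed, rounds_used). Each round costs two model calls."""
    feedback: Optional[str] = None
    draft = ""
    for rounds in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        feedback = critique(task, draft)
        if feedback is None:
            return draft, True, rounds
    return draft, False, max_rounds  # out of rounds: report failure honestly

def is_prime(n: int) -> bool:
    return n > 1 and all(n % d for d in range(2, int(n ** 0.5) + 1))

def critique_primes(task: str, draft: str) -> Optional[str]:
    # Hypothetical rule-based critic: exactly three numbers, all prime.
    nums = [int(x) for x in draft.split(",")]
    if len(nums) != 3:
        return "need exactly three numbers"
    bad = [n for n in nums if not is_prime(n)]
    return f"not prime: {bad}" if bad else None

def generate_primes(task: str, feedback: Optional[str]) -> str:
    # Stub generator: the first draft has a mistake, the revision fixes it.
    return "2, 3, 4" if feedback is None else "2, 3, 5"

draft, passed, rounds = reflect("List three primes.", generate_primes, critique_primes)
print(draft, passed, rounds)  # 2, 3, 5 True 2
```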

Game-changer for quality. DSPy benchmarks: Reflection boosts accuracy 15-20% on math and coding. Why? Raw models are poor at judging their own output; the pattern forces an explicit check.

But layers add latency. Double model calls per cycle. Fine for high-stakes (legal reviews), overkill for chatbots.

Stack ’em: ReAct + Reflection. Now you’re cooking: predictable and strong. Real-world? Adept.ai’s ACT-1 preview leaned heavily on this, quietly dominating enterprise pilots.

Planning and Tool Use: Scaling Without the Chaos

Planning decomposes tasks. Tree-of-thoughts style: Branch options, simulate, pick best.

Fits complex workflows—e-commerce order fulfillment, say. Trade-off: Explosion of paths. Prune ruthlessly, or costs skyrocket.
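A tree-of-thoughts-style planner reduces to beam search over partial plans: expand every kept state, score the children, and keep only the best `beam_width`. The sketch below assumes a toy problem (reach 24 from 3 using `+1` and `*2`). It uses a heuristic `score` where a real system would ask a model to rate each branch. `plan_beam` and its parameters are hypothetical names.

```python
import heapq
from typing import Callable, Iterable, List, Optional, Tuple

def plan_beam(start: int,
              expand: Callable[[int], Iterable[Tuple[str, int]]],
              score: Callable[[int], float],
              is_goal: Callable[[int], bool],
              beam_width: int = 2,
              max_depth: int = 6) -> Optional[List[str]]:
    """Beam search over partial plans: branch, score, keep the top `beam_width`."""
    frontier: List[Tuple[int, List[str]]] = [(start, [])]
    for _ in range(max_depth):
        # Branch: every surviving partial plan proposes its possible next steps.
        children = [(nxt, path + [act])
                    for state, path in frontier
                    for act, nxt in expand(state)]
        for state, path in children:
            if is_goal(state):
                return path
        if not children:
            return None
        # Prune: only the best-scoring branches survive to the next level.
        frontier = heapq.nlargest(beam_width, children, key=lambda c: score(c[0]))
    return None  # depth budget exhausted

TARGET = 24

def expand(n: int) -> List[Tuple[str, int]]:
    return [("+1", n + 1), ("*2", n * 2)]

plan = plan_beam(3, expand, score=lambda n: -abs(TARGET - n),
                 is_goal=lambda n: n == TARGET)
print(plan)  # ['*2', '*2', '*2']
```

The beam is the ruthless pruning. With branching factor k, each level scores at most `beam_width` × k children, so cost grows linearly with depth instead of as k^depth. The price is that a pruned branch may have held a shorter plan.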

Tool Use? Non-negotiable. But define contracts: Inputs, outputs, fallbacks. No vague ‘search the web’—specify endpoints, schemas.
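One way to encode such a contract is to declare the required argument names and types, the expected output type, and an optional fallback, then validate on every call. `ToolContract`, `order_status`, and the two lookup functions below are hypothetical. A production system would more likely use JSON Schema or whatever function-calling schema format its model provider expects.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional

@dataclass
class ToolContract:
    name: str
    input_types: Dict[str, type]          # required argument -> expected type
    output_type: type
    fn: Callable[..., Any]                # primary endpoint
    fallback: Optional[Callable[..., Any]] = None

    def call(self, args: Dict[str, Any]) -> Any:
        # 1. Input contract: exact argument names, correct types.
        missing = [k for k in self.input_types if k not in args]
        extra = [k for k in args if k not in self.input_types]
        if missing or extra:
            raise ValueError(f"{self.name}: missing={missing} extra={extra}")
        for key, expected in self.input_types.items():
            if not isinstance(args[key], expected):
                raise TypeError(f"{self.name}: '{key}' must be {expected.__name__}")
        # 2. Fallback: if the primary endpoint fails, try the backup once.
        try:
            result = self.fn(**args)
        except Exception:
            if self.fallback is None:
                raise
            result = self.fallback(**args)
        # 3. Output contract: never hand the model a malformed result.
        if not isinstance(result, self.output_type):
            raise TypeError(f"{self.name}: expected {self.output_type.__name__} output")
        return result

def flaky_lookup(order_id: str) -> str:
    raise TimeoutError("status service timed out")  # simulated outage

def cached_lookup(order_id: str) -> str:
    return f"{order_id}: pending (cached)"

order_status = ToolContract("order_status", {"order_id": str}, str,
                            flaky_lookup, cached_lookup)
print(order_status.call({"order_id": "A17"}))  # A17: pending (cached)
try:
    order_status.call({"query": "where is my order?"})
except ValueError as err:
    print(err)  # order_status: missing=['order_id'] extra=['query']
```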

Multi-agent? Hand-offs via shared state. Divide labor: Researcher → Writer → Editor. Orchestrate lightly—CrewAI style—or bugs multiply.
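A sketch of light orchestration over shared state follows. It assumes each agent is a function that reads and writes one shared object, and that the orchestrator runs a fixed Researcher → Writer → Editor sequence, halting if an agent leaves its output field empty. The agents are hypothetical stubs in place of model-backed roles. Frameworks like CrewAI wrap the same idea in their own abstractions.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

@dataclass
class SharedState:
    """The single object every agent reads from and writes to."""
    topic: str
    notes: List[str] = field(default_factory=list)
    draft: str = ""
    final: str = ""
    log: List[str] = field(default_factory=list)

# Hypothetical stub agents; in practice each would be a model-backed role.
def researcher(s: SharedState) -> None:
    s.notes = [f"finding 1 on {s.topic}", f"finding 2 on {s.topic}"]

def writer(s: SharedState) -> None:
    s.draft = "; ".join(s.notes)

def editor(s: SharedState) -> None:
    s.final = s.draft if s.draft.endswith(".") else s.draft + "."

# (name, agent, field the agent must fill before handing off)
PIPELINE: List[Tuple[str, Callable[[SharedState], None], str]] = [
    ("researcher", researcher, "notes"),
    ("writer", writer, "draft"),
    ("editor", editor, "final"),
]

def run_pipeline(topic: str) -> SharedState:
    state = SharedState(topic)
    for name, agent, output_field in PIPELINE:
        agent(state)
        # Fail fast on an empty hand-off instead of letting errors compound downstream.
        if not getattr(state, output_field):
            raise RuntimeError(f"{name} left '{output_field}' empty; stopping")
        state.log.append(name)
    return state

result = run_pipeline("agent patterns")
print(result.final)  # finding 1 on agent patterns; finding 2 on agent patterns.
print(result.log)    # ['researcher', 'writer', 'editor']
```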

How Do You Actually Deploy This Without Imploding?

Evaluate: Metrics matter. Success rate, latency, cost per task. A/B test patterns.
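The sketch below shows the bookkeeping for comparing patterns. It assumes each run is logged with its variant, outcome, latency, and cost. The records are made-up toy values for illustration, not measurements. Cost per successful task is included because a cheap pattern that fails often can end up more expensive per delivered result.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List

@dataclass
class Run:
    variant: str      # which pattern handled the task, e.g. "react"
    success: bool
    latency_s: float
    cost_usd: float

def summarize(runs: List[Run]) -> Dict[str, Dict[str, float]]:
    """Per-variant success rate, median latency, cost per task and per success."""
    report: Dict[str, Dict[str, float]] = {}
    for variant in sorted({r.variant for r in runs}):
        rs = [r for r in runs if r.variant == variant]
        wins = sum(r.success for r in rs)
        total_cost = sum(r.cost_usd for r in rs)
        report[variant] = {
            "n": len(rs),
            "success_rate": wins / len(rs),
            "median_latency_s": median([r.latency_s for r in rs]),
            "cost_per_task": total_cost / len(rs),
            # What you actually pay per delivered result.
            "cost_per_success": total_cost / wins if wins else float("inf"),
        }
    return report

# Toy records for illustration only; not measured results.
runs = [
    Run("react", True, 2.0, 0.05), Run("react", False, 3.0, 0.05),
    Run("react", True, 2.5, 0.04),
    Run("react+reflection", True, 4.0, 0.09), Run("react+reflection", True, 5.0, 0.10),
    Run("react+reflection", True, 4.5, 0.08),
]
for variant, stats in summarize(runs).items():
    print(variant, {k: round(v, 3) for k, v in stats.items()})
```

Three runs per arm proves nothing. A real A/B test needs randomized assignment of tasks to variants and enough runs to separate signal from noise.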

Scale: Guardrails everywhere. Rate limits, plus human-in-the-loop approval above dollar thresholds. Monitor with LangSmith or Phoenix and trace every call.
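The sketch below implements two of those guardrails: a sliding-window rate limiter and a human approval callback for any action above a dollar threshold. `RateLimiter`, `guarded_execute`, the $500 default threshold, and the stub reviewer are hypothetical. The injectable `clock` exists only so the example is deterministic.

```python
import time
from collections import deque
from typing import Callable, Deque

class RateLimiter:
    """Sliding window: at most `max_calls` calls in any `window_s` seconds."""
    def __init__(self, max_calls: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_calls = max_calls
        self.window_s = window_s
        self.clock = clock
        self.calls: Deque[float] = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Forget calls that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.window_s:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            self.calls.append(now)
            return True
        return False

def guarded_execute(action: str, amount_usd: float,
                    execute: Callable[[str], str],
                    approve: Callable[[str, float], bool],
                    limiter: RateLimiter, threshold_usd: float = 500.0) -> str:
    if not limiter.allow():
        return "rate_limited"
    # Human in the loop only for actions above the dollar threshold.
    if amount_usd > threshold_usd and not approve(action, amount_usd):
        return "rejected_by_human"
    return execute(action)

fake_now = [0.0]  # manual clock so the demo is deterministic
limiter = RateLimiter(max_calls=2, window_s=60.0, clock=lambda: fake_now[0])

def execute(action: str) -> str:
    return f"done: {action}"

def reviewer_says_no(action: str, amount: float) -> bool:
    return False  # stand-in for a human reviewer

outcomes = [
    guarded_execute("refund A17", 20.0, execute, reviewer_says_no, limiter),
    guarded_execute("refund B42", 900.0, execute, reviewer_says_no, limiter),
    guarded_execute("refund C99", 10.0, execute, reviewer_says_no, limiter),
]
fake_now[0] = 61.0  # the window has passed
outcomes.append(guarded_execute("refund C99", 10.0, execute, reviewer_says_no, limiter))
print(outcomes)
# ['done: refund A17', 'rejected_by_human', 'rate_limited', 'done: refund C99']
```

The rate check runs before the approval gate, so rejected attempts still count against the quota. That is a deliberate choice here: an agent that keeps proposing large refunds should slow down, not retry for free.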

Safety first. Jailbreak risks? Patterns with verification steps mitigate 60% of them, per Anthropic tests.

Unique insight time: this echoes early cloud migration. Everyone rushed to AWS and skipped infrastructure-as-code (IaC) patterns, and billions were wasted. Agentic AI is at that same fork. Nail patterns now and you’re positioned to own the $100B agent market McKinsey projects by 2030. Botch it, and watch incumbents like Microsoft lap you with Copilot’s pattern-perfected stack.

Frequently Asked Questions

What are agentic AI design patterns?

Reusable blueprints like ReAct or Reflection that structure agent reasoning, actions, and error handling for reliable builds.

Is ReAct enough for most agent projects?

Yes—for 80% of tasks. Add Reflection or Planning only when benchmarks demand it, to avoid cost bloat.

How do agentic AI patterns cut costs?

By enforcing simple loops and stopping conditions, slashing unnecessary model calls—teams report 20-40% savings in production.