Specops researchers hunched over glowing screens last week, pitting a $30,000 Nvidia H200 against the unassuming RTX 5090 in a password-cracking duel—and the gaming card lapped it.

RTX 5090 outperforms Nvidia H200 and AMD MI300X across every major hashing algorithm tested. That’s the headline from these fresh benchmarks, dropping a reality check on the AI GPU frenzy.

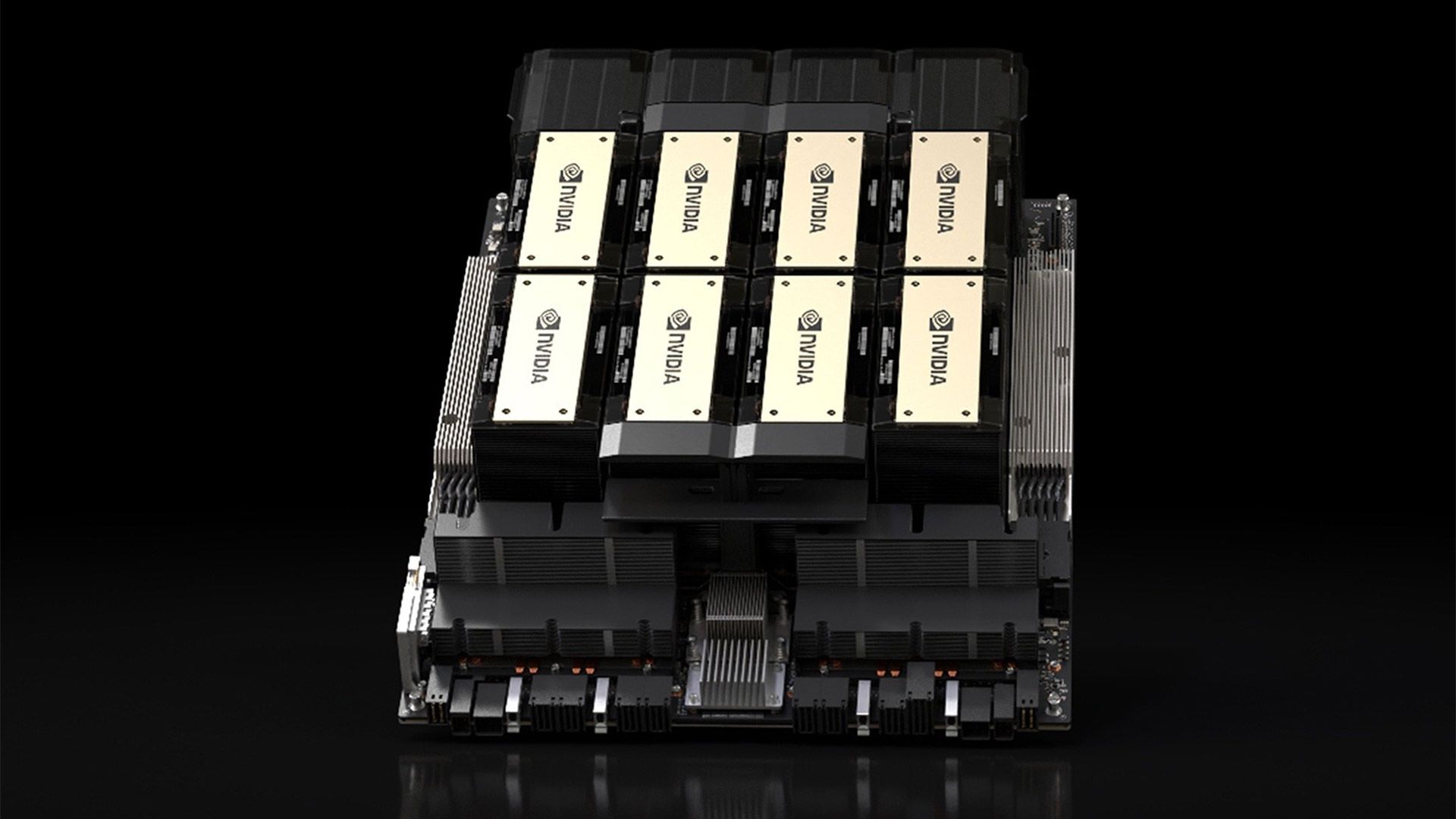

Look, datacenter GPUs like the H200 and MI300X cost a small fortune—$30K a pop—built for training massive models on FP4, BF16, the whole tensor toolkit. But password cracking? That’s old-school 32-bit integer grunt work, Hashcat’s domain. And here’s the kicker: consumer rigs crushed them.

The Raw Numbers Don’t Lie

They ran Hashcat on MD5, NTLM, bcrypt, SHA-256, SHA-512. Check this table—RTX 5090 leads every round:

| Algorithm | H200 | MI300X | RTX 5090 |
|---|---|---|---|
| MD5 | 124.4 GH/s | 164.1 GH/s | 219.5 GH/s |
| NTLM | 218.2 GH/s | 268.5 GH/s | 340.1 GH/s |
| bcrypt | 275.3 kH/s | 142.3 kH/s | 304.8 kH/s |
| SHA-256 | 15092.3 MH/s | 24673.6 MH/s | 27681.6 MH/s |
| SHA-512 | 5173.6 MH/s | 8771.4 MH/s | 10014.2 MH/s |

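On average? RTX 5090’s 20% ahead of MI300X, 63.7% over H200. The widest gap over the H200 is on SHA-512, at roughly 93.5%. Against the MI300X it is bcrypt, where the 5090 more than doubles AMD’s number. Brutal.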
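To see where the per-algorithm gaps come from, here is a minimal Python sketch that recomputes the RTX 5090's lead from the five table rows. The function names are made up for illustration, and the unweighted mean it uses is only one way to define "average". Specops does not say how its 20% and 63.7% figures were derived or whether more algorithms were included, so this sketch need not reproduce them.

```python
# Hash rates copied from the table above, normalised to hashes per second.
RATES = {
    "MD5":     {"H200": 124.4e9,   "MI300X": 164.1e9,   "RTX 5090": 219.5e9},
    "NTLM":    {"H200": 218.2e9,   "MI300X": 268.5e9,   "RTX 5090": 340.1e9},
    "bcrypt":  {"H200": 275.3e3,   "MI300X": 142.3e3,   "RTX 5090": 304.8e3},
    "SHA-256": {"H200": 15092.3e6, "MI300X": 24673.6e6, "RTX 5090": 27681.6e6},
    "SHA-512": {"H200": 5173.6e6,  "MI300X": 8771.4e6,  "RTX 5090": 10014.2e6},
}


def lead_percent(algorithm, baseline, challenger="RTX 5090"):
    """Percentage by which `challenger` out-hashes `baseline` on one algorithm."""
    row = RATES[algorithm]
    return (row[challenger] / row[baseline] - 1.0) * 100.0


def mean_lead_percent(baseline, challenger="RTX 5090"):
    """Unweighted mean of the per-algorithm leads (an assumed definition of 'average')."""
    leads = [lead_percent(algo, baseline, challenger) for algo in RATES]
    return sum(leads) / len(leads)


if __name__ == "__main__":
    for algo in RATES:
        print(f"{algo:8s} vs H200: {lead_percent(algo, 'H200'):6.1f}%   "
              f"vs MI300X: {lead_percent(algo, 'MI300X'):6.1f}%")
    print(f"mean lead vs H200:   {mean_lead_percent('H200'):.1f}%")
    print(f"mean lead vs MI300X: {mean_lead_percent('MI300X'):.1f}%")
```

Swapping in a geometric mean or adding rows for other hash modes would shift the averages, which is one reason a single headline percentage deserves a second look.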

“Testing shows the H200 and MI300X falling well behind the RTX 5090, despite both GPUs being significantly more expensive. On average, the RTX 5090 was 20% faster than the MI300X and a whopping 63.7% faster than the H200.”

That’s Specops straight-up. No spin.
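For anyone who wants to run a similar comparison on their own hardware, the sketch below builds Hashcat benchmark commands for the five algorithms in the table. The hash-mode numbers are Hashcat's standard ones and `-b` is its built-in benchmark switch. The article does not say which flags or settings Specops used, so treat this as one plausible setup, not their method; the helper name `benchmark_command` is invented for this example.

```python
import shlex

# Hashcat hash-mode numbers for the five algorithms in the table.
HASH_MODES = {
    "MD5": 0,
    "NTLM": 1000,
    "SHA-256": 1400,
    "SHA-512": 1700,
    "bcrypt": 3200,
}


def benchmark_command(mode, binary="hashcat"):
    """Build the argument list for `hashcat -b -m <mode>` (benchmark a single hash mode)."""
    return [binary, "-b", "-m", str(mode)]


if __name__ == "__main__":
    # Print the commands instead of running them, so the script is safe anywhere.
    for name, mode in HASH_MODES.items():
        print(f"# {name}")
        print(shlex.join(benchmark_command(mode)))
```

The script only prints the commands. Passing each list to `subprocess.run` would execute them on a machine where Hashcat is installed.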

And why? Hashcat thrives on INT32 ops: pure integer compute, no fancy matrix multiplies. AI GPUs skimp there. The H200 has half as many INT32 cores as FP32 cores, because it’s all-in on tensors. MI300X boasts better raw INT32 than the 5090, sure, but Hashcat’s optimizations favor Nvidia’s GeForce silicon, and AMD lags on software polish.

Consumer GPUs? Balanced beasts. They juggle gaming, rendering, crypto—hence the edge.
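To make "INT32 grunt work" concrete, here is a plain-Python sketch of MD5 following RFC 1321. It is not Hashcat's kernel code, only an illustration that the inner loop is nothing but 32-bit adds, rotates, and bitwise logic, with no floating-point math in the hot path and nothing a tensor core can speed up. The names `rotl32` and `md5` are chosen for this example.

```python
import math
import struct

MASK32 = 0xFFFFFFFF  # every working value is kept inside 32 bits

# Per-step left-rotation amounts and the 64 sine-derived constants from RFC 1321.
SHIFTS = ([7, 12, 17, 22] * 4 + [5, 9, 14, 20] * 4 +
          [4, 11, 16, 23] * 4 + [6, 10, 15, 21] * 4)
K = [int(abs(math.sin(i + 1)) * 2**32) & MASK32 for i in range(64)]


def rotl32(x, n):
    """Rotate a 32-bit integer left by n bits."""
    return ((x << n) | (x >> (32 - n))) & MASK32


def md5(message: bytes) -> bytes:
    """Return the 16-byte MD5 digest of `message`."""
    a0, b0, c0, d0 = 0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476

    # Padding: 0x80, zeros up to 56 mod 64, then the bit length as 64-bit little-endian.
    bit_len = (len(message) * 8) & 0xFFFFFFFFFFFFFFFF
    padded = message + b"\x80"
    padded += b"\x00" * ((56 - len(padded) % 64) % 64)
    padded += struct.pack("<Q", bit_len)

    for offset in range(0, len(padded), 64):
        m = struct.unpack("<16I", padded[offset:offset + 64])
        a, b, c, d = a0, b0, c0, d0
        for i in range(64):
            # Each step: a bitwise mixing function, then adds and one rotate.
            if i < 16:
                f, g = (b & c) | (~b & d), i
            elif i < 32:
                f, g = (d & b) | (~d & c), (5 * i + 1) % 16
            elif i < 48:
                f, g = b ^ c ^ d, (3 * i + 5) % 16
            else:
                f, g = c ^ (b | (~d & MASK32)), (7 * i) % 16
            f = (f + a + K[i] + m[g]) & MASK32
            a, d, c = d, c, b
            b = (b + rotl32(f, SHIFTS[i])) & MASK32
        a0 = (a0 + a) & MASK32
        b0 = (b0 + b) & MASK32
        c0 = (c0 + c) & MASK32
        d0 = (d0 + d) & MASK32

    return struct.pack("<4I", a0, b0, c0, d0)


if __name__ == "__main__":
    print(md5(b"abc").hex())
```

A GPU cracker runs this same sequence in native 32-bit registers across thousands of candidate passwords at once, so throughput tracks integer ALU count and clock speed far more than memory bandwidth or matrix units. The result can be compared against `hashlib.md5` as a sanity check.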

Why Do AI GPUs Suck at This?

Simple: specialization. These datacenter titans chase ML efficiency, not general compute. It’s like building a Ferrari for Formula 1, then entering it in a demolition derby—tires spin, frame buckles.

Market dynamics scream it. Nvidia shipped 3.76 million datacenter GPUs last quarter, up 427% year-over-year. Revenue? $26B from data center alone. But if AI demand cools—poof. What’s the pivot? Password cracking won’t save ‘em.

Here’s my take, one you won’t find in the original: this echoes the 2010s crypto boom-bust. Remember when GPUs ruled mining? Then ASICs ate their lunch. AI GPUs risk the same fate—hyper-specialized, resale value tanks if workloads shift. Bold call: by 2026, we’ll see hybrid datacenter cards blending INT32 muscle with tensor cores, or Nvidia/AMD lose share to Intel’s Gaudi or custom silicon.

Specops nails it: “The problem with these AI GPUs is how Hashcat is processed; password cracking relies on 32-bit integer operations and is extremely compute-intensive. It’s the exact opposite of machine learning workloads.”

They’re streamlined for one job. Great, if AI’s eternal. But markets turn. Remember Ethereum’s switch from proof-of-work to proof-of-stake? GPU miners got sidelined.

Does This Kill the AI GPU Hype?

Not yet. Training LLMs? These monsters shine—H100s, H200s print money for hyperscalers. But for edge cases, yeah, rethink the buy.

Enterprise IT folks salivating over MI300X for “versatile” compute? Wake up. Gaming cards—cheaper, power-sipping—handle crypto, rendering, cracking better. Rent a 5090 cluster on Vast.ai for pennies versus buying an H200.

And power draw? The RTX 5090 is rated at 575W per card, while a single H200 SXM module can run up to 700W and a full rack of them pulls many kilowatts. Efficiency matters when your electric bill rivals a mortgage.
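The bang-for-buck and efficiency claims can be put into numbers with a rough sketch like the one below, using the MD5 rates from the benchmark table. Only the $30,000 H200 price comes from this article. The RTX 5090 price and both wattage figures are assumptions (rated board power, not measured draw under Hashcat), and the `Card` class and helper names are hypothetical, so substitute your own numbers before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    md5_hashes_per_s: float  # from the benchmark table
    price_usd: float         # ASSUMPTION unless noted below
    board_power_w: float     # ASSUMPTION: rated board power, not measured draw


CARDS = [
    # $30,000 is the article's figure; 700 W is an assumed rating.
    Card("H200", 124.4e9, 30_000.0, 700.0),
    # Both the price and the wattage here are assumptions for illustration.
    Card("RTX 5090", 219.5e9, 2_000.0, 575.0),
]


def hashes_per_dollar(card):
    """MD5 hashes per second bought by each dollar of purchase price."""
    return card.md5_hashes_per_s / card.price_usd


def hashes_per_watt(card):
    """MD5 hashes per second delivered per watt of rated board power."""
    return card.md5_hashes_per_s / card.board_power_w


if __name__ == "__main__":
    for card in CARDS:
        print(f"{card.name:9s} {hashes_per_dollar(card) / 1e6:10.1f} MH/s per $   "
              f"{hashes_per_watt(card) / 1e6:8.1f} MH/s per W")
```

Rated power overstates or understates real draw depending on the workload, so a fair comparison would log actual consumption during the benchmark run.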

But here’s the rub—password cracking’s a niche. Hackers love it, sure, but most orgs care about AI throughput. Still, this test exposes the hype: not every workload bows to tensor cores.

What Happens When the AI Bubble Bursts?

Specops tossed that in cheekily—AI GPUs need a “second job.” Funny, but prescient. Compute cycles sit idle 70% of the time in many clusters, per recent Gartner stats.

Repurpose for cracking? Nah. Outside of authorized audits and pen tests, that’s illegal. But rendering farms and scientific sims? Consumer GPUs already dominate there. Prediction: resale market floods with ex-AI silicon at fire-sale prices, perfect for hobbyists.

Nvidia’s PR spins H200 as “enterprise king,” but these benches cut through. Corporate hype meets cold data.

Short term, gamers win—5090’s a beast. Long term? AI GPU makers diversify or die. History’s littered with one-trick ponies.

Frequently Asked Questions

Why is the RTX 5090 better at password cracking than H200 or MI300X?

It balances INT32 compute with Hashcat optimizations; AI GPUs prioritize tensor ops for ML, skimping on general-purpose cores.

What does this mean for buying AI GPUs?

Stick to them for training/inference; for cracking, rendering, or crypto, consumer cards like RTX 5090 offer better bang-for-buck.

Will AI GPUs improve at non-AI tasks?

Likely—expect hybrid designs soon, blending ML efficiency with broader compute to hedge against workload shifts.