Imagine firing up your laptop Friday night, typing a few prompts, and by Sunday brunch, you’ve got an AI churning out images that’d make pros sweat. Not some pipe dream — this is now.

A scrappy team just pulled off the impossible: training a text-to-image model in 24 hours on 32 H200 GPUs, total bill $1,500. Real people — indie creators, hobbyists, small studios — suddenly hold the keys to god-tier image gen without begging OpenAI for scraps.

It’s electric. AI’s platform shift hits warp speed here.

Why a 24-Hour Text-to-Image Speedrun Matters for You

Back in the wild early days, diffusion models guzzled millions of dollars in compute: think Flux or Stable Diffusion’s first beasts, trained on clusters that’d bankrupt a startup. Now? Pocket change. This isn’t hype; it’s proof the field’s matured into something personal, like going from room-sized computers to your iPhone.

They stacked every trick from prior experiments: x-prediction for pixel-space training (ditching clunky VAEs), perceptual losses like LPIPS and DINOv2 for sharper convergence, token routing via TREAD to slash compute. Start at 512px, fine-tune to 1024px. Sequence lengths stay tame: at patch size 32, 512px is 256 tokens and 1024px is 1,024.

And the kicker? Open-source code on GitHub. Fork it. Tweak it. Your turn.

“This speedrun is not just a fun experiment. It will likely serve as the foundation for our large-scale training recipe going forward.”

That’s straight from the PRX crew. Love the candor — no corporate fluff, just engineers geeking out.

But here’s my own spin, absent from their post: this mirrors the Linux kernel’s birth. Torvalds hacked together a free OS on his own 386 PC in ’91; now it powers the world. Expect text-to-image to fork wildly, with community diffs piling up and birthing niche models for anime, realism, whatever. Bold prediction: by 2026, your phone runs a custom 24h-trained genAI, no cloud required.

Can You Train a Text-to-Image Model in 24 Hours Too?

Hell yes — if you’ve got the GPUs. 32 H200s at $2/hour each? Crunch the math: 24 * 32 * 2 = $1,536. Cloud providers like Lambda or CoreWeave make it bookable. But it’s the recipe that democratizes.
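If you want to plug in your own cluster size or hourly rate, the arithmetic above fits in a few lines. This is only a back-of-the-envelope sketch: the function name `rental_cost` is made up for illustration, and the $2/hour figure is the article's assumed rate, not a quote from any provider.

```python
def rental_cost(num_gpus: int, hours: float, usd_per_gpu_hour: float) -> float:
    """Total rental cost in USD for a fixed-size GPU cluster."""
    return num_gpus * hours * usd_per_gpu_hour


# The speedrun setup: 32 GPUs for 24 hours at an assumed $2 per GPU-hour.
cost = rental_cost(num_gpus=32, hours=24, usd_per_gpu_hour=2.0)
print(f"${cost:,.0f}")  # prints $1,536
```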

Pixel-space magic means no VAE dance. Predict raw pixels. Sequence length? With patch size 32, 512px is a tidy 256 tokens (16 × 16 patches) and 1024px is 1,024 (32 × 32): still sane. Patch size 32 with a 256-dim bottleneck: elegant hack.
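The token counts follow directly from the patch size: a square image is cut into non-overlapping patches, one token each. A quick sketch (the helper name `num_tokens` is hypothetical; only the patch size of 32 comes from the post):

```python
def num_tokens(image_size: int, patch_size: int = 32) -> int:
    """Number of patch tokens for a square image split into square patches."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be a multiple of patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side * patches_per_side


for size in (512, 1024):
    print(size, num_tokens(size))
# prints:
# 512 256
# 1024 1024
```

Doubling the resolution quadruples the token count, which is why starting at 512px and only fine-tuning at 1024px keeps most of the run cheap.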

Perceptual losses? Game-changer. LPIPS for textures that pop, DINOv2 for semantics that sing. They tweaked: full-image pooling, all noise levels. Weights: 0.1 LPIPS, 0.01 DINO. Overhead? Negligible. Boom — faster convergence, prettier pics.
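Here is roughly how those weights could combine into one training loss. Treat it as a sketch under assumptions: the post gives the 0.1 and 0.01 weights, but the simple weighted sum, the weight of 1.0 on the main loss, and the name `total_loss` are my guesses at the shape, not the team's code. The three inputs are plain floats standing in for already-computed loss values.

```python
LPIPS_WEIGHT = 0.1   # from the post
DINO_WEIGHT = 0.01   # from the post


def total_loss(main_loss: float, lpips_loss: float, dino_loss: float) -> float:
    """Weighted sum of the main objective and the two perceptual terms.

    Assumption: the main loss has weight 1.0 and the terms are simply added.
    """
    return main_loss + LPIPS_WEIGHT * lpips_loss + DINO_WEIGHT * dino_loss


# Toy values, purely illustrative.
print(total_loss(main_loss=1.0, lpips_loss=0.5, dino_loss=2.0))
```

With weights this small, the perceptual terms nudge the gradient rather than dominate it.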

Token routing with TREAD: 50% of tokens skip the middle layers and are re-injected later. Cheaper steps. Routed models get wonky with classifier-free guidance (CFG)? Self-guidance fixes it by contrasting dense and routed predictions, no unconditional branch needed.
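To make the routing idea concrete, here is a toy version in plain Python. It is a sketch of the general pattern (run everything through early layers, send only a random half through the middle, merge back before the late layers), not TREAD's actual implementation. The function names, the random selection, and the stand-in "layers" are all assumptions for illustration.

```python
import random


def pick_kept_indices(num_tokens: int, keep_ratio: float, seed: int) -> list:
    """Randomly choose which token positions go through the middle layers."""
    rng = random.Random(seed)
    n_keep = max(1, int(num_tokens * keep_ratio))
    return sorted(rng.sample(range(num_tokens), n_keep))


def routed_forward(tokens, early, middle, late, keep_ratio=0.5, seed=0):
    """Toy routed forward pass over a list of token values."""
    hidden = early(tokens)  # every token sees the early layers
    kept = pick_kept_indices(len(hidden), keep_ratio, seed)
    processed = middle([hidden[i] for i in kept])  # only the kept subset
    merged = list(hidden)  # skipped tokens carry their early activations forward
    for i, value in zip(kept, processed):
        merged[i] = value  # re-inject processed tokens at their positions
    return late(merged)  # every token sees the late layers


# Stand-in layers: early adds 1, middle multiplies by 10, late is identity.
out = routed_forward(
    [0.0] * 8,
    early=lambda xs: [x + 1.0 for x in xs],
    middle=lambda xs: [x * 10.0 for x in xs],
    late=lambda xs: xs,
)
print(out.count(10.0), out.count(1.0))  # prints 4 4
```

The saving comes from the middle block, which only ever sees half the sequence.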

It’s a cocktail of papers: Back to Basics (x-pred), PixelGen (perceptual), TREAD (routing). Stacked smart, not brute force.

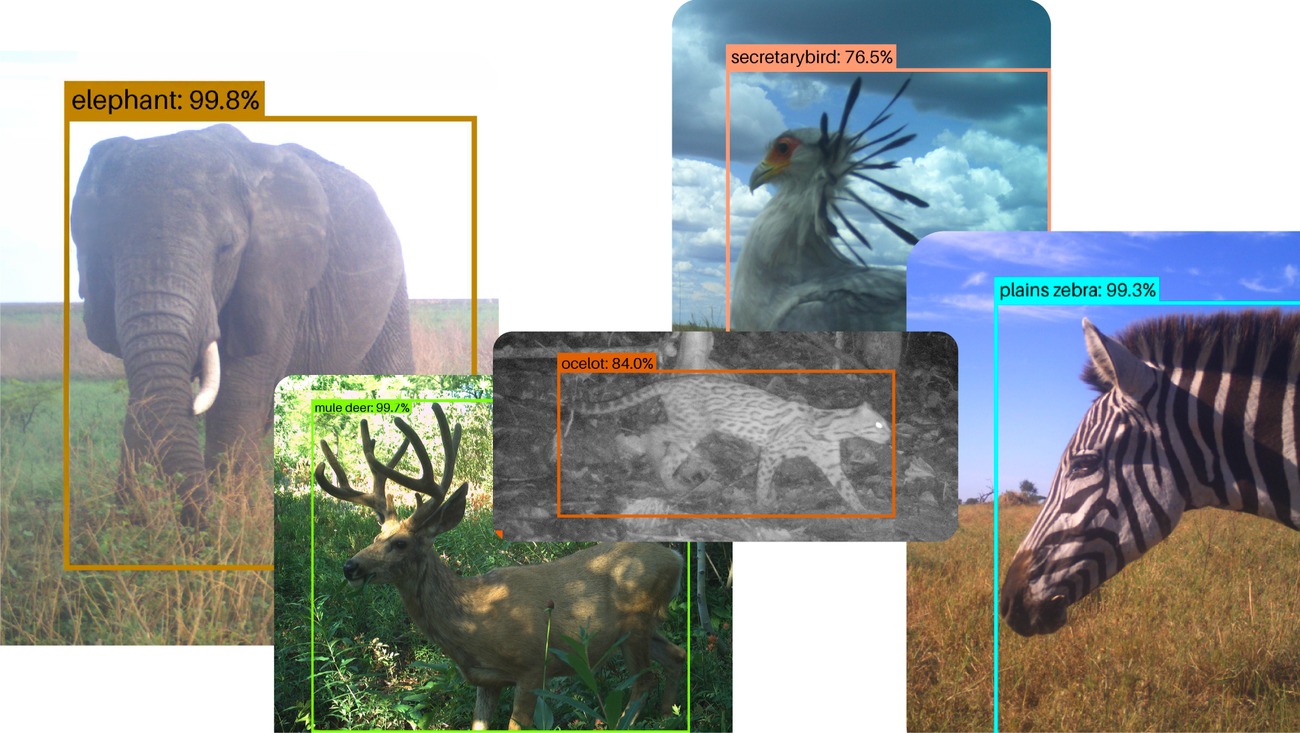

Results? Stunning. Competitive FID, visuals rival big labs.

Skeptical? Reproduce it. Code’s there.

The Hidden Edge: Pixel-Space Revival

VAEs? So 2022. Pixel-space revives CV classics: plug in losses designed for human perception, not latents. No decoding hacks. Perceptual supervision flows naturally.

Analogy time: latents are like cooking through a funhouse mirror — distortions creep in. Pixels? Straight shot, chef’s kiss.

They skipped the 256→512 ramp-up. Direct to 512px. Why? Modern hardware laughs at token counts. H200s flex.

TREAD over SPRINT? Simplicity wins. With only 256 tokens at 512px, the sequence is already short, so the simpler method is plenty fast.

Self-guidance? Inspired by Krause et al., but lean. Undertrained models shine.
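One plausible reading of self-guidance, sketched below: treat the routed (token-sparse) prediction as the weaker branch and the dense prediction as the stronger one, then extrapolate away from the weak branch the same way CFG extrapolates away from the unconditional one. The exact formula and the name `self_guidance` are assumptions on my part; the post only says dense and routed predictions are contrasted.

```python
def self_guidance(dense_pred, routed_pred, scale):
    """Extrapolate from the routed prediction toward (and past) the dense one.

    Assumed form: guided = routed + scale * (dense - routed).
    scale = 1.0 returns the dense prediction unchanged.
    """
    return [r + scale * (d - r) for d, r in zip(dense_pred, routed_pred)]


dense = [1.0, 2.0]
routed = [0.5, 2.5]
print(self_guidance(dense, routed, scale=1.0))  # prints [1.0, 2.0]
print(self_guidance(dense, routed, scale=2.0))  # prints [1.5, 1.5]
```

Both branches use the same conditioning, so no separate unconditional pass is needed.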

This isn’t isolated; it’s the new baseline. Large-scale? Just scale this recipe.

Wonder hits: what if you swap datasets? LAION-A for niche? Train on your photos — family AI artist.

Why Does Pixel-Space Training Beat Latent Diffusion?

Latent diffusion: compress to VAE bottle, diffuse there, decode back. Perceptual losses? Awkward — decode every eval? Or latent-space proxies that drift from human eyes.

Pixels: direct. LPIPS, DINO — as intended. Low-level fidelity, high-level meaning.

Side effect? Toolbox unlocked. Classical CV floods back.

Their tweaks — pooled images, all timesteps — empirical wins. Small changes, big lifts.

Compute? The perceptual losses add a whisper to the transformer’s roar.

Historical parallel (my insight): it’s like the shift from vector to raster graphics in the ’80s. Raster won for photorealism, and pixels rule again.

Prediction: 90% of new models ditch VAEs by the end of the year.

But — corporate spin alert? None here; pure engineering. Refreshing.

Real-World Speedrun Breakdown

Hardware: 32 H200s. Throughput optimized.

Training: flow matching objective + aux losses.

Resolutions: 512px base, 1024px fine-tune.

Code: GitHub, full repro.
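For the curious, here is what a flow matching objective with x-prediction can look like on a toy vector. Everything specific here is an assumption, not the repo's code: the interpolation convention (`z_t = t * x + (1 - t) * eps`, so t = 1 is the clean image), the choice to score the loss on velocity, the clamp value, and all function names.

```python
def noisy_sample(x, eps, t):
    """Linear interpolation between noise (t = 0) and data (t = 1)."""
    return [t * xi + (1.0 - t) * ei for xi, ei in zip(x, eps)]


def velocity_from_x_pred(x_pred, z_t, t, min_denom=1e-3):
    """Convert a predicted clean image into a velocity estimate."""
    denom = max(1.0 - t, min_denom)  # clamp to avoid dividing by ~0 near t = 1
    return [(xp - zi) / denom for xp, zi in zip(x_pred, z_t)]


def flow_matching_loss(x_pred, x, eps, t):
    """Mean squared error between predicted and target velocity."""
    z_t = noisy_sample(x, eps, t)
    v_target = [xi - ei for xi, ei in zip(x, eps)]
    v_pred = velocity_from_x_pred(x_pred, z_t, t)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_target)) / len(x)


x = [0.2, -0.4]    # toy "clean pixels"
eps = [1.0, 0.5]   # toy noise
perfect = flow_matching_loss(x_pred=x, x=x, eps=eps, t=0.3)
wrong = flow_matching_loss(x_pred=[0.0, 0.0], x=x, eps=eps, t=0.3)
print(perfect < 1e-12, wrong > 0.0)  # prints True True
```

The network outputs pixels, which is what lets LPIPS and DINOv2 losses apply directly to its prediction.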

Evolution flex: from millions of dollars to about $1,500. Field’s leaped.

For creators: weekend warrior status unlocked.

Frequently Asked Questions

What does training a text-to-image model in 24 hours cost?

Around $1,500 on 32 H200 GPUs at $2/hour — cloud-rentable, fully reproducible with open code.

Can I train my own text-to-image AI at home?

With access to H200s or equivalent GPUs (A100s should work, just slower), yes: fork the GitHub repo, swap in your data, run.

Is pixel-space training better than latent diffusion?

Often yes: direct perceptual losses, no VAE overhead, cleaner pixels — especially under tight budgets.