Ever asked yourself why we’re all chasing fancier neural nets when a bare-bones policy gradient algo from the ’90s still crushes CartPole in pure NumPy?

Policy Gradients: REINFORCE from Scratch with NumPy. That’s the hook here—no fluff, just code that works. I’ve seen Silicon Valley hype cycles come and go over 20 years; this one’s a throwback that cuts through the BS.

Look, DQN was cute for discrete actions: pick left, pick right, argmax your way to glory. But real-world stuff? Continuous controls on a robot arm, or the throttle on a self-driving car? There’s no cheap argmax over an infinite action space. Policy gradients flip the script: parameterize the policy directly, sample actions from its probability soup, then gradient-ascend toward the ones that paid off. Simple. No Q-values cluttering things up.
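To make the continuous case concrete, here’s a minimal sketch that is not part of the article’s CartPole code. It assumes a hypothetical linear Gaussian policy with mean w·x and a fixed standard deviation sigma. There’s no argmax anywhere: you sample an action, then nudge the weights along the gradient of that action’s log-probability, scaled by the return it earned.

```python
import numpy as np

def gaussian_policy_step(w, x, sigma, rng):
    """Sample a continuous action from N(w @ x, sigma^2) (hypothetical policy).

    Returns the action and the gradient of log pi(a | x) with respect to w.
    """
    mu = w @ x                          # policy mean for this state
    a = rng.normal(mu, sigma)           # sampled action: no argmax needed
    grad_logp = (a - mu) / sigma**2 * x # d/dw of log N(a; w @ x, sigma^2)
    return a, grad_logp

def reinforce_update(w, grad_logp, ret, lr):
    """One gradient-ascent step toward actions that earned a high return."""
    return w + lr * ret * grad_logp

rng = np.random.default_rng(0)
w = np.zeros(4)
x = np.array([0.1, -0.2, 0.05, 0.3])
a, g = gaussian_policy_step(w, x, sigma=0.5, rng=rng)
w = reinforce_update(w, g, ret=1.0, lr=1e-3)
```

The same score-function trick drives the article’s discrete agent; only the distribution changes, from Gaussian to Bernoulli.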

And here’s the kicker: the original author built the whole REINFORCE beast in about 100 lines. Forward pass. Backprop by hand. RMSProp optimizer. Gymnasium’s CartPole-v1 as the victim. It converges to the 500-step max within about 3,000 episodes. No frameworks. Just NumPy and the chain rule doing God’s work.

The animation shows the agent converging to the maximum score of 500 within about 3,000 episodes, using nothing but NumPy. No deep learning framework, no replay buffer — just a policy, a gradient, and RMSProp.

That quote? Straight from the source. Proof in the pudding—or the plot. Rolling average spikes to 500, green dashed line hit. Clean as a whistle.

Why Implement Policy Gradients from Scratch in NumPy?

But why? PyTorch has autograd; life’s too short for manual derivatives. Here’s my cynical take: frameworks abstract away the math until you forget it’s just matrix multiplies and sigmoids. This NumPy hack forces you to own it: a sigmoid for the action probability, a ReLU hidden layer, and discount_rewards sweeping backward from the last timestep. A running sum for those discounted returns? Elegant, old-school.

Hyperparams tuned smart: gamma=0.99 for the longer horizon (CartPole-v1 runs 500 steps, not v0’s 200), and the learning rate bumped up to 1e-3. Xavier init on the weights. One parameter update every 5 episodes. It’s not rocket science; it’s calibration.
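Here’s a minimal sketch of that setup. The values (gamma, learning rate, batch of 5, H=100 hidden units, D=4 inputs, RMSProp decay and epsilon) come from the article; the dict-of-arrays layout, the seed, and the exact Xavier variant are assumptions. Here “Xavier” means scaling standard-normal weights by 1/sqrt(fan_in), since the article doesn’t say which variant it uses.

```python
import numpy as np

# Hyperparameters as described in the article
H = 100              # hidden units
D = 4                # CartPole observation dimensions
GAMMA = 0.99         # discount for CartPole-v1's 500-step horizon
LEARNING_RATE = 1e-3
BATCH_SIZE = 5       # episodes per parameter update
DECAY_RATE = 0.99    # RMSProp decay
EPSILON = 1e-5       # RMSProp stabilizer

def init_model(D=D, H=H, seed=0):
    """Two-layer policy net; weights scaled by 1/sqrt(fan_in) (assumed Xavier variant)."""
    rng = np.random.default_rng(seed)
    return {
        'W1': rng.standard_normal((H, D)) / np.sqrt(D),  # observation -> hidden
        'W2': rng.standard_normal(H) / np.sqrt(H),       # hidden -> single logit
    }

model = init_model()
rmsprop_cache = {k: np.zeros_like(v) for k, v in model.items()}  # per-weight RMS history
```

Keeping W2 as a flat vector means the output is a single logit, which is all a two-action policy needs.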

Unique insight time, and one the original skips: this echoes Williams’s 1992 REINFORCE paper, back when RL was scribbled on napkins, not scaled on TPUs. History repeats because the core gradient (the gradient of the log-prob times the return, or an advantage once you subtract a baseline) hasn’t changed. Bold prediction? As edge AI booms (think drones, not datacenters), NumPy-style micro-implementations will explode. Frameworks bloat; math doesn’t.
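For reference, here’s the estimator in its standard textbook form (not a formula taken from the article). $G_t$ is the discounted return from step $t$, which is what discount_rewards computes, and $b$ is an optional baseline; the article’s per-episode standardization of returns plays a roughly similar role.

$$
\nabla_\theta J(\theta) \approx \sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t \mid s_t)\,\bigl(G_t - b\bigr),
\qquad
G_t = \sum_{k=t}^{T-1} \gamma^{\,k-t}\, r_k
$$

With a sigmoid policy $p = \sigma(z)$ over two actions, $\partial \log \pi_\theta(a_t \mid s_t) / \partial z = a_t - p$, which is exactly the dlogp term in the code.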

The forward pass? Dead simple.

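```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))  # squash a logit into a probability

def forward(x, model):
    """Return P(action=1 | x) and the hidden activations (needed for backprop)."""
    h = np.dot(model['W1'], x)     # (H,) hidden pre-activations
    h[h < 0] = 0                   # ReLU
    logp = np.dot(model['W2'], h)  # scalar logit (despite the name, not a log-prob)
    p = sigmoid(logp)              # probability of taking action 1
    return p, h
```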

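Action: draw a uniform random number and take action 1 if it’s below p, which is exactly a Bernoulli(p) sample. Then dlogp = action - p, the gradient of log π(action | x) with respect to the logit. Scale it by the return and that’s your policy gradient estimator: unbiased, folks.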
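A small sketch of that sampling step, plus a finite-difference check that action - p really is the derivative of log π(action | x) with respect to the logit (the quantity the original code calls logp). The helper names sample_action and log_prob are illustrative, not from the article.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sample_action(p, rng):
    """Bernoulli sample: action 1 with probability p, otherwise action 0."""
    return 1 if rng.uniform() < p else 0

def log_prob(action, z):
    """log pi(action | logit z) for a sigmoid (Bernoulli) policy."""
    p = sigmoid(z)
    return np.log(p) if action == 1 else np.log(1.0 - p)

rng = np.random.default_rng(0)
z = 0.4                        # example logit
p = sigmoid(z)
action = sample_action(p, rng)
dlogp = action - p             # analytic gradient of log pi w.r.t. z

# A central finite difference should agree with the analytic gradient
eps = 1e-6
numeric = (log_prob(action, z + eps) - log_prob(action, z - eps)) / (2 * eps)
print(action, dlogp, numeric)
```

The check holds for both actions: for action 1 the derivative of log σ(z) is 1 - p, and for action 0 the derivative of log(1 - σ(z)) is -p.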

Does REINFORCE Actually Work Without All the Fancy Stuff?

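Skeptical vet mode: yes, but don’t kid yourself. Variance is high, so the discounted returns are standardized per episode (mean zero, unit std). RMSProp keeps step sizes in check by dividing each gradient by a running RMS of its recent values, with decay=0.99 and epsilon=1e-5. No experience replay? That’s by design: REINFORCE is on-policy, so every update must come from episodes sampled by the current policy.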
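Both variance-taming pieces fit in a few lines. This is a sketch assuming the dict-of-arrays model used by the forward and backward snippets, with a matching dict for the RMSProp cache; the function names are hypothetical, while the decay and epsilon defaults are the article’s. Note the `+=`: the update ascends the gradient because we’re maximizing return.

```python
import numpy as np

def standardize(returns, eps=1e-8):
    """Shift discounted returns to mean 0 and scale to unit std (per episode)."""
    returns = np.asarray(returns, dtype=float)
    return (returns - returns.mean()) / (returns.std() + eps)

def rmsprop_ascent(model, grad, cache, lr=1e-3, decay=0.99, epsilon=1e-5):
    """In-place RMSProp step that *ascends* the policy-gradient objective."""
    for k, g in grad.items():
        cache[k] = decay * cache[k] + (1 - decay) * g**2    # running mean of squared grads
        model[k] += lr * g / (np.sqrt(cache[k]) + epsilon)  # per-parameter scaled step
```

Standardizing centers the returns, so roughly half of each episode’s actions get pushed down instead of every action getting pushed up.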

Training loop’s a beast: accumulate gradients in a buffer, apply an update every batch_size=5 episodes, and reset the env whenever an episode is terminated or truncated. The log prints the 100-episode average, which climbs steadily:
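The batching logic can be sketched as below. Assumptions: a Gymnasium-style env whose `reset()` returns `(obs, info)` and whose `step()` returns `(obs, reward, terminated, truncated, info)`; `forward`, `backward`, `discount` and `update` passed in as functions with the shapes used in the article’s snippets (`update` being any in-place ascent step such as RMSProp); and hypothetical names `run_episode` and `train`. Passing the pieces in keeps the sketch testable against a stub environment without Gymnasium installed.

```python
import numpy as np

def run_episode(env, model, forward, rng):
    """Roll out one episode; return stacked inputs, hiddens, dlogps and rewards."""
    xs, hs, dlogps, rs = [], [], [], []
    obs, _ = env.reset()
    done = False
    while not done:
        x = np.asarray(obs, dtype=float)
        p, h = forward(x, model)
        action = 1 if rng.uniform() < p else 0   # Bernoulli sample
        obs, reward, terminated, truncated, _ = env.step(action)
        xs.append(x); hs.append(h); dlogps.append(action - p); rs.append(reward)
        done = terminated or truncated           # stop on either end condition
    return (np.vstack(xs), np.vstack(hs),
            np.array(dlogps, dtype=float).reshape(-1, 1), np.array(rs, dtype=float))

def train(env, model, forward, backward, discount, update, episodes,
          batch_size=5, gamma=0.99, seed=0):
    """Accumulate gradients over batch_size episodes, then apply one update."""
    rng = np.random.default_rng(seed)
    grad_buffer = {k: np.zeros_like(v) for k, v in model.items()}
    totals = []
    for ep in range(1, episodes + 1):
        epx, eph, epdlogp, epr = run_episode(env, model, forward, rng)
        ret = discount(epr, gamma)
        ret = (ret - ret.mean()) / (ret.std() + 1e-8)   # standardize per episode
        grad = backward(eph, epdlogp * ret.reshape(-1, 1), epx, model)
        for k in model:
            grad_buffer[k] += grad[k]
        if ep % batch_size == 0:
            update(model, grad_buffer)                  # e.g. an RMSProp ascent step
            for k in grad_buffer:
                grad_buffer[k][:] = 0                   # start a fresh batch
        totals.append(epr.sum())
    return totals
```

With the real environment you would pass something like `gymnasium.make("CartPole-v1")` as `env`.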

Episode 500, 100-ep avg: 123.4

...

Final 100-episode average: 499.2

(Log lines paraphrased; the final average lands near the max.) The plot? np.convolve for the rolling mean, with the blue line hugging the green dashed line at 500. Faint grid, crisp legend. No smoke and mirrors.
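The rolling mean is a single np.convolve call. A sketch, assuming `episode_rewards` is a list of per-episode totals and a 100-episode window to match the log’s 100-ep average. Plotting is then plain Matplotlib (`plt.plot` for the curve, `plt.axhline(500, color='green', linestyle='--')` for the target line), left out here so the sketch runs with NumPy alone.

```python
import numpy as np

def rolling_mean(episode_rewards, window=100):
    """Mean of each run of `window` consecutive episodes.

    mode='valid' only keeps fully covered windows, so the output has
    len(episode_rewards) - window + 1 entries.
    """
    rewards = np.asarray(episode_rewards, dtype=float)
    if rewards.size < window:
        return np.array([])
    return np.convolve(rewards, np.ones(window) / window, mode='valid')

print(rolling_mean([1, 2, 3, 4], window=2))  # [1.5 2.5 3.5]
```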

Backward pass—hand-rolled glory.

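```python
import numpy as np

def backward(eph, epdlogp, epx, model):
    """Backprop a stacked episode of T steps through the two-layer policy.

    eph: (T, H) hidden activations, epdlogp: (T, 1) return-weighted dlogp,
    epx: (T, D) observations. Returns gradients shaped like the model's weights.
    """
    dW2 = np.dot(eph.T, epdlogp).ravel()   # (H,) gradient for W2
    dh = np.outer(epdlogp, model['W2'])    # (T, H) gradient flowing into h
    dh[eph <= 0] = 0                       # ReLU backprop: inactive units pass no gradient
    dW1 = np.dot(dh.T, epx)                # (H, D) gradient for W1
    return {'W1': dW1, 'W2': dW2}
```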

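dh gets zeroed wherever the ReLU was inactive: straight chain rule. Before calling backward, weight each step’s dlogp by its standardized discounted return (discounted_epr * epdlogp), so actions that led to good outcomes become more likely and the rest less likely. PG magic.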
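Hand-rolled backprop deserves a gradient check. This self-contained sketch restates forward and backward from the snippets above, builds a made-up random episode, and compares backward against central finite differences of the surrogate objective sum over t of A_t · log π(a_t | x_t), where the random A_t stand in for the standardized discounted returns. The surrogate function and the sizes D, H, T are illustrative choices.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, model):
    h = np.dot(model['W1'], x)
    h[h < 0] = 0                              # ReLU
    return sigmoid(np.dot(model['W2'], h)), h

def backward(eph, epdlogp, epx, model):
    dW2 = np.dot(eph.T, epdlogp).ravel()
    dh = np.outer(epdlogp, model['W2'])
    dh[eph <= 0] = 0                          # ReLU backprop
    return {'W1': np.dot(dh.T, epx), 'W2': dW2}

def surrogate(model, epx, actions, adv):
    """sum_t A_t * log pi(a_t | x_t); its gradient is the REINFORCE update."""
    total = 0.0
    for x, a, A in zip(epx, actions, adv):
        p, _ = forward(x, model)
        total += A * (np.log(p) if a == 1 else np.log(1.0 - p))
    return total

rng = np.random.default_rng(0)
D, H, T = 4, 6, 5
model = {'W1': rng.standard_normal((H, D)), 'W2': rng.standard_normal(H)}
epx = rng.standard_normal((T, D))
actions = rng.integers(0, 2, size=T)
adv = rng.standard_normal(T)

# Analytic gradient: stack per-step h, weight (a - p) by the advantages
ps, hs = zip(*(forward(x, model) for x in epx))
epdlogp = ((actions - np.array(ps)) * adv).reshape(-1, 1)
grad = backward(np.vstack(hs), epdlogp, epx, model)

# Numerical gradient by central differences, one weight at a time
eps = 1e-5
max_err = 0.0
for k, W in model.items():
    for idx in np.ndindex(W.shape):
        old = W[idx]
        W[idx] = old + eps
        up = surrogate(model, epx, actions, adv)
        W[idx] = old - eps
        down = surrogate(model, epx, actions, adv)
        W[idx] = old                          # restore the weight
        max_err = max(max_err, abs((up - down) / (2 * eps) - grad[k][idx]))
print(f"max abs error: {max_err:.2e}")
```

A tiny max error means the chain rule was rolled correctly; a large one usually points at a transposed product or a missing ReLU mask.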

Who’s Profiting from This NumPy RL Revival?

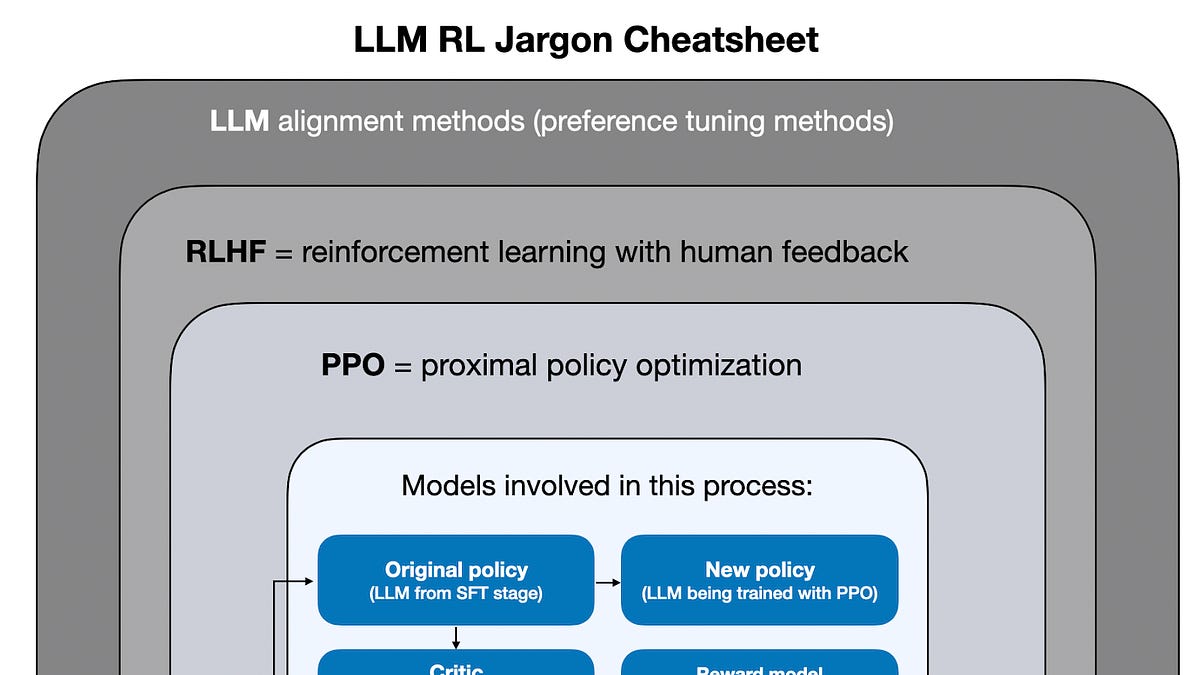

My perennial question: follow the money. Gymnasium’s free; NumPy’s eternal. No one’s hawking this as SaaS. It’s a developer’s delight for interviews (“implement REINFORCE”: nailed) or for explaining the precursors to PPO and A2C. Companies? OpenAI’s early days were NumPy-heavy before scaling. Today? Maybe robotics startups pinching pennies on embedded hardware.

Downsides? Variance bites on harder envs; reach for baselines or actor-critic next. CartPole’s a toy. Scale to Atari? Variance apocalypse without tricks.
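To see why a baseline helps, here’s a toy computation that is not from the article: a one-step Bernoulli policy with P(action 1) = p, where action 1 pays 2 and action 0 pays 1. Enumerating both actions gives the exact mean and variance of the score-function estimator (a - p)(R - b), with and without subtracting the baseline b = E[R].

```python
def estimator_stats(p, reward, baseline=0.0):
    """Exact mean and variance of g = (a - p) * (R(a) - b) for a ~ Bernoulli(p).

    The mean is the true gradient of E[R] w.r.t. the logit for any constant
    baseline b; only the variance depends on b.
    """
    outcomes = [(p, 1), (1 - p, 0)]   # (probability, action)
    mean = sum(prob * (a - p) * (reward[a] - baseline) for prob, a in outcomes)
    second = sum(prob * ((a - p) * (reward[a] - baseline)) ** 2 for prob, a in outcomes)
    return mean, second - mean ** 2

p = 0.3
reward = {0: 1.0, 1: 2.0}
expected_reward = p * reward[1] + (1 - p) * reward[0]   # E[R] = 1.3

print(estimator_stats(p, reward))                    # no baseline
print(estimator_stats(p, reward, expected_reward))   # baseline = E[R]
```

The mean stays at p(1 - p) = 0.21 in both cases, while the variance drops from about 0.607 to about 0.034. Same direction, far less noise: that’s the whole pitch for baselines.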

Still, 100 lines balancing a pole? Underrated flex. PR spin calls it ‘reinventing the wheel’—nah, it’s stripping paint off to see the engine.

Discount rewards func—pure gold.

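```python
import numpy as np

def discount_rewards(r, gamma):
    """Discounted return G_t = r_t + gamma * G_{t+1}, computed from the last step backward."""
    discounted_r = np.zeros_like(r, dtype=float)  # float, so integer rewards aren't truncated
    running_add = 0.0
    for t in reversed(range(r.size)):
        running_add = running_add * gamma + r[t]
        discounted_r[t] = running_add
    return discounted_r
```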

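No vectorized fanfare needed: the loop runs once per timestep, at most 500 times per CartPole-v1 episode, which is trivial next to the network’s matrix math (H=100 hidden units, D=4 state dimensions).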
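A quick sanity check on the discount. The loop is restated (with the float-dtype guard) so the snippet runs alone. Three rewards of 1 with gamma=0.99 should give returns of 1 + 0.99 + 0.99², 1 + 0.99, and 1. The matrix version is an O(T²) cross-check added here for illustration, not something the article uses.

```python
import numpy as np

def discount_rewards(r, gamma):
    discounted_r = np.zeros_like(r, dtype=float)
    running_add = 0.0
    for t in reversed(range(r.size)):
        running_add = running_add * gamma + r[t]
        discounted_r[t] = running_add
    return discounted_r

def discount_rewards_matrix(r, gamma):
    """Same result via G = M @ r, with M[t, k] = gamma**(k - t) for k >= t, else 0."""
    T = r.size
    k_minus_t = np.arange(T)[None, :] - np.arange(T)[:, None]
    M = np.where(k_minus_t >= 0, gamma ** np.maximum(k_minus_t, 0), 0.0)
    return M @ r

r = np.ones(3)
print(discount_rewards(r, 0.99))   # approximately [2.9701, 1.99, 1.0]
```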


Frequently Asked Questions

What is the REINFORCE algorithm?

REINFORCE is a policy gradient method that samples actions from a parameterized policy (like a neural net), computes the gradients of their log-probabilities, weights those by the discounted returns, and updates the parameters via gradient ascent. No value function needed.

How to implement policy gradients from scratch in NumPy?

Use a sigmoid output for CartPole’s two actions, a ReLU hidden layer, manual backprop via the chain rule, and RMSProp for updates. Compute discounted returns backward in time, standardize them, and multiply them into the dlogps. The full CartPole code runs about 100 lines.

Does REINFORCE work on CartPole?

Absolutely. With gamma=0.99 and an update every 5 episodes, the 100-episode average reaches the 500-step max or close to it. Variance is high, but baselines reduce it; the plain version shines on simple envs.