A monstrous AI GPU, starved for data, latches onto a PCIe x16 link screaming at 256 GT/s per lane. One terabyte per second, bidirectional. Insane.

But here’s the kicker: that vision, the PCI Express roadmap barreling toward PCIe 8.0 and 1TB/s, isn’t some effortless clock bump anymore. It’s a war against physics, where every doubling in bandwidth slams into shrinking voltage margins, crosstalk nightmares, and PCB fabs that can’t keep up without jacking costs sky-high.

PCIe started simple back in 2004, ditching PCI’s shared-bus chaos for point-to-point lanes at 2.5 GT/s. Usable bandwidth doubled nicely with each gen (5, 8, 16, 32 GT/s); the step from 5 to 8 GT/s still counted as a doubling because 128b/130b encoding kicked 8b/10b and its 20% overhead to the curb. Enthusiasts got PCIe 4.0 GPUs just two years post-spec; the PCIe 5.0 spec hit 128 GB/s bidirectional on x16 in 2019, perfect for data-center AI beasts but still pricey for your gaming rig.
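To see why the jump from 5 to 8 GT/s still doubled usable bandwidth, here is a minimal sketch of the arithmetic. The transfer rates and line codes are the ones named above; the function and dictionary names are made up for illustration.

```python
# Usable x16 bandwidth per direction for PCIe 1.0 through 5.0.
# Names here are illustrative; the rates and line codes come from the text.

GENERATIONS = {
    # gen: (GT/s per lane, payload bits, coded bits)
    "1.0": (2.5, 8, 10),
    "2.0": (5.0, 8, 10),
    "3.0": (8.0, 128, 130),
    "4.0": (16.0, 128, 130),
    "5.0": (32.0, 128, 130),
}

def usable_gbytes_per_s(gt_per_s, payload_bits, coded_bits, lanes=16):
    """One-direction bandwidth in GB/s after line-code overhead."""
    return gt_per_s * payload_bits / coded_bits * lanes / 8

def bandwidth_table():
    """Map each generation to its rounded x16 one-direction bandwidth."""
    return {
        gen: round(usable_gbytes_per_s(*params), 2)
        for gen, params in GENERATIONS.items()
    }

if __name__ == "__main__":
    for gen, gbs in bandwidth_table().items():
        print(f"PCIe {gen} x16: {gbs} GB/s per direction")
```

PCIe 5.0 works out to roughly 63 GB/s each way, or about 126 GB/s combined. The familiar 128 GB/s figure is the same number before line-code overhead.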

Then, bam—PCIe 6.0 in 2022 flips the script.

Why Did PCIe Ditch NRZ for PAM4?

PAM4. Four voltage levels per symbol, packing two bits where NRZ squeezed one. Doubles to 64 GT/s without redlining the clock. Genius, right?
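A toy sketch of the difference, assuming a Gray-coded mapping (a common choice for PAM4, where neighboring levels differ by one bit). The level numbers 0 to 3 stand in for four voltages, and the exact table below is an illustrative assumption, not copied from the PCIe specification.

```python
# Gray-coded PAM4: two bits per symbol, adjacent levels differ by a single bit.
# The mapping table is an illustrative assumption.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}

def nrz_encode(bits):
    """NRZ: one bit per symbol, two levels."""
    return list(bits)

def pam4_encode(bits):
    """PAM4: consume bits in pairs, emit one of four levels per pair."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]

if __name__ == "__main__":
    bits = [1, 0, 0, 1, 1, 1, 0, 0]
    print(len(nrz_encode(bits)), "NRZ symbols")
    print(len(pam4_encode(bits)), "PAM4 symbols:", pam4_encode(bits))
```

Same eight bits, half the symbols. That is how 64 GT/s rides on a 32 Gbaud symbol rate, the same rate PCIe 5.0 already runs at.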

Wrong. Or half-right. Margins crater—tiny noise spikes kill signals. PCI-SIG slaps on FEC (forward error correction), DSP-heavy equalization, FLIT-mode encoding (242B/256B). Controllers morph into power-hungry mixed-signal monsters, gobbling silicon and watts.

“Multi-level signaling methods like PAM4 compress voltage margins dramatically, making them far more sensitive to electrical noise, jitter, crosstalk between lanes, and even tiny imperfections in PCB manufacturing.”

That’s straight from the spec docs, and it nails the pain. PCIe 6.0 PHYs aren’t interfaces anymore; they’re mini-processors wrestling analog hell.
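How much margin is lost? A back-of-the-envelope sketch, assuming the same peak-to-peak swing and evenly spaced levels (real links add equalization, jitter and noise, so treat this as a floor on the pain, not a measurement):

```python
import math

def eye_height_fraction(levels):
    """Height of each eye as a fraction of full swing, for evenly spaced levels."""
    return 1 / (levels - 1)

def margin_penalty_db(levels):
    """Eye-height loss relative to two-level NRZ, in dB."""
    return 20 * math.log10(eye_height_fraction(2) / eye_height_fraction(levels))

if __name__ == "__main__":
    print(f"PAM4 eye height: {eye_height_fraction(4):.3f} of NRZ")
    print(f"Penalty vs NRZ: {margin_penalty_db(4):.2f} dB")
```

Four levels mean three stacked eyes, each a third the height of the single NRZ eye: roughly a 9.5 dB hit before any other impairment shows up.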

Distance? Forget it. At 64 GT/s, signals crap out beyond inches without retimers—active repeaters that add latency, power, cost. Platforms choke on validation; one rogue via in your motherboard, and poof, link fails.

PCIe 7.0 (2025) cranks to 128 GT/s, still PAM4 but refined. PCIe 8.0? The 1TB/s holy grail via x16 at 256 GT/s. But wait—rumors swirl of optical extensions, CXL 3.0 synergies for memory pooling. PCI-SIG’s not spilling full beans yet.
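The headline numbers fall straight out of the per-lane rates. A quick sketch of the raw arithmetic (before FLIT and FEC overhead; the names are illustrative):

```python
# Raw x16 bandwidth, both directions combined, from the per-lane rates above.
ROADMAP_GT_PER_S = {"6.0": 64, "7.0": 128, "8.0": 256}

def raw_x16_bidirectional_gbytes(gt_per_s, lanes=16):
    """Raw GB/s across all lanes, summing both directions."""
    per_direction = gt_per_s * lanes / 8  # bits to bytes
    return per_direction * 2

if __name__ == "__main__":
    for gen, rate in ROADMAP_GT_PER_S.items():
        print(f"PCIe {gen}: {raw_x16_bidirectional_gbytes(rate):.0f} GB/s")
```

PCIe 8.0 lands at 1024 GB/s raw, which is where the 1TB/s figure comes from. Usable throughput will sit below that once protocol overhead is counted.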

Can PCIe 8.0 Actually Hit 1TB/s Without Bankrupting Us?

Short answer: maybe, but not cheaply. Early gens rode Moore’s Law coattails—faster transistors, done. Now? Interconnects rule. It’s the 1990s bus wars redux, when PCI supplanted ISA because shared bandwidth starved CPUs.

Unique angle here—and PCI-SIG glosses this over—the real shift is architectural. PCIe isn’t just fatter pipes; it’s forcing a rethink of system design. AI racks already stitch NICs, DPUs, accelerators via PCIe fabrics. At 1TB/s, expect retimer farms between chips, exotic dielectrics in boards (think liquid-crystal polymers over FR4), maybe even silicon photonics sneaking in for longer hauls.

Cost? PCIe 5.0 SSDs are mainstream-ish now, but 6.0? Enterprise-only. 8.0 hits consumer desktops around 2030, if ever—bet on servers first, trickling down like DDR5 did.

Look, PCI-SIG’s three-year cadence holds (mostly), but slips loom. PCIe 3-to-4 took forever; 6.0’s PAM4 was no picnic. My bold call: PCIe 8.0 launches spec-only by 2028, real silicon 2030, but integration challenges cap practical bandwidth at 70-80% spec—retimers everywhere, power budgets exploding.

History echoes this. Remember HyperTransport? AMD’s PCIe rival, peaked then faded because scaling hurt. Or InfiniBand, enterprise king but pricey. PCIe wins on ecosystem—ubiquitous, backward-compatible—but physics doesn’t care about incumbency.

How Does This Reshape AI Hardware?

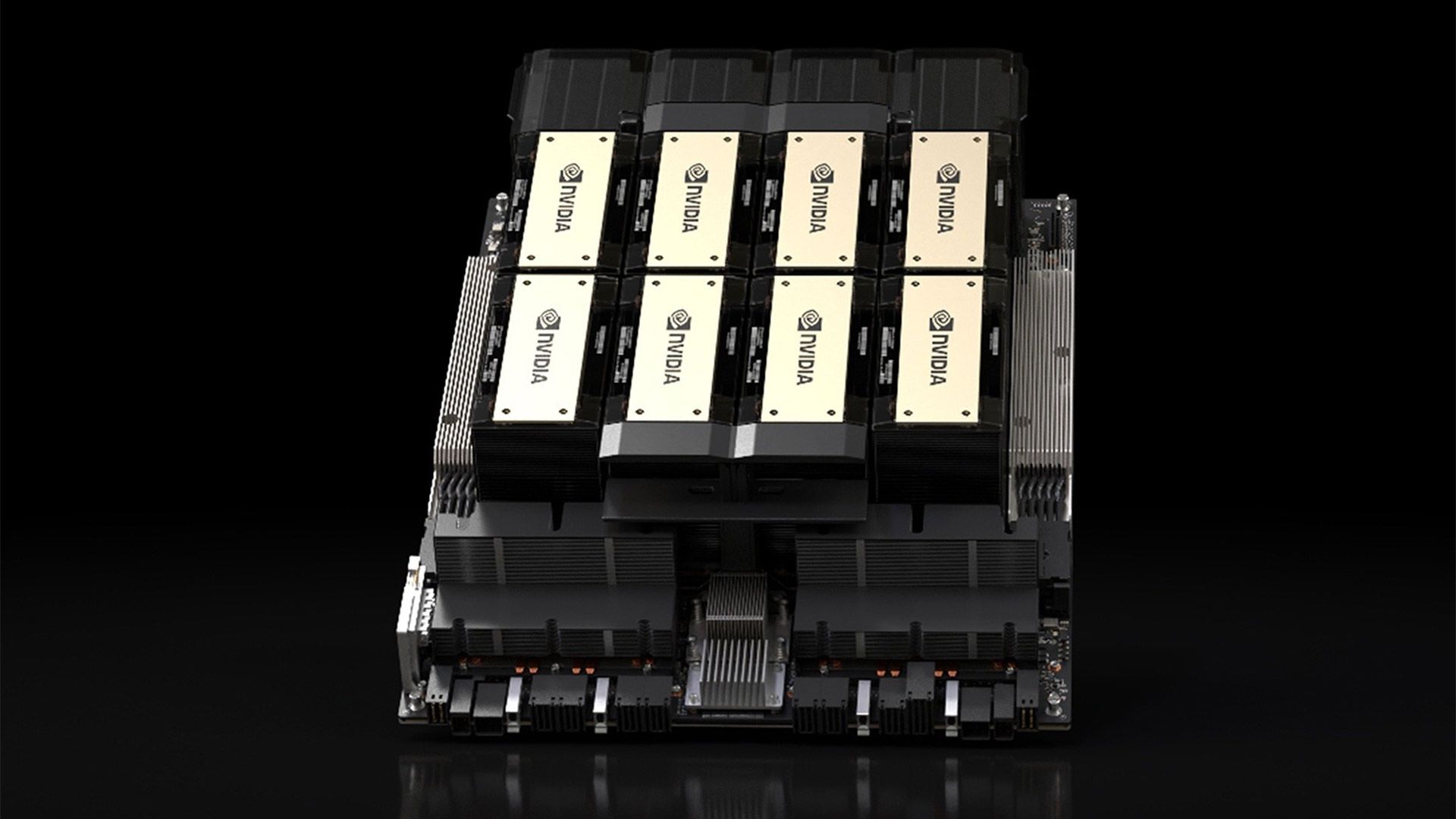

AI’s the killer app. GPUs like Nvidia’s H100 slurp PCIe 5.0 x16 (128 GB/s raw, both directions combined), but training clusters crave more. Enter NVLink for GPU-to-GPU traffic, though PCIe still glues the fabric together. Future Blackwell or whatever: 1TB/s means exascale without custom stacks.

Yet skepticism reigns. Corporate spin calls it “evolutionary”; truth? Revolutionary pain. Retimers add 10-20ns latency—peanuts for storage, killer for coherent memory over CXL. Power? A PCIe 8.0 PHY might draw 20W per x16, versus 5W today. Racks melt.
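To put "peanuts versus killer" in numbers, here is a rough sketch. The 20 ns per retimer comes from the range above; the baseline latencies (80 µs for an SSD read, 200 ns for a CXL memory load) and the two-retimer path are placeholder assumptions picked for illustration, not measurements.

```python
# Relative cost of retimer latency for two very different workloads.
# ASSUMPTIONS: baseline latencies and retimer count are illustrative placeholders.
SSD_READ_NS = 80_000   # assumed SSD read latency
CXL_LOAD_NS = 200      # assumed CXL memory load latency

def added_latency_ns(retimers, per_retimer_ns):
    """Total one-way latency added by a chain of retimers."""
    return retimers * per_retimer_ns

def relative_overhead(base_ns, retimers, per_retimer_ns):
    """Added latency as a fraction of the baseline access time."""
    return added_latency_ns(retimers, per_retimer_ns) / base_ns

if __name__ == "__main__":
    for name, base in (("SSD read", SSD_READ_NS), ("CXL load", CXL_LOAD_NS)):
        print(f"{name}: +{relative_overhead(base, 2, 20):.2%}")
```

Under those assumptions, two retimers are a rounding error for storage (about 0.05%) but a 20% tax on every memory load.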

Platforms adapt weirdly. Intel’s Lunar Lake integrates PCIe 5.0 lanes; AMD’s chips do too. But desktops lag. Your next RTX 5090? PCIe 5.0 x16, plenty for 8K ray-tracing, but AI tinkerers will riser-chain for bandwidth.

And materials. PCBs need sub-1mil traces, low-loss laminates—costs 5x consumer boards. Fabs balk; supply chains strain like they did for HBM.

So, the ‘how’: PAM4 + FEC + DSP scales bits per symbol, but trades away reach and efficiency. The ‘why’: the AI/data deluge demands it. Nvidia’s 1TB/s teases aren’t hype; they’re necessities.

But here’s my critique: PCI-SIG’s roadmap feels like Icarus—flying high on specs, ignoring wing-melt. Without photonics or 3D packaging breakthroughs, 1TB/s stays niche.

Beyond PCIe: What’s Next?

CXL 3.0, built on the PCIe 6.0 PHY, pools DRAM across a fabric: shared memory for AI without HBM premiums. UCIe chiplets bridge dies via PCIe-like links. Optical PCIe? DARPA dreams, but fab-ready? 2035.

PCIe endures because it’s the spine: GPUs, SSDs, NICs all plug in. But evolution slows; each doubling gets harder-won, like clock speeds post-3GHz.

Wrapping the arc: from 2.5 GT/s toy to 256 GT/s beast, PCIe redefined computing. Challenges mount, but so does need. Watch for PCIe 7.0 kits next year—proof the grind pays.

Frequently Asked Questions

What is the PCI Express roadmap to 1TB/s?

PCI-SIG plans PCIe 7.0 (128 GT/s, 2025), 8.0 (256 GT/s, ~2028), hitting 1TB/s bidirectional x16 via PAM4 and advanced error correction.

When will PCIe 8.0 be available in PCs?

Spec by 2028; server silicon 2029-30, consumer GPUs/SSDs post-2030 due to cost and integration hurdles.

Why is PCIe 6.0 so hard to implement?

PAM4 squeezes signal margins, demands FEC/DSP, retimers for distance, and premium PCBs—ballooning power, latency, validation woes.