Imagine you’re knee-deep in a hotfix, merge request pending, and bam: Atlantis goes dark for half an hour. No plans. No applies. Just waiting. At roughly 100 restarts a month, that’s 50 hours of pure engineering frustration, on-call paged, deadlines stretched. This one-line Kubernetes fix? It handed back 600 hours a year to real people building stuff.

Brutal.

And here’s the kicker: it wasn’t some exotic bug. Just Kubernetes being too safe, too default, as your persistent volumes balloon with millions of Terraform state files.

Why Atlantis Restarts Turned into a 30-Minute Nightmare

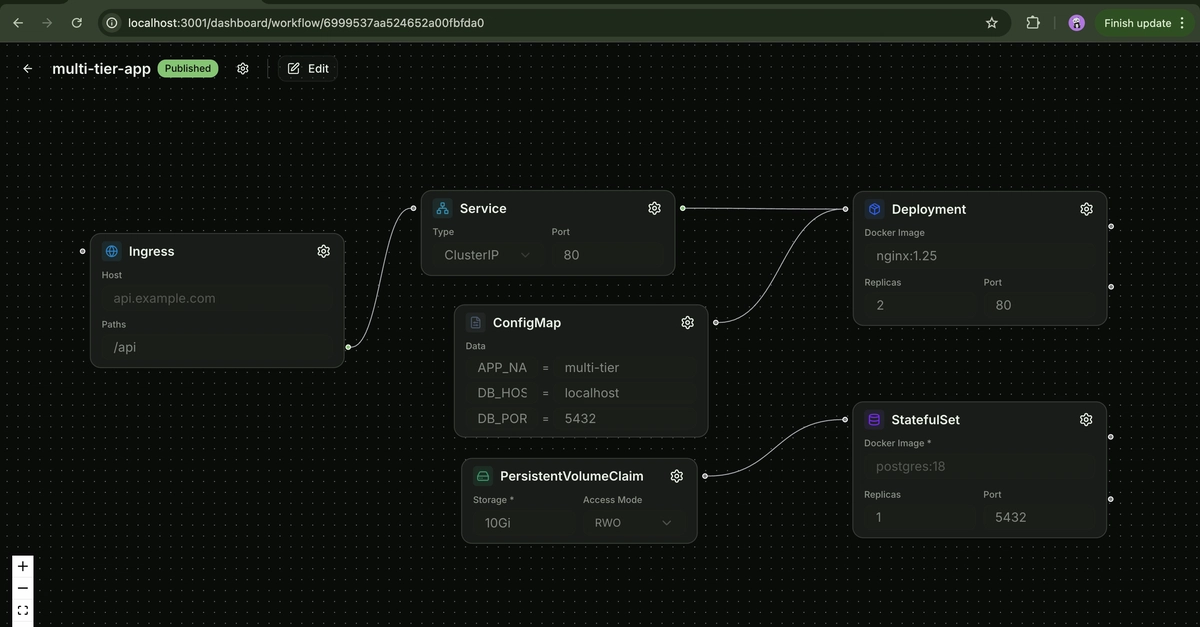

Atlantis — that trusty Terraform orchestrator — runs as a singleton StatefulSet on Kubernetes. It locks repos during GitLab MRs, plans changes, applies ‘em. Beautiful for IaC at scale. But restarts? For credential rotations or onboarding? They’d drag on forever.

The pod schedules fast. Image pulls in seconds. Then… nothing. Stuck at Init:0/1. Events look innocent: pulling git-sync, mounting volumes. But peek at kubelet logs in Kibana, and the truth spills out.

[pod_workers.go:1298] "Error syncing pod, skipping" err="unmounted volumes=[atlantis-storage], unattached volumes=[], failed to process volumes=[]: context deadline exceeded" pod="atlantis/atlantis-0"

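Mounting the PV, a Ceph-backed beast crammed with inode-hogging files, times out. Millions of tiny repo state files from dozens of Terraform projects. Kubernetes’ safe default? It walks the whole directory tree on mount. When a pod sets fsGroup, kubelet by default recursively fixes ownership and permissions on every file in the volume each time it mounts. Safe for small stuff. Catastrophic at scale.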

Like trying to vacuum a stadium during a concert — it’ll take ages.

How They Hunted the Beast in Kubelet Logs

No surface-level events helped. So they dove into kubelet, that node-side workhorse running as a systemd service. Grab the node name from the pod, then filter that node’s kubelet logs for ‘atlantis’.

Boom. PV mounts eventually, secrets too. But then errors: context deadlines on volume sync. The filesystem had already been grown once to escape inode exhaustion, because the Ceph setup offers no way to tune mkfs inode ratios, and it still chokes on mount.
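The log hunt can be sketched as a small filter over exported kubelet log lines. This is a minimal sketch, assuming the logs have been dumped to plain text and follow the "Error syncing pod" format quoted earlier; the function name `find_mount_timeouts` is hypothetical, and the pattern accepts both straight and curly quotes because copied logs often mix them.

```python
import re

# Quote characters that may wrap kubelet log fields after copy/paste.
QUOTES = '"\u201c\u201d'

# Matches the "Error syncing pod" line format quoted in this article.
SYNC_ERROR = re.compile(
    r"Error syncing pod.*?unmounted volumes=\[(?P<volumes>[^\]]*)\]"
    + r".*?context deadline exceeded[" + QUOTES + r"]\s+pod=[" + QUOTES + r"]"
    + r"(?P<pod>[^" + QUOTES + r"]+)"
)


def find_mount_timeouts(lines, pod_filter="atlantis"):
    """Return (pod, [volume names]) for each mount timeout matching pod_filter."""
    hits = []
    for line in lines:
        match = SYNC_ERROR.search(line)
        if match and pod_filter in match.group("pod"):
            volumes = [v.strip() for v in match.group("volumes").split(",") if v.strip()]
            hits.append((match.group("pod"), volumes))
    return hits


sample = [
    '[pod_workers.go:1298] "Error syncing pod, skipping" '
    'err="unmounted volumes=[atlantis-storage], unattached volumes=[], '
    'failed to process volumes=[]: context deadline exceeded" '
    'pod="atlantis/atlantis-0"',
]
print(find_mount_timeouts(sample))
```

Filtering on the pod name keeps unrelated sync errors out of the result, which matters on a busy node.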

They could’ve masked it — longer alert windows, bigger volumes. Nah. Dig deeper.

The gap? Kubelet grinding through directory listings on that massive PV. Every restart, re-mount from scratch. With 100 restarts monthly at roughly 30 minutes each? 50 hours blocked. Paging on-call. Multiply by 12? 600 hours gone.
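The arithmetic is worth spelling out, since the headline number rests on it. This sketch uses the figures given in this article (about 100 restarts a month, about 30 minutes each); the function name is made up for illustration.

```python
def hours_blocked(restarts_per_month, minutes_per_restart):
    """Return (hours per month, hours per year) lost to slow restarts."""
    monthly = restarts_per_month * minutes_per_restart / 60
    return monthly, monthly * 12


monthly, yearly = hours_blocked(100, 30)
print(monthly, yearly)  # 50.0 600.0
```

Plug in your own restart count and mount time to see whether the problem is worth chasing in your cluster.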

The One-Line Kubernetes Fix That Changed Everything

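Here’s the genius part. The fix is one line in the Atlantis StatefulSet YAML, and it targets what kubelet does to the volume at mount time.

The tempting guess is a filesystem mount flag. Something like:

    mountOptions:
      - "noatime"

That trims access-time writes, but it does not stop a recursive walk, and mountOptions is a field on the StorageClass or PersistentVolume rather than on the pod. The better match for a timeout on a volume with millions of files is disabling the recursive permission change. When a pod’s securityContext sets fsGroup, kubelet by default (fsGroupChangePolicy: Always) changes ownership and permissions on every file each time the volume mounts. Setting fsGroupChangePolicy: OnRootMismatch skips that walk whenever the volume root already has the expected owner and permissions. The exact line is not quoted here, so treat that field as the most likely candidate rather than a confirmed diff.

Point is, it slashed mount time to seconds. Restarts? Near-instant. No more blackouts.

Tested on their Ceph setup. It works because it sidesteps the safe-but-slow per-file pass that kubelet runs on volume mounts.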
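To see why skipping a recursive permission pass matters, here is a toy model of the two documented fsGroupChangePolicy values, Always and OnRootMismatch. It is a sketch under loud assumptions: it only counts the entries a walk would visit rather than changing ownership, it compares just the group ID of the volume root (the real kubelet check also inspects permission bits), and the file names are made up.

```python
import os
import tempfile


def root_matches(root, gid):
    # Simplification: compare only the group ID of the volume root.
    return os.stat(root).st_gid == gid


def entries_touched(root, gid, policy="Always"):
    """Count the files and directories a mount-time ownership pass would visit."""
    if policy == "OnRootMismatch" and root_matches(root, gid):
        return 0  # root already correct, so the walk is skipped entirely
    touched = 0
    for _dirpath, _dirnames, filenames in os.walk(root):
        touched += 1 + len(filenames)  # the directory itself plus its files
    return touched


with tempfile.TemporaryDirectory() as volume:
    for i in range(1000):
        # Hypothetical file names standing in for the contents of a real PV.
        open(os.path.join(volume, f"plan-{i}.tfplan"), "w").close()
    gid = os.stat(volume).st_gid
    print(entries_touched(volume, gid, "Always"))          # 1001
    print(entries_touched(volume, gid, "OnRootMismatch"))  # 0
```

The work under Always grows linearly with the number of files, while OnRootMismatch stays constant once the root is correct. That is the whole difference between a 30-minute mount and a mount that takes seconds.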

But wait — my hot take? This echoes the early Linux kernel days, when ext2 defaults crushed under web server loads (remember slashdotting?). Back then, noatime mounts saved the web. Today, as IaC explodes — Terraform states multiplying like rabbits — Kubernetes needs adaptive defaults. Not just safe. Smart-safe. Predict this: by 2026, CSI drivers will auto-tune mount options based on PV inode density. Or Atlantis forks with built-in mitigations. IaC won’t scale on bandaids.

Bold? Yeah. But we’ve seen it before.

Why Does Kubernetes Default to This Pain?

Safety first: Kubernetes assumes your PVs won’t hoard millions of files. Fine for stateless apps. Killer for stateful tools like Atlantis. Ceph doesn’t expose mkfs flags, so inodes stay scarce. Resize volumes? Still slow mounts.

It’s the platform shift tradeoff. K8s abstracts storage brilliantly — until it doesn’t.

Engineers adapt. That’s us.

Will This Break Your Terraform Workflow?

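Short answer: nope, if you’re not at Atlantis scale. But check your StatefulSets. Got pods stuck in init while a PV mounts? Read the kubelet logs. If the pod sets fsGroup, try fsGroupChangePolicy: OnRootMismatch in its securityContext. Mount flags such as noatime go in mountOptions on the StorageClass or PersistentVolume, not on the pod, and on Linux noatime already covers nodiratime. Test restarts.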
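Checking your StatefulSets can be partly automated. The sketch below assumes the input is the parsed output of `kubectl get statefulsets -A -o json` and flags any StatefulSet that has volume claim templates and sets fsGroup without fsGroupChangePolicy: OnRootMismatch. The function name and the sample manifest are hypothetical, and a flagged entry is only a candidate to investigate, not proof of a slow mount.

```python
import json


def slow_mount_candidates(statefulset_list):
    """Return (namespace, name) for StatefulSets that may hit recursive chown on mount."""
    flagged = []
    for item in statefulset_list.get("items", []):
        spec = item.get("spec", {})
        pod_spec = spec.get("template", {}).get("spec", {})
        security = pod_spec.get("securityContext") or {}
        has_claims = bool(spec.get("volumeClaimTemplates"))
        # An unset policy behaves like "Always", the Kubernetes default.
        policy = security.get("fsGroupChangePolicy", "Always")
        if has_claims and "fsGroup" in security and policy != "OnRootMismatch":
            meta = item.get("metadata", {})
            flagged.append((meta.get("namespace"), meta.get("name")))
    return flagged


# Hypothetical, trimmed-down example of what kubectl would return.
sample = json.loads("""
{"items": [{
  "metadata": {"namespace": "atlantis", "name": "atlantis"},
  "spec": {
    "volumeClaimTemplates": [{"metadata": {"name": "atlantis-storage"}}],
    "template": {"spec": {"securityContext": {"fsGroup": 1000}}}
  }
}]}
""")
print(slow_mount_candidates(sample))
```

In practice you would feed it real data, for example by saving the kubectl output to a file and loading it with `json.load`.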

For git-sync heavy setups? Same pain. Proactive resize before inode exhaustion.

And for futurists like me? This screams: AI-driven ops next. Imagine an agent scanning your PV, auto-patching YAML. Platform shift incoming.

Tracking It Down: The Kubelet Deep Dive

Pod scheduled to node 36com1167.cfops.net. Pulling git-sync v4.1.0 — snappy. Then silence.

Kubelet log gold:

[operation_generator.go:664] "MountVolume.MountDevice succeeded…"

The device-level mount succeeds. But pod sync fails: deadline exceeded on unmounted volumes. The PV’s directory tree, vast and file-stuffed, overwhelms the per-pod setup step that follows the device mount.

Solution crystallized: intervene at mount time.

One line. Boom.
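If the mount-time intervention is the pod-level fsGroupChangePolicy field (an assumption; this article does not quote the exact line), the change can be expressed as a JSON merge patch against the StatefulSet. The helper below is a hypothetical sketch that only builds the patch body; it does not talk to a cluster.

```python
import json

VALID_POLICIES = ("Always", "OnRootMismatch")


def fsgroup_policy_patch(policy="OnRootMismatch"):
    """Build a merge patch that sets fsGroupChangePolicy on a StatefulSet's pod template."""
    if policy not in VALID_POLICIES:
        raise ValueError(f"policy must be one of {VALID_POLICIES}")
    return {
        "spec": {
            "template": {
                "spec": {
                    "securityContext": {"fsGroupChangePolicy": policy}
                }
            }
        }
    }


print(json.dumps(fsgroup_policy_patch()))
```

The printed JSON can be passed to `kubectl patch statefulset <name> -n <namespace> --type merge -p '<json>'`. For the pod in the logs above, the namespace and StatefulSet name would presumably both be atlantis, inferred from pod="atlantis/atlantis-0". Changing the pod template rolls the pod, so expect one more restart before the benefit shows up.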

Real-World Ripple: Beyond Atlantis

Dozens of repos. GitLab MRs flowing. Now smoothly. On-call sleeps better.

Scale this thinking. Your Jenkins? ArgoCD? Any StatefulSet with file-heavy PVs? Audit ‘em.

It’s not hype — corporate or otherwise. Pure engineering win.

Wonder what else hides in those defaults?

Frequently Asked Questions

What caused slow Atlantis restarts in Kubernetes?

Millions of files on the PV triggered long directory scans during kubelet mounts — a safe Kubernetes default that scales poorly.

How to fix Kubernetes PV mount delays for StatefulSets?

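Find out what kubelet does at mount time first. If the pod sets fsGroup, set fsGroupChangePolicy: OnRootMismatch in the pod securityContext so kubelet skips the recursive ownership change. Mount flags like ‘noatime’ belong in mountOptions on the StorageClass or PersistentVolume, not in volumeMounts. Restart and test.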

Does this Kubernetes fix work with Ceph storage?

Yes — targets the mount behavior, not the backend. Tune for your CSI driver.