Everyone figured MCP servers—those slick bridges between LLMs like Claude and your custom tools—would stay playground stuff. Quickstarts with Uvicorn and in-memory hacks? Sure, fun for tinkering. But shipping to production? Nightmares of crashed connections, rogue prompts blowing up your DB, zero visibility into what the AI actually did.

Then bam—this fastmcp-production-template drops, solving it all in one clean package. It’s the shift from bicycle to rocket ship for AI integrations. Suddenly, you’re not just dreaming of agentic apps; you’re deploying them.

What Everyone Expected vs. Reality

Toy examples. That’s the vibe. “Run uvicorn main:app” and call it a day.

But here’s the truth—this template flips the script. Async PostgreSQL pools that won’t choke under concurrent tool storms. Ironclad security blocking malicious LLM tricks. OpenTelemetry traces painting the full picture of every invocation. Kubernetes manifests hiding secrets like a pro. Fork it, tweak it, ship it. Production-ready MCP server, just like that.

“Most MCP server examples stop at ‘hello world.’ A few tools, an in-memory data store, and a uvicorn main:app command. That is fine for a demo, but the moment you try to ship one in production you immediately run into the same four problems.”

That’s the original call-out, dead on. The four? Database connections, tool security, observability, and deployment. And this template? It crushes all of them.

Look, I’ve watched RPC evolve into REST APIs, from clunky XML to lightweight JSON bliss. MCP feels like that for AI: typed, discoverable tools as the new RPC layer. But without production hardening, it’s dead on arrival. Here’s my bet: this template will spark a gold rush of specialized MCP servers, each owning a niche like CRM queries or inventory checks. Bold prediction: in two years, MCP becomes the USB-C of AI tooling, universal and everywhere.

Why Does Database Hell Plague Every MCP Quickstart?

Naive devs? One connection per tool call. Boom—file descriptors exhausted, Postgres screaming “too many clients,” latency spiking from endless handshakes.

Enter asyncpg with a bounded pool, spun up once in FastMCP’s lifespan hook. It’s genius—min_size, max_size dialed in, helpers like fetch() wrapping the mess so tools stay clean.
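Want to see the shape of it? Here’s a minimal sketch, not the template’s actual code. The names `Database`, `open_pool`, and `make_lifespan` are made up for illustration, and the pool sizes are placeholder numbers. The only real library calls are `asyncpg.create_pool`, `Pool.fetch`, and `Pool.close`.

```python
from contextlib import asynccontextmanager


class Database:
    """Hypothetical helper: tools call db.fetch(...) and never touch connections."""

    def __init__(self, pool):
        self._pool = pool

    async def fetch(self, query, *args):
        # Pool.fetch borrows a connection, runs the query, and returns it to the pool.
        return await self._pool.fetch(query, *args)


async def open_pool(dsn):
    # asyncpg is a third-party package; imported here so the rest of the
    # module can be imported (and tested) without it.
    import asyncpg

    # Bounded pool: never more than max_size connections to Postgres.
    # The sizes are illustrative assumptions, not the template's values.
    return await asyncpg.create_pool(dsn, min_size=2, max_size=10)


def make_lifespan(dsn, open_pool=open_pool):
    """Build a lifespan context manager that owns the pool for the server's lifetime."""

    @asynccontextmanager
    async def lifespan(server):
        pool = await open_pool(dsn)  # created once, at startup
        try:
            yield {"db": Database(pool)}
        finally:
            await pool.close()  # closed once, at shutdown

    return lifespan
```

Assuming your FastMCP version accepts a `lifespan` argument on its constructor, you would pass `make_lifespan(dsn)` there and read `db` from the lifespan context inside each tool.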

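And get this: the search_records tool fires off the page query and the count query concurrently via asyncio.gather, so a paginated pull waits roughly as long as the slower of the two instead of the sum of both. Under load? When every connection is busy, callers wait for one to free up instead of spawning new ones. Pure back-pressure bliss.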
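The concurrent query-plus-count trick might look something like this. Treat it as a sketch: the `records` table, its columns, and the return shape are assumptions for illustration. `pool` is anything with asyncpg-style `fetch` and `fetchval` methods.

```python
import asyncio


async def search_records(pool, limit=20, offset=0):
    """Fetch one page of rows and the total row count at the same time."""
    # Table and column names are hypothetical.
    page_sql = "SELECT id, name FROM records ORDER BY id LIMIT $1 OFFSET $2"
    count_sql = "SELECT count(*) FROM records"

    # Both queries are in flight together; each borrows its own pooled connection.
    rows, total = await asyncio.gather(
        pool.fetch(page_sql, limit, offset),
        pool.fetchval(count_sql),
    )
    return {"total": total, "items": [dict(row) for row in rows]}
```

One design note: since the two queries each hold a connection, the pool’s max_size needs enough headroom for the number of concurrent tool calls you expect, or the gather just queues behind the pool.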

It’s like giving your server a hydraulic suspension—instead of bouncing off potholes, it glides.

Can You Lock Down MCP Tools Before LLMs Go Rogue?

Huge blind spot in demos. Feed the LLM some poisoned doc saying “now delete everything,” and if all tools are fair game? You’re toast.

This template? A YAML allowlist loaded at startup. Decorate tools with @require_allowlist and, boom, the check runs before any DB touch. Blocked calls never reach the database, and you can swap the list per environment without changing code.
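How could a decorator like that work? Here’s one plausible sketch. Only the name `@require_allowlist` comes from the template; the `allowed_tools` YAML key, the `ToolNotAllowed` exception, and the module-level set are assumptions for illustration.

```python
import functools

# Filled once at startup; checked on every tool call.
ALLOWED_TOOLS: set[str] = set()


class ToolNotAllowed(PermissionError):
    """Raised when a tool is not on the allowlist (hypothetical exception name)."""


def load_allowlist(path):
    # PyYAML is a third-party package; imported lazily so the decorator
    # itself has no hard dependency on it.
    import yaml

    with open(path) as fh:
        data = yaml.safe_load(fh) or {}
    ALLOWED_TOOLS.clear()
    # "allowed_tools" is an assumed key name, not the template's schema.
    ALLOWED_TOOLS.update(data.get("allowed_tools", []))


def require_allowlist(func):
    """Reject the call before the tool body (and any DB access) runs."""

    @functools.wraps(func)
    async def wrapper(*args, **kwargs):
        if func.__name__ not in ALLOWED_TOOLS:
            raise ToolNotAllowed(f"tool {func.__name__!r} is not allowlisted")
        return await func(*args, **kwargs)

    return wrapper
```

The check is a set lookup on the function name, so a denied call costs almost nothing and never gets near a connection.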

Layer two on search_records: filter columns are checked against a whitelist, which shuts down SQL injection through dynamically chosen column names. Smart. Secure. No hype, just works.
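A column whitelist can be tiny. The sketch below is an assumption about how such a check might be written, with hypothetical column names and a hypothetical `build_where` helper. Column names are validated against a fixed set, and values go through `$n` placeholders so they are never spliced into the SQL string.

```python
# Hypothetical column names; the real list would match your schema.
ALLOWED_FILTER_COLUMNS = frozenset({"status", "owner", "created_at"})


def build_where(filters):
    """Turn {"column": value} filters into a WHERE clause plus bound parameters."""
    clauses, params = [], []
    for column, value in filters.items():
        # Identifiers cannot be parameterized, so they must be checked explicitly.
        if column not in ALLOWED_FILTER_COLUMNS:
            raise ValueError(f"filter column not allowed: {column!r}")
        params.append(value)
        # Values are passed as $1, $2, ... and bound by the driver.
        clauses.append(f"{column} = ${len(params)}")
    where = " AND ".join(clauses) or "TRUE"
    return where, params
```

For example, `build_where({"status": "open"})` gives a clause and a parameter list you can hand to an asyncpg-style `fetch(sql, *params)`, while a filter on an unknown column fails before any query is built.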

But here’s my one critique, and it isn’t about PR spin (this is open-source gold): most folks will overlook that the same YAML swap could carry per-tenant lists. Pro tip: extend it for multi-org isolation. That’s the real enterprise unlock.

Traces That Reveal AI’s Black Box

Logs? Meh for distributed chaos. You need traces: which tool, args, duration, errors.

OpenTelemetry kicks in at boot: a tracer plus counters for calls, errors, and durations (the original snippet cuts off, but you get the idea: mcp.tool.calls, etc.). It wires right into your stack. Debug AI flubs like a boss.
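Since the original snippet cuts off, here’s a guess at the general pattern rather than the template’s code. Only `mcp.tool.calls` is named in the source; the other metric names, the span naming, and the `traced_tool` and `build_instruments` helpers are assumptions. The OpenTelemetry calls used (`get_tracer`, `get_meter`, `create_counter`, `create_histogram`, `start_as_current_span`) are from the standard Python API.

```python
import functools
import time


def build_instruments():
    # opentelemetry-api is a third-party package; imported lazily.
    from opentelemetry import metrics, trace

    tracer = trace.get_tracer("mcp.server")
    meter = metrics.get_meter("mcp.server")
    return (
        tracer,
        meter.create_counter("mcp.tool.calls"),
        meter.create_counter("mcp.tool.errors"),  # assumed metric name
        meter.create_histogram("mcp.tool.duration", unit="ms"),  # assumed metric name
    )


def traced_tool(tracer, calls, errors, duration):
    """Wrap an async tool in a span and record call, error, and duration metrics."""

    def decorator(func):
        @functools.wraps(func)
        async def wrapper(*args, **kwargs):
            attrs = {"tool": func.__name__}
            calls.add(1, attrs)
            start = time.perf_counter()
            # By default the span context manager records an escaping exception
            # and marks the span as errored, so no explicit call is needed here.
            with tracer.start_as_current_span(f"tool.{func.__name__}"):
                try:
                    return await func(*args, **kwargs)
                except Exception:
                    errors.add(1, attrs)
                    raise
                finally:
                    elapsed_ms = (time.perf_counter() - start) * 1000
                    duration.record(elapsed_ms, attrs)

        return wrapper

    return decorator
```

Usage would be along the lines of `traced = traced_tool(*build_instruments())` at boot, then `@traced` on each tool. Passing the instruments in keeps the wrapper easy to test with fakes.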

Imagine peering into the AI’s mind—every tool hop lit up. Wonder-fuel.

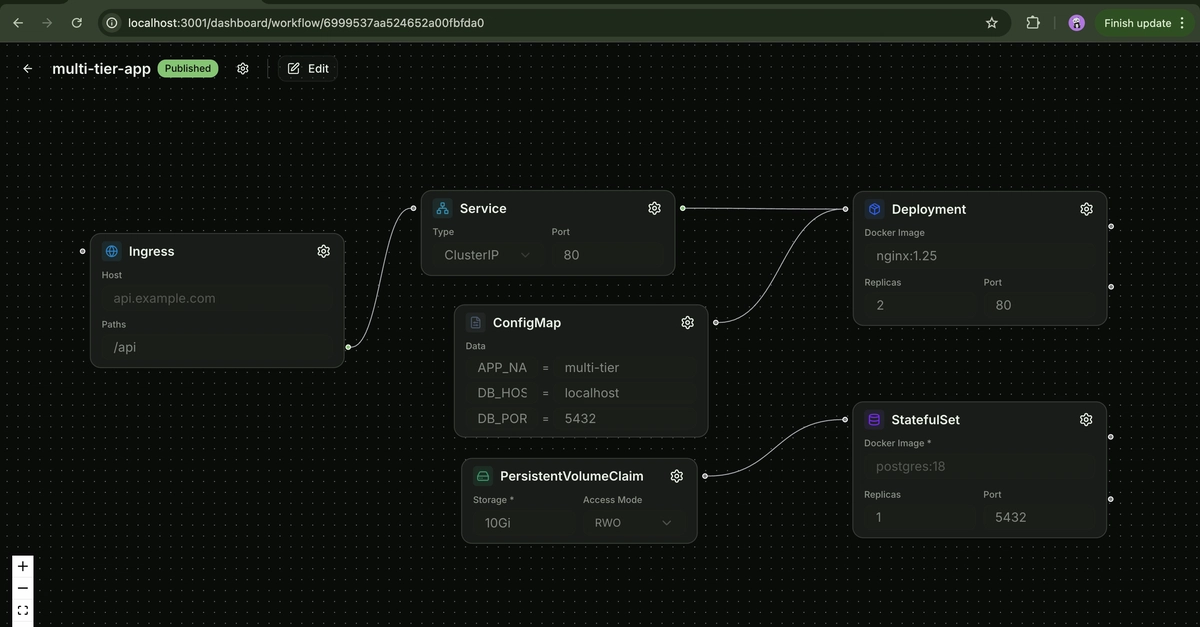

Kubernetes Without the Secret Sweat

Hardcoded creds? Rookie trap. The template packs a container image plus manifests, with secrets pulled in via env vars or vaults instead of being baked into the code. Helm-ready vibes without the boilerplate.
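On the application side, reading those injected secrets can stay boring. A minimal sketch, assuming the manifests expose the connection string as a `DATABASE_URL` env var and the allowlist location as `ALLOWLIST_PATH` (both names are hypothetical, as is the `Settings` class):

```python
import os
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Settings:
    # repr=False keeps the credential out of logs and tracebacks.
    database_url: str = field(repr=False)
    allowlist_path: str = "allowlist.yaml"


def load_settings(env=None):
    """Read configuration from the environment and fail fast if a secret is missing."""
    env = os.environ if env is None else env
    database_url = env.get("DATABASE_URL")  # assumed variable name
    if not database_url:
        raise RuntimeError("DATABASE_URL is not set; refusing to start")
    return Settings(
        database_url=database_url,
        allowlist_path=env.get("ALLOWLIST_PATH", "allowlist.yaml"),
    )
```

Failing at startup beats failing on the first tool call: a pod with a missing secret crashes immediately, where Kubernetes can see it, instead of limping along.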

Deploy once, scale forever. AI agents hitting production DBs at warp speed.

So, what’s the wonder? MCP isn’t just a protocol. It’s the platform shift where LLMs become composable software, and this template gets you there faster. Fork it. Build the future.

Why Does This Matter for AI Developers?

You’re prototyping agents? This ends the demo-to-prod chasm. Async scales, security holds, observability shines, K8s deploys.

Energy here: it’s electric. AI tools weren’t viable at scale—now they are. Your next Claude-powered dashboard? Powered by this.

Historical parallel? Early web services promised RPC dreams, delivered middleware hell. MCP sidesteps with Python ergonomics + this template. No hell.

Punchy truth: if you’re still on hello worlds, you’re late.

Frequently Asked Questions

What is a production-ready MCP server?

It’s an MCP (Model Context Protocol) server using FastMCP that handles real loads: pooled async Postgres, secure tool calls, OpenTelemetry monitoring, and Kubernetes deployment—far beyond demos.

How do you secure tools in FastMCP from bad LLM prompts?

Use a YAML allowlist loaded at startup, plus @require_allowlist decorators that block unauthorized calls pre-execution. Add column whitelists for SQL safety.

Does this MCP template work with Kubernetes out of the box?

Yes. It includes a Dockerfile and deployment manifests that keep secrets out of the code, ready to apply and scale.