Deploy button clicked. Boom — servers spin up, traffic floods in. But wait, something’s off. Errors spike. And then? No frantic Slack pings. No all-hands fire drill. Instead, AI agents leap into action, dissecting logs, pinpointing the culprit code, drafting a fix, and slapping a PR on GitHub. All in minutes.

That’s not sci-fi. That’s Vishnu Suresh’s self-healing pipeline for LangChain’s GTM Agent, live in production right now.

What the Hell Just Happened?

Zoom out. We’re in the thick of AI’s great platform shift — think electricity replacing steam, but for brains. Coding agents aren’t toys anymore; they’re the new operating system for software teams. Vishnu, a LangChain engineer, saw the bottleneck: shipping code is easy. Keeping it alive? Nightmare fuel. His fix? A pipeline that turns deployments into self-correcting machines.

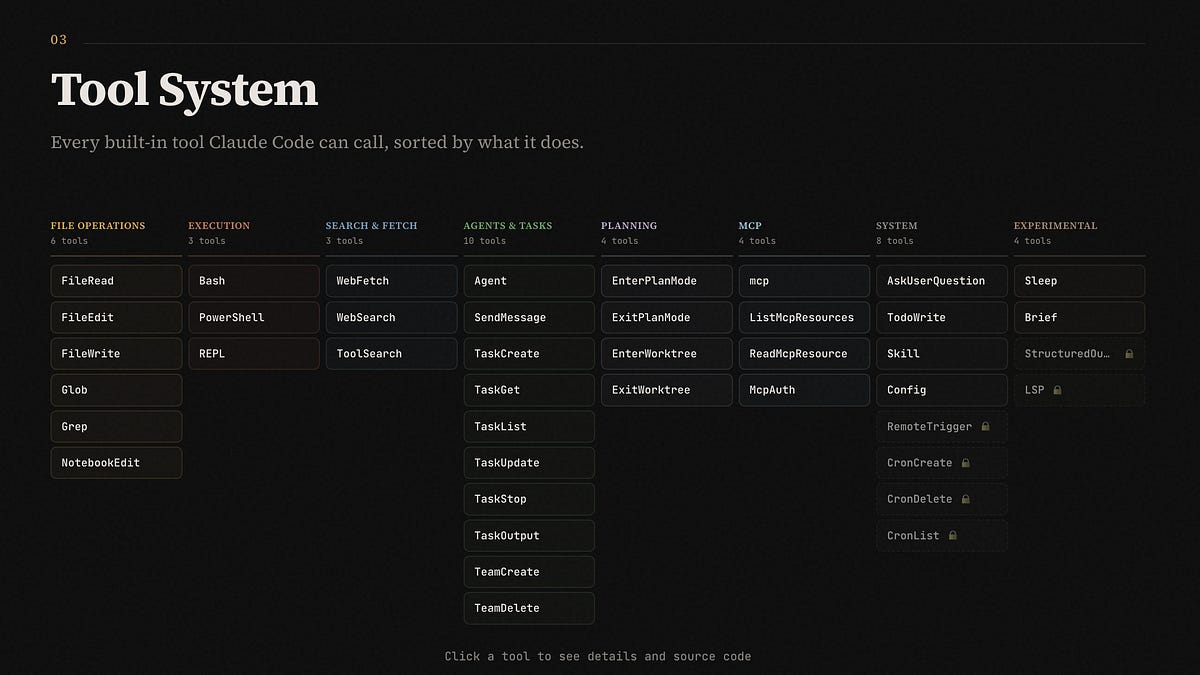

It starts post-deploy. A GitHub Action fires and grabs the logs. Two paths: build fails? Instant handoff to Open SWE, LangChain’s open-source coding beast. Server errors? Smarter game.
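To make the fork concrete, here's a minimal routing sketch. The `DeployOutcome` fields and `Route` names are hypothetical; the post describes the two paths, not the Action's actual code.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    HANDOFF_TO_OPEN_SWE = "handoff_to_open_swe"    # build broke: send logs straight to the coding agent
    RUN_REGRESSION_CHECK = "run_regression_check"  # build ok: watch server errors first


@dataclass
class DeployOutcome:
    # Hypothetical fields; the real Action's payload isn't described in the post.
    build_succeeded: bool
    build_log: str = ""


def route(outcome: DeployOutcome) -> Route:
    """Pick one of the two post-deploy paths."""
    if not outcome.build_succeeded:
        # A failed build points at the latest commit, so skip the statistics.
        return Route.HANDOFF_TO_OPEN_SWE
    return Route.RUN_REGRESSION_CHECK
```

Build failures skip straight to the fixer because the blame is already narrow; only a successful build needs the statistical watch.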

Baseline errors from the past 7 days get squished into signatures: regex magic strips UUIDs, timestamps, and other junk, then truncates each one to 200 chars. Boom, buckets of error types.
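A minimal sketch of that normalization step, assuming plain-text log messages. The regex patterns and the `error_signature` name are illustrative stand-ins; the pipeline's real patterns aren't published. Only the 200-character cap comes from the post.

```python
import re

# Illustrative patterns only, applied in order: UUIDs, timestamps, hex, then bare numbers.
_PATTERNS = [
    (re.compile(r"\b[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}\b"), "<uuid>"),
    (re.compile(r"\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}(?:\.\d+)?(?:Z|[+-]\d{2}:?\d{2})?"), "<ts>"),
    (re.compile(r"0x[0-9a-fA-F]+"), "<hex>"),
    (re.compile(r"\d+"), "<n>"),
]

MAX_SIGNATURE_LEN = 200  # the 200-character cap mentioned in the post


def error_signature(message: str) -> str:
    """Collapse a raw error message into a stable signature for bucketing."""
    sig = message
    for pattern, placeholder in _PATTERNS:
        sig = pattern.sub(placeholder, sig)
    sig = " ".join(sig.split())  # squash whitespace runs
    return sig[:MAX_SIGNATURE_LEN]
```

Two timeouts for different users at different times now land in the same bucket: `"Timeout for user <uuid> at <ts>"`. Order matters: stripping UUIDs before bare digits keeps them from being shredded into `<n>` fragments.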

Then poll the logs for the next hour and compare against the baseline with a Poisson test (yeah, probability distributions, because why not flex some stats?). If a spike screams ‘regression’ (p < 0.05), or brand-new errors pile up, flag it.
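Here's a hedged sketch of an upper-tail Poisson test, assuming you already have a per-signature count for the post-deploy hour and an expected count from the baseline. The function names are hypothetical; only the p < 0.05 threshold comes from the post. Terms are computed in log space so high-volume signatures don't underflow.

```python
import math


def poisson_tail(k: int, lam: float) -> float:
    """P(X >= k) for X ~ Poisson(lam), computed as 1 - P(X <= k - 1).

    Each pmf term is built in log space (via lgamma) so large baselines
    don't underflow exp(-lam) to zero.
    """
    if k <= 0:
        return 1.0
    log_lam = math.log(lam)
    cdf = math.fsum(
        math.exp(-lam + i * log_lam - math.lgamma(i + 1)) for i in range(k)
    )
    return max(0.0, 1.0 - cdf)


def is_regression(observed: int, expected: float, alpha: float = 0.05) -> bool:
    """Flag a signature whose post-deploy count is improbably high for its baseline."""
    if expected <= 0:
        # Never seen in the baseline: that's the "new error" path, not this test.
        return False
    return poisson_tail(observed, expected) < alpha
```

With an expected 2 errors per hour, seeing 8 gives p ≈ 0.001 and gets flagged; seeing 2 is business as usual.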

But here’s the genius guardrail — a triage agent. No dumping raw chaos on the fixer. It scans the git diff, classifies changes (runtime? Tests? Docs?). Demands causal proof: ‘Does this line in the diff birth that error?’ Only green-lights real links.
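The triage agent itself is an LLM, but a cheap deterministic prefilter shows what the runtime/tests/docs split means in practice. The path rules and function names below are hypothetical, not the pipeline's.

```python
from pathlib import PurePosixPath

# Hypothetical path rules; the post says changes are classified as runtime,
# tests, or docs, but not how.
DOC_SUFFIXES = {".md", ".rst", ".txt"}


def classify_change(path: str) -> str:
    p = PurePosixPath(path)
    if p.suffix in DOC_SUFFIXES or "docs" in p.parts:
        return "docs"
    if "tests" in p.parts or p.name.startswith("test_") or p.name.endswith("_test.py"):
        return "tests"
    return "runtime"


def runtime_changes(changed_files: list[str]) -> list[str]:
    """Keep only files that could plausibly change production behavior."""
    return [f for f in changed_files if classify_change(f) == "runtime"]
```

Anything that survives this filter is a candidate for the causal question; a docs-only diff never reaches it.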

Then Open SWE, built on Deep Agents, dives in: researches, codes, opens a PR. Loop closed. Humans? Optional spectators.

“With coding agents, the hard part of shipping isn’t getting code out. It’s everything after: figuring out if your last deploy broke something, investigating what caused the issue, and fixing it before users notice.”

Vishnu nails it. That’s the quote that hooked me — pure truth serum for every dev who’s watched a deploy turn into a dumpster fire.

Why This Feels Like Biological Evolution

Think immune system. Your body doesn’t wait for a doctor when viruses hit; white blood cells swarm, tag intruders, neutralize. Vishnu’s pipeline? Digital antibodies for codebases.

A twist the original post glosses over: this echoes early aviation autopilots in the 1920s. Planes veered off course? Gyroscopes self-corrected. No pilots wrestling sticks. Today, that’s mundane. Tomorrow? Self-healing deploys will be too. Bold call: by 2026, 50% of AI-heavy teams ditch manual triage. LangChain’s not hyping; they’re blueprinting the norm.

Servers aren’t Poisson-perfect: traffic spikes and flaky APIs produce correlated bursts of errors, which a Poisson model assumes away. So stats only flag suspects; triage proves guilt. Build fails? The diff is tiny and blame is obvious. Docker bombs from the last commit? The agent feasts on context and spits out a fix.
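One way to check the "not Poisson-perfect" point against your own logs is the dispersion index (variance divided by mean) of hourly error counts. The post doesn't say the pipeline computes this; it's an illustrative diagnostic.

```python
from statistics import mean, pvariance


def dispersion_index(hourly_counts: list[int]) -> float:
    """Variance-to-mean ratio of hourly error counts.

    A Poisson process gives a ratio near 1. Ratios well above 1 (overdispersion)
    mean errors arrive in correlated bursts, so a plain Poisson test will flag
    more "regressions" than it should.
    """
    m = mean(hourly_counts)
    if m == 0:
        return 0.0
    return pvariance(hourly_counts) / m
```

Twelve errors spread evenly over four hours score 0; the same twelve crammed into one hour score 9. That second pattern is exactly where a causal triage step earns its keep.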

Deep Agents power it all: async, codebase-savvy. LangSmith deploys the GTM Agent (GTM as in go-to-market). Open SWE? The open-source hero that writes the PRs.

How Do Self-Healing Agents Dodge False Alarms?

Trickiest bit: noise. Prod is an error soup of timeouts and flaky vendors. Trigger on naive count spikes? That’s a recipe for agent hallucinations and pointless PRs.

Vishnu’s Poisson play: compute a baseline rate λ per hour for each signature from the 7 days of history, then scale it to the 60-minute window. Deviate hard? Sus. New signatures that keep repeating? Sus.
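A minimal sketch of the rate scaling and the new-signature check, assuming signature counts live in `Counter`s. The 168-hour baseline and 60-minute window come from the post; `MIN_NEW_REPEATS` and the function names are hypothetical.

```python
from collections import Counter

BASELINE_HOURS = 7 * 24   # 7-day baseline = 168 hours
WINDOW_HOURS = 1.0        # 60-minute post-deploy window
MIN_NEW_REPEATS = 3       # hypothetical: how often a never-seen signature must recur


def expected_counts(baseline: Counter) -> dict[str, float]:
    """Per-signature lambda for the post-deploy window: hourly rate times window length."""
    return {sig: n / BASELINE_HOURS * WINDOW_HOURS for sig, n in baseline.items()}


def new_repeating_signatures(baseline: Counter, window: Counter) -> list[str]:
    """Signatures absent from the baseline that keep showing up after the deploy."""
    return sorted(
        sig for sig, n in window.items()
        if baseline[sig] == 0 and n >= MIN_NEW_REPEATS
    )
```

A signature seen 336 times over the week gets λ = 2 for the hour, which then feeds a Poisson tail test. A one-off new error stays quiet; one that repeats gets flagged.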

The triage agent (Deep Agents again) holds veto power. It only counts changed runtime files, and each one has to be causally linked to an error or it’s out. Its structured output: verdict, confidence, reasoning, pinned errors. Open SWE gets laser focus, not a landfill.

No runtime touches? Hard pass. Docs tweak causing prod bug? Agent laughs it off. False positives crushed.
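Here's a hedged sketch of that gate: a dataclass for the structured output the post describes, plus a dispatch check. The field types, the `min_confidence` threshold, and the function names are assumptions; the post names the fields but not their shapes.

```python
from dataclasses import dataclass, field
from typing import Literal


# Hypothetical shape of the triage agent's structured output.
@dataclass
class TriageResult:
    verdict: Literal["causal", "not_causal"]
    confidence: float                      # assumed to be in [0, 1]
    reasoning: str
    pinned_errors: list[str] = field(default_factory=list)


def should_dispatch(result: TriageResult, runtime_files: list[str],
                    min_confidence: float = 0.7) -> bool:
    """Hand work to the coding agent only when the verdict is causal and grounded."""
    if not runtime_files:
        return False  # no runtime change in the diff: hard pass
    return (result.verdict == "causal"
            and result.confidence >= min_confidence
            and bool(result.pinned_errors))
```

Requiring pinned errors as well as a causal verdict means the fixer always receives specific signatures to chase, never a vague "something broke".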

And if Open SWE hallucinates? PRs are reviewable. Humans stay in the loop as a safety net, for now.

Post-deploy window: 60 minutes. Why? Goldilocks: it catches fresh regressions and ignores the eternal, always-present errors already baked into the baseline. 7-day baseline: enough history to cover a full week of traffic.

Normalization: regex zaps variables. Identical errors cluster, even if UUIDs dance.

This isn’t bolt-on monitoring. It’s proactive surgery.

Is LangChain’s Self-Healing Hype or Hardware?

Skeptic hat: LangChain’s PR machine spins agents like cotton candy. But Vishnu’s X-post? Raw, technical, emoji stats flex (🙃). No vapor. Real pipeline, production-tested.

Critique: assumes agent reliability. Open SWE’s async smarts shine, but edge cases? Multi-file causal chains? Triage might miss. Still, iteration beats paralysis.

Energy here: deployments become experiments. Ship fast, agents heal. Velocity explodes. Imagine fleets of agents: one heals deploys, another optimizes prompts, a third hunts features. Platform shift accelerates.

Vivid pic: codebases as living organisms. Mutate (deploy), sense pain (errors), adapt (PRs). Darwin for devs.

LangSmith Deployments glue it all together. GTM Agent? Sales/marketing AI, self-sustaining now.

Scale this: microservices galore? Per-service baselines. Monoliths? Whole-repo triage. Agents evolve too — fine-tune on triage verdicts.

Wonder: what if users report bugs, agents triage those? Full-circle autonomy.

Why Does This Matter for Every Dev Team?

Shipping ML? Nightmare: non-determinism, prompt drift, model rot. Self-healing? Lifeline.

Traditional? CI/CD gates pre-deploy. This? Post-deploy resurrection.

Prediction: forks everywhere. Your Open SWE clone + custom triage. OSS gold rush.

Teams without it? Left in the dust. AI-first shops ship 10x, heal 10x.


Frequently Asked Questions

What is a self-healing deployment pipeline?

A post-deploy system that auto-detects bugs via logs and stats, triages causes with AI, and generates PR fixes, with no human involved until review.

How does LangChain’s agent detect regressions?

It builds a 7-day baseline of normalized error signatures, compares the 60-minute post-deploy window against it with a Poisson test, and has a triage agent demand causal proof before anything gets fixed.

Will self-healing agents replace SRE teams?

Augment, not replace. Agents handle routine regressions; humans tackle systemic issues and review PRs.