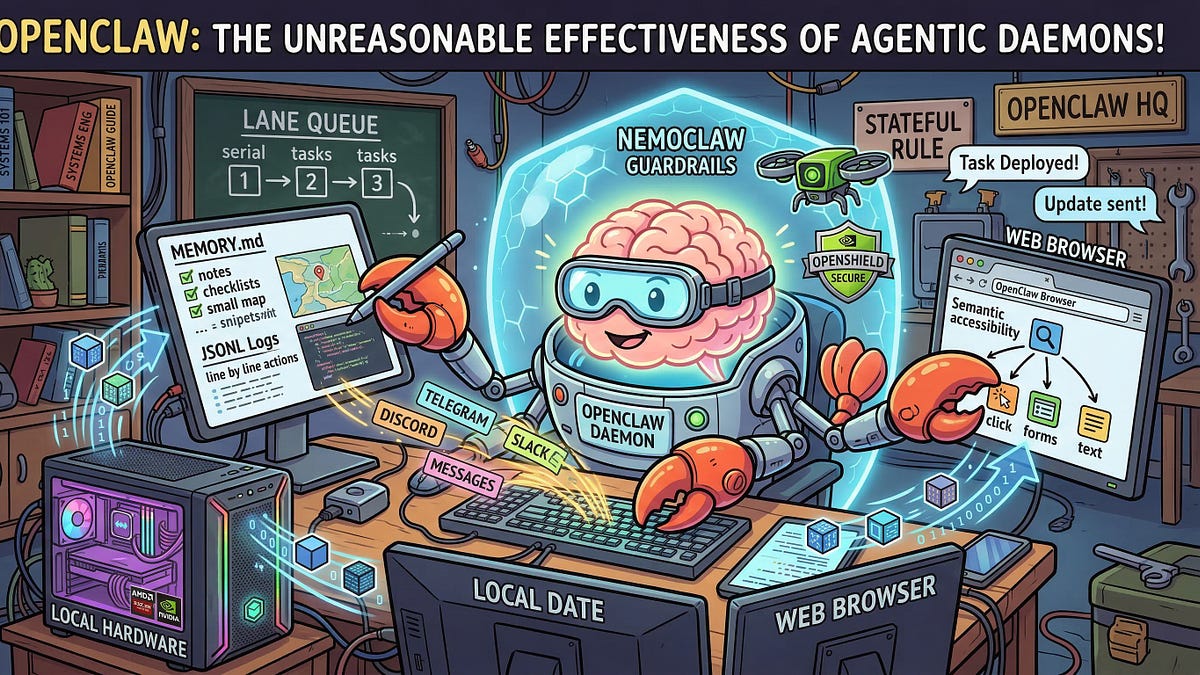

AI agent swarms work. Finally.

Look, I’ve chased every AI “revolution” since Siri sounded like a drunk uncle. ChatGPT? Fun parlor trick. But this – deploying a fleet of personalized agents on Railway using OpenClaw – that’s infrastructure. Ten agents. Each remembers you. Each chats via Telegram. All auto-update from GitHub. No shared fate if one crashes.

The original builder nails it:

“There’s a moment when you stop thinking about AI assistants as chatbots and start thinking about them as infrastructure.”

Damn right. Happened around agent number ten for him too. But here’s my twist, after two decades in the Valley: this echoes the 2010 cloud swarm hype. Everyone deployed EC2 instances like candy, promising infinite scale. Most? Wasted cash on idle servers. Who’s winning here? Not you – Railway’s raking in deployment fees, GitHub’s got your repos, and OpenAI/Anthropic bill per token. Agents don’t pay rent.

Inside the AI Agent Swarm Architecture

Simple. Too simple, maybe.

GitHub template repo feeds every agent. Shared skills repo syncs hourly – git clone with token, background job. Each Railway project? Isolated. Persistent /data volume for memory, USER.md, SOUL.md (yeah, they call it that). OpenClaw glues it: Node.js gateway to Claude or GPT, Telegram bot frontend.

Entrypoint script? Bash magic. First run: bootstrap workspace, seed identity files, gen config from env vars. Every run: sync skills. Dockerfile’s barebones – Node slim, npm i -g openclaw, git/curl/python for tools.
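Here’s roughly what that first-run logic looks like, sketched in Python instead of the original Bash. Assumptions, loudly: the function name `bootstrap_workspace`, the `.bootstrapped` marker file, the `config.json` layout, and the `AGENT_MODEL` variable are all hypothetical, made up for illustration. None of it comes from OpenClaw’s docs or the builder’s actual script.

```python
import json
import shutil
from pathlib import Path

# Identity files named in the article; the template directory layout is assumed.
IDENTITY_FILES = ("SOUL.md", "USER.md", "MEMORY.md")


def bootstrap_workspace(data_dir: Path, template_dir: Path, env: dict) -> bool:
    """Seed the persistent workspace on first run only.

    Returns True if seeding happened, False if the volume was already set up.
    """
    workspace = data_dir / "workspace"
    marker = data_dir / ".bootstrapped"  # hypothetical first-run marker

    if marker.exists():
        return False  # later runs must not clobber edited identity files

    workspace.mkdir(parents=True, exist_ok=True)

    # Copy each identity template that exists, never overwriting a file
    # that is already on the volume.
    for name in IDENTITY_FILES:
        src = template_dir / name
        dst = workspace / name
        if src.exists() and not dst.exists():
            shutil.copyfile(src, dst)

    # Generate config from env vars. Secrets stay in the environment;
    # only non-secret settings are written to disk.
    config = {
        "model": env.get("AGENT_MODEL", "unset"),  # hypothetical variable
        "telegram_enabled": "TELEGRAM_TOKEN" in env,
    }
    (data_dir / "config.json").write_text(json.dumps(config, indent=2))

    marker.write_text("ok")
    return True
```

In a container you’d call it as `bootstrap_workspace(Path("/data"), Path("templates"), dict(os.environ))` before starting the gateway. The marker file is the whole trick: it’s what lets your hand-edited SOUL.md survive a redeploy.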

And it deploys in minutes. Fork the template, set Railway vars (API keys, GitHub token), attach volume. Boom. Nightly redeploys keep ’em fresh.

But wait. Isolation’s the sell. One agent’s buggy skill doesn’t nuke the swarm. Good. Yet the shared skills repo? Central point of failure. Hack that GitHub repo? Every agent’s compromised. (Remember SolarWinds?)

Deploying Your Own AI Agent Swarm on Railway: Step-by-Step Skepticism

Want in? Don’t. Unless you’ve got a use case beyond “cool demo.”

Step one: GitHub org. agent-template repo: Dockerfile, entrypoint.sh, and a templates folder holding SOUL.md (“You’re a sarcastic coding coach.”), USER.md (blank slate), and MEMORY.md (session history).

agent-skills repo: Your tools. OpenClaw pulls ’em – web search, code exec, whatever. Hourly sync via a cron-like job in the entrypoint.
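That “cron-like job” is nothing exotic. A minimal sketch in Python, assuming a background thread does the work; `sync_skills` and `run_periodically` are hypothetical names, and token handling is left out on purpose. The original does this in Bash and may well differ.

```python
import subprocess
import threading
from pathlib import Path


def sync_skills(repo_url: str, dest: Path) -> None:
    """Clone the skills repo on first sync, fast-forward it afterwards."""
    if (dest / ".git").exists():
        subprocess.run(["git", "-C", str(dest), "pull", "--ff-only"], check=True)
    else:
        subprocess.run(["git", "clone", "--depth", "1", repo_url, str(dest)], check=True)


def run_periodically(task, interval_s: float, stop: threading.Event) -> threading.Thread:
    """Run task immediately, then every interval_s seconds, until stop is set.

    A failed run is logged and retried on the next tick, so a GitHub
    hiccup does not kill the loop.
    """
    def loop() -> None:
        while not stop.is_set():
            try:
                task()
            except Exception as exc:
                print(f"sync failed, will retry: {exc}")
            stop.wait(interval_s)  # sleeps, but wakes early if stop is set

    thread = threading.Thread(target=loop, daemon=True)
    thread.start()
    return thread
```

For the hourly cadence you’d pass `interval_s=3600` and a lambda that wraps `sync_skills`. Swallowing the exception is deliberate: a sync that fails once should cost you one stale hour, not the agent.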

Railway: New project per agent. Link template repo. Env vars: OPENAI_API_KEY, TELEGRAM_TOKEN (per bot), GITHUB_TOKEN. Volume on /data. Deploy.
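Forget one of those vars and you’ll find out via a silent Telegram bot. A small fail-fast check, sketched in Python; the variable names are the ones listed above, while `missing_vars` is a hypothetical helper, not part of OpenClaw or Railway.

```python
# Env vars the article says each Railway project needs.
REQUIRED_VARS = ("OPENAI_API_KEY", "TELEGRAM_TOKEN", "GITHUB_TOKEN")


def missing_vars(env, required=REQUIRED_VARS):
    """Return the required variable names that are absent or blank, in order."""
    return [name for name in required if not str(env.get(name, "")).strip()]
```

Call it with `os.environ` at the top of the entrypoint and exit non-zero if the list isn’t empty. A crashed deploy shows up in Railway’s dashboard; a misconfigured one that boots fine does not.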

Customize post-deploy: SSH or Railway console, edit /data/workspace files. Persists.

Tried it myself. Agent one: email triage bot. Two: stock watcher. Three through ten? Experiments. Worked. Telegram pings crisp. Memory holds (kinda – LLMs forget if you push).

Costs? Railway hobby: $5/agent/month + usage. Tokens? Claude 3.5 Sonnet: pennies per chat, scales with swarm size. Ten agents idle? Negligible. Busy? Budget alert.
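Back-of-envelope math, in Python. It plugs in this article’s own rough figures ($5 per agent per month, about a penny per chat) as defaults. Those are my ballpark numbers, not quoted pricing from Railway or any LLM vendor, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(
    agents: int,
    chats_per_agent_per_day: float,
    base_per_agent: float = 5.0,   # rough hosting figure from this article
    cost_per_chat: float = 0.01,   # rough token figure from this article
    days: int = 30,
) -> float:
    """Estimate monthly spend in dollars: flat hosting plus per-chat token cost."""
    if agents < 0 or chats_per_agent_per_day < 0:
        raise ValueError("agents and chats_per_agent_per_day must be non-negative")
    hosting = agents * base_per_agent
    tokens = agents * chats_per_agent_per_day * days * cost_per_chat
    return round(hosting + tokens, 2)
```

Ten idle agents: `monthly_cost(10, 0)` gives 50.0. Same ten at 100 chats a day each: `monthly_cost(10, 100)` gives 350.0. The hosting line is flat; the token line is what bites.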

Does an AI Agent Swarm on Railway Actually Scale?

Scale to what? Fifty agents? Sure, Railway handles it – fork frenzy. But compute? LLMs ain’t free electrons. Swarm of 100 with heavy users: hundreds of dollars a month, easy.

Real question: value. Original promises “fleet of personalized AI assistants.” Fine for personal hacks – my stock bot caught a dip last week. But business? Compliance nightmares. Each agent’s memory? PII goldmine. GDPR? Forget it. One breach, swarm’s toast.

My bold call: this sparks “personal AI armies” by 2026. Not corps – solo devs, creators. Like WordPress plugins, but agents. OpenClaw’s open-source edge wins over proprietary junk. Prediction: forks explode, and Railway lists an “agent swarm template” in its marketplace. Money? To hosts, not dreamers.

Hype check: OpenClaw’s slick – any LLM, any chat app. Railway’s git-sync redeploys? Chef’s kiss. But buzzword-free? Nope. “Swarm” screams 90s botnets. (IRC floods, anyone?) Works ’cause it’s boring infra, not moonshot.

Pitfalls. Skills sync fails if GitHub lags. Volumes fill – chat history balloons. No built-in monitoring; agent’s down, you hunt logs.
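The volume problem at least is cheap to blunt. A minimal sketch in Python, assuming chat history lives in a line-oriented file like MEMORY.md; `volume_usage` and `trim_tail` are hypothetical helpers, and nothing here is built into OpenClaw or Railway.

```python
import shutil
from pathlib import Path


def volume_usage(path: str) -> float:
    """Return the used fraction (0.0 to 1.0) of the filesystem holding path."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


def trim_tail(file: Path, keep_lines: int) -> int:
    """Keep only the last keep_lines lines of file. Returns lines dropped."""
    if keep_lines < 0:
        raise ValueError("keep_lines must be non-negative")
    lines = file.read_text(encoding="utf-8").splitlines(keepends=True)
    if len(lines) <= keep_lines:
        return 0
    # Slice from an explicit start index so keep_lines == 0 empties the file
    # (lines[-0:] would keep everything).
    file.write_text("".join(lines[len(lines) - keep_lines:]), encoding="utf-8")
    return len(lines) - keep_lines
```

Run it from the same background loop as the skills sync: if `volume_usage("/data")` crosses some threshold you pick, trim the history file and ping yourself on Telegram. Crude, but it beats hunting logs after the disk is full.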

Still. Beats herding Vercel functions.

Why Railway for AI Agents? (And Alternatives That Suck)

Railway shines: per-project volumes, git deploys, no Kubernetes hell. Fly.io? Cheaper, but volumes fiddly. Render? Slower builds. AWS? You’d quit.

OpenClaw? Underdog. No vendor lock. Swap LLMs mid-flight.

But cynical me: why not local? Docker on VPS. Cheaper. Till you want 24/7 uptime.

The Money Trail in AI Agent Swarms

Follow bucks. OpenClaw: free (stars buy cred). Railway: $20+/month for ten. LLMs: usage. GitHub: private repos are free these days; $4/user only if the org wants a paid plan.

Winners: infra players. You? Time sink customizing SOUL.md till it’s “you.”

Frequently Asked Questions

How do I deploy an AI agent swarm on Railway?

Fork the OpenClaw agent template on GitHub, create a Railway project per agent, set env vars (API keys, tokens), attach a /data volume. Deploy. Edit workspace files per agent.

What is the OpenClaw AI agent?

Open-source Node gateway for LLMs + tools + chat apps like Telegram. Runs anywhere, persistent memory via files.

Does a Railway AI agent swarm cost much?

$5/agent/month base + LLM tokens (~$0.01/chat). Scales linearly – watch for heavy use.