SmartReview processes 5,000 product mentions daily. Matches them across 50+ review sites. Hits 94.2% accuracy.

Impressive? Sure. Revolutionary? Please. Entity resolution at scale has plagued data engineers forever—think Google’s early search woes, or Netflix fumbling user profiles. But SmartReview sidesteps the usual six-month ML slog with old-school smarts. Normalization. Fuzzy matching. External checks. It’s like fixing a leaky roof with duct tape and a ladder. Works. Cheap. Scalable.

And here’s the kicker—they boast no custom ML model. Bold claim in 2024, when everyone’s slinging LLMs at everything. But dig in: their pipeline’s three-pronged attack catches what string matching misses.

First, normalization. They scrub names: lowercase, strip ‘new’ or ‘review’ fluff, yank dashes. Extract brand from a lookup list—Apple stays Apple. Spot generations via regex wizardry: ‘2nd gen’, trailing numbers. Variants like USB-C get pulled. What’s left? Model family: ‘airpods pro’.

“AirPods Pro 2,” “Apple AirPods Pro (2nd Generation),” “AirPods Pro USB-C”: same product, three different names.

That’s their opener. Spot on. Naive devs would choke here.
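Here's roughly what that scrub could look like in Python. A minimal sketch, not SmartReview's code: the brand list, filler words, variant list and the `normalize` function itself are my own placeholders, since the real lookup tables aren't published.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Illustrative lookup data (assumed); SmartReview's real lists are not published.
KNOWN_BRANDS = ("apple", "sony", "samsung")
NOISE_WORDS = {"new", "review"}
KNOWN_VARIANTS = ("usb c", "lightning")


@dataclass(frozen=True)
class NormalizedName:
    brand: Optional[str]
    model_family: str
    generation: Optional[int]
    variant: Optional[str]


def _squash(text: str) -> str:
    """Collapse runs of whitespace into single spaces."""
    return " ".join(text.split())


def _pull(phrases, text):
    """Remove the first listed phrase found in text; return (phrase, remaining text)."""
    for phrase in phrases:
        pattern = rf"\b{re.escape(phrase)}\b"
        if re.search(pattern, text):
            return phrase, _squash(re.sub(pattern, " ", text))
    return None, text


def normalize(raw: str) -> NormalizedName:
    # Lowercase, turn dashes and brackets into spaces, drop filler words.
    text = re.sub(r"[-_/()\[\]]", " ", raw.lower())
    text = " ".join(t for t in text.split() if t not in NOISE_WORDS)

    brand, text = _pull(KNOWN_BRANDS, text)
    variant, text = _pull(KNOWN_VARIANTS, text)

    # Generation: an ordinal such as "2nd gen(eration)", else a trailing number.
    generation = None
    match = (re.search(r"\b(\d+)(?:st|nd|rd|th) gen(?:eration)?\b", text)
             or re.search(r" (\d+)$", text))
    if match:
        generation = int(match.group(1))
        text = _squash(text[:match.start()] + " " + text[match.end():])

    # Whatever survives is treated as the model family.
    return NormalizedName(brand, text, generation, variant)
```

Run the three names from the quote through it and all three land on the model family `airpods pro`. Note that brand and generation are only filled in when the mention actually states them, so whatever compares two normalized names has to tolerate blanks.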

Why Bother Normalizing When Fuzzy’s Faster?

Normalization snags 60% of matches. The easy ones. Brand aligns. Model family matches. Generation agrees.

But 40%? Trickier. Enter Levenshtein distance—fuzzy string similarity—with guardrails. Brand exact match only. Model fuzzy at 85% threshold. Generations? Must align if present.

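Genius twist: it prevents ‘Sony WH-1000XM5’ (over-ears) from wedding ‘Sony WF-1000XM5’ (in-ears). The two names differ by a single character, so on string similarity alone they sail past an 85% threshold; the category check is what keeps them apart. Similar names, different beasts. Category awareness baked in. No false positives ruining your clusters.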
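To make the guardrails concrete, here's a small Python sketch. Assumptions, loudly: the article doesn't say how the 85% is computed, so I'm using one common convention (1 minus edit distance over the longer string's length), and the `Candidate` fields and category labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


def levenshtein(a: str, b: str) -> int:
    """Classic edit distance: insertions, deletions, substitutions each cost 1."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def similarity(a: str, b: str) -> float:
    """Assumed convention: 1 - distance / length of the longer string."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


@dataclass(frozen=True)
class Candidate:
    brand: str
    model: str
    generation: Optional[int] = None
    category: Optional[str] = None  # hypothetical field, e.g. "over-ear"


def same_product(a: Candidate, b: Candidate, threshold: float = 0.85) -> bool:
    if a.brand != b.brand:  # brand: exact match only
        return False
    if None not in (a.generation, b.generation) and a.generation != b.generation:
        return False        # generations must align if both are present
    if None not in (a.category, b.category) and a.category != b.category:
        return False        # category guard (assumed mechanism)
    return similarity(a.model, b.model) >= threshold  # model: fuzzy
```

With these definitions, `wh-1000xm5` vs `wf-1000xm5` scores 0.9 on similarity, so only the category guard rejects the pair. Strip the category from both and `same_product` happily merges them.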

Short version? It’s pragmatic. Not sexy. But in production, sexy loses to reliable every time.

Now, edge cases. A bare ‘Roomba’ mention (the whole line) vs. ‘Roomba j7+’ (one model). Context clues help: a mention of a self-emptying base screams specific model. Hierarchy: brand > line > model > variant. Smart.
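One way to picture that hierarchy in Python. This is my own sketch: `ProductRef`, `CONTEXT_CLUES` and `refine_with_context` are hypothetical names, I'm reading Roomba as a line under iRobot, and the single clue entry just mirrors the self-emptying-base example.

```python
from dataclasses import dataclass, replace
from typing import Optional

LEVELS = ("brand", "line", "model", "variant")


@dataclass(frozen=True)
class ProductRef:
    brand: str
    line: Optional[str] = None
    model: Optional[str] = None
    variant: Optional[str] = None

    def level(self) -> str:
        """Deepest hierarchy level that is filled in without a gap above it."""
        deepest = "brand"
        for name in LEVELS[1:]:
            if getattr(self, name) is None:
                break
            deepest = name
        return deepest


# Hypothetical clue table: phrase in the surrounding text -> model it implies.
CONTEXT_CLUES = {"self-emptying base": "j7+"}


def refine_with_context(ref: ProductRef, context: str) -> ProductRef:
    """Promote a line-level mention to a model if the context names a telltale feature."""
    if ref.model is not None:
        return ref
    lowered = context.lower()
    for phrase, model in CONTEXT_CLUES.items():
        if phrase in lowered:
            return replace(ref, model=model)
    return ref
```

A mention that only says "Roomba" stays at the `line` level until the review text gives it away; then it moves down to `model`.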

Region aliases? The Samsung Galaxy S24 keeps its name across regions, but accessory names shift. They maintain an alias table. Low-tech win.
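An alias table really can be this dull. A sketch with made-up entries (the `ExampleCo` names, region codes and `canonical_name` helper are all placeholders, not SmartReview data):

```python
from typing import Dict, Tuple

# Hypothetical alias table: (region, regional name) -> canonical name.
ALIASES: Dict[Tuple[str, str], str] = {
    ("uk", "exampleco travel plug"): "exampleco travel adapter",
    ("de", "exampleco reiseadapter"): "exampleco travel adapter",
}


def canonical_name(name: str, region: str) -> str:
    """Map a regional name to its canonical form; unknown names pass through cleaned."""
    cleaned = " ".join(name.lower().split())
    return ALIASES.get((region.lower(), cleaned), cleaned)
```

Names with no alias entry fall through unchanged, which is the behaviour you want for products that keep one name everywhere.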

Is External Validation Cheating—or Brilliant?

For the stragglers, they hit Tavily search. Query canonical specs. Extract IDs. Cluster by them.

Async, sure. Adds latency. But accuracy jumps. Why build your own product index when a search engine already has one? (Tavily’s a search API, so they query it instead of scraping sites and eating the bans.)
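The clustering half of that step is easy to sketch. What follows is not Tavily's API: `lookup_id` is a hypothetical callable you'd wrap around whatever search client you use, returning some product identifier or `None`. It's also synchronous for brevity, where the article describes an async step.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, List, Optional, Tuple


def cluster_by_external_id(
    mentions: Iterable[str],
    lookup_id: Callable[[str], Optional[str]],  # hypothetical search wrapper
) -> Tuple[Dict[str, List[str]], List[str]]:
    """Group mentions by the identifier an external lookup returns.

    Mentions whose lookup fails or finds nothing are returned separately
    instead of being forced into a cluster.
    """
    clusters: Dict[str, List[str]] = defaultdict(list)
    unresolved: List[str] = []
    for mention in mentions:
        try:
            product_id = lookup_id(mention)
        except Exception:
            product_id = None  # a flaky lookup is treated as "no answer"
        if product_id is None:
            unresolved.append(mention)
        else:
            clusters[product_id].append(mention)
    return dict(clusters), unresolved
```

Keeping an explicit `unresolved` bucket is a design choice on my part: when the external service is down, mentions wait for a retry rather than getting a wrong home.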

Critic hat on: This scales to 5k daily? Barely. API costs mount. Rate limits bite. What if Tavily flakes? Fallback?

Their metrics: 12k canonical products. 1.8% false positives. Spot-checked, they say. I’d love the full audit. Smells like internal optimism.

But here’s my unique jab: their system’s a time capsule. Pre-LLM era engineering. My prediction: within 12 months, open-weight models like Llama 3 will ingest product catalogs and spit out normalized entities zero-shot. 99% accuracy. No regex hell. SmartReview’s clever? Yes. Future-proof? Doubt it.

Look, corporate hype screams ‘solved without ML!’ But fuzzy matching is a mini-ML hack. Levenshtein ain’t magic: it’s an edit-distance metric from the 1960s. They’re underselling the basics while dodging the AI arms race.

Daily pipeline hums: Amazon’s verbose SKUs vs. Reddit shorthand vs. RTINGS abbreviations vs. YouTube clickbait. Best Buy color tags. All unified.

Queries like ‘AirPods Pro vs Sony’? They default to the current generation. Older generations stay tagged as history. User-savvy.
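That default is a one-liner once generations are stored per family. A sketch with a hypothetical catalog (the `CATALOG` shape, the ids and `resolve_query` are mine, and "current" is assumed to mean the highest generation number):

```python
from typing import Dict, Optional

# Hypothetical catalog: model family -> {generation number: canonical product id}.
CATALOG: Dict[str, Dict[int, str]] = {
    "airpods pro": {1: "airpods-pro-gen1", 2: "airpods-pro-gen2"},
}


def resolve_query(family: str, generation: Optional[int] = None) -> Optional[str]:
    """Return the canonical id; with no generation given, pick the newest one."""
    generations = CATALOG.get(family.lower())
    if not generations:
        return None
    if generation is None:
        generation = max(generations)  # assumption: highest number = current
    return generations.get(generation)
```

Older generations stay in the table, so a query that names one explicitly still resolves.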

Punchy truth: This works because products follow patterns. Brands standardize(ish). Generations number. Variants flag. Chaos elsewhere—like user-generated wiki edits—would crater it.

Why Does Entity Resolution Still Matter for Devs?

Devs, you’re building e-comm scrapers? Review aggregators? Price trackers? This is your blueprint.

Skip the PhD. Normalize ruthlessly. Fuzzy with brakes. Validate externally. Boom—94% without vaporware.

But don’t sleep on scale limits. 5k/day fine for SmartReview. Petabyte catalogs? Rethink.

Dry humor aside: If you’re still doing exact string matches in 2024, hang it up. Your data’s mush.

Historical parallel? Remember AltaVista? It matched keywords on the page. Google won by reading the link structure between pages (PageRank). SmartReview’s echoing that: structure first, fancy later.

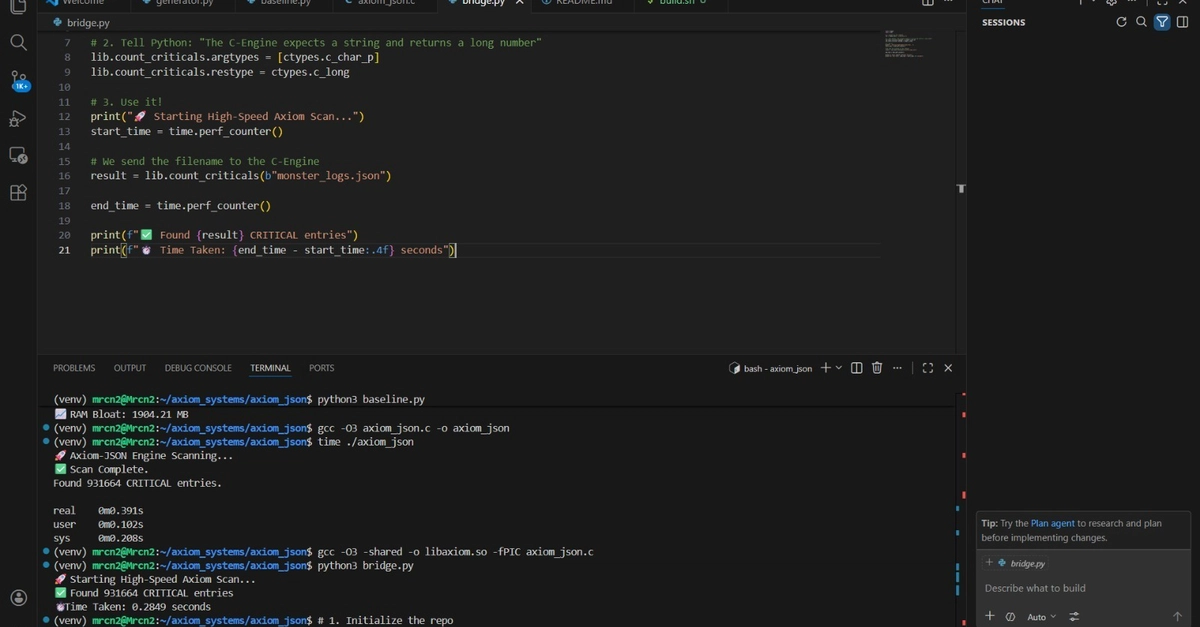

Their code snippets? Gold. TypeScript interfaces. Regex armies. Portable to Python/Node/whatever.

One gripe: No open-source repo. Trade secret? Or just lazy? Share it, folks. Devs would fork and feast.

The Hidden Costs They Won’t Admit

The source article cuts off mid-word here (“Processing ti...”), so the rest is inference. Processing time, probably. Latency spikes on validation. Storage for 12k canonical products plus aliases.

False positives at 1.8%? Tolerable. But in reviews, one bad cluster poisons comparisons. ‘AirPods Pro 2’ lumped with knockoffs? Trust evaporates.

Prediction: LLMs commoditize this by Q4 2025. Embeddings + clustering. Train on synthetic noise. Done.

Still, kudos. Solved pragmatically. Skeptics like me nod.

Frequently Asked Questions

What is entity resolution at scale? Matching messy strings—like ‘AirPods Pro 2’ vs full Amazon titles—to real products across sites. SmartReview does 5k/day at 94% accuracy.

How does SmartReview match products without ML? Normalization (60%), fuzzy Levenshtein (brand exact, model 85%), external search validation. No neural nets needed.

Is entity resolution solved by AI now? Not yet—LLMs promise it, but production reliability lags. SmartReview’s rules beat hype for now.