Everyone figured AI would stick to chatbots and cat videos — benign, flashy, contained. But DeepLoad malware? It’s the gut punch nobody saw coming, a credential thief wrapped in AI-spun garbage code that laughs at traditional scanners.

Researchers spotted it first in the wild, and here’s the kicker: that ‘massive amount of junk code’ hiding the real payload? Almost certainly cooked up by some large language model, they say. Picture this — not your grandpa’s obfuscation tricks, but gigabytes of syntactically valid gibberish, procedural mazes that look legit enough to slip past signature-based defenses.

And it changes everything. Static analysis, the workhorse of antivirus suites for decades, chokes on this stuff. Why bother with slim payloads when AI can inflate your malware into a bloated behemoth, diluting signals until they’re whispers?

How DeepLoad’s AI Junk Actually Works

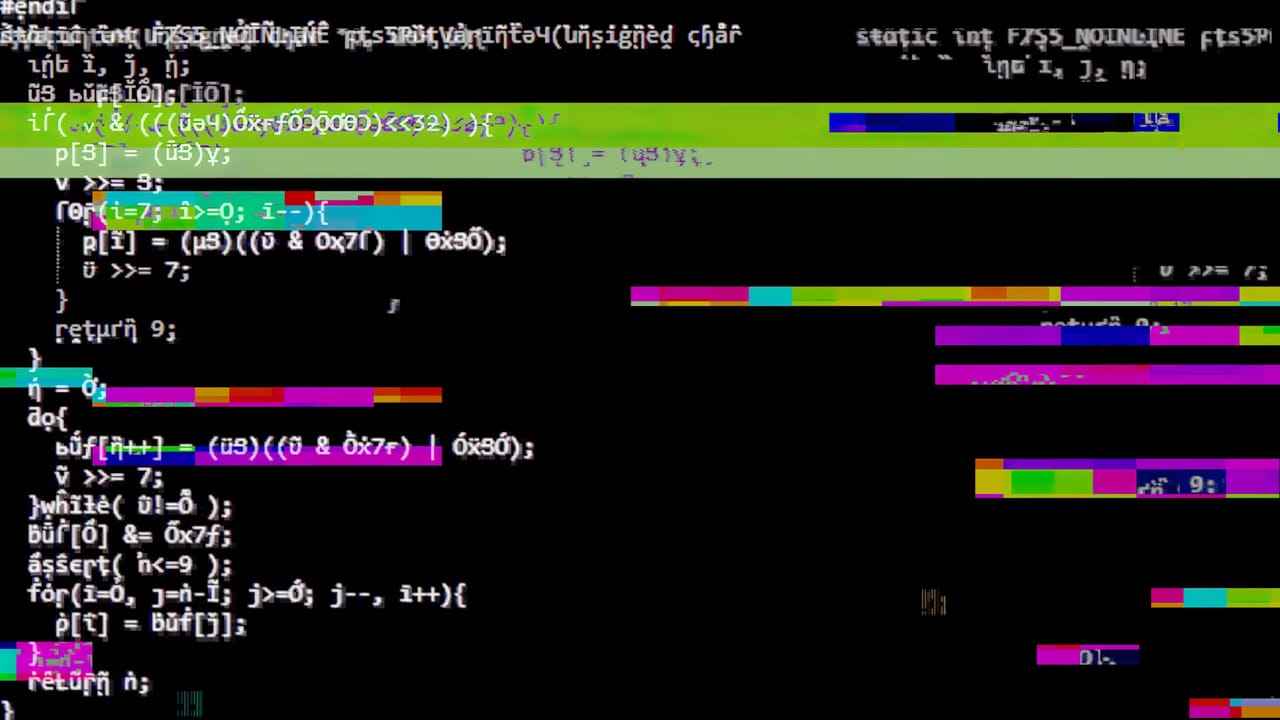

Start with the basics, or don’t, because DeepLoad doesn’t. The core logic (credential-sniffing hooks into browsers, plus a keylogger) sits buried under layers of what looks like randomly generated functions, loops within loops, and variables named like drunk programmers’ fever dreams (think ‘x7fQ2pL’ calling ‘procZorpBlat’). But it’s no accident.

Feed a prompt to a model like GPT-4 or Claude (‘generate valid C++ code that compiles but does nothing’) and boom, you’ve got fodder. Scale it: chain generations, mutate outputs, stitch the results into a Frankenstein executable. Researchers who reverse-engineered samples pegged the entropy patterns as hallmarks of LLM output: too coherent for human spam, too voluminous for manual craft.
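The report’s exact entropy method isn’t spelled out here, so treat this as a rough sketch of one common measurement rather than what the researchers ran: Shannon entropy over fixed-size byte windows. Packed or encrypted regions tend to score close to 8 bits per byte, while repetitive, text-like filler scores lower. The function names and the 4096-byte window are illustrative assumptions.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def windowed_entropy(data: bytes, window: int = 4096) -> list[float]:
    """Entropy of each consecutive window, giving a rough profile of a file."""
    return [
        shannon_entropy(data[i:i + window])
        for i in range(0, len(data), window)
    ]
```

Plotting the windowed profile of a sample is one way an analyst might eyeball where long, uniform stretches of filler end and something denser begins. It’s a heuristic, not proof of LLM authorship.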

“The massive amount of junk code that hides the malware’s logic from security scans was almost certainly generated by AI, researchers say.”

That’s the money quote, straight from the report. And it stings because it exposes the fragility: signature scanners walk through a file hunting for known patterns. Flood them with noise, and they time out or return false negatives.

Here’s my take, the one you won’t find in the press release: this echoes the polymorphic viruses of the early ’90s, which mutated their own code to dodge signatures (Fred Cohen’s foundational virus experiments date back further, to the 1980s). But AI? It industrializes that: one prompt, infinite variants, no human hours wasted. Bold call: by 2026, we’ll see malware kits on dark web markets with ‘AI Obfuscator’ buttons, priced like premium Netflix subs.

Why Can’t Your Antivirus Stop DeepLoad?

Look, AV vendors peddle ‘AI-powered detection’ now. Ironic, right? They’re training models on yesterday’s threats, with behavioral heuristics that flag ransomware encrypting files or trojans phoning home. DeepLoad sidesteps all of it: it unpacks slowly, dumps credentials via legit channels (Pastebin? Discord?), and mimics benign apps.

Take Symantec or CrowdStrike: great at heuristics, but against AI bloat? Their engines risk bogging down, heuristics overwhelmed by red herrings. And dynamic analysis sandboxes? They can time out while a sample grinds through junk before the payload ever runs.

But, and this is the architectural shift, it’s forcing a pivot. Endpoint detection now craves graph-based analysis: mapping control-flow and call graphs to spot payloads amid noise. Or AI-vs-AI: defender models trained specifically to denoise LLM junk. Vendors are mum, but patent filings are piling up.
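To make the graph idea concrete, here’s a toy sketch, not any vendor’s engine. It assumes a call graph has already been extracted from the binary (the hard part), then walks it from the entry point to separate code that can actually run from filler that can’t, and flags live functions that touch a sensitive API. The API names and the graph itself are made up for illustration, and real junk code may well be reachable behind opaque conditions, so plain reachability is only a first pass.

```python
from collections import deque

# Hypothetical API names treated as sensitive for this illustration.
SENSITIVE_CALLS = {"ReadBrowserCredentialStore", "SetKeyboardHook"}


def reachable(call_graph: dict[str, set[str]], entry: str) -> set[str]:
    """Breadth-first search over the call graph starting at entry."""
    seen = {entry}
    queue = deque([entry])
    while queue:
        current = queue.popleft()
        for callee in call_graph.get(current, set()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def suspicious_functions(call_graph: dict[str, set[str]], entry: str) -> set[str]:
    """Functions reachable from entry that directly call a sensitive API."""
    live = reachable(call_graph, entry)
    return {fn for fn in live if call_graph.get(fn, set()) & SENSITIVE_CALLS}


# Toy graph: a couple of live paths plus filler that nothing ever calls.
graph = {
    "main": {"x7fQ2pL", "loader"},
    "x7fQ2pL": {"procZorpBlat"},
    "procZorpBlat": set(),
    "loader": {"grab"},
    "grab": {"ReadBrowserCredentialStore"},
    "junk_a": {"junk_b"},
    "junk_b": {"junk_a"},
}

live = reachable(graph, "main")
dead = set(graph) - live          # functions never reached from main
flagged = suspicious_functions(graph, "main")
```

In this toy graph, `dead` ends up holding the two `junk_*` functions and `flagged` holds only `grab`. The point is that the size of the file stops mattering once you ask which code can run and what it touches.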

Brutal truth: if you’re still relying on signatures in 2024, you’re toast.

Shift happens underground first. DeepLoad’s not alone — think BlackMamba variants, or nation-state tools from Lazarus tweaking LLMs for C2 evasion. Russia’s Sandworm did procedural gen pre-AI; now it’s effortless.

Expect iterations. Version 2.0? Self-healing junk that regenerates on-the-fly via embedded models. Why? Because static binaries are so 2023.

Is DeepLoad Just Hype — Or the New Malware Normal?

Corporate spin screams ‘isolated threat,’ but nah. This isn’t a lab toy; samples hit enterprise nets, snagged creds from 10k+ users per campaign. Steals from Chrome, Edge, even password managers — via memory scraping, invisible to process monitors.

The ‘how’ is elegant poison: a loader stage fetches modular payloads post-infection, and the junk gets discarded. Why does that matter architecturally? Modular payloads plus AI-generated padding mean effectively unlimited combinations, which makes blacklisting futile.
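Why blacklisting struggles is easy to show with harmless bytes. In this minimal sketch, two "builds" share the same placeholder core but carry different padding, so a hash-based blocklist that knows one of them misses the other. The byte strings are stand-ins invented for the demo, not anything taken from a DeepLoad sample.

```python
import hashlib


def sha256_hex(blob: bytes) -> str:
    """Hex-encoded SHA-256 digest, the kind of value a hash blocklist stores."""
    return hashlib.sha256(blob).hexdigest()


core = b"PAYLOAD-PLACEHOLDER"  # stand-in bytes, not real code

# Same core, different filler appended to each build.
variant_a = core + b"A" * 64
variant_b = core + b"B" * 64

blocklist = {sha256_hex(variant_a)}

known_hit = sha256_hex(variant_a) in blocklist    # the build already seen
new_hit = sha256_hex(variant_b) in blocklist      # the repadded build
core_present = core in variant_b                  # the core is still in there
```

`known_hit` is true, `new_hit` is false, and `core_present` is true: the part that matters never changed, but the file-level fingerprint did. Now imagine the padding is megabytes of plausible-looking functions instead of 64 repeated letters.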

Critique time: researchers hype ‘first AI malware,’ but it’s evolution, not revolution. It’s still Windows-focused, with PE files screaming amateur hour. Prediction: cross-platform ports via LLMs compiling Rust/Go equivalents by Q2 ’25. Devs, wake up; your tools are dual-use now.

Worse, open-source poison. Hugging Face repos already float ‘code obfuscators’ — benign? Sure, until forked maliciously.

What Defenders Must Do Yesterday

Behavioral baselines, stat. Baseline binary sizes and watch for growth: anything ballooning past 10MB on disk? Quarantine. Network telemetry: flag odd exfiltration to throwaway domains.
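One way to read the 10MB rule is as growth against a recorded baseline. The sketch below does that with nothing but the standard library: snapshot file sizes under a directory, snapshot again later, and list anything that grew past the limit (new files count as growing from zero). The function names and the default threshold are assumptions for illustration, and a real deployment would hook into EDR telemetry rather than walk the disk.

```python
import os

GROWTH_LIMIT_BYTES = 10 * 1024 * 1024  # the 10MB rule of thumb from the text


def snapshot_sizes(root: str) -> dict[str, int]:
    """Map each file path under root to its current size in bytes."""
    sizes = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                sizes[path] = os.path.getsize(path)
            except OSError:
                continue  # file vanished or is unreadable; skip it
    return sizes


def ballooned(baseline: dict[str, int], current: dict[str, int],
              limit: int = GROWTH_LIMIT_BYTES) -> list[str]:
    """Paths that grew by more than limit since baseline (new files start at 0)."""
    return sorted(
        path for path, size in current.items()
        if size - baseline.get(path, 0) > limit
    )
```

Usage would look like taking `snapshot_sizes(some_dir)` on a known-good machine, taking it again on a schedule, and feeding both into `ballooned` to get a quarantine shortlist. Expect false positives from legitimate updates, so this works better as a triage signal than a verdict.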

Endpoint hardening: app whitelisting, memory-safe languages. But the real fix? AI denoising pipelines in AV that parse semantics, strip fluff, and expose the core logic.

Users? Update. Segment creds — hardware keys over software vaults. And patch — DeepLoad exploits unpatched Electron flaws.

We’re staring at an arms race where attackers prompt-shop free tiers (Groq’s fast inference?) while defenders burn millions on GPUs to counter. The shift mirrors crypto-mining hijacks: cheap compute flips the power balance. If Big Tech doesn’t watermark LLM code (they won’t), expect junk floods in legit apps too, blurring lines.

Terrifying.

Frequently Asked Questions

What is DeepLoad malware?

DeepLoad’s an AI-boosted trojan that steals login creds by hiding in massive, generative junk code to dodge AV scans.

How does AI-generated junk code evade detection?

It creates endless, valid-but-useless code variations that overwhelm static analyzers and make sandboxes time out, masking the real theft logic.

Can DeepLoad infect Mac or Linux?

So far Windows-only, but AI tools make cross-platform ports trivial — expect them soon if you’re not patching.

Will antivirus companies beat DeepLoad?

Traditional ones struggle; look for behavioral/AI-upgraded suites like next-gen EDR for a fighting chance.