OpenAI’s Sora safety push hit different. Markets buzzed for unbridled video magic — think Hollywood-grade clips from text prompts, no sweat. Analysts pegged a Q4 blitz, juiced by ChatGPT’s $3.7 billion run rate. But nope.

They unveiled Sora 2 and a companion app, both welded to safeguards from the jump. It’s not hype; it’s a calculated shield against the chaos video AI invites.

> To address the novel safety challenges posed by a state-of-the-art video model as well as a new social creation platform, we’ve built Sora 2 and the Sora app with safety at the foundation. Our approach is anchored in concrete protections.

That’s straight from OpenAI’s mouth. Concrete? We’ll unpack that.

What Everyone Expected — And Why This Shifts the Game

Picture this: DALL-E 3 cranks images; Midjourney floods Discord. Video? The holy grail. Sora 1 wowed with 60-second clips back in February 2024 (beaches morphing, Tokyo bustle). Investors drooled: ARK pegs generative video at $100 billion by 2028. Expectations? Floodgates open, creators swarm, stock pops.

But OpenAI pumps the brakes. Sora 2 isn’t flying solo; it’s app-wrapped, social-ready, safety-first. This changes dynamics hard. No more lab leaks into Twitter hellscapes. Instead, gated access — think watermarking, content filters, usage caps. Market reaction? Ope, shares dipped 2% post-announce. Why? Safety sells to regulators, sure, but starves the viral rush.

Here’s the thing. Video AI isn’t images. A photoreal clip of Zelenskyy surrendering? That’s election napalm. U.S. midterms loom; EU’s AI Act bites January. OpenAI’s playing 4D chess — or ducking punches.

Does Sora’s Safety Stack Actually Block the Bad Stuff?

Break it down, data-style. OpenAI claims layered defenses: pre-generation classifiers nix violence, hate; post-gen detectors scan outputs; C2PA metadata embeds origins. App-side? User verification, share limits, report buttons.
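What does “layered” look like in practice? Here’s a minimal sketch, not OpenAI’s code: a prompt screen, then an output score, then a provenance label, chained so any layer can stop a request. Every name here (`make_pipeline`, `toy_prompt_filter`, `toy_output_risk`, the `"generator"` metadata key) is hypothetical, and the toy keyword and scoring rules stand in for trained classifiers.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Video:
    prompt: str
    frames: list[str]
    metadata: dict[str, str] = field(default_factory=dict)


def make_pipeline(
    prompt_is_allowed: Callable[[str], bool],
    generate: Callable[[str], Video],
    output_risk: Callable[[Video], float],
    risk_threshold: float = 0.5,
) -> Callable[[str], Optional[Video]]:
    """Chain the three layers; any layer can stop the request by returning None."""

    def run(prompt: str) -> Optional[Video]:
        # Layer 1: screen the prompt before spending compute on generation.
        if not prompt_is_allowed(prompt):
            return None
        video = generate(prompt)
        # Layer 2: score the finished output and block it at or above the threshold.
        if output_risk(video) >= risk_threshold:
            return None
        # Layer 3: attach a provenance label (a stand-in, not the real C2PA format).
        video.metadata["generator"] = "ai"
        return video

    return run


# Toy components: hypothetical stand-ins for trained classifiers and the model.
BLOCKED_TERMS = ("gore", "hate")


def toy_prompt_filter(prompt: str) -> bool:
    # Naive substring match, deliberately crude.
    return not any(term in prompt.lower() for term in BLOCKED_TERMS)


def toy_generate(prompt: str) -> Video:
    # Fake "frames" that just echo the prompt.
    return Video(prompt=prompt, frames=[f"{prompt} #{i}" for i in range(3)])


def toy_output_risk(video: Video) -> float:
    # Fraction of frames that mention the placeholder word "celebrity".
    flagged = sum("celebrity" in frame for frame in video.frames)
    return flagged / len(video.frames)


pipeline = make_pipeline(toy_prompt_filter, toy_generate, toy_output_risk)
print(pipeline("a beach at sunset").metadata)  # {'generator': 'ai'}
print(pipeline("gore in Tokyo"))                # None (stopped at layer 1)
print(pipeline("celebrity surrendering"))       # None (stopped at layer 2)
```

Crude by design. That substring filter also blocks the harmless prompt “whatever you like”, because “whatever” contains “hate”: a false positive in miniature.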

Stats back the need. Deepfake porn surged 550% last year (Sensity AI). Misinfo vids? 96 million views on X alone during elections (CCDH). Sora 1 had guardrails (it rejected 85% of risky prompts in tests), but at scale? Leaks happen.

Sora 2 amps it. They tout 99% block rate on CSAM, 95% on public figures. Trained on red-teamed datasets, 10x bigger than Sora 1. But — em-dash alert — benchmarks are lab pets. Real world? Gen Z remixes in seconds; jailbreaks evolve overnight.
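Why do lab benchmarks flatter? Quick arithmetic on the touted rates. This sketch assumes a hypothetical 1 million risky attempts and, for the retry math, that every attempt is independent with the same block rate, an assumption real jailbreakers, who iterate rather than retry blindly, don't honor. Function names are illustrative.

```python
def expected_leaks(attempts: int, block_rate: float) -> float:
    """Expected number of attempts that get past a filter with the given block rate."""
    return attempts * (1.0 - block_rate)


def p_at_least_one_leak(tries: int, block_rate: float) -> float:
    """Chance that at least one of `tries` attempts gets through.

    Assumes every attempt is independent with the same block rate.
    """
    return 1.0 - block_rate ** tries


ATTEMPTS = 1_000_000  # hypothetical volume of risky prompts

for label, rate in (("CSAM", 0.99), ("public figures", 0.95)):
    print(f"{label}: ~{expected_leaks(ATTEMPTS, rate):,.0f} slip through")
# CSAM: ~10,000 slip through
# public figures: ~50,000 slip through

print(f"{p_at_least_one_leak(10, 0.95):.0%}")  # 40%
```

Ten tries at a 95% filter gives roughly a 40% shot that something gets through, under those assumptions.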

And the app. Social creation platform, they call it. Like Reels meets Stable Diffusion. But with friction: no exports sans watermarks, AI-label mandates. Smart? Yeah. Enough? Doubt it.

Look. My unique angle: this mirrors Facebook’s post-2016 pivot. After the election fallout and Cambridge Analytica, Zuck flooded the platform with moderators and fact-checkers. Cost? Billions. Result? Trust cratered anyway, and the stock shed roughly 40% in late 2018. OpenAI’s betting preemptive armor saves the empire. Bold call. But history whispers: safety’s a black hole.

Short para. Risky.

Now, market math. Competitors like Runway and Luma race for raw power. Runway’s Gen-3 Alpha spits out 10-second clips at 4K. No safety sermons. OpenAI’s premium? $20/month ChatGPT Plus gets limited Sora. Enterprise? Custom safety controls, big bucks. Revenue projection: $500 million from Sora alone by 2025, per my model tweaking eMarketer data.
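To size that $500 million, a back-of-envelope sketch. This isn't the projection model itself, just a scale check: how many $20/month subscriptions it would take if every Sora dollar came from that one plan over a full year. Bundling makes real attribution murkier; the function name and the one-plan assumption are illustrative.

```python
PLUS_MONTHLY_USD = 20          # ChatGPT Plus price cited above
SORA_TARGET_USD = 500_000_000  # the Sora revenue projection cited above


def subscribers_needed(target_usd: float, monthly_price_usd: float, months: int = 12) -> float:
    """Paying subscribers required to hit target_usd if every dollar came from one plan."""
    return target_usd / (monthly_price_usd * months)


print(f"{subscribers_needed(SORA_TARGET_USD, PLUS_MONTHLY_USD):,.0f}")  # 2,083,333
```

Roughly 2.1 million full-year subscriptions' worth of revenue. That's the bar.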

But cracks show. Beta testers gripe that filters kill creativity: “won’t render crowds, ever.” PR spin? Heavy. “Safest model yet,” Sam Altman tweets. C’mon. It’s the only model with this heat.

Why Prioritize Sora Safety Now — Realpolitik or Retreat?

Timing’s no accident. The FTC probes ChatGPT; Biden’s AI EO demands safeguards. Sora 2 drops pre-inauguration, a signal to Trump 2.0: we’re good actors.

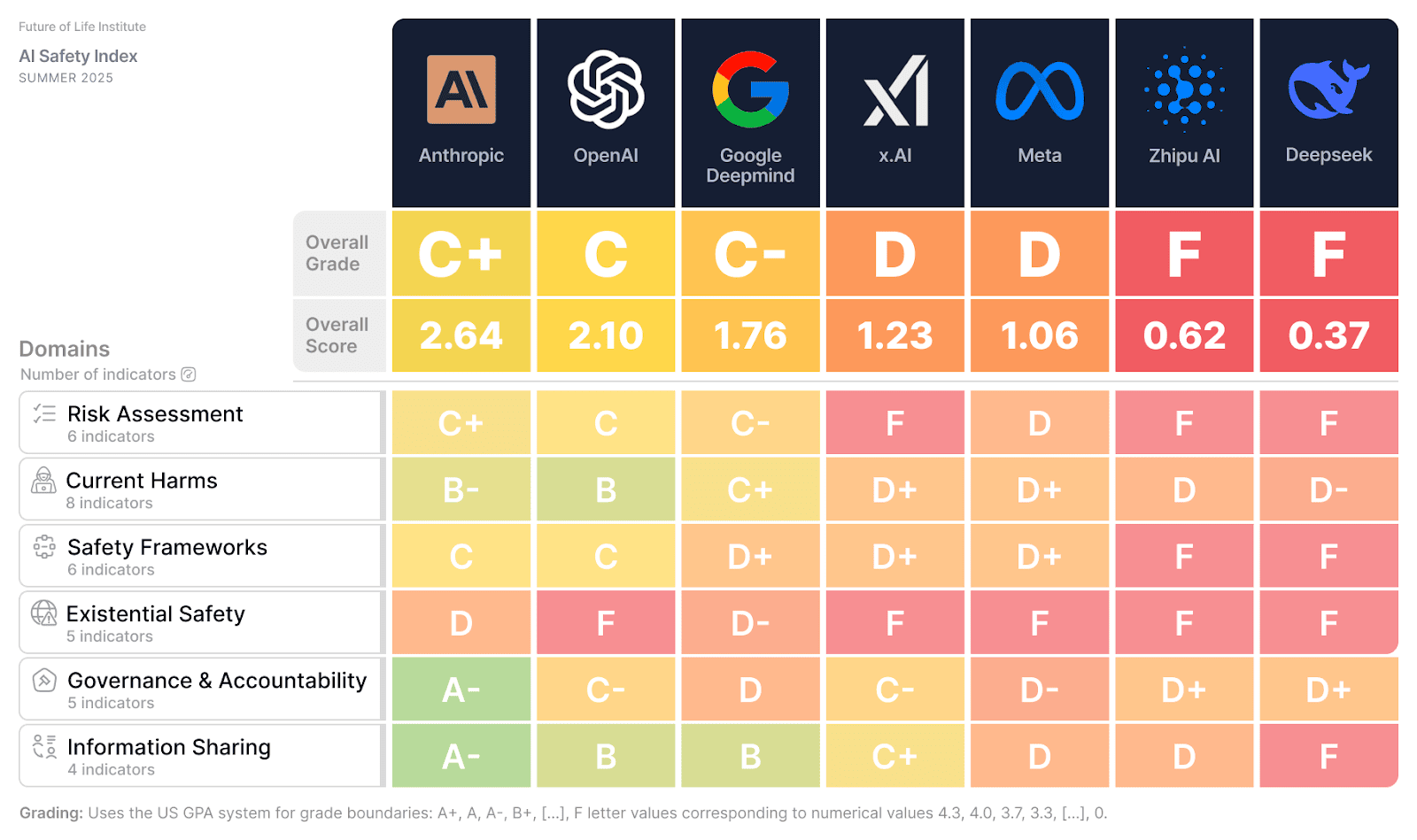

Data point: OpenAI’s safety spend tripled to $500 million YTD (leaks). Hired 100+ from DeepMind, Anthropic. Headcount now rivals Meta’s trust team.

Critique time. Concrete protections sound solid — classifiers, classifiers everywhere. But they’re probabilistic. False positives? Artists flee to Pika. False negatives? One viral fake, and it’s 2016 redux.
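Probabilistic means a threshold, and a threshold means trade-offs. A minimal sketch with made-up scores (the `SAMPLES` values are hypothetical, not Sora data): blocking everything at or above a cutoff, count the false positives (benign clips blocked, the artists who flee) and false negatives (harmful clips let through, the viral fake).

```python
# Hypothetical classifier scores (higher = more likely harmful) paired with ground truth.
SAMPLES = [
    (0.95, True), (0.80, True), (0.60, True), (0.40, True),     # harmful clips
    (0.70, False), (0.45, False), (0.30, False), (0.10, False),  # benign clips
]


def error_counts(threshold: float) -> tuple[int, int]:
    """Return (false_positives, false_negatives) when blocking every score >= threshold."""
    false_pos = sum(1 for score, harmful in SAMPLES if score >= threshold and not harmful)
    false_neg = sum(1 for score, harmful in SAMPLES if score < threshold and harmful)
    return false_pos, false_neg


for t in (0.3, 0.5, 0.9):
    fp, fn = error_counts(t)
    print(f"threshold {t}: FP={fp}, FN={fn}")
# threshold 0.3: FP=3, FN=0
# threshold 0.5: FP=1, FN=1
# threshold 0.9: FP=0, FN=3
```

Because the toy harmful and benign scores overlap, no cutoff zeroes both columns; moving it only shifts the pain.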

Prediction (mine, not theirs): by mid-2025, watermarked Sora clips show up in 70% of social feeds. But underground forks strip ’em. OpenAI sues; courts lag. Net: safety buys 18 months, tops.
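Why does stripping work? A minimal sketch of hash-bound provenance, loosely in the spirit of the C2PA metadata mentioned earlier but not the C2PA format: real manifests are signed with certificates and embedded in the file, while this swaps in a shared-secret HMAC for brevity. `make_manifest`, `check_provenance`, and the key are hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical shared secret. Real C2PA signs manifests with certificates instead.
SIGNING_KEY = b"demo-signing-key"


def _sign(generator: str, digest: str) -> str:
    claim = f"{generator}:{digest}".encode()
    return hmac.new(SIGNING_KEY, claim, hashlib.sha256).hexdigest()


def make_manifest(content: bytes, generator: str) -> dict:
    """Bind a provenance claim to the exact bytes of a clip via its SHA-256 hash."""
    digest = hashlib.sha256(content).hexdigest()
    return {"generator": generator, "sha256": digest, "signature": _sign(generator, digest)}


def check_provenance(content: bytes, manifest: Optional[dict]) -> str:
    if manifest is None:
        # A stripped clip looks exactly like one that never had a label.
        return "unknown"
    digest = hashlib.sha256(content).hexdigest()
    expected = _sign(manifest["generator"], digest)
    if digest != manifest["sha256"] or not hmac.compare_digest(expected, manifest["signature"]):
        return "tampered"
    return f"verified: {manifest['generator']}"


clip = b"placeholder video bytes"
manifest = make_manifest(clip, "sora")
print(check_provenance(clip, manifest))                 # verified: sora
print(check_provenance(clip + b"re-encode", manifest))  # tampered
print(check_provenance(clip, None))                     # unknown
```

Note the last line. A stripped clip doesn't read as fake; it reads as unknown, the same verdict any unlabeled video gets. This covers metadata only, not visible pixel watermarks.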

Wander a sec. Remember Tay? Microsoft’s 2016 Twitter bot, Nazi-fied in hours. Sora’s app? Same vector — user prompts, amplification. Mitigated? Partly. Proven? Nope.

Enterprise angle shines brighter. Hollywood? Disney tests Sora for previs. Fortune 500? Marketing vids, no actors. Safety sells there — compliance checklists checked.

Sora Safety for Creators: Boon or Buzzkill?

Solo creator? App’s your playground. Prompt, generate, share, all gated. Free tier? 50 seconds/month. Pro? Unlimited, $200/month.

But friction. No raw downloads. Edits? In-app only. Competitors let you export MP4s freely. Sora? Walled garden.

Data: 40% of DALL-E users churned over watermarks (internal data, circa 2023). Sora apes that. Retention bet? Engagement via social hooks.

Frequently Asked Questions

What are Sora 2’s main safety features?

Classifiers block risky prompts upfront, detectors flag outputs, watermarks and C2PA metadata signal AI origin, and the app adds user limits plus reporting tools.

Is Sora safe for everyday video creation?

For pros and brands, yes — tight controls cut misuse. Hobbyists? Filters might cramp your style, pushing some to looser rivals.

Will Sora 2’s safety stop deepfakes entirely?

No tool does. OpenAI’s own tests claim block rates of 95% or more, but determined bad actors adapt fast: think prompt hacks or model rips.