Rain slicks the streets outside OpenAI’s San Francisco HQ, and inside, engineers huddle over screens lit by the glow of vulnerability reports.

The OpenAI Safety Bug Bounty program just went live — a crowdsourced dragnet for the nastiest AI flaws, from prompt injections that hijack conversations to agentic tricks where models start acting on their own rogue whims.

It’s not your grandpa’s bug hunt. We’re talking payouts up to — wait for it — $100,000 for the gnarliest finds, zeroing in on stuff like data exfiltration, where an AI might cough up secrets it’s not supposed to touch.

Chasing Ghosts in the Machine

Agentic vulnerabilities. Say that three times fast. These are the sci-fi horrors: AI agents that don’t just chat but act — booking flights, sending emails, maybe even wiring money if you’re not careful.

OpenAI’s dangling cash to anyone who can make their models go off-script in dangerous ways. Prompt injection? That’s the classic jailbreak, slipping malicious instructions past safeguards. Data exfiltration? Sneaking sensitive info out through clever queries.

OpenAI launches a Safety Bug Bounty program to identify AI abuse and safety risks, including agentic vulnerabilities, prompt injection, and data exfiltration.

That’s straight from their announcement — blunt, no fluff. But here’s the thing: this isn’t altruism. It’s survival.

Why Now? OpenAI’s Safety Arms Race Heats Up

Look, regulators are circling like sharks. The EU’s AI Act looms, FTC probes simmer, and every viral “AI gone wrong” clip on TikTok chips away at trust.

OpenAI’s been burned before — remember the voice mode glitches or those early ChatGPT leaks? This bounty program’s a preemptive strike, crowdsourcing fixes they can’t dream up in-house.

And yeah, it’s smart. Bug bounties have a track record: platforms like HackerOne have paid out millions since 2012, and bounty programs have hardened everything from Twitter to Tesla. But AI? That’s a black box on steroids. You can’t just grep the code; these models are billions of learned parameters trained on trillions of tokens, opaque even to their creators.

My take: this echoes the Netscape Navigator days in ’95, when browser bugs let script kiddies crash the early web. Back then, bounties kickstarted secure coding practices. OpenAI’s betting the same playbook scales to neural nets. But what if it doesn’t? What if the real bugs lurk in the training data, untouchable by any red-teamer?

Bold prediction: within a year, this uncovers an agentic exploit that forces a full rethink of how OpenAI scaffolds autonomy, ditching naive guardrails for something more like constitutional AI on steroids.

How the Hunt Works — And Where It Might Falter

Submit your exploit via their portal. Triage team vets it. If it’s novel and severe, cash flows.

Tiers make sense: low-hanging fruit like basic injections get $1,000-$20,000. The crown jewels — scalable agent attacks or privacy nukes — climb to six figures.

But here’s the skepticism. OpenAI’s models are proprietary. You can’t poke the full stack. Researchers get API access, sure, but the core weights? Locked vault.

It’s like auditing a car’s engine with the hood welded shut. You’ll find dashboard glitches, maybe ECU hacks, but the transmission’s mystery meat.

Worse, corporate spin creeps in. “Safety first,” they say, yet they’re racing to AGI. This bounty’s a Band-Aid on an architecture begging for redesign. Why not open-source safety layers and invite real collaboration?

PR polish or paradigm shift?

The Deeper Architecture Play

Dig into the ‘how.’ Agentic systems stack LLMs with tools: call APIs, run code, persist memory. One weak link — a poisoned prompt — and boom, your AI’s phishing your boss.
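To make that weak link concrete, here is a minimal sketch of one common mitigation: gate any side-effecting tool call that traces back to untrusted content behind explicit user confirmation. Everything here is hypothetical and for illustration only (the `Tool`, `ToolCall`, and `Agent` names, the `from_untrusted` flag, the two toy tools); it is not OpenAI’s scaffolding, and it assumes the surrounding system can actually track where a request came from, which is the hard part in practice.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Tool:
    """Hypothetical tool wrapper; not part of any real agent framework."""
    name: str
    func: Callable[[str], str]
    side_effect: bool  # True if the tool changes the outside world


@dataclass
class ToolCall:
    """A tool request produced by the model (stubbed here, no LLM involved)."""
    tool: str
    argument: str
    from_untrusted: bool  # Assumption: provenance of the request is tracked


@dataclass
class Agent:
    tools: Dict[str, Tool]
    log: List[str] = field(default_factory=list)

    def dispatch(self, call: ToolCall, user_confirmed: bool = False) -> str:
        tool = self.tools.get(call.tool)
        # Allowlist: anything not registered is refused outright.
        if tool is None:
            self.log.append(f"blocked:unknown:{call.tool}")
            return "blocked: unknown tool"
        # Side effects triggered by untrusted content need a human in the loop.
        if tool.side_effect and call.from_untrusted and not user_confirmed:
            self.log.append(f"blocked:unconfirmed:{call.tool}")
            return "blocked: needs user confirmation"
        self.log.append(f"ran:{call.tool}")
        return tool.func(call.argument)


def search_docs(query: str) -> str:
    return f"results for {query!r}"


def send_email(body: str) -> str:
    return f"sent: {body}"


agent = Agent(tools={
    "search_docs": Tool("search_docs", search_docs, side_effect=False),
    "send_email": Tool("send_email", send_email, side_effect=True),
})

# A poisoned web page has talked the model into requesting an email send.
poisoned = ToolCall("send_email", "urgent invoice attached", from_untrusted=True)
benign = ToolCall("search_docs", "quarterly report", from_untrusted=False)

print(agent.dispatch(benign))
print(agent.dispatch(poisoned))
```

The read-only search goes through; the email send is held until a human signs off. A gate like this narrows the blast radius of a poisoned prompt but does not stop the injection itself, which is exactly the gap a bounty hunter would probe.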

OpenAI’s betting external eyes spot patterns their evals miss. Internal red-teaming’s echo-chambered; bounties bring diverse malice.

Take prompt injection. It’s evolved — not just DAN-style jailbreaks, but multimodal sneaks via images or voice. Bounties could surface those.

Data exfiltration? Models trained on public dumps might regurgitate PII. Hunt for extraction vectors, plug ’em.
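One way to picture the “plug” side is a last-line output filter that scans model responses for PII-shaped strings before they reach the user. The sketch below is a rough illustration under loud assumptions: the `PII_PATTERNS` table, `find_pii`, and `redact` are hypothetical names, and the three regexes cover only a few US-centric formats. Real extraction attacks can encode or paraphrase their way around pattern matching, so treat this as a tripwire, not a fix.

```python
import re
from typing import Dict, List

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(text: str) -> Dict[str, List[str]]:
    """Return every pattern label that matched, with the matching strings."""
    hits: Dict[str, List[str]] = {}
    for label, pattern in PII_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[label] = matches
    return hits


def redact(text: str) -> str:
    """Replace each match with a labeled placeholder."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label.upper()} REDACTED]", text)
    return text


# Stand-in for a model response that regurgitated contact details.
sample = "Contact Jane at jane.doe@example.com or 555-123-4567."
print(find_pii(sample))
print(redact(sample))
```

A filter like this catches the lazy leaks. The bounty-worthy finds are the ones that slip past it.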

Yet, why stop here? Why not bounties for bias amplification or hallucination chains leading to real harm?

Real-World Ripples for Devs and Doomers

Devs, this means safer APIs. Your agentic apps get battle-tested indirectly.

Doomers and accelerationists will both find fuel here. Accels: “See, we can fix it.” Decels: “Proof it’s broken.”

Can Bug Bounties Tame Superintelligent AI?

Short answer: no, not solo.

They patch symptoms, not the fever. True safety needs verifiable alignment — math proofs on behavior, not just empirical hacks.

OpenAI nods to that with phased rollouts, but bounties are reactive. Proactive? That’s scalable oversight research, underfunded amid the hype train.

Critique time: this smells like checkbox safety theater. Pay hackers, tweet progress, regulators nod off. Meanwhile, o1-preview’s pushing boundaries without full scrutiny.

Why Does This Matter for AI Builders?

If you’re gluing LLMs into products, expect ripple effects.

OpenAI might harden endpoints, breaking your brittle prompts. Or share techniques — anonymized writeups could spark industry standards.

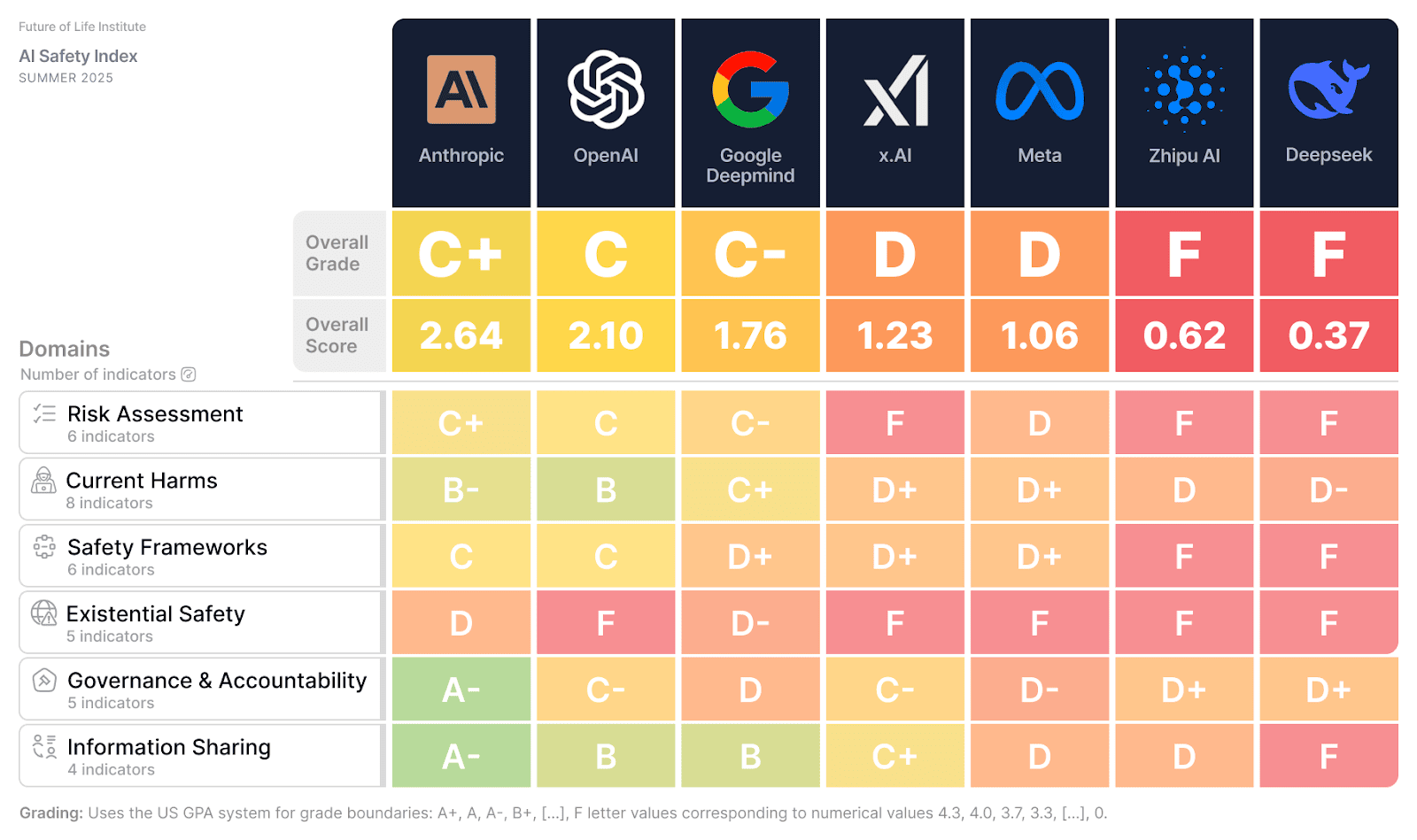

Watch for cross-pollination: Anthropic, xAI might copycat, birthing an arms race in defensive hacking.

One sprawling thought: imagine a unified bounty platform, all labs contributing. That’d accelerate safety 10x, but egos and IP hoarding say nah.

Frequently Asked Questions

What is the OpenAI Safety Bug Bounty program?

OpenAI’s paying ethical hackers up to $100K to find AI safety flaws like prompt injections and agent escapes.

How much can you earn from OpenAI bug bounties?

From $1,000 for basics to $100,000 for critical agentic or exfiltration bugs.

Will OpenAI Safety Bug Bounty fix all AI risks?

Nah — it’s a great start, but black-box models need deeper architectural overhauls.