Claude Code just fixed that sneaky bug in your repo—without you lifting a finger. Bam. Test passes, deploy ready. But hold on, what’s really happening under the hood?

Zoom out. Coding agents. They’re exploding right now, transforming raw LLMs into tireless coding partners. And here’s the electric truth: it’s not the models alone driving this shift. Nope. It’s the components of a coding agent—tools, memory, repo context—that make LLMs shine in the trenches of real software work.

Think of it like this: an LLM is a brilliant brain, churning next-tokens like a poet on caffeine. But slap on a coding agent harness? Suddenly, it’s got eyes on your entire codebase, hands to run tests, and a notebook for every pivot. We’re talking platform-level change, folks—like when GUIs freed us from command lines, or browsers turned the web into a playground.

Why Coding Agents Feel Like Magic (When Plain LLMs Don’t)

You’ve chatted with GPT or Claude, right? Solid for snippets. But hand it a full project? It forgets the file structure. Hallucinates imports. Loses the plot after three edits.

Coding agents fix that. They wrap the LLM in a coding harness: a smart scaffold handling the grunt work. Repo navigation. Tool calls. Diffs applied cleanly, with failures caught and retried.

“In many real-world applications, the surrounding system, such as tool use, context management, and memory, plays as much of a role as the model itself.”

That’s from the deep dive sparking this piece. Spot on. Progress isn’t just bigger models—it’s these harnesses making Claude Code or Codex CLI feel superhuman.

And my hot take? This mirrors the IDE revolution of the ’90s. Remember vi? Emacs wars? Then boom—Visual Studio, Eclipse. Autocomplete, debuggers, context-aware everything. Coding agents? They’re IDE 2.0, but alive, adaptive, predicting your next commit.

Short version: LLMs generate. Reasoning models think harder. Agents loop it all with tools and feedback.

But coding agents? Specialized. They grok repos like a senior dev who’s lived in your code for years.

The Six Core Components of a Coding Agent

Let’s crack ‘em open. No fluff.

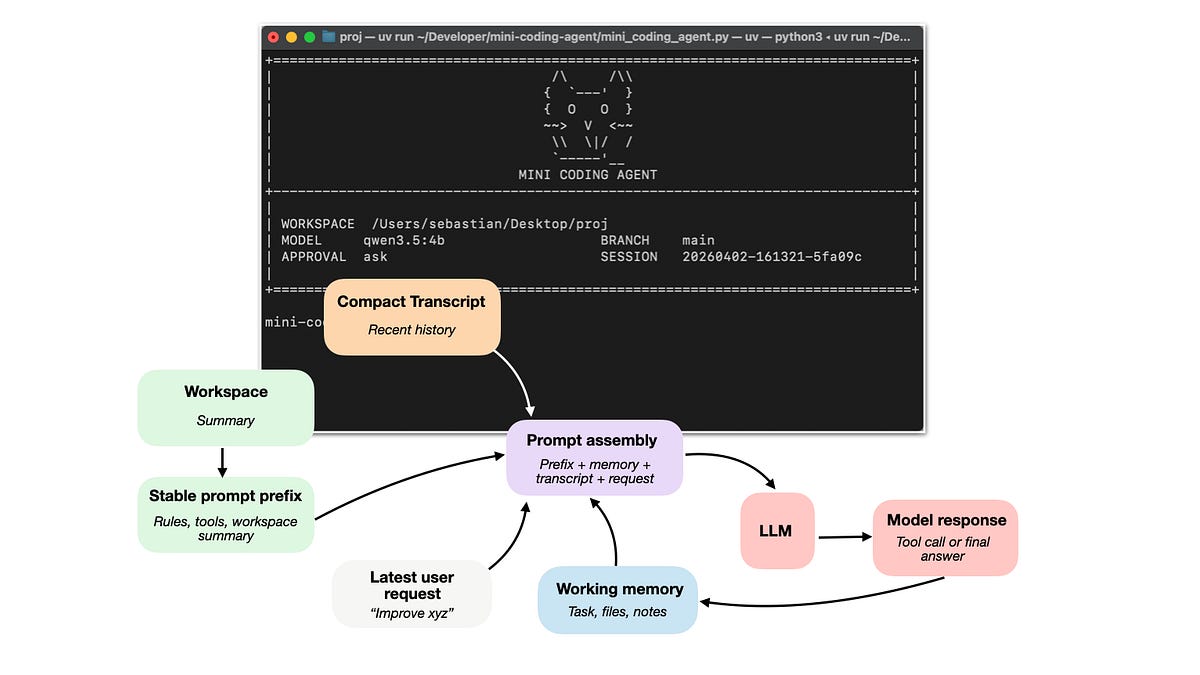

First: Repo Context. Agents don’t guess—they map your entire project. Files, deps, structure. Like giving the LLM X-ray vision into your codebase, pulling just what’s needed into prompts. No more “where’s that function?”
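Here's roughly what "mapping the project" can look like. This is a minimal sketch, not how any particular agent does it: `build_repo_map` and `IGNORED_DIRS` are hypothetical names, and the assumption is that a sorted file listing with sizes is a useful summary to drop into a prompt.

```python
import os
from pathlib import Path

# Hypothetical ignore list; a real harness would likely also respect .gitignore.
IGNORED_DIRS = {".git", "node_modules", "__pycache__", ".venv"}


def build_repo_map(root: str, max_files: int = 200) -> str:
    """Return a compact listing of files (with sizes) suitable for a prompt."""
    lines = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune ignored directories in place so os.walk never descends into them.
        dirnames[:] = sorted(d for d in dirnames if d not in IGNORED_DIRS)
        for name in sorted(filenames):
            path = Path(dirpath) / name
            rel = path.relative_to(root).as_posix()
            lines.append(f"{rel} ({path.stat().st_size} bytes)")
            if len(lines) >= max_files:
                # Cap the listing so a huge repo cannot flood the prompt.
                return "\n".join(lines)
    return "\n".join(lines)
```

Calling `build_repo_map(".")` from a project root gives the model a cheap table of contents; the harness can then read specific files on demand instead of stuffing everything into context.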

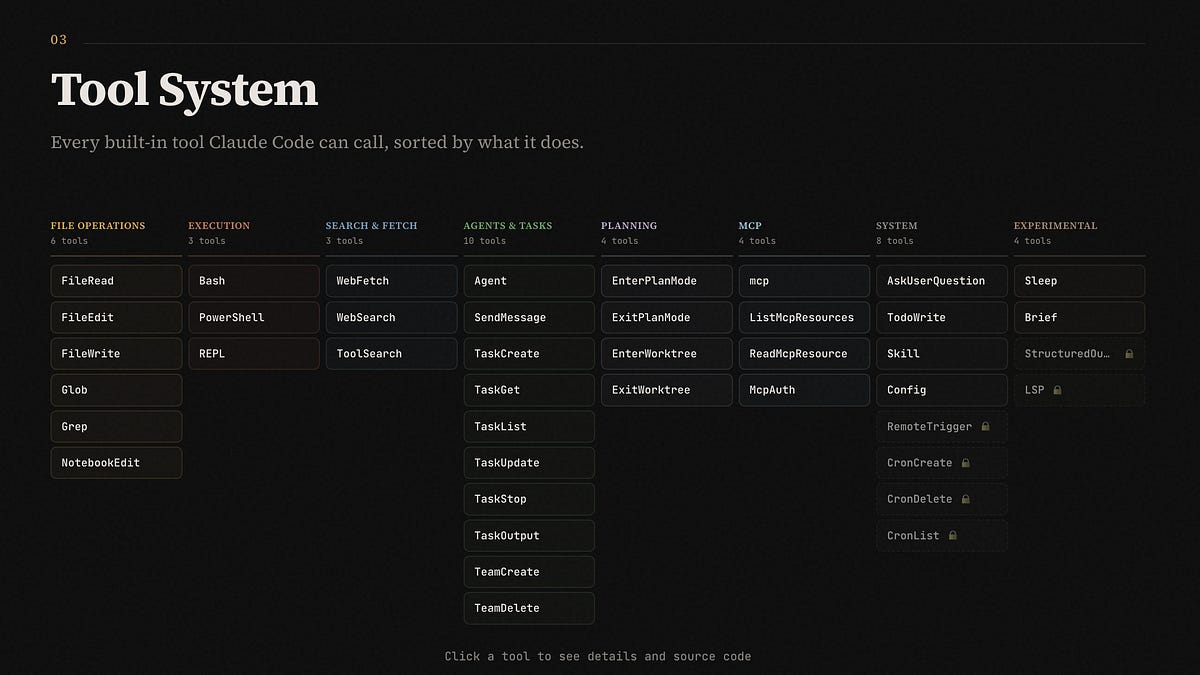

Tools next. Shell access. Git diffs. Test runners. The agent calls ‘em surgically—runs a failing test, inspects the error, iterates. It’s a conversation with your machine, mediated by AI.
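A tool call, stripped down, is just "run a command, hand the output back to the model." The sketch below assumes Python's standard `subprocess` module and a project that uses pytest; `ToolResult`, `run_command`, and `run_pytest` are hypothetical names for illustration.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class ToolResult:
    exit_code: int
    output: str


def run_command(argv: list[str], timeout_s: float = 60.0,
                max_chars: int = 4000) -> ToolResult:
    """Run a command without a shell; return its exit code and truncated output."""
    try:
        proc = subprocess.run(argv, capture_output=True, text=True,
                              timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return ToolResult(exit_code=-1, output=f"timed out after {timeout_s}s")
    combined = proc.stdout + proc.stderr
    # Keep the tail: test runners usually print the failure summary last.
    return ToolResult(proc.returncode, combined[-max_chars:])


def run_pytest(path: str = ".") -> ToolResult:
    """Assumes pytest is installed in the current interpreter's environment."""
    return run_command([sys.executable, "-m", "pytest", "-q", path])
```

The truncation matters more than it looks: a noisy test log can eat the context window, so the harness decides how much of the output the model actually sees.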

Memory seals it. Short-term: chat history, recent changes. Long-term: project notes, user prefs. Without this, every session resets—like coding with amnesia.
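To make the short-term versus long-term split concrete, here's a small sketch. It assumes long-term notes live in a JSON file on disk and short-term history is a bounded in-memory queue; the `AgentMemory` class and its method names are hypothetical, not any product's API.

```python
import json
from collections import deque
from pathlib import Path


class AgentMemory:
    """Short-term turns in a bounded deque, long-term notes in a JSON file."""

    def __init__(self, notes_path: str, max_turns: int = 20):
        self.turns = deque(maxlen=max_turns)  # oldest turns fall off automatically
        self.notes_path = Path(notes_path)
        if self.notes_path.exists():
            self.notes = json.loads(self.notes_path.read_text())
        else:
            self.notes = []

    def add_turn(self, role: str, text: str) -> None:
        self.turns.append({"role": role, "text": text})

    def remember(self, note: str) -> None:
        # Persist immediately so the note survives the end of the session.
        self.notes.append(note)
        self.notes_path.write_text(json.dumps(self.notes, indent=2))

    def as_context(self) -> str:
        notes = "\n".join(f"- {n}" for n in self.notes)
        history = "\n".join(f"{t['role']}: {t['text']}" for t in self.turns)
        return f"Project notes:\n{notes}\n\nRecent turns:\n{history}"
```

A new session that constructs `AgentMemory` with the same path starts with the old notes already loaded, which is the whole point: no amnesia.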

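Then prompt stability. The harness keeps the front of the prompt (system instructions, tool definitions, unchanged code) byte-for-byte identical between turns, so prompt caching can reuse it and tokens don’t burn on boilerplate. Smart.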
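One way to picture this: keep everything that rarely changes at the front of the prompt, in a deterministic order, and append only the new turns at the end. The sketch below is an illustration under that assumption; `build_prompt` and `prefix_fingerprint` are hypothetical helpers, and whether a cache actually hits depends on the provider.

```python
import hashlib


def build_prompt(system: str, tool_specs: list[str], repo_summary: str,
                 turns: list[str]) -> tuple[str, str]:
    """Split a prompt into (stable_prefix, volatile_suffix)."""
    # Sort tool specs so registration order cannot change the prefix bytes.
    prefix = "\n\n".join([system, "\n".join(sorted(tool_specs)), repo_summary])
    suffix = "\n".join(turns)
    return prefix, suffix


def prefix_fingerprint(prefix: str) -> str:
    """Short hash for checking that the prefix stayed identical across turns."""
    return hashlib.sha256(prefix.encode("utf-8")).hexdigest()[:12]
```

If the fingerprint changes between two turns, something volatile (a timestamp, a reordered tool list) leaked into the prefix, and any prefix-based cache will likely miss.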

Control flow: When to edit, test, commit? The harness decides, looping until done.

Permissions and safety: Sandboxed exec, no rm -rf disasters.
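A first line of defense can be as simple as checking a proposed command against an allowlist before anything runs. To be clear, the sketch below is a policy check, not a sandbox, and the lists and the `check_command` name are assumptions made up for illustration.

```python
import shlex

# Hypothetical policy: which programs the agent may run without asking.
ALLOWED_PROGRAMS = {"pytest", "git", "ls", "cat"}
# Hypothetical policy: git subcommands that always need a human's approval.
GIT_NEEDS_APPROVAL = {"push", "reset", "clean"}


def check_command(command: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a command string proposed by the model."""
    try:
        argv = shlex.split(command)
    except ValueError as exc:  # e.g. an unbalanced quote
        return False, f"unparseable command: {exc}"
    if not argv:
        return False, "empty command"
    if argv[0] not in ALLOWED_PROGRAMS:
        return False, f"{argv[0]!r} is not on the allowlist"
    if argv[0] == "git" and len(argv) > 1 and argv[1] in GIT_NEEDS_APPROVAL:
        return False, f"git {argv[1]} requires explicit approval"
    return True, "ok"
```

This only holds up if the harness then executes the parsed argument list directly, with no shell in between; otherwise something like `ls && rm -rf /` would pass the check on its first word and still do damage.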

Together? A flywheel. Model proposes. Tools validate. Memory evolves. Repeat.

Imagine strapping rocket boosters to a bicycle. That’s the harness on an LLM.

Coding Harness vs. Agent Harness: What’s the Diff?

Agent harness: General-purpose loop for any task. Tools, state, decisions.

Coding harness: Niche beast for software. Adds file tracking, edit application, live REPL.
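"Edit application" is a good example of what the coding flavor adds. One common-sense approach, sketched here as an assumption rather than any tool's documented behavior, is an exact-match replace that refuses to act when the target snippet is missing or ambiguous. `apply_edit` is a hypothetical helper.

```python
def apply_edit(text: str, old: str, new: str) -> str:
    """Replace exactly one occurrence of `old` with `new`, or raise ValueError."""
    if not old:
        raise ValueError("old snippet must not be empty")
    count = text.count(old)
    if count == 0:
        raise ValueError("old snippet not found; the file may have changed")
    if count > 1:
        raise ValueError(f"old snippet matches {count} places; add more context")
    return text.replace(old, new, 1)
```

The errors are the useful part: they go back to the model as an observation, so it can re-read the file or quote a longer snippet instead of silently editing the wrong spot.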

Claude Code? Pure coding harness. Codex CLI? Same vibe. They turn reasoning models—like o1-preview—into code-crushing machines.

Without? Your LLM’s stuck in chat purgatory. With? It handles the “hard mental work”: search, diffs, errors. (Coders, you feel that?)

Bold prediction: In two years, 80% of dev time shifts to high-level architecture. Agents own the boilerplate, bugs, refactors. We’re entering the composer era—conducting symphonies of code.

Will Coding Agents Replace Human Coders?

Nah. Augment? Hell yes.

They stumble on novel architectures, deep domain quirks. But for implementation? Unmatched speed.

Picture teams: Humans dream big. Agents build fast. Interruptions plummet, because who interrupts a 24/7 dev bot?

A skeptical read on the corporate messaging? Vendors hype models and downplay harnesses. Why? It’s easier to sell APIs than open-source scaffolds. The true power is in the stack, not just the engine.

How Do Coding Agents Actually Use Tools and Memory?

Tools: Dynamic calls. “Run pytest.” Agent observes output, reasons next.

Memory: Evolves. “Remember, we fixed auth last sprint.” Context grows selectively, not indiscriminately.

Repo context: Search over files, whether plain text search or an embedding index. Pulls relevant chunks, no token waste.
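To show the "pull relevant chunks" idea without dragging in an embedding model, this sketch swaps in plain keyword overlap as the relevance score. That is a deliberate simplification, not vector search, and the function names and the 20-line chunk size are assumptions.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercased identifier-like tokens."""
    return set(re.findall(r"[a-z_][a-z0-9_]*", text.lower()))


def chunk_lines(source: str, size: int = 20) -> list[str]:
    """Split a file into consecutive chunks of `size` lines."""
    lines = source.splitlines()
    return ["\n".join(lines[i:i + size]) for i in range(0, len(lines), size)]


def top_chunks(files: dict[str, str], query: str,
               k: int = 3) -> list[tuple[str, str]]:
    """Return up to k (path, chunk) pairs sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = []
    for path, source in files.items():
        for index, chunk in enumerate(chunk_lines(source)):
            score = len(query_tokens & tokenize(chunk))
            if score:
                scored.append((score, path, index, chunk))
    # Highest score first; ties broken by path and position for determinism.
    scored.sort(key=lambda item: (-item[0], item[1], item[2]))
    return [(path, chunk) for _, path, _, chunk in scored[:k]]
```

Swapping the overlap score for cosine similarity over embeddings would turn this into the vector variant; the surrounding shape (chunk, score, take the top k) stays the same.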

Loop: Plan → Act → Observe → Reflect. Until goal met.
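That loop fits in a few lines once the model is abstracted away. In this sketch, `decide` stands in for the LLM call and `tools` is a plain dictionary of callables; both, along with the action format (`{"tool": ..., "input": ...}`), are assumptions for illustration rather than any real agent's interface.

```python
from typing import Callable

Action = dict[str, str]  # assumed shape: {"tool": name, "input": argument}


def run_agent(goal: str,
              decide: Callable[[str, list[str]], Action],
              tools: dict[str, Callable[[str], str]],
              max_steps: int = 10) -> list[str]:
    """Loop plan -> act -> observe until `decide` says done or steps run out."""
    observations: list[str] = []
    for _ in range(max_steps):
        # Plan (and reflect): the model sees the goal plus everything observed.
        action = decide(goal, observations)
        if action["tool"] == "done":
            break
        tool = tools.get(action["tool"])
        if tool is None:
            result = f"unknown tool: {action['tool']}"
        else:
            result = tool(action["input"])  # Act
        # Observe: record the result so the next decision can use it.
        observations.append(f"{action['tool']}({action['input']}) -> {result}")
    return observations
```

The `max_steps` cap is the unglamorous bit that keeps a confused model from looping forever, and "reflect" here simply means the next `decide` call gets the full observation log.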

Users rave—Claude Code feels “10x” because it persists sessions, tracks state across hours.

We’re witnessing AI’s OS layer emerge. Agents as the new runtime.

Here’s the wonder: This isn’t incremental. It’s foundational. Like TCP/IP for networks, coding agents standardize AI dev workflows.

Grab one. Tinker. Your next project? Agent-powered.

Frequently Asked Questions

What are the main components of a coding agent?

Tools for execution, memory for persistence, and repo context for navigation, plus a harness that handles control flow, prompt caching, and safety.

How do coding agents improve LLMs for coding?

By offloading context management, tool use, and iteration—making raw models feel supercharged.

Are coding agents ready for production codebases?

Yes, with safeguards. Tools like Claude Code handle real repos today, but pair with human oversight for complexity.
