OpenAI hits ‘launch’ on GPT-5.2. Benchmarks explode: top scores on GPQA-Diamond, AIME 2025, Tau2 Telecom. The crowd cheers. But peek under the hood—NVIDIA Hopper GPUs and GB200 NVL72 racks did the heavy lifting, every tensor, every epoch.

Zoom out. This isn’t a one-off. GPT-5.3 Codex, the agent that codes its own upgrades, trained entirely on those same GB200 beasts. OpenAI calls it their ‘most capable series yet’ for pro knowledge work. Fine. But why does every frontier model—from OpenAI to Runway’s video wizardry—lean so hard on NVIDIA?

The Scale Trap: Why Frontier AI Demands NVIDIA’s Stack

Pretraining. Post-training. Test-time scaling. Those are the three scaling laws pushing models toward AGI dreams. But they don’t run on wishes. They chew through tens of thousands of GPUs, synced via bleeding-edge networking.

NVIDIA’s secret? Not just chips. It’s the full stack: accelerators, NVLink for scale-up, InfiniBand for scale-out, and software that doesn’t choke at hyperscale. Hopper to Blackwell? That’s 3x faster training on MLPerf’s biggest tests and 2x better performance per dollar. GB300 NVL72? More than 4x faster than Hopper.
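To put those multipliers in perspective, here is a back-of-envelope sketch. The baseline (a 90-day, $60M Hopper run) is invented for illustration, and the function name `project_training_run` is hypothetical. It assumes the 3x speedup and the 2x cost-efficiency gain carry over cleanly to an entire run, which real clusters rarely manage.

```python
def project_training_run(baseline_days, baseline_cost_usd, speedup, cost_efficiency_gain):
    """Scale a baseline training run by a speedup and a cost-efficiency multiplier.

    Assumption: both multipliers apply uniformly to the whole run
    (same model, same data, no extra communication overhead).
    """
    if speedup <= 0 or cost_efficiency_gain <= 0:
        raise ValueError("multipliers must be positive")
    return {
        "days": baseline_days / speedup,
        "cost_usd": baseline_cost_usd / cost_efficiency_gain,
    }


# Hypothetical Hopper baseline: 90 days, $60M. Not a real figure.
blackwell = project_training_run(
    baseline_days=90,
    baseline_cost_usd=60_000_000,
    speedup=3.0,               # the 3x training speedup cited above
    cost_efficiency_gain=2.0,  # the 2x cost-efficiency gain cited above
)
print(blackwell)
```

Under those assumptions the same run takes a third of the wall-clock time at half the bill. Swap in your own baseline; the multipliers are the only numbers that come from the article.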

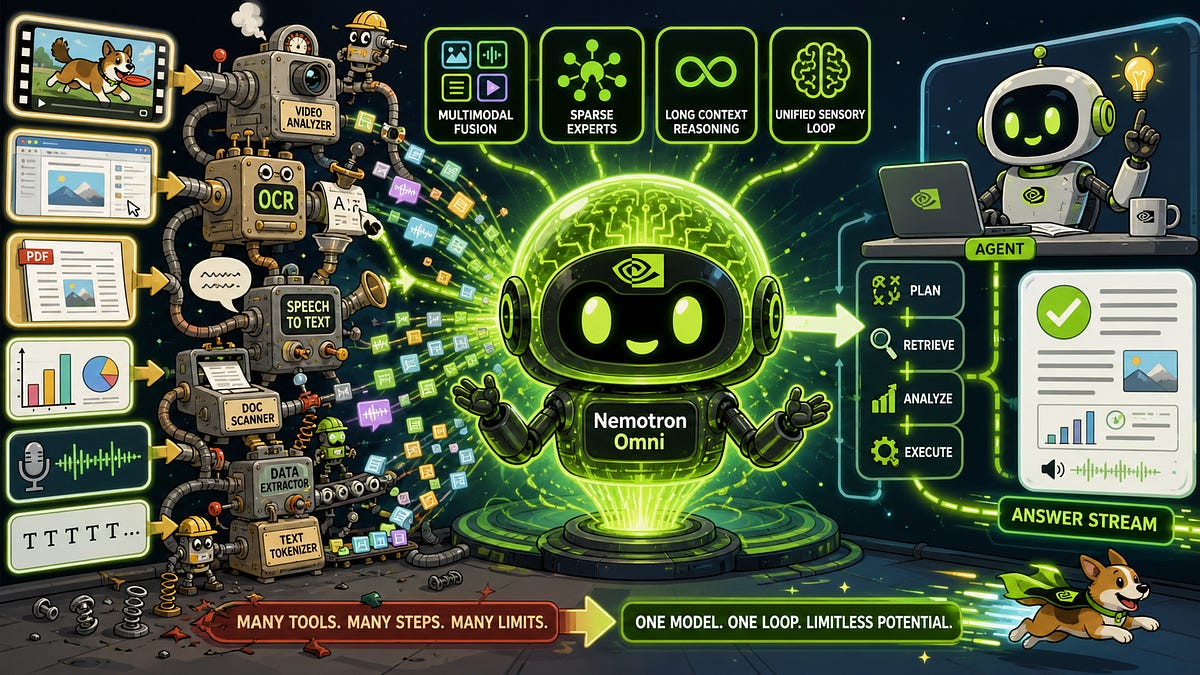

Here’s the thing: rivals like AMD or custom silicon talk big, but nobody matches this across every modality. Text? Check. Video via Runway Gen-4.5? Optimized for Blackwell. Biology with Evo 2 decoding genes or OpenFold3 folding proteins? Check. Healthcare? NVIDIA Clara generates synthetic medical images without exposing patient data.

And that quote from the announcement seals it:

“GPT-5.2 achieves the top reported score for industry benchmarks like GPQA-Diamond, AIME 2025 and Tau2 Telecom. On leading benchmarks targeting the skills required to develop AGI, like ARC-AGI-2, GPT-5.2 sets a new bar for state-of-the-art performance.”

State-of-the-art, sure. NVIDIA-powered.

Why Can’t AI Labs Just Build Their Own GPUs?

Look, it’s tempting. Build your own iron, cut out the middleman. Meta tried with MTIA. Google has TPUs. But scale hits a wall without the ecosystem. CUDA’s the moat: nearly two decades of developer mindshare, libraries tuned for every trick.

Blackwell’s rolling out everywhere: AWS, CoreWeave, Azure, even neo-clouds like Lambda. NVIDIA Blackwell Ultra? Extra compute, memory tweaks, hitting servers now. Labs like Black Forest, Cohere, Mistral—they’re all in.

My unique angle: This echoes Intel’s x86 stranglehold in the PC era. Remember how Windows locked it in? NVIDIA’s CUDA is AI’s Windows 95—de facto standard, developer comfort zone. Bold prediction: By 2027, 90% of frontier pretraining flops will route through NVIDIA, even as ASICs nibble edges. But here’s the skepticism—it’s a single point of failure. Supply crunches? Geopolitical chip wars? One Blackwell fab delay ripples to every GPT.

Short answer.

Nobody’s escaped yet.

Multimodal Mayhem: From Code to Clips

AI’s not text-only anymore. Runway’s GWM-1 simulates worlds in real-time—games, robots, edutainment—trained on Blackwell. Inworld for characters. Boltz-2 for drugs.

MLPerf 5.1? NVIDIA swept all seven training benchmarks. Only vendor to submit everywhere. Efficient data centers love it—diverse workloads mean fuller utilization, less idle silicon.

But hype alert. OpenAI’s ‘agentic coding’ in GPT-5.3 Codex? 25% faster, highs on SWE-Bench Pro, Terminal-Bench. Impressive. Yet it’s combining GPT-5.2’s coder brain with reasoner guts—iterative, not revolutionary. Corporate spin calls it ‘self-building.’ Cute, but it’s stacked prior art on better hardware.

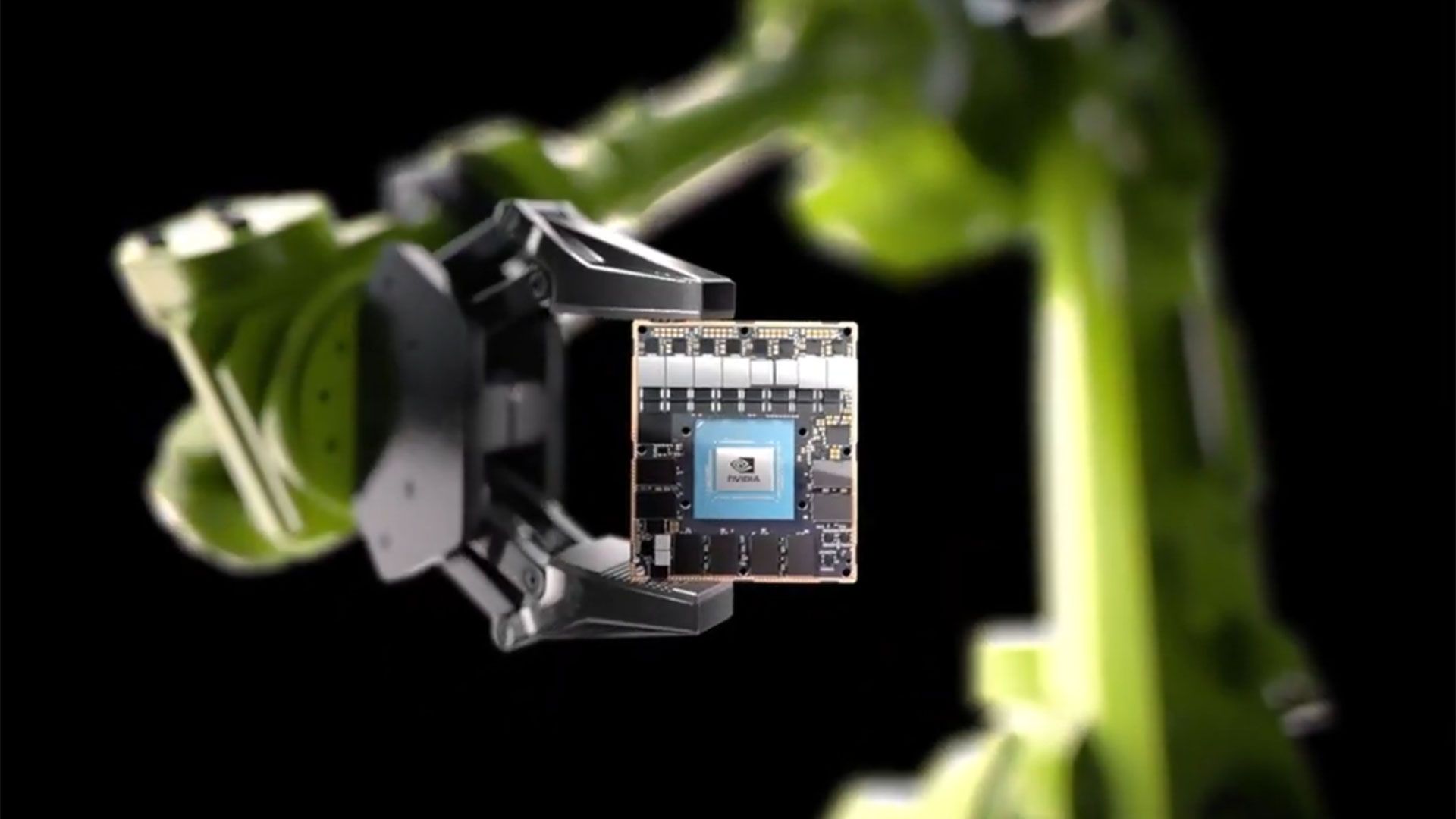

Wander a bit: Imagine robotics. NVIDIA’s stack powers sim-to-real transfers, where models like those from Thinking Machines Lab learn physics in silico. Why? Because scale lets you brute-force the chaos of real-world data.

The Dependency Dilemma

Clouds are flooded: Nebius, Oracle OCI, Together AI—all Blackwell instances live. Server makers crank GB200 racks.

Architectural shift? Yeah. Pre-2020, CPUs faked it with parallelism hacks. Now, AI factories are GPU cathedrals—liquid-cooled, NVLink’d racks humming at exaflop scales.

Critique time. NVIDIA’s PR gushes ‘full-stack AI infrastructure.’ Translation: We’re indispensable. True, but it masks the lock-in tax. Labs pay premiums, waitlists stretch months. OpenAI’s Sam Altman whispers about fab investments—hinting at cracks.

Still, proof’s in the models. The GPT-5 series, Runway’s top-ranked video model on Artificial Analysis: all NVIDIA, from R&D to inference.

Punchy truth.

Hardware wins wars.

Is NVIDIA’s Blackwell the AI Training Endgame?

Not quite. The Ultra variant promises more. But quantum leaps? H100 to B200 was evolutionary: bigger memory, faster interconnects. The ‘how’: transformer models ballooned in parameter count, and reasoning models now chain many inference steps per answer, so demand shifts toward inference-time compute.
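How much extra compute does a reasoning chain cost? A rough sketch, using the common rule of thumb that a dense transformer spends about 2 FLOPs per parameter per token on a forward pass. That rule ignores attention and memory costs, and the model size and token counts below are hypothetical, not figures for any GPT model.

```python
def forward_flops(params, tokens):
    """Approximate forward-pass FLOPs for a dense transformer.

    Rule of thumb (an approximation): ~2 FLOPs per parameter per token.
    """
    return 2 * params * tokens


PARAMS = 100_000_000_000   # hypothetical 100B-parameter model
ANSWER_TOKENS = 500        # hypothetical direct answer
REASONING_TOKENS = 9_500   # hypothetical hidden reasoning before the answer

direct = forward_flops(PARAMS, ANSWER_TOKENS)
with_reasoning = forward_flops(PARAMS, ANSWER_TOKENS + REASONING_TOKENS)

# Compute per query scales linearly with the number of tokens generated.
ratio = with_reasoning / direct
print(f"direct: {direct:.2e} FLOPs, with reasoning: {with_reasoning:.2e} FLOPs, ratio: {ratio:.0f}x")
```

With these made-up numbers, one reasoning-heavy query costs as much as 20 direct ones. Multiply that by every user and the appetite for inference hardware starts to look like the appetite for training hardware.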

Why it sticks: Software maturity. TensorRT-LLM, NeMo—optimized for these behemoths. Competitors lag.

Historical parallel (my insight): Like Standard Oil refining kerosene when everyone chased whales, NVIDIA refined CUDA when others chased raw FLOPS. Now they own the refinery.

Risk? Overreliance breeds complacency. If a Grace Hopper successor flops—or TSMC hiccups—AI stalls.

Deep breath.

That’s the architecture.

Frequently Asked Questions

What powers OpenAI’s GPT-5 models?

NVIDIA Hopper GPUs and GB200 NVL72 systems. They handle training and deployment for GPT-5.2, and GPT-5.3 Codex was trained entirely on GB200. GPT-5.2 posts the top reported scores on GPQA-Diamond, AIME 2025 and Tau2 Telecom, and sets a new bar on ARC-AGI-2.

Why do AI labs use NVIDIA Blackwell?

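Blackwell trains about 3x faster than Hopper on MLPerf’s biggest tests, and GB300 NVL72 pushes that past 4x. Add full-stack software and support for text, video and biology workloads. In MLPerf 5.1, NVIDIA swept all seven training benchmarks and was the only vendor to submit on every one.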

Can companies escape NVIDIA dependency?

Not easily. The CUDA ecosystem and scale networking create a moat that alternatives like TPUs and MTIA haven’t broken. The prediction above: 90% of frontier pretraining FLOPs will still route through NVIDIA by 2027.