Everyone figured the AI showdown would ramp up — Apple hoarding chips, Google churning models, Microsoft buying everything in sight. More cutthroat rivalry. But nope. Project Glasswing flips the script: these same behemoths are linking arms with Anthropic to defend the world’s creakiest software from AI-fueled hacks.

That’s right. A coalition. In tech.

Project Glasswing: Hype or Holy Grail?

Look, when Apple, Google, Microsoft, Amazon, Cisco, and the rest announce they’re pooling resources — $4 million cash plus $100 million in AI credits — you smell something rotten. Or existential. Pick your poison.

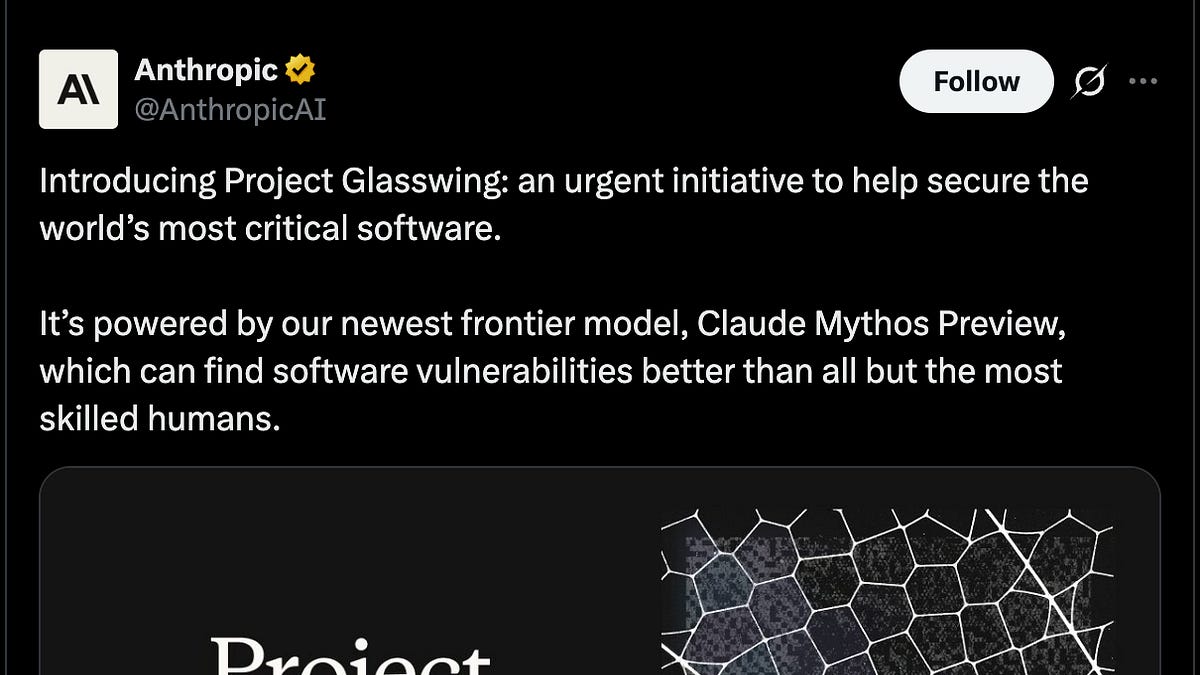

These aren’t chums. They’re sharks circling the same bloody waters. Yet here they are, handing over codebases to Anthropic’s unreleased Claude Mythos Preview, a beast of an AI not tuned for security but sniffing out thousands of bugs anyway. The announcement drips with drama: cyberattack timelines shrunk from months to minutes.

And here’s CrowdStrike’s CTO, Elia Zaitsev, laying it bare:

“The window between a vulnerability being discovered and being exploited by an adversary has collapsed. What once took months now happens in minutes with AI.”

CrowdStrike. You know, the folks who blue-screened the planet in July 2024. If they’re spooked, maybe we should be too.

But wait — is this AI’s Manhattan Project, as some hype it? Nah. Too tidy. The original built bombs in secret; this is a publicity blitz. Still, the scale? Terrifying. Encouraging? Sure, if you buy the unity schtick.

Here’s an angle the press releases skip: this reeks of 1980s arms control treaties. Remember Reagan and Gorbachev? Bitter Cold War foes signing deals to cap nukes, because one slip meant Armageddon. Project Glasswing’s the same: rivals sharing intel on Mythos because solo efforts won’t cut it against state hackers wielding the same AI tools.

Why Did Cyber Threats Force This Tech Truce?

Start with the obvious. AI’s dual-use nightmare. Anthropic admits Mythos wasn’t built for bug-hunting — it’s a generalist with killer coding chops. Dump critical software (Linux kernels? Banking systems?) into it, and boom: vulnerabilities pour out. Thousands, they say.

Terrifying, yeah? Because adversaries — think China, Russia, or that basement troll with a grudge — get the same tech. Open-source models democratize destruction. Exploit windows? Zaitsev’s right; they’re gone.

So these companies fork over their crown jewels. Apple guards its IP like Fort Knox. Microsoft does the same. Their cooperating screams ‘shared infrastructure at risk.’ Cloud providers, OS giants: one mega-breach tanks everyone.

It’s like rival mob bosses teaming up after realizing the Feds have nukes. Mutually assured destruction, baby.

Skeptical take? Smells like a cartel. Lock out startups, control the narrative. But nah, the statements ring true. Anthropic’s not releasing Mythos publicly (smart, lest it arm the baddies). Instead, credits flow to partners for secure scans.

Wild.

Is Anthropic’s Mythos Preview Safe Enough for This?

Anthropic calls it a ‘frontier model’ with agentic skills — think AI that codes autonomously, reasons deep. Not for public eyes. Good call.

They tested it on ‘critical software’ — unnamed, natch. Results? Piles of zero-days. The kind that could unravel supply chains, cripple grids.

But what if Mythos misses something? Or worse, hallucinates fixes that backfire? Frontier models are flaky divas. We’ve seen it: ChatGPT suggesting ransomware tricks, unprompted.

My prediction: this accelerates AI silos. Open-source dreams? Dead. Big Tech hoards safety tools, spins ‘responsible AI’ while squeezing competitors. Linux Foundation’s in? Even they’re bending.

JPMorganChase adds finance flavor — banks know breaches cost billions. Nvidia? Chips power it all. Broadcom, Palo Alto: hardware and firewalls.

It’s a who’s who of vulnerability. And they’re scared.

Finally.

What Happens When AI Eats Cybersecurity Whole?

Picture this sprawl: Mythos chews through codebases, flags flaws, suggests patches. Partners deploy in their labs — no sharing exploits publicly, promise. Race to fix before nation-states pounce.

Changes everything. Devs? Less coding blind. Infra pros get god-mode scanners. But users? Still screwed if a bug slips through unpatched.

Corporate spin? Thick. ‘Coalition of the willing’ — please. It’s self-preservation dressed as altruism. Glasswing butterfly nod? Camo and strength. Cute, but butterflies don’t run data centers.

Critics might cry ‘security theater,’ a photo-op ahead of AI regulation. I get it: post-CrowdStrike, everyone’s jumpy. Yet handing over $100M in credits? That’s skin in the game. Symantec vets nod: real.

Remember Log4Shell? One lib, global panic. AI scales that to everything. Timelines collapse. Minutes to exploit means preemptive AI hunts or bust.

Why Does Project Glasswing Matter for You?

Not just tech bros. Your bank’s app, power grid, hospital records — all ‘critical software.’ One AI-discovered hole, exploited fast? Chaos.

Encouraging twist: competition yields to survival. If they pull it off, fewer breaches. My critique? PR glosses the weaponization risk. Mythos could flip offensive tomorrow.

Trust, but verify.

We’ve profiled threats for decades: FBI councils, NDU lectures. This is a paradigm shift, sure. But unity’s fragile. One leaker, one defector, and poof, the cartel crumbles. Still, better than solo suicides.

Frequently Asked Questions

What is Project Glasswing?

Tech giants including Apple, Google, and Microsoft are teaming up with Anthropic to use its Claude Mythos Preview model to find bugs in critical software before hackers do.

Will Anthropic release Claude Mythos publicly?

No plans. Too risky for weaponization, per Anthropic.

Does Project Glasswing fix all cybersecurity problems?

Nope, just buys time against AI-speed exploits. More work needed.