Late night in a San Francisco co-working space. A screen flickers as AI agents iterate on a sleek dashboard UI, one building, another critiquing the work, handing off refined code. Four hours in, still grinding.

That’s the scene Anthropic’s three-agent harness conjures up, a setup designed to tame long-running full-stack AI development. Forget the hype around solo super-agents that promise the world, then crash on context overload. This one’s surgical: planner, generator, evaluator, each with a laser-focused role, passing structured handoffs like batons in a relay race.

Here’s the thing. AI agents suck at marathons. They hit context limits, forget what they were doing, or just hallucinate their way to a halt. Engineers at Anthropic Labs spotted this early. Instead of stretching one ever-growing context (hello, compaction’s pitfalls), they reset cleanly. Each agent starts with a fresh context window and picks up where the last one left off from durable artifacts: JSON feature specs, commit logs, an init script. No amnesia. Progress sticks.
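To make the reset-and-handoff idea concrete, here is a minimal Python sketch of how a fresh session might be briefed from durable artifacts instead of a compacted transcript. The `Handoff` class, the JSON field names (`project`, `features`, `passes`), `build_session_brief`, and `./init.sh` are all hypothetical, not Anthropic's actual formats.

```python
import json
from dataclasses import dataclass


# Hypothetical handoff bundle; field names are illustrative assumptions,
# not Anthropic's actual artifact formats.
@dataclass
class Handoff:
    spec_json: str         # JSON feature spec written by the planner
    commit_log: list[str]  # commit messages, newest first
    init_script: str       # command that boots a working app


def build_session_brief(handoff: Handoff, max_commits: int = 3) -> str:
    """Build the opening context for a brand-new agent session.

    Nothing is carried over from the previous context window; the new
    session learns the project state only from durable artifacts.
    """
    spec = json.loads(handoff.spec_json)
    pending = [f["name"] for f in spec["features"] if not f["passes"]]
    lines = [
        f"Project: {spec['project']}",
        f"First, run: {handoff.init_script}",
        "Recent commits:",
        *[f"  - {msg}" for msg in handoff.commit_log[:max_commits]],
        "Pending features:",
        *[f"  - {name}" for name in pending],
    ]
    return "\n".join(lines)


handoff = Handoff(
    spec_json=json.dumps({
        "project": "dashboard",
        "features": [
            {"name": "login form", "passes": True},
            {"name": "chart panel", "passes": False},
        ],
    }),
    commit_log=["Add login form", "Scaffold app", "Initial commit", "Add README"],
    init_script="./init.sh",
)
brief = build_session_brief(handoff)
print(brief)
```

The brief is small and rebuilt from scratch every session, so it never bloats the way an accumulating transcript does.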

How Does Anthropic’s Three-Agent Harness Actually Work?

Break it down. The planner maps the big picture, turning user stories into tasks and milestones. The generator builds: code, designs, whatever. The evaluator? That’s the no-BS judge. Calibrated on few-shot examples, it scores work against a rubric: design quality (visual pop), originality (no cookie-cutter crap), craft (pixel-perfect), and functionality (does it click?).
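One way to picture the evaluator's rubric is as a weighted score over the four criteria. This is a sketch under assumptions: the 1-10 scale, the weights, and the names `RUBRIC_WEIGHTS`, `Critique`, and `weighted_score` are illustrative, since the harness's actual scoring scheme isn't spelled out here.

```python
from dataclasses import dataclass

# Hypothetical weights: the four criteria come from the rubric above,
# but how they are combined is an assumption for illustration.
RUBRIC_WEIGHTS = {
    "design_quality": 0.3,
    "originality": 0.2,
    "craft": 0.2,
    "functionality": 0.3,
}


@dataclass
class Critique:
    scores: dict[str, int]  # criterion -> score on an assumed 1-10 scale
    notes: list[str]        # actionable feedback passed back to the generator


def weighted_score(critique: Critique) -> float:
    """Combine per-criterion scores into one number; reject missing criteria."""
    missing = RUBRIC_WEIGHTS.keys() - critique.scores.keys()
    if missing:
        raise ValueError(f"evaluator skipped criteria: {sorted(missing)}")
    total = sum(RUBRIC_WEIGHTS[c] * critique.scores[c] for c in RUBRIC_WEIGHTS)
    return round(total, 2)


critique = Critique(
    scores={"design_quality": 6, "originality": 4, "craft": 8, "functionality": 9},
    notes=["Too bland here, amp the contrast", "Navbar overlaps on mobile"],
)
print(weighted_score(critique))
```

Rejecting a critique with missing criteria is a design choice: it keeps the evaluator from quietly skipping the dimension it finds hardest to judge.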

For frontend work, it even drives a live browser through Playwright MCP: clicking buttons, scrolling, sniffing out bugs as they happen. Then it writes a critique: “Too bland here, amp the contrast; navbar overlaps on mobile.” The generator iterates. Five to fifteen cycles, up to four hours. Boom: functional, distinctive apps.
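The generate-critique cycle can be sketched as a plain loop. Assumptions: `refine`, its default threshold of 8.0, and the toy agents are hypothetical; real agents would wrap model calls, and the evaluator would drive a browser via Playwright rather than return canned scores. The 15-cycle cap mirrors the upper bound mentioned above.

```python
from typing import Callable

# Hypothetical agent interfaces. Real agents would wrap model calls, and the
# evaluator would inspect the running app (e.g. through a browser driver).
Generator = Callable[[str, list[str]], str]           # (spec, feedback) -> artifact
Evaluator = Callable[[str], tuple[float, list[str]]]  # artifact -> (score, notes)


def refine(spec: str, generate: Generator, evaluate: Evaluator,
           threshold: float = 8.0, max_cycles: int = 15) -> tuple[str, float, int]:
    """Alternate generator and evaluator until the score clears the bar.

    The evaluator only sees the artifact, never the generator's reasoning.
    Returns (best artifact, its score, cycles used).
    """
    feedback: list[str] = []
    best_artifact, best_score = "", float("-inf")
    for cycle in range(1, max_cycles + 1):
        artifact = generate(spec, feedback)
        score, feedback = evaluate(artifact)  # critique feeds the next round
        if score > best_score:
            best_artifact, best_score = artifact, score
        if score >= threshold:
            return artifact, score, cycle
    return best_artifact, best_score, max_cycles


def make_toy_agents() -> tuple[Generator, Evaluator]:
    """Stand-in agents whose output improves a fixed amount per revision."""
    revisions: list[list[str]] = []

    def generate(spec: str, feedback: list[str]) -> str:
        revisions.append(feedback)
        return f"{spec} (revision {len(revisions)})"

    def evaluate(artifact: str) -> tuple[float, list[str]]:
        score = min(10.0, 4.0 + 1.5 * len(revisions))
        return score, ([] if score >= 8.0 else ["Too bland; amp the contrast"])

    return generate, evaluate


gen, ev = make_toy_agents()
print(refine("dashboard", gen, ev))
```

Keeping the best artifact seen so far means a run that hits the cycle cap still hands back its strongest attempt rather than its last one.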

Prithvi Rajasekaran, engineering lead at Anthropic Labs, nails it:

Separating the agent doing the work from the agent judging it proves to be a strong lever to address this issue.

Spot on. Self-scoring agents? They’re cheerleaders, inflating grades on fuzzy stuff like UI. Split ’em, and honest scores emerge.

But wait, it’s not just pretty diagrams. They tested across task types. Objective tasks (pass/fail unit tests) get reproducible verdicts. Subjective ones (design) stay sane because a separate agent does the grading. Handoffs? Structured artifacts: feature specs, enforced tests, commit-by-commit diffs. As Artem Bredikhin put it on LinkedIn:

long-running AI agents fail for a simple reason: every new context window is amnesia. The breakthrough is structure: JSON feature specs, enforced testing, commit-by-commit progress, and an init script that ensures every session starts with a working app.
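Here is a minimal sketch of "enforced testing" over a JSON feature list, assuming a format in which each feature carries a `passes` flag that only a test run may change. The field names, `next_feature`, `record_result`, and the fake test results are hypothetical.

```python
import json
from typing import Callable, Optional

# Hypothetical feature list; the field names are assumptions.
FEATURES_JSON = """
[
  {"id": "auth-01", "description": "User can log in", "passes": false},
  {"id": "dash-01", "description": "Dashboard renders a chart", "passes": false},
  {"id": "nav-01", "description": "Navbar works on mobile", "passes": false}
]
"""


def next_feature(features: list[dict]) -> Optional[dict]:
    """Return the first feature not yet verified, or None when all pass."""
    return next((f for f in features if not f["passes"]), None)


def record_result(features: list[dict], feature_id: str,
                  run_tests: Callable[[str], bool]) -> bool:
    """Set a feature's `passes` flag from the test runner's verdict.

    The agent never marks its own work done; only a test run can flip the flag.
    """
    for f in features:
        if f["id"] == feature_id:
            f["passes"] = run_tests(feature_id)
            return f["passes"]
    raise KeyError(feature_id)


features = json.loads(FEATURES_JSON)
fake_results = {"auth-01": True, "dash-01": False}  # stand-in for a real test suite
current = next_feature(features)
record_result(features, current["id"], lambda fid: fake_results.get(fid, False))
print(next_feature(features)["id"])
```

Because the flag lives in a file rather than in any one context window, the next session can call `next_feature` and resume exactly where the last verified step ended.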

Industry echoes that. Raghus Arangarajan chimed in: the framework’s repeatability shines in multi-hour grinds, boosting reliability.

Why Do Long-Running AI Tasks Keep Crashing?

Context loss. Premature stops. Overconfidence. We’ve all seen it: Claude or GPT spins up a React app, then derails on state management. Compaction (summarizing older context in place) helps short-term, but models near their context limits get spooked: they play it safe, and the output turns bland.

Anthropic flips the script. Resets + handoffs = continuity without bloat. Independent tasks run in parallel; dependent ones run in sequence. Distributed? Sure, scale it out.
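Running independent tasks in parallel and dependent ones in sequence is classic topological scheduling. The sketch below uses Python's standard `graphlib.TopologicalSorter` to group tasks into waves; the task names are made up, and this is a generic technique, not Anthropic's scheduler.

```python
from graphlib import TopologicalSorter


def parallel_batches(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves: everything in one wave can run in parallel,
    and each wave starts only after the waves it depends on are done."""
    sorter = TopologicalSorter(deps)  # maps task -> tasks it depends on
    sorter.prepare()                  # raises graphlib.CycleError on circular deps
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all tasks whose deps are satisfied
        batches.append(ready)
        sorter.done(*ready)                 # mark the wave finished
    return batches


# Hypothetical full-stack task graph.
tasks = {
    "db_schema": set(),
    "api_endpoints": {"db_schema"},
    "design_tokens": set(),
    "dashboard_ui": {"api_endpoints", "design_tokens"},
}
print(parallel_batches(tasks))
```

Here `db_schema` and `design_tokens` form the first wave, `api_endpoints` the second, and `dashboard_ui` the last; each wave could be handed to its own generator agents.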

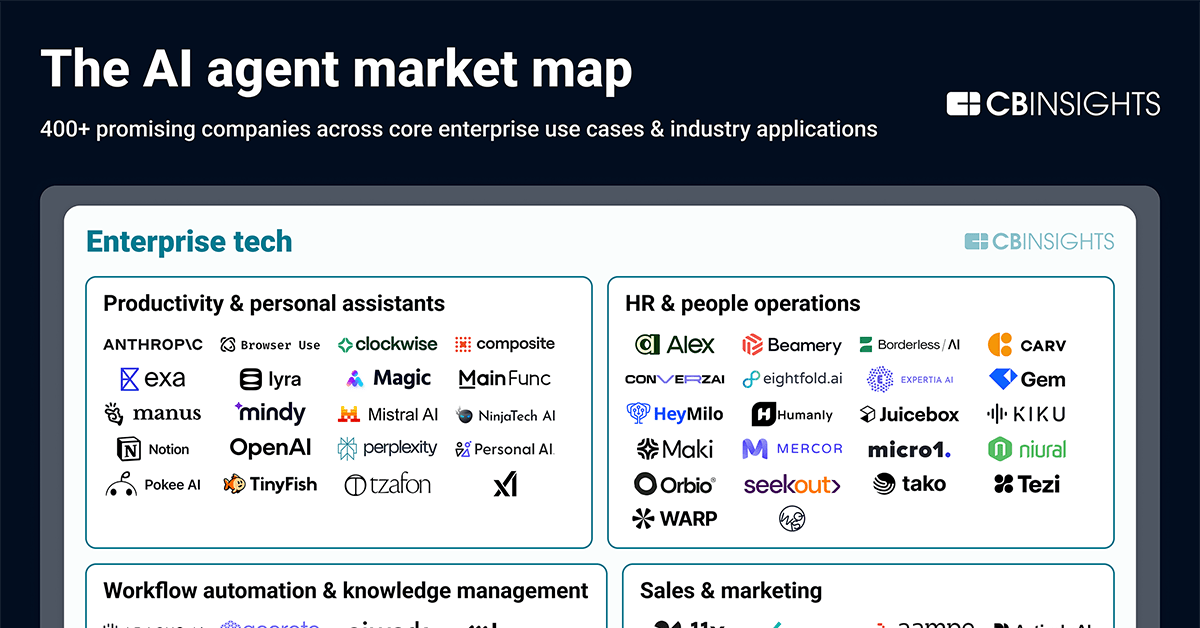

My take? This echoes the Unix philosophy: small, composable tools chained via pipes. Remember awk, sed, grep? Masterpieces of modularity. Anthropic’s harness is that for agents. Prediction: as models bulk up (whatever comes after today’s Claude models), solo agents fade and these pipelines become the enterprise standard. No more bespoke mega-prompts. Plug-and-play agent swarms.

Corporate spin check: Anthropic calls it a “harness,” not a product. Smart; they’re not selling vaporware. It’s a design pattern, open for tinkering. But don’t sleep on it: this scales their internal development and gives them an edge over rivals in agentic workflows.

Operationally, it’s no fire-and-forget. Calibrate evals upfront (human touch there). Monitor traces. Revisit how finely you decompose tasks as models evolve. Parallelism unlocks speed; next-gen LLMs might handle simple stuff solo, but harnesses eat complexity.
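Calibrating evals upfront can be as simple as scoring a handful of human-graded examples and checking agreement before trusting the evaluator. The function name, the 1.0 tolerance, and the sample scores are hypothetical; a positive `bias` would flag the grade inflation described earlier.

```python
from statistics import mean


def calibration_report(human: dict[str, float], model: dict[str, float],
                       tolerance: float = 1.0) -> dict:
    """Compare evaluator scores with human scores on the same reference examples."""
    shared = sorted(human.keys() & model.keys())
    if not shared:
        raise ValueError("no examples scored by both human and evaluator")
    errors = {k: abs(model[k] - human[k]) for k in shared}
    return {
        "mae": mean(errors.values()),                       # average disagreement
        "bias": mean(model[k] - human[k] for k in shared),  # > 0: grades too generously
        "outliers": [k for k in shared if errors[k] > tolerance],
    }


human_scores = {"ui_a": 4.0, "ui_b": 7.0, "ui_c": 9.0}      # hypothetical
evaluator_scores = {"ui_a": 7.0, "ui_b": 7.5, "ui_c": 9.0}  # hypothetical
print(calibration_report(human_scores, evaluator_scores))
```

Outliers like `ui_a` point at the examples worth adding to, or fixing in, the evaluator's few-shot set before it gates anything.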

What Happens When Models Outgrow the Harness?

Improved AIs mean beefier tasks, or handling the basics directly with no harness at all. But here’s the cycle: better models + better harnesses = moonshots. Experiment. Trace everything. It’s Darwinian: weak combos die.

Take frontend wins: those iterative cycles produce UIs that rival mid-tier agency work. Full-stack? Backend endpoints and integrations stay coherent over hours. Skeptical? Run it yourself; the design is documented publicly.

Critique time. Human oversight is still needed for calibration and validation. Fine, but does that scale? Teams need eval dashboards, not babysitters. And four hours? Impressive, but enterprises want days. V2 incoming.

Still, this shifts architecture, from monolithic prompts to orchestrated agents. Why care? Devs, you’re freed up to design systems instead of debugging AI hallucinations. Startups? Ship MVPs solo. Bigcos? Automate away the boilerplate flood.

Look, Anthropic’s not first; multi-agent papers abound. But this? Battle-tested internally, praised by practitioners. The how: structured, amnesia-proof flows. The why: coherence in chaos, quality via separation.

Frequently Asked Questions

What is Anthropic’s three-agent harness?

It’s a workflow splitting AI tasks into planner, generator, and evaluator agents for long-running coding sessions, using handoffs to beat context loss.

How does Anthropic’s harness improve AI frontend design?

An evaluator agent scores live UIs on design quality, originality, craft, and functionality, feeding critiques back to the generator for 5 to 15 iterations over up to four hours.

Will multi-agent harnesses replace single AI agents?

Likely for complex, long-running tasks. Modularity wins, like microservices over monoliths, and it scales as models improve.