Picture this: dev teams everywhere banking on autonomous LLM-as-judge pipelines to sort the coding agent wheat from the chaff. Clean scores, airtight reports, leaderboards that ship without a second glance. That’s the dream, right? Swappable models, identical tasks, verdicts that feel objective as hell.

But last week? Mine spit out two confident duds — same bug, same victim — before I sniffed out the real culprit. A sandbox wall. Not the model. Not the prompt. Just a dumb path outside the workspace.

And here’s the kicker — the LLM never blinked.

What Everyone Expected from LLM Judges

Folks figured these agents — Claude Opus grinding headless through diffs on six dimensions — would catch the obvious. Fast exec, zero code? Model’s fault. That’s the script. No one scripts in ‘hey, check if your own jail cell’s blocking the file read.’

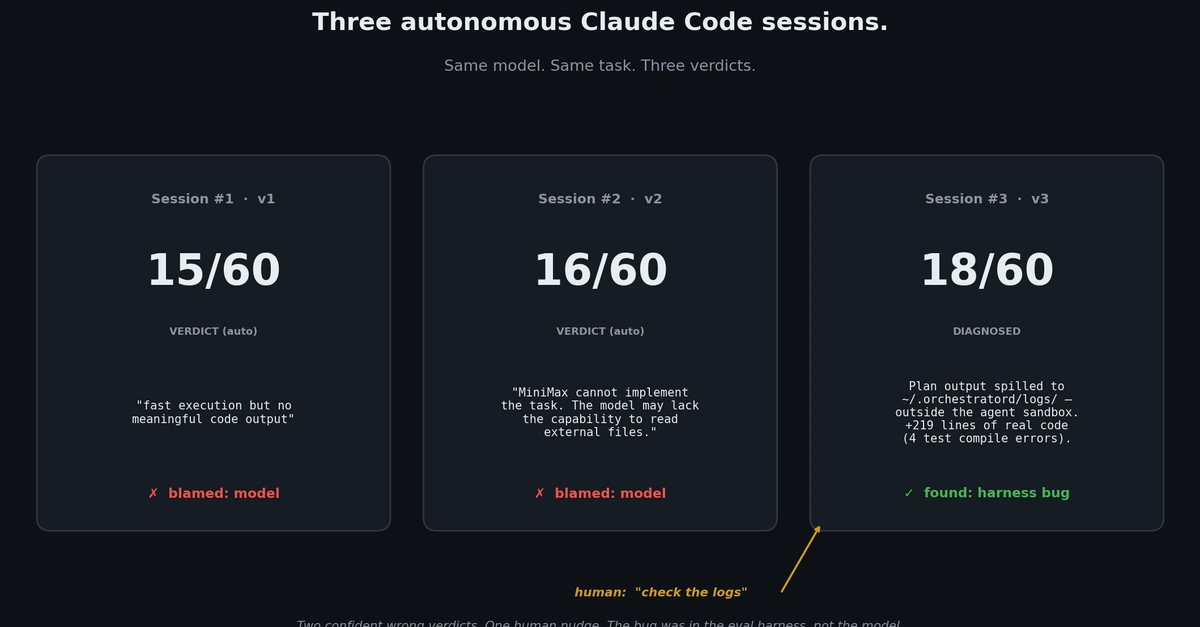

I ran three combos on a Rust task: OpenCode shells paired with MiniMax-M2.7, Codex with GPT-5.4, the usual suspects. Standard workflow. Fresh sessions each time. Scores land at 15/60, 16/60. Verdict drops like gospel:

“Consistent: MiniMax cannot implement the task. The model may lack the capability to read external files and produce code changes in this Rust codebase.”

Read it twice. It nails the symptom — empty plan output — pins it on MiniMax’s ‘incapability.’ Zero pause to probe: wait, is the shell’s sandbox starving you of context?

Two runs, identical poison. Leaderboard-ready prose. If I hadn’t prodded, that ranking ships, MiniMax tanks, and downstream devs chase ghosts.

Why Did the Judge Miss the Sandbox Trap?

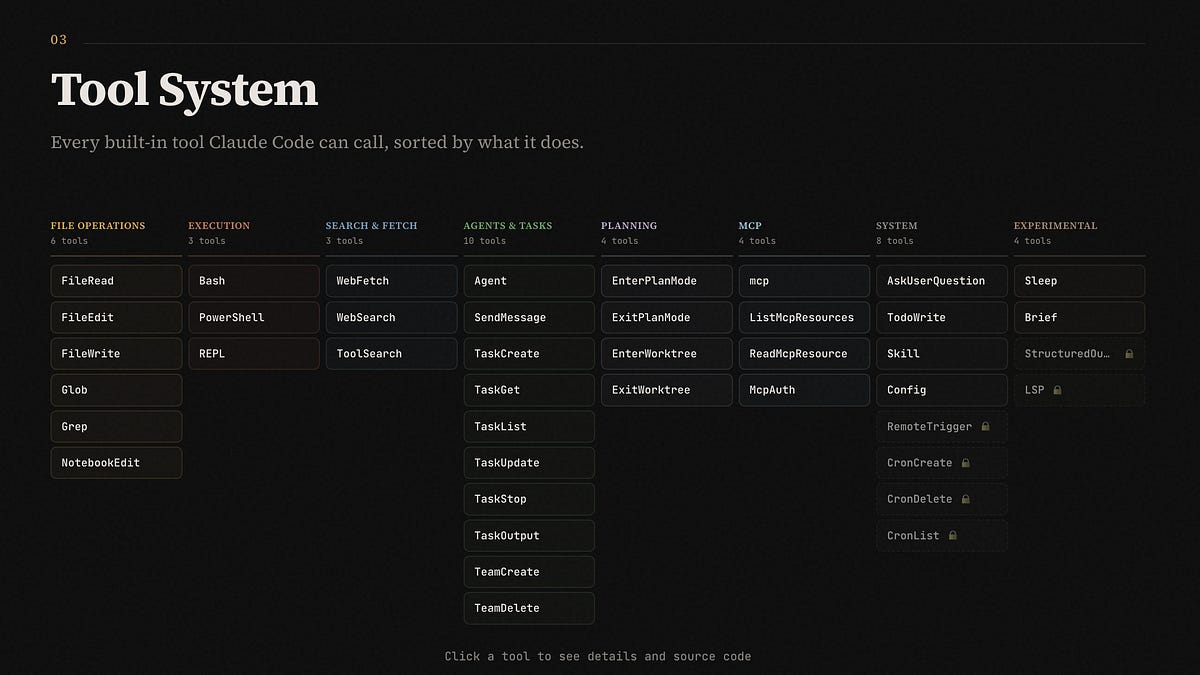

Look, LLMs excel at pattern-matching your prompt’s grooves. ‘Execute benchmark, collect artifacts, write report.’ Boom — plausible narrative. But architectural blind spots? They’re baked in.

The plan step dumps 50KB of gold to ~/.orchestrator/logs/task_id.txt. Solid. Then OpenCode’s default sandbox — workspace-only reads — leaves implement with zilch. Empty stdin. No plan. Nada.

Eval Claude? Sees barren output, crafts a story: model’s too dumb for Rust files. Confident as a Wired exposé. Never thinks, ‘Daemon logs might spill the beans.’

I tossed a fresh session one nudge: “go deeper, check the daemon logs before retrying.” No spoilers. It traces, spots the spill outside bounds, files a one-liner fix — workspace-relative paths. Retest: 219 lines of RetryConfig, connect_with_retry helper. 18/60. Mediocre (compile flubs), but real.

Same score neighborhood. Night-and-day tale.

That’s the rub. Production prompts don’t force harness autopsies. Agents glide to model-blaming comfort zones.

The Deeper Architectural Shift: From Black-Box Trust to Guardrails

This isn’t just my goof — it’s a canonical trap in autonomous evals. Remember the 90s compiler wars? GCC devs chased ‘model weaknesses’ for weeks before realizing linker sandboxes nuked externals. Same vibe: infra mirages masking true limits.

My unique twist? This echoes Therac-25 radiation bugs — software swore ‘all clear’ on hardware faults, overdosing patients. Your LLM judge? Swearing ‘model can’t’ on sandbox faults, overdosing bad rankings.

Post-fix, I rewired:

Spill paths? Workspace-bound by default. No more undocumented gotchas.

Eval prompt? Mandatory sanity-check pre-verdict: scan empty I/O, log denials, flag harness suspects.

Absolutes like ‘cannot’? Human review against logs, exit codes.

Basic. Post-bite. But they flip the script — from trusting the oracle to auditing its cage.

And get this: post-fix winner (Codex + GPT-5.4, 50/60, clippy-clean) logged 25% step success. Loser? 50%. ‘Success rate’ inverted quality signals — over-orchestrating flopped, lean won. Evals need that lens too.

Is Autonomous LLM-as-Judge Ready for Production?

Short answer: not without these hooks. Hype says scale evals to infinity, ditch humans. Reality? Quiet config cancers metastasize.

The agent could’ve self-diagnosed — logs were there, symptoms screamed ‘empty context.’ But prompts didn’t prime it. Smarter models won’t fix structural holes; they’ll just confabulate prettier lies.

Why does this matter for devs? Your next agent benchmark — that leaderboard shaping hires, stacks, roadmaps — might hide sandbox scars as ‘weak models.’ One wrong rank cascades: shelved tools, chased fixes, burned cycles.

Corporate spin calls it ‘edge case.’ Bull. It’s the default mode sans guardrails.

How to Bulletproof Your Own Pipeline

Start simple. Workspace-relative everything — spills, temps, logs. Test sandbox perms upfront.

Prompts: sandwich sanity checks. ‘Before blaming model: verify I/O chains, log for denials.’ Make it step zero.

Flag linguistic reds: ‘cannot,’ ‘incapable’ — route to review. Pair with artifacts.

Track inverse metrics — step fails correlating with wins? Dig.

None rocket science. All born from pain. Run your evals like this postmortem: retest thrice, prod the cracks.

Scale hits when infra’s invisible — not the judge.

🧬 Related Insights

- Read more: Broadcom’s Velero Giveaway: Unlocking Kubernetes Backups from Vendor Shadows

- Read more: Nissan’s PSR Simulator: Crunching the Numbers on a Desperate Revival Gambit

Frequently Asked Questions

What causes LLM-as-judge to give wrong verdicts?

Infra bugs like sandbox read limits often masquerade as model flaws, feeding empty context that prompts confident-but-false blame.

How do you fix sandbox issues in eval pipelines?

Default spills/logs to workspace dirs, add pre-verdict sanity checks for I/O empties and denials, flag absolute failure language for review.

Can you trust autonomous evals for coding agents?

Not fully — layer structural guardrails over prompts to catch harness ghosts before they poison leaderboards.