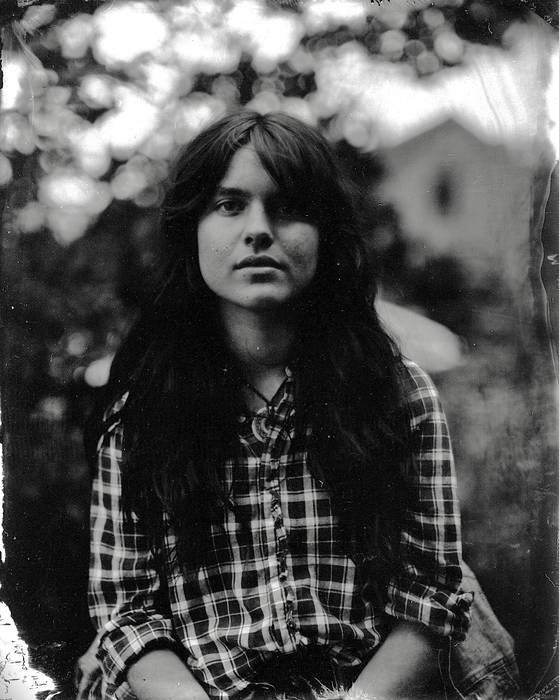

Murphy Campbell scrolls through her Spotify artist page one January morning — and freezes. There they are: tracks she’d recorded but never released, vocals warped into something unnaturally smooth, her name slapped on top like a counterfeit label.

AI fakes. Someone scraped her YouTube videos, fed them into a voice-cloning tool, churned out covers, and dumped them onto streaming platforms. I tested one, “Four Marys,” with two detectors; both lit up: probable AI. Campbell’s shock? Palpable. “I was kind of under the impression that we had a little bit more checks in place before someone could just do that,” she told The Verge. “But, you know, a lesson learned there.”

She pestered support, becoming a “pest,” in her words, until most of the fakes came down. Not all, though. One lingers on Spotify, now under a knockoff profile: Murphy Campbell (no relation). Thrilling, right?

How AI Voice Clones Hijack Artist Profiles Overnight

Look, this isn’t random. Tools like ElevenLabs or open-source clones let anyone mimic a voice from minutes of audio — YouTube’s a goldmine for that. Pull a folk ballad performance, swap the voice, upload via shady distributors. Boom: fake streams, diluted real ones. Spotify’s testing artist approval gates now, but Campbell’s burned. “Every time an entity that’s that large makes a promise like that to musicians, it seems to just not be what they made it out to be,” she says. Skeptical? Smart.

But wait — the plot thickens, fast.

Right as a Rolling Stone piece drops on her AI woes, bam: YouTube claims hit her videos. Someone distributing through Vydia uploads private clips under the name “Murphy Rider,” triggering Content ID claims on her public domain staples like “Darling Corey” and “In the Pines.” Public domain! “In the Pines” is the song Nirvana covered by way of Lead Belly, with roots in the 1870s. YouTube splits her revenue with the claimant anyway.

“You are now sharing revenues with the copyright owners of the music detected in your video, Darling Corey.”

Vydia eventually backs off and bans the uploader. Vydia’s Roy LaManna boasts that of the company’s 6 million claims, just 0.02% are invalid: “By industry standards is like amazing.” Death threats forced office evacuations, he adds. No link to the AI fakes, LaManna swears; the timing’s a coincidence.

Campbell won’t absolve them. “It goes way deeper than we think it does.”

Why Does YouTube’s Content ID Let Trolls Win on Public Domain?

Here’s the architecture glitch: Content ID fingerprints uploaded audio and checks it against a database of reference files. Claimants upload references; YouTube auto-matches every video against them. Public domain? Irrelevant, because there’s no human review upfront. Distributors like Vydia (or Timeless IR?) flood it with bogus references. Scale wins; disputes drag.
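To make the failure mode concrete, here is a toy model of reference-based matching. It is a sketch under loud assumptions: the `fingerprint`, `Reference`, and `auto_claims` names, the window-hash fingerprint, and the 0.5 overlap threshold are all hypothetical and say nothing about YouTube's actual Content ID internals. What it shows is the shape of the problem: any sufficiently close match against a registered reference produces a claim, and nothing in the path checks ownership or public-domain status.

```python
from dataclasses import dataclass


# Toy fingerprint: hashes of overlapping windows of sample values.
# This is NOT how Content ID works internally; it only mimics the idea
# of matching an upload against registered reference fingerprints.
def fingerprint(samples: list[int], window: int = 4) -> set[int]:
    return {hash(tuple(samples[i:i + window])) for i in range(len(samples) - window + 1)}


@dataclass
class Reference:
    claimant: str      # whoever registered the reference file
    title: str
    prints: set[int]


def auto_claims(upload: list[int], references: list[Reference], threshold: float = 0.5) -> list[str]:
    """Claimants whose reference fingerprints sufficiently overlap the upload.

    Note what the loop never asks: who owns the underlying song, whether it
    is public domain, or whether a human has looked at the match.
    """
    up = fingerprint(upload)
    claims = []
    for ref in references:
        if ref.prints and len(up & ref.prints) / len(ref.prints) >= threshold:
            claims.append(ref.claimant)
    return claims


# Hypothetical data: the artist's own performance, and a reference someone
# else registered from a clip of that same performance.
performance = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
refs = [Reference("bogus-claimant", "Darling Corey", fingerprint(performance[:8]))]
print(auto_claims(performance, refs))  # ['bogus-claimant']
```

The claimant never has to prove anything about the song; registering the clip first is enough for the match to fire.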

A historical parallel: 1920s recording pirates. Back then, sheet music hustlers “copyrighted” folk tunes by tweaking lyrics, claiming songs from Appalachian hollers as theirs. Sheet music firms trolled fiddlers via ASCAP precursors. Today? AI amps it: infinite variants, zero cost. Content ID’s the new ASCAP, but dumber: algorithmic, profit-first. Vydia’s “amazing” 0.02%? Of 6 million claims, that’s 1,200 bad ones. Multiply by platforms.
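The 1,200 figure is just 0.02% of Vydia's 6 million claims, and it's worth checking, since a tiny percentage of a huge number is still a lot of people. A quick sketch; `invalid_claims` is a hypothetical helper, and expressing the rate in basis points (1 bp = 0.01%) simply keeps the math in integers:

```python
def invalid_claims(total_claims: int, invalid_basis_points: int) -> int:
    """Number of claims implied by an invalid rate given in basis points."""
    return total_claims * invalid_basis_points // 10_000


# Vydia's figures as reported: 6 million claims, 0.02% (2 bp) invalid.
print(invalid_claims(6_000_000, 2))  # 1200
```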

And Spotify? Algorithms prioritize engagement, not provenance. Fakes farm streams — her real folk ballads drown. Platforms promise fixes (approval tools, better detectors), but incentives misalign: more content = more ads. Artists like Campbell, niche folkies playing public domain chestnuts, are soft targets. No label muscle.

This exposes a deeper shift: music’s plumbing (distribution APIs, ID systems) wasn’t built for AI’s speed. One troll with a voice model and a Vydia account can fracture it. Prediction? Without mandated pre-upload voice authentication (blockchain hashes? Watermarks?), we’ll see artist “splintering”: fake Murphys everywhere, royalties vaporized.
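What might a pre-upload gate look like? A minimal sketch, assuming a distributor keeps a registry of which accounts are verified for which artist names. `AUTHORIZED`, `provenance_log`, `pre_upload_check`, and the account IDs are all hypothetical; no real distributor or blockchain API is involved, and a plain SHA-256 digest from Python's `hashlib` stands in for the "hash" idea.

```python
import hashlib

# Hypothetical registry mapping an artist name to the distributor accounts
# verified to release under it. No real platform API is implied.
AUTHORIZED: dict[str, set[str]] = {"Murphy Campbell": {"acct-murphy"}}

# SHA-256 digest of each accepted file -> artist name it was released under.
provenance_log: dict[str, str] = {}


def pre_upload_check(artist: str, uploader: str, audio: bytes) -> bool:
    """Accept an upload only if the account is verified for that artist name."""
    if uploader not in AUTHORIZED.get(artist, set()):
        return False  # unverified account: the release never reaches the profile
    # Record what was released; the hash proves the file is unchanged later,
    # but says nothing about whose voice is on it.
    provenance_log[hashlib.sha256(audio).hexdigest()] = artist
    return True


print(pre_upload_check("Murphy Campbell", "acct-troll", b"cloned vocal"))  # False
print(pre_upload_check("Murphy Campbell", "acct-murphy", b"real take"))    # True
```

The design choice matters: a hash can't recognize a voice clone, because the clone is new audio with a new hash. So in this sketch the gate sits on who is uploading, and the hash only serves as an audit trail.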

Can Platforms Actually Stop AI Music Trolls?

Short answer: not yet. Spotify’s manual approvals sound good — opt-in verification — but scale? Millions of profiles. YouTube’s tweaking Content ID for AI flags, but trolls pivot: human-AI hybrids fool detectors.
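A minimal sketch of why opt-in approval leaves gaps, assuming a simple hold-until-approved model. The `Profile`, `submit`, and `review` names and the flow itself are hypothetical; nothing here reflects Spotify's actual implementation. Two things fall out: unenrolled profiles (like a knockoff page) get no gate at all, and every held release costs a human decision, which is the scale problem.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    LIVE = "live"
    PENDING = "pending"
    REJECTED = "rejected"


@dataclass
class Profile:
    name: str
    opted_in: bool                      # artist enrolled in the approval gate
    pending: list[str] = field(default_factory=list)


def submit(profile: Profile, track: str) -> Status:
    """New release attached to a profile: held only if the artist opted in."""
    if not profile.opted_in:
        return Status.LIVE              # no gate at all for unenrolled profiles
    profile.pending.append(track)
    return Status.PENDING               # waits for the artist to act


def review(profile: Profile, track: str, approve: bool) -> Status:
    """The artist's manual decision; every held track needs one."""
    profile.pending.remove(track)
    return Status.LIVE if approve else Status.REJECTED


real = Profile("Murphy Campbell", opted_in=True)
knockoff = Profile("Murphy Campbell (no relation)", opted_in=False)
print(submit(real, "Four Marys"))       # Status.PENDING
print(submit(knockoff, "Four Marys"))   # Status.LIVE
```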

Campbell’s saga screams for federal backstops, along the lines of the EU AI Act’s rules requiring AI-generated content, audio included, to be marked as synthetic. The US lags; labels lobby, indies suffer. Vydia’s low invalid rate? PR spin: it ignores bogus claims that collected money before anyone disputed them.

The deeper why: capitalism’s content flywheel. Platforms grow via uploads; moderation is a cost. Trolls exploit the lag.

Nightmare fuel.

But hope glimmers — artist collectives forming, tools like Content Credentials emerging. Campbell fights on, ballads intact.


Frequently Asked Questions

What are AI music fakes and how do they target artists?

AI fakes are tracks made by cloning an artist’s voice from sources like YouTube videos, generating covers, and uploading them to Spotify under the real artist’s name, diluting their streams and confusing fans.

Can copyright trolls claim public domain songs on YouTube?

Yes, through Content ID: a claimant uploads a reference first, and automatic matching triggers claims, even on 1800s folk tunes. The artist has to file disputes to fight back.

Will Spotify’s artist approval stop AI clones?

Maybe for big names; skeptics like Campbell doubt scalability against troll volume.