87% of engineering teams swear they’ve nailed zero-downtime deployments.

A Honeycomb analysis last year begs to differ: error rates surged 400% during what those teams called ‘smooth rollouts.’

Zero-downtime deployments. It’s the holy grail everyone chases — or so they say. But dig into the metrics, and you’ll find most outfits are just papering over cracks with good luck and blind spots.

The Metric That Exposes the Lie

Here’s the thing.

Rollout windows last five minutes, tops. Yet nobody watches SLIs (service level indicators) right then. An error rate under 0.1% for the whole window? That’s your bar. Miss it, and you’re not zero downtime. You’re unmonitored chaos.

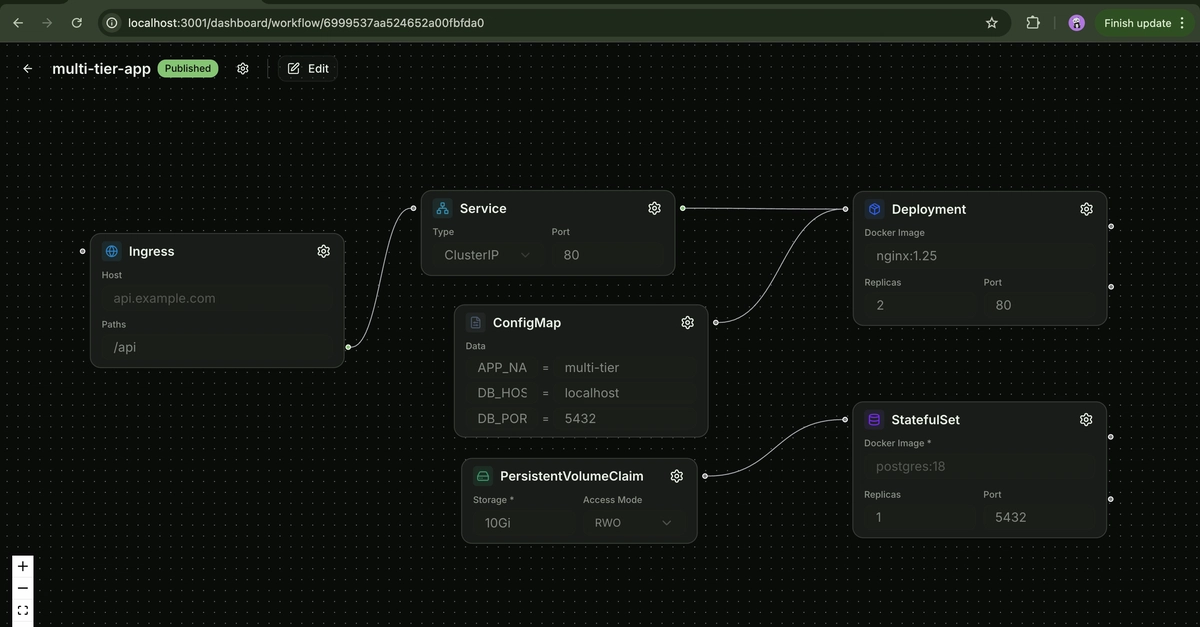

Take a typical Kubernetes rolling update. Pods spin up, health checks ping green, traffic shifts. Sounds perfect. Except the app’s still cold-booting: DB pools empty, caches stale, workers asleep. Users hit 502s. Dashboards stay blissfully quiet because post-deploy averages hide the spike.
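To see how an average hides the spike, here is a small Python sketch. The traffic numbers are invented for illustration (1,000 requests a minute, a rollout starting at minute 30 with 1% errors for five minutes), and `Bucket`, `error_rate`, and `window` are hypothetical helper names, not part of any monitoring tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Bucket:
    """Request counts for one minute of traffic."""
    minute: int      # minutes since the start of the hour
    requests: int
    errors: int


def error_rate(buckets: List[Bucket]) -> float:
    """Errors divided by requests across the given buckets."""
    total = sum(b.requests for b in buckets)
    failed = sum(b.errors for b in buckets)
    return failed / total if total else 0.0


def window(buckets: List[Bucket], start: int, length: int = 5) -> List[Bucket]:
    """Buckets that fall inside [start, start + length) minutes."""
    return [b for b in buckets if start <= b.minute < start + length]


# Invented data: a clean hour, except for a rollout at minute 30
# that fails 10 of every 1,000 requests for five minutes.
hour = [Bucket(m, 1000, 0) for m in range(60)]
for m in range(30, 35):
    hour[m] = Bucket(m, 1000, 10)

hourly = error_rate(hour)               # the number the dashboard shows
deploy = error_rate(window(hour, 30))   # the number that matters

print(f"hourly average: {hourly:.3%}")
print(f"deploy window:  {deploy:.3%}")
```

With these made-up numbers the hourly average lands a little under the 0.1% bar, while the five-minute deploy window sits at 1%, ten times over it. Same data, two very different stories.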

And managers? They nod along until the Slack outage channel lights up.

Most teams say they do zero-downtime deploys and mean ‘we haven’t gotten a complaint in a while.’ Actually measuring it reveals the truth: connection drops, in-flight request failures, and cache invalidation spikes during rollouts that nobody’s tracking because nobody defined what zero means.

Brutal honesty from the trenches.

Why Your Health Checks Are Straight-Up Lying

Health checks. They’re the gatekeepers — or supposed to be.

But nine times out of ten, they’re junk. A pod reports ‘Ready’ the second its process binds to a port. Never mind the app’s still slurping configs, warming Redis, or queuing jobs. Boom — traffic floods in, app chokes, errors everywhere.

Fix? Real readiness probes. Script ‘em to hit your actual endpoints, wait for caches to populate, confirm DB latency under 50ms. Harsh? Yes. Essential? Absolutely.
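A rough Python sketch of what such a readiness check could look like. Everything named here is an assumption for illustration: `ping_db` and `cache_size` stand in for whatever your app actually uses, and the 50 ms budget is the figure from the paragraph above.

```python
import time
from typing import Callable, Tuple

DB_LATENCY_BUDGET_S = 0.050  # the 50 ms bar from the text


def timed(check: Callable[[], None]) -> float:
    """Run a check and return how long it took, in seconds."""
    start = time.monotonic()
    check()
    return time.monotonic() - start


def is_ready(
    ping_db: Callable[[], None],      # hypothetical: runs a trivial query
    cache_size: Callable[[], int],    # hypothetical: entries currently cached
    min_cache_entries: int = 1,
) -> Tuple[bool, str]:
    """Report ready only when the dependencies are actually usable."""
    try:
        latency = timed(ping_db)
    except Exception as exc:
        return False, f"db unreachable: {exc}"
    if latency > DB_LATENCY_BUDGET_S:
        return False, f"db latency {latency * 1000:.0f} ms over budget"
    if cache_size() < min_cache_entries:
        return False, "cache not warmed"
    return True, "ready"
```

Wire the result into whatever endpoint your readiness probe hits: return 200 when the first element is true, 503 otherwise, and log the reason string so a stuck rollout explains itself.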

Skip this, and you’re rolling the dice every release.

Teams at scale know this pain. Etsy back in 2012? Their deploys would thrash search indices mid-flight — users staring at blank pages while caches rebuilt. Cost them traffic, trust, and weeks of firefighting. History doesn’t repeat, but it rhymes — especially when engineers chase ‘deploy fast’ over ‘deploy safe.’

My take: this is the gotcha nobody budgets for. We’ve seen this movie before with the early microservices rush. Teams declared victory on ‘resilience’ until a bad rollout snowballed into outages. Prediction? By 2026, watching prod SLI dashboards during deploys becomes non-negotiable. Otherwise, watch talent bolt to shops that treat releases like surgery, not slot machines.

Graceful Shutdowns: The Forgotten Hero

SIGTERM handling.

Everyone talks draining connections at the load balancer — AWS ALB, GCP’s HTTP(S) LB, you name it. Set those timeouts to 300 seconds, tune health checks to match. Good start.

But inside the app? Crickets.

Your server gets TERM and either exits on the spot or gets killed when the grace period runs out (30 seconds by default on Kubernetes). Bye-bye in-flight requests. Users? Stuck mid-checkout, timeouts galore.

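Build graceful drain: catch TERM, stop accepting new connections, finish the in-flight ones, then exit. Node? Use process.on('SIGTERM'). Go? signal.Notify on a channel with syscall.SIGTERM, then block on that channel. Java? A JVM shutdown hook, or your framework’s lifecycle hooks.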
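Here is the same drain pattern as a minimal Python sketch, using only the standard library. The `Drainer` class and its method names are invented for this example. A real server would call `try_begin` and `end` around each request and pick a drain timeout shorter than the platform's kill deadline.

```python
import signal
import threading


class Drainer:
    """Tracks in-flight requests and refuses new ones once draining starts."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._idle = threading.Condition(self._lock)
        self._in_flight = 0
        self.draining = False

    def try_begin(self) -> bool:
        """Call before handling a request; False means reject it."""
        with self._lock:
            if self.draining:
                return False
            self._in_flight += 1
            return True

    def end(self) -> None:
        """Call when a request finishes, successfully or not."""
        with self._idle:
            self._in_flight -= 1
            if self._in_flight == 0:
                self._idle.notify_all()

    def start_drain(self, *_signal_args) -> None:
        # Used as the signal handler, so it only flips a flag and
        # never takes the lock (the main thread might already hold it).
        self.draining = True

    def wait_until_idle(self, timeout: float) -> bool:
        """Block until no requests are in flight; False on timeout."""
        with self._idle:
            return self._idle.wait_for(lambda: self._in_flight == 0, timeout)


def install_sigterm_handler(drainer: Drainer) -> None:
    # Python only allows signal handlers to be set from the main thread.
    signal.signal(signal.SIGTERM, drainer.start_drain)
```

On shutdown the main thread would notice `draining`, call `wait_until_idle` with its deadline, and exit either way. A `False` return means requests were still running when time ran out, which is worth logging.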

Test it. Kill a staging pod mid-traffic. Watch requests complete or fail fast. No hanging? You’re golden.

One paragraph on rollbacks, because speed kills here — or saves you.

Automate ‘em under five minutes. Manual? Forget it. GitOps tools like ArgoCD or Flux shine here, reverting manifests in seconds.
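What "automated" can mean in practice, sketched in Python: a gate that watches error counts during the rollout and fires a rollback the moment the budget is blown. This is an illustration under assumptions, not any tool's API. `watch_rollout` and the `roll_back` callback are hypothetical; the callback might revert a Git commit for ArgoCD or Flux to sync, or run `kubectl rollout undo` on the deployment.

```python
from typing import Callable, Iterable, Tuple

ERROR_BUDGET = 0.001  # 0.1%, the bar used throughout this article


def watch_rollout(
    samples: Iterable[Tuple[int, int]],   # (requests, errors) per interval
    roll_back: Callable[[], None],        # hypothetical rollback trigger
    budget: float = ERROR_BUDGET,
    min_requests: int = 100,              # avoid reacting to a tiny sample
) -> bool:
    """Return True if the rollout stayed within budget, False if rolled back."""
    total = failed = 0
    for requests, errors in samples:
        total += requests
        failed += errors
        if total >= min_requests and failed / total > budget:
            roll_back()
            return False
    return True
```

The gate uses the cumulative error rate since the rollout began, so one bad interval can trip it while a single failed request in a quiet second cannot.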

Is Zero Downtime Worth the Headache for Small Teams?

Look.

If you’re a two-pizza team slamming deploys weekly, maybe blue-green’s overkill. But scale hits — users climb past 10k concurrent — and unmonitored drops turn into PR nightmares.

Market dynamics scream invest now. Connection draining is built into the cloud load balancers you’re already paying for, so use it. Kubernetes? Free, but a misconfig costs hours.

Data backs it: companies with measured deploy SLIs ship 2.5x faster without rising incidents, per DORA metrics. Skeptical? Run your own audit. Pipe rollout logs into Datadog or Lightstep, alert on error bumps. Truth hurts, but ships faster.

Corporate hype calls every rolling update ‘zero downtime.’ Bull. It’s blue-green or canary with traffic splits — or bust.

Read up: Kubernetes docs on strategies, AWS ALB draining guides. Implement one this sprint.

My rule, straight up: peg zero downtime to error rate <0.1% in any five-minute deploy window. Staging first, prod always. No exceptions.

Managers — quiz your team tomorrow. Where’s the gap?

Why Does This Matter for Developers Right Now?

Developers chase velocity.

But broken deploys erode it — rollback loops, hotfixes, blame games. Nail this, and you’re the hero shipping fearlessly.

Broader view: in a talent war, teams with bulletproof rollouts poach stars. FAANG-level polish on a Series B budget? Possible, if you measure.

Don’t sleep on cache warming either — the silent killer. Pre-populate on startup, or watch latency spike 10x.
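A bare-bones Python sketch of warming on startup. `WarmCache` is a made-up class for illustration, and `load` stands in for whatever slow lookup sits behind your cache. How you choose the hot keys (yesterday's top requests, a static list) is up to you.

```python
from typing import Callable, Dict, Iterable


class WarmCache:
    """In-memory cache that can be pre-populated before taking traffic."""

    def __init__(self, load: Callable[[str], object]) -> None:
        self._load = load                   # hypothetical slow backend lookup
        self._data: Dict[str, object] = {}
        self.warmed = False
        self.misses = 0

    def warm(self, hot_keys: Iterable[str]) -> None:
        """Load the keys you expect to be hit first, then mark as warmed."""
        for key in hot_keys:
            self._data[key] = self._load(key)
        self.warmed = True

    def get(self, key: str) -> object:
        """Return the cached value, loading and counting a miss if absent."""
        if key not in self._data:
            self.misses += 1
            self._data[key] = self._load(key)
        return self._data[key]
```

Call `warm` during startup and keep the readiness probe failing until `warmed` is true. The `misses` counter makes a handy deploy-window metric: a jump right after a rollout means the warm-up list is stale.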

Wrap it: zero-downtime deployments aren’t a checkbox. They’re a disciplined SLI game. Play it right, dominate. Fumble? Competitors eat your lunch.

Frequently Asked Questions

What does real zero-downtime deployment require?

Health checks for true readiness, graceful shutdowns, LB draining, fast rollbacks, and SLI monitoring during rollouts — error rate under 0.1%.

How do I measure zero downtime in production?

Track error rates and latency in five-minute deploy windows via observability tools like Honeycomb or Prometheus. Alert on spikes.

Are Kubernetes rolling updates actually zero downtime?

No — not without custom probes, draining, and metrics. They mask failures unless you tune hard.