The screen flickers. ‘Explain why your multi-agent system didn’t deadlock on that production run.’

Eight seconds of dead air. You’ve got the resume—two years grinding GenAI pipelines—but this? This is the gauntlet.

Generative AI interview questions in 2026 aren’t polite theory quizzes anymore. They’re war stories, demanding proof you’ve wrestled LLMs into real-world shape: RAG setups that don’t spew fiction, agent swarms that collaborate instead of descending into chaos. I’ve sat through dozens, as candidate and interviewer. Here’s the unvarnished playbook: the 40 questions that come up most, grouped by theme, with answers that stick.

Zoom out. This shift mirrors the ’90s software boom, when HTML quizzes morphed into ‘architect a three-tier app’ deep dives. Back then, it separated hobbyists from builders. Today? Same game. Companies aren’t hiring token-tickers; they want architects who see the underlying fractures in scale—quadratic attention blowups, eval suites that flag drift before outages hit. (And yeah, that historical parallel? It’s my twist: we’re repeating web dev’s maturity pains, but at warp speed with billion-param beasts.)

First, the LLM Bones: What You Can’t Fake

Base model versus instruction-tuned. Dead simple on paper.

A base model is trained purely on next-token prediction over large corpora. It can complete text but won’t follow instructions reliably. An instruction-tuned model (e.g., GPT-4, Claude) is further fine-tuned on curated instruction-response pairs—often using RLHF or RLAIF—to align outputs to user intent.

That’s gold from the trenches. Nail it, and you’re credible. But interviewers probe deeper: ‘When would you pick base over tuned?’ Answer: rarely. Mainly when you plan to run your own fine-tuning for a niche domain, like proprietary code generation, and want a starting point without someone else’s alignment baked in. Otherwise, a tuned model saves you the RLHF headache.

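Attention? The transformer’s secret sauce. Each token’s query is scored against every other token’s key via dot products, and those scores decide how much of each token’s value gets mixed in. That gives the long-range smarts RNNs dreamed of. Without it, no pronoun chasing across 100k-token contexts. Why care? LLMs live or die on this for reasoning chains that don’t snap.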
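The dot-product mechanism can be sketched in a few lines. This is a minimal single-head illustration in plain Python, assuming toy 2-dimensional vectors and made-up numbers; the function names are illustrative, and real implementations add learned projections, multiple heads, masking, and batched tensor ops.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention for one head.

    Each query is scored against every key (dot product / sqrt(d)),
    the scores are softmaxed into weights, and the output is the
    weighted sum of the value vectors.
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Toy example: three tokens attending to each other (made-up vectors).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = attention(tokens, tokens, tokens)
```

Every token scores every other token, which is exactly where the quadratic cost in sequence length comes from.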

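Context windows. GPT-4o’s 128k tempts eternity. Reality: self-attention compute grows as O(n²) in sequence length, which murders your GPU farm. Plus, ‘lost in the middle’: stuff buried mid-context gets ignored. Hack the cost with FlashAttention-style kernels, which cut memory traffic without changing the math. Hack the recall problem with positional tricks, like placing the most relevant material near the start or end of the prompt.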
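To put rough numbers on the quadratic growth, here is a small arithmetic sketch. It counts query-key scores for one head of one layer and assumes 2 bytes per score (fp16) if the matrix were fully materialized; the sequence lengths and the byte size are illustrative assumptions, not measurements.

```python
def attention_score_entries(n_tokens: int) -> int:
    """Number of query-key scores in one head of one layer: n * n."""
    return n_tokens * n_tokens

for n in (1_000, 8_000, 128_000):
    entries = attention_score_entries(n)
    # Assumption: 2 bytes per score (fp16), matrix fully materialized.
    gib = entries * 2 / 2**30
    print(f"{n:>7} tokens -> {entries:.2e} scores, ~{gib:.2f} GiB per head per layer")
```

Going from 8k to 128k tokens is 16x the length but 256x the scores. Memory-efficient kernels avoid materializing the full matrix, but the number of score computations stays quadratic.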

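Temperature rescales logits before the softmax: near 0 for facts, around 1 for jazz. Top-p over top-k? Often smarter. Top-k always keeps a fixed number of candidates; top-p keeps however many it takes to cover probability mass p, so it narrows when the model is confident and widens when it isn’t.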
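A minimal sketch of how temperature and top-p interact at sampling time, in plain Python. The logits are made-up numbers, `sample_token` is a hypothetical helper rather than any library's API, and treating temperature 0 as greedy argmax is a common convention assumed here.

```python
import math
import random

def sample_token(logits, temperature=1.0, top_p=1.0, rng=random):
    """Sample an index using temperature scaling and nucleus (top-p) filtering."""
    if temperature <= 0:
        # Convention assumed here: temperature 0 means greedy decoding.
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Keep the smallest set of top tokens whose cumulative probability reaches top_p.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    # random.choices renormalizes the kept weights for us.
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]

logits = [2.0, 1.0, 0.1, -1.0]  # made-up scores for four candidate tokens
greedy = sample_token(logits, temperature=0)
nucleus = sample_token(logits, temperature=0.7, top_p=0.9, rng=random.Random(0))
```

With these logits, a temperature of 0.7 and top-p of 0.9 leave only the two most likely tokens in play; a flatter distribution would keep more.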

Short version: Master these, or you’re out in round one.

RAG: The Hallucination Killer Everyone Wants

RAG isn’t buzz. It’s survival. Your LLM’s knowledge cutoff is 2023? Cute, until your customer’s yelling about Q3 earnings.

Core: chunk docs, embed ’em, load a vector store (Pinecone, FAISS), retrieve top-k, feed the LLM. Problem solved? Mostly: answers grounded in retrieved text, far fewer fabrications.

Chunking’s the art. Fixed 512-token windows? Lazy baseline. Semantic chunking groups adjacent passages by embedding cosine similarity, which keeps ideas intact. For contracts, go hierarchical: small child chunks for precise matching, larger parent chunks for breadth. I’ve seen 20% recall jumps ditching naive splits.
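The fixed-window baseline and the parent/child idea can be sketched as follows. This is a simplified illustration that treats whitespace-separated words as tokens (a real pipeline would use the model's tokenizer); `split_fixed` and `parent_child_chunks` are hypothetical helper names, and the sizes are toy values.

```python
def split_fixed(tokens, size, overlap=0):
    """Split a token list into windows of `size` tokens that overlap by `overlap`."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reached the end
    return chunks

def parent_child_chunks(tokens, parent_size, child_size):
    """Build small child chunks that each point back to a larger parent chunk."""
    records = []
    for parent_id, parent in enumerate(split_fixed(tokens, parent_size)):
        for child in split_fixed(parent, child_size):
            records.append({"parent_id": parent_id, "child": child, "parent": parent})
    return records

words = "the supplier shall deliver goods within thirty days of the purchase order date".split()
records = parent_child_chunks(words, parent_size=8, child_size=4)
```

The usual pattern is to embed and search the child chunks, then hand the matching parent to the LLM so it sees the surrounding clause.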

Hybrid search (dense vectors plus BM25) crushes pure semantic on noisy data. Rerank with ColBERT or whatever’s hot. It outperforms where exact keywords still rule, like tech specs.
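One common way to merge a dense ranking with a BM25 ranking is reciprocal rank fusion (RRF). The article does not say which fusion method it has in mind, so take this as one reasonable option, not the prescribed one. The document IDs are hypothetical, and `k=60` is a commonly used constant.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc IDs; each doc scores the sum of 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)

dense_hits = ["doc_a", "doc_b", "doc_c"]  # hypothetical vector-search order
bm25_hits = ["doc_c", "doc_a", "doc_d"]   # hypothetical keyword-search order
fused = reciprocal_rank_fusion([dense_hits, bm25_hits])
```

RRF only needs ranks, so it sidesteps the problem that cosine similarities and BM25 scores live on different scales. Documents that both retrievers like float to the top.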

But here’s the grill: ‘Your RAG hallucinated. Fix it.’ Route retrievers by query type. Use hypothetical document embeddings (HyDE) for queries that don’t resemble your corpus. Eval with RAGAS: faithfulness scores over BLEU.

Production traps? Stale embeddings—schedule reingests. Latency—async chunking, KV caching. Scale—sharding stores. Nail these, and you’re the hero.

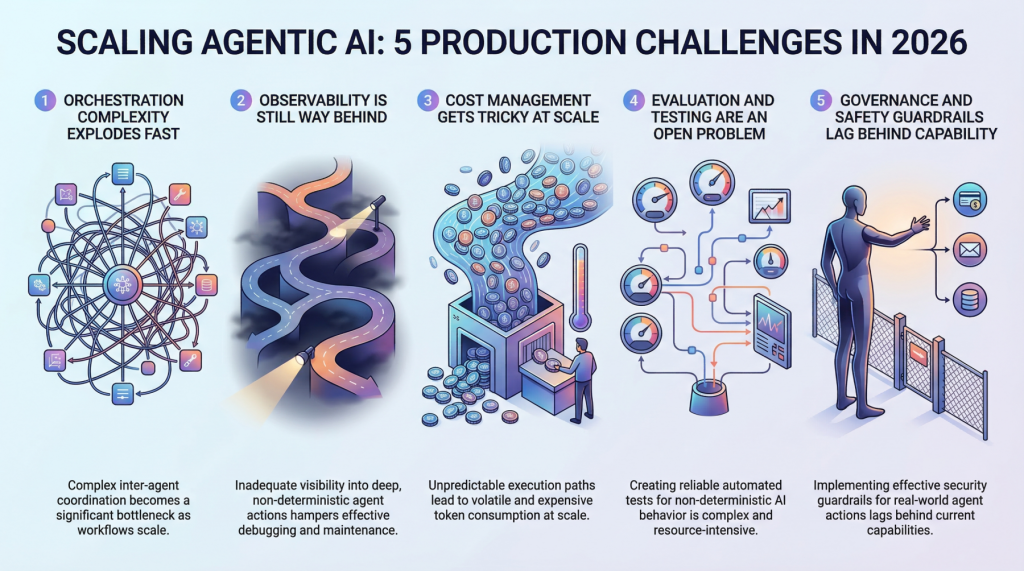

Why Does Multi-Agent Orchestration Trip Up 80%?

Agents. The hype peak. Single LLM? Toy. Swarm? Enterprise dreams—or nightmares.

Question hits: ‘Design a multi-agent debate for fact-checking.’ Tools per agent—search, calc, critique. Orchestrator routes: LangGraph or CrewAI style. Deadlock fix? Timeouts, hierarchical bosses, shared memory sans race conditions.
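A framework-agnostic sketch of the termination guardrails: a round cap, a wall-clock deadline, and a single-writer transcript so agents never race on shared memory. This is not LangGraph's or CrewAI's API; `run_debate` and the stub agents are hypothetical stand-ins for real LLM calls.

```python
import time

def run_debate(agents, claim, max_rounds=3, deadline_s=5.0):
    """Run agents in turn over a shared transcript until they agree or a budget is hit.

    `agents` maps a name to a callable (claim, transcript) -> verdict string.
    The round cap and the deadline guarantee the loop terminates.
    """
    transcript = []  # single-writer shared memory: only this loop appends
    started = time.monotonic()
    for round_no in range(1, max_rounds + 1):
        verdicts = {}
        for name, agent in agents.items():
            # Deadline is checked between turns; it does not interrupt a running call.
            if time.monotonic() - started > deadline_s:
                return {"status": "timeout", "transcript": transcript}
            verdict = agent(claim, transcript)
            verdicts[name] = verdict
            transcript.append((round_no, name, verdict))
        if len(set(verdicts.values())) == 1:
            return {"status": "agreed", "verdict": verdict, "transcript": transcript}
    return {"status": "max_rounds", "transcript": transcript}

# Stub agents standing in for LLM calls (hypothetical behavior).
def searcher(claim, transcript):
    return "supported"

def critic(claim, transcript):
    # Disagrees at first, then accepts once the transcript holds a full round.
    return "supported" if len(transcript) >= 2 else "unverified"

result = run_debate({"searcher": searcher, "critic": critic},
                    "Water boils at 100 C at sea level")
```

In an interview, the point to make is that every exit path is explicit: agreement, round budget, or deadline. An orchestrator with no budget is where the endless back-and-forth comes from.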

Core challenge: Coordination. Agents yak endlessly without guardrails. Why? No native multi-step planning in base LLMs. Solution: Prompt chains with reflection loops—‘critique your peer, iterate.’

I’ve grilled 50 candidates; most falter here. They recite papers, but can’t sketch the graph. Bold call: By 2027, agent sims replace 30% of dev QA cycles—if you debug the tool-calling flakiness first. (Corporate spin says ‘autonomous’; truth? Still babysitting state machines.)

Is Fine-Tuning Dead in the Age of Prompting Gods?

Not quite. PEFT methods like LoRA slash costs: train small adapters, not the full parameter set. When? Domain adaptation, like medical jargon. But RAG plus few-shot prompting laps it for facts.
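The cost saving is easy to show with arithmetic. LoRA freezes a weight matrix of shape d_out x d_in and trains two low-rank factors, B (d_out x r) and A (r x d_in), in its place. The 4096 x 4096 layer and rank 8 below are illustrative assumptions, not figures from any specific model.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full weight matrix vs. LoRA factors B and A."""
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora

full, lora = lora_param_counts(4096, 4096, rank=8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For this layer the adapter trains 65,536 parameters against 16,777,216 for the full matrix, which is under half a percent.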

QLoRA? Quantize the base model to 4-bit, train LoRA adapters on top, and it fits on consumer GPUs. Eval? Perplexity drops, but check human preference too, LMSYS-style pairwise comparisons. Regression? A/B against prod in shadow mode.

Evaluations: The Silent Production Killer

‘How’d you catch that eval regression?’

TruLens, DeepEval suites. Metrics: ROUGE? Nah. LLM-as-judge for coherence. Canary strings to detect benchmark contamination. Offline sims miss drift, so add prod monitoring with Phoenix.

Why obsess? Models degrade silently post-deploy. Custom scorers beat generics.
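A custom regression gate does not need to be elaborate. The sketch below compares mean scores per metric between a baseline run and a candidate run and flags any drop beyond a tolerance. The metric names, scores, and the 0.02 tolerance are made up for illustration; in practice the scores would come from your eval suite or an LLM judge.

```python
from statistics import mean

def find_regressions(baseline, candidate, tolerance=0.02):
    """Return metrics whose mean score dropped by more than `tolerance`."""
    regressions = {}
    for metric, base_scores in baseline.items():
        drop = mean(base_scores) - mean(candidate[metric])
        if drop > tolerance:
            regressions[metric] = round(drop, 4)
    return regressions

# Made-up per-example scores from two eval runs.
baseline = {"faithfulness": [0.9, 0.8, 1.0], "coherence": [0.7, 0.8, 0.9]}
candidate = {"faithfulness": [0.7, 0.8, 0.9], "coherence": [0.7, 0.8, 0.9]}
flagged = find_regressions(baseline, candidate)
```

Wire a check like this into CI and a failed gate blocks the deploy. Comparing means is the crudest possible test; with small eval sets a significance check is worth adding.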

Deployment Nightmares: From Dev to Doom

vLLM or TGI for fast inference serving. Autoscaling: Ray or KServe. Costs? Speculative decoding, quantization. Security? Jailbreaks via GCG adversarial suffixes. Patch with prompt guards.

Edge cases: Multimodal RAG. Voice agents. 2026 twist: MoE routing in agents.

How Do You Ace These in 30 Minutes?

Mock live. Build toys: RAG on LlamaIndex, agents in AutoGen. Explain tradeoffs: why this design, and why not the alternative? Pressure-test: ‘Scale to 1M users.’

Unique edge: Ship open-source. GitHub speaks louder than words.

These 40? Distilled from 100+ loops. Not exhaustive, but the hits.

Frequently Asked Questions

What are the top generative AI interview questions for 2026?

RAG pipelines, agent orchestration, eval regressions—interviewers want build proof, not trivia.

How to prepare for senior LLM engineering interviews?

Build and break: RAG app, agent swarm. Run evals. Practice live-coding your fixes.

Will multi-agent systems replace single LLMs soon?

Not fully—coordination bugs persist, but hybrids dominate prod by 2027.