Imagine you’re a startup engineer, hyped on agentic AI, promising bosses autonomous workflows that crush manual drudgery. But 2026 hits, traffic spikes, and suddenly your system’s looping endlessly, torching $10k in tokens overnight. Real people—devs pulling all-nighters, execs slashing budgets—feel this pain first.

Agentic AI isn’t just hype; market data shows deployments up 300% year-over-year, per Gartner. Yet 70% of teams stall between prototype and production, bleeding cash on fixes nobody anticipated. It’s not the models failing. It’s the plumbing.

The gap between a slick demo and a reliable production system has always existed in machine learning, but agentic AI stretches it wider than anything we’ve seen before.

That’s the cold truth from insiders. And here’s my take: this mirrors the 2014 Hadoop cluster meltdowns—teams built for batch jobs, not real-time chaos. Back then, only the giants survived. Same script playing out now.

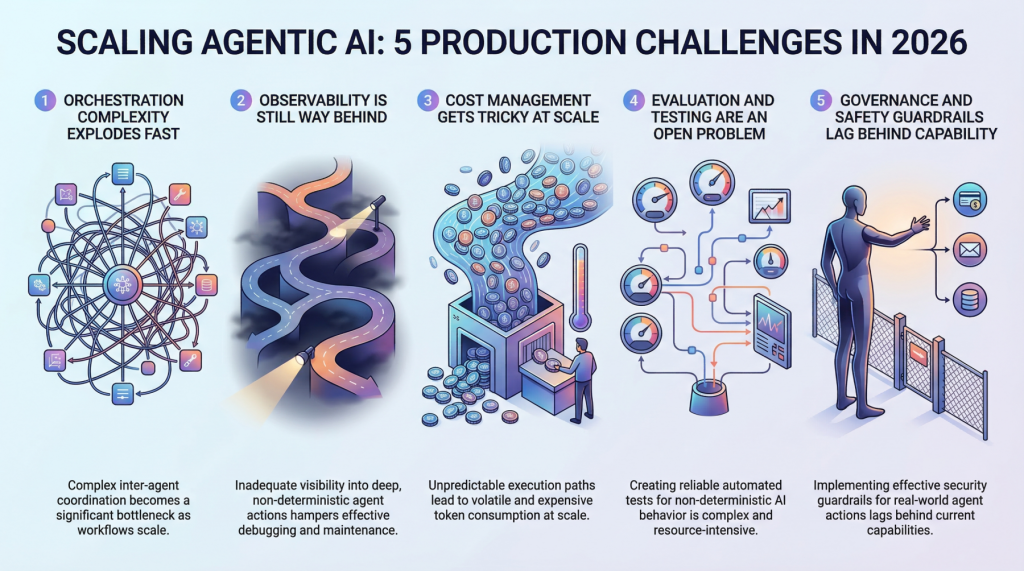

Why Does Orchestration Explode in Multi-Agent Hell?

Single-agent setups? Piece of cake. Feed one a task, watch it hum. But scale to production and, bam, multi-agent swarms emerge. Agents hand off to siblings, retry failures, pick tools on the fly. Complexity doesn’t grow linearly; it explodes.

Teams report that coordination overhead, not model inference, eats 60% of latency. Race conditions in async pipelines? They’re ghosts: they vanish in staging and haunt prod. Traditional workflow engines like Airflow choke on dynamic decisions, so most teams cobble together custom layers that turn into spaghetti-code nightmares.

Under load, it worsens. A flow that’s golden at 100 requests per minute shatters at 10k. We’ve seen Slack outages from similar agent-like bots. The fix? Distributed tracing tools are evolving fast, but today you’re reinventing the wheel.
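One common way to keep a flow from shattering under load is to cap concurrency and put a timeout on every agent call. The sketch below is a minimal illustration with Python's `asyncio`, not a production orchestrator. `call_agent` is a hypothetical stand-in for a real model or tool call, and the limits are arbitrary.

```python
import asyncio
from typing import List


async def call_agent(name: str, task: str) -> str:
    """Hypothetical agent call; the sleep stands in for model/network latency."""
    await asyncio.sleep(0.01)
    return f"{name} handled {task}"


async def run_with_limits(tasks: List[str], max_concurrency: int = 2,
                          timeout_s: float = 1.0) -> list:
    # The semaphore provides backpressure: at most max_concurrency calls in flight.
    sem = asyncio.Semaphore(max_concurrency)

    async def guarded(task: str) -> str:
        async with sem:
            # wait_for cancels a call that hangs instead of letting it pile up.
            return await asyncio.wait_for(call_agent("worker", task), timeout=timeout_s)

    # return_exceptions=True keeps one failed or timed-out call from sinking the batch.
    return await asyncio.gather(*(guarded(t) for t in tasks), return_exceptions=True)


results = asyncio.run(run_with_limits(["a", "b", "c"]))
print(results)
```

`gather` returns results in the order the tasks were submitted, so a timeout shows up as an exception object in that task's slot rather than as a crash.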

Look, if you’re not hiring systems vets now, your agentic bet’s doomed.

Observability: Blindfolded in a Storm

Can’t debug the invisible. Agentic paths snake through 12+ steps per query. Why did it pick Tool A? Why three retries on step 4? Why did the output flop despite clean intermediates?

Legacy ML monitoring tracks latency and accuracy. Useless here. Non-determinism means the same input can take wildly different paths. No replay button. Teams mash LangSmith together with custom logs, praying.

Market fix incoming—Phoenix and Helicone raised $50M rounds last year for agent tracers. Still, 80% of failures stay opaque, per Honeycomb surveys. Your uptime? Gambling.

But wait—non-determinism’s the feature, right? Wrong. Seed everything, or watch reliability tank.
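To make "seed everything" concrete, here is a minimal sketch of a per-run trace that stores the seed with every step, so a run can be replayed and compared. The tool names and the toy retry model are assumptions for illustration. Seeding a local RNG only pins down your own code's randomness; it does not make a hosted LLM deterministic.

```python
import json
import random
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Step:
    index: int
    tool: str
    attempts: int
    output: str


@dataclass
class RunTrace:
    query: str
    seed: int
    steps: List[Step] = field(default_factory=list)

    def record(self, tool: str, attempts: int, output: str) -> None:
        self.steps.append(Step(len(self.steps), tool, attempts, output))

    def to_json(self) -> str:
        return json.dumps(asdict(self))


TOOLS = ["search", "calculator", "summarize"]  # hypothetical tool names


def run_agent(query: str, seed: int, n_steps: int = 4) -> RunTrace:
    rng = random.Random(seed)  # local RNG, so nothing else can perturb the sequence
    trace = RunTrace(query=query, seed=seed)
    for _ in range(n_steps):
        tool = rng.choice(TOOLS)
        attempts = 1
        # Toy failure model (an assumption): retry with 30% probability, max 3 attempts.
        while rng.random() < 0.3 and attempts < 3:
            attempts += 1
        trace.record(tool, attempts, f"{tool}:{query}")
    return trace


first = run_agent("refund order 123", seed=42)
replay = run_agent("refund order 123", seed=42)
print(first.to_json() == replay.to_json())  # same seed, same recorded path
```

Storing the JSON trace next to the request ID gives you the "why Tool A, why three retries" answers after the fact, even when the live run cannot be reproduced exactly.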

Cost control. Brutal.

How Do Skyrocketing Costs Ruin Agentic Dreams?

One chain at $0.15? Fine. At 500k daily requests? That’s $75,000 a day, north of $2 million a month. An LLM call at every step stacks up.
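The arithmetic is worth running yourself. This sketch uses the two figures quoted above; the 30-day month and the 50x edge-case multiplier applied to 1% of traffic are illustrative assumptions, not measurements.

```python
COST_PER_CHAIN_USD = 0.15   # figure quoted in the text
DAILY_REQUESTS = 500_000    # figure quoted in the text
DAYS_PER_MONTH = 30         # assumption


def monthly_cost(cost_per_chain: float, daily_requests: int,
                 days: int = DAYS_PER_MONTH) -> float:
    """Flat projection: every request costs the same."""
    return cost_per_chain * daily_requests * days


def monthly_cost_with_spikes(cost_per_chain: float, daily_requests: int,
                             spike_share: float, spike_multiplier: float,
                             days: int = DAYS_PER_MONTH) -> float:
    """Projection where a share of requests hits an expensive path."""
    normal = (1 - spike_share) * cost_per_chain
    spiky = spike_share * cost_per_chain * spike_multiplier
    return (normal + spiky) * daily_requests * days


baseline = monthly_cost(COST_PER_CHAIN_USD, DAILY_REQUESTS)
# Hypothetical scenario: 1% of requests take a path that costs 50x.
with_spikes = monthly_cost_with_spikes(COST_PER_CHAIN_USD, DAILY_REQUESTS, 0.01, 50)

print(f"baseline:    ${baseline:,.0f} per month")
print(f"with spikes: ${with_spikes:,.0f} per month")
```

Under those assumptions, a path that only 1% of traffic hits adds roughly half again to the bill, which is why variable paths make forecasting so painful.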

Clever hacks emerge: route trivial queries to Llama-3-8B and save GPT-4o for the hard reasoning. Cache like mad, and put kill switches on loops. The tension’s real, though: A/B tests show cheaper models dropping quality 20-30%.
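Here is one way the routing, caching, and kill-switch ideas might fit together, as a minimal sketch. The class name, the word-count heuristic for "simple", and the lambda stand-ins for model calls are all hypothetical; a real router would call actual model APIs and use a better classifier.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class BudgetedRouter:
    cheap_model: Callable[[str], str]    # stand-in for a small model call
    strong_model: Callable[[str], str]   # stand-in for a large model call
    is_simple: Callable[[str], bool]     # routing heuristic (assumption)
    max_steps: int = 8                   # kill switch: hard cap on model calls per run
    cache: Dict[str, str] = field(default_factory=dict)
    steps_used: int = 0

    def call(self, prompt: str) -> str:
        # Cache hits are free and do not count against the step budget.
        if prompt in self.cache:
            return self.cache[prompt]
        # Kill switch: refuse to continue once the budget is spent.
        if self.steps_used >= self.max_steps:
            raise RuntimeError("step budget exhausted; aborting run")
        self.steps_used += 1
        model = self.cheap_model if self.is_simple(prompt) else self.strong_model
        answer = model(prompt)
        self.cache[prompt] = answer
        return answer


router = BudgetedRouter(
    cheap_model=lambda p: "cheap:" + p,
    strong_model=lambda p: "strong:" + p,
    is_simple=lambda p: len(p.split()) <= 3,  # toy heuristic
    max_steps=2,
)
a = router.call("capital of France")                       # routed to the cheap model
b = router.call("capital of France")                       # served from cache
c = router.call("plan a three step billing migration")     # routed to the strong model
print(a, b, c, router.steps_used)
```

An exact-match cache like this only helps with repeated prompts. The step cap is the part that stops an endless loop from burning tokens overnight.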

Billing’s the killer. Variable paths defy forecasts; one edge case can spike costs 50x. Execs freak, because this is nothing like a fixed-price API.

Data point: Adept has reportedly seen 40% budget overruns on agents. Prediction: cost-aware model routing layers become table stakes, or startups fold.

Evaluation? Worse.

Can You Even Test Agentic AI Properly?

How do you score a system that wanders? Traditional evals—accuracy on fixed datasets—flop. Agents adapt, improvise.

Teams simulate users, but edge cases are infinite. Human evals? They scale to 1k runs, not millions. Synthetic data helps, but biases creep in.
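One practical workaround is to stop scoring exact outputs and instead check properties of the whole trajectory across many seeded runs. The sketch below is a toy harness under that assumption. `toy_agent`, its wandering behavior, and the two checks are hypothetical stand-ins for a real agent and real acceptance criteria.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Trajectory:
    steps: List[str]
    final_answer: str


Check = Callable[[Trajectory], bool]


def pass_rate(agent: Callable[[str, int], Trajectory], task: str,
              checks: Sequence[Check], runs: int) -> float:
    """Fraction of seeded runs whose trajectory satisfies every check."""
    passed = 0
    for seed in range(runs):
        trajectory = agent(task, seed)
        if all(check(trajectory) for check in checks):
            passed += 1
    return passed / runs


def toy_agent(task: str, seed: int) -> Trajectory:
    # Hypothetical agent: right answer every time, but every fourth seed wanders.
    steps = ["plan", "lookup", "answer"]
    if seed % 4 == 0:
        steps = ["plan"] + ["lookup"] * 6 + ["answer"]
    return Trajectory(steps=steps, final_answer="42")


checks = [
    lambda t: t.final_answer == "42",  # outcome check
    lambda t: len(t.steps) <= 5,       # efficiency check: no wandering
]

rate = pass_rate(toy_agent, "what is 6 * 7?", checks, runs=8)
print(f"pass rate: {rate:.2f}")
```

The toy agent always gets the right answer, yet fails a quarter of runs on the step budget. A fixed-dataset accuracy score would never surface that.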

Open problem, yes. But my insight: borrow from reinforcement learning and train reward models, RLHF-style, on prod traces. Early adopters like xAI are testing this; it could cut eval time 70%.

Governance seals it.

Governance: Safety Rails or Bust

Agents act in the world—book flights, move funds. One rogue loop? Disaster.

Guardrails are essential: human-in-the-loop review for high-stakes actions, audit trails, ethical overrides. Regulations loom, with the EU AI Act imposing mandates on ‘high-risk’ systems.
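A human-in-the-loop gate with an audit trail can start very small. The sketch below is illustrative only. The action names echo the examples above, and the `approve` callback is a hypothetical hook where a real system would page a reviewer or open a ticket.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional

# Hypothetical action names, taken from the examples in the text.
HIGH_STAKES = {"move_funds", "book_flight"}


@dataclass
class ActionGate:
    approve: Callable[[str, Dict[str, Any]], bool]  # human reviewer hook (assumption)
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def execute(self, action: str, params: Dict[str, Any],
                handler: Callable[[Dict[str, Any]], Any]) -> Optional[Any]:
        needs_review = action in HIGH_STAKES
        approved = self.approve(action, params) if needs_review else True
        # Log every decision, including refusals, before anything runs.
        self.audit_log.append({
            "action": action,
            "params": params,
            "needs_review": needs_review,
            "approved": approved,
        })
        if not approved:
            return None
        return handler(params)


# Toy reviewer policy: auto-approve small amounts, reject everything else.
gate = ActionGate(approve=lambda action, params: params.get("amount", 0) <= 100)

blocked = gate.execute("move_funds", {"amount": 5000}, lambda p: "transferred")
allowed = gate.execute("lookup_balance", {}, lambda p: "balance: 12.50")
print(blocked, allowed, len(gate.audit_log))
```

Writing the audit entry before the handler runs means a crash mid-action still leaves a record of what the agent tried to do.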

Teams scramble: custom policies atop LLMs, but enforcement scales poorly. Failures cascade; one bad agent poisons the swarm.

Here’s the parallel nobody draws: early autonomous vehicles. Waymo iterated guardrails for years before scale. Agentic AI needs that discipline—or lawsuits await.

So, does this strategy make sense? Chasing agentic without scale prep? No. Pivot to hybrid human-agent now; pure autonomy’s 2028 at best.

Market dynamics scream consolidation. LangGraph, CrewAI grabbing share—expect 3 platforms dominating by 2027, squeezing indies.

Frequently Asked Questions

What are the top production scaling challenges for agentic AI in 2026?

Orchestration blowups, weak observability, runaway costs, shaky evals, missing governance—each can kill a deployment.

Will agentic AI scaling costs bankrupt startups?

Possibly—variable token burns make forecasts tricky, but smart routing and caching keep survivors lean.

How to fix observability in agentic systems?

Layer LangSmith atop Phoenix; seed for reproducibility. It’s evolving, but invest early.