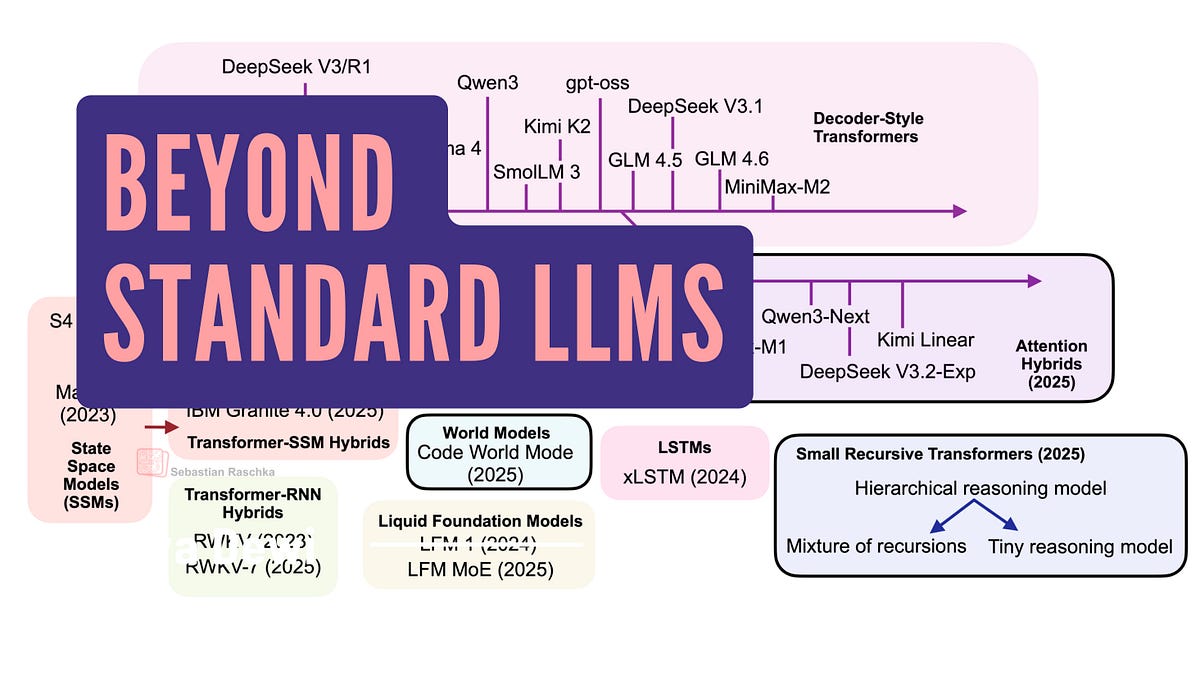

Large Language Models

Linear Attention Hybrids Challenge Transformer Grip on Open LLMs

Transformers still top the leaderboards, but alternatives such as linear attention hybrids promise to sidestep the quadratic cost of attention on long sequences. Here's why they're worth watching, and where they fall short.