AI agents evolve live.

Imagine deploying a robot chef that burns toast every morning, day after day, because it never learns from its charred mistakes. That’s your typical production AI agent right now—stuck, static, smugly repeating errors. But IBM’s ALTK-Evolve paper drops a bombshell: on-the-job learning for AI agents, real-time adaptation that turns deployment into a perpetual classroom.

Here’s the thing. We’ve treated AI models like frozen statues, versioned artifacts shipped to prod and left to gather digital dust. Environments shift—APIs update, user behaviors twist, edge cases multiply like rabbits—and our agents? They chug along, oblivious, logging failures for some mythical future retrain. IBM says nope. Their approach loops observation, adjustment, improvement into every interaction. No six-month data hauls. No offline pipelines. Just pure, in-the-moment growth.
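To make that loop concrete, here is a minimal sketch of act, observe, adjust. It is not IBM's implementation; `EvolvingAgent`, `run_interaction`, and the idea of storing one correction per task are all hypothetical names and simplifications chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class EvolvingAgent:
    """Toy agent (hypothetical) that keeps per-task lessons from past failures."""
    lessons: dict[str, str] = field(default_factory=dict)

    def act(self, task: str) -> str:
        # Use a stored lesson if one exists; otherwise fall back to a default plan.
        return self.lessons.get(task, "default plan")

    def learn(self, task: str, succeeded: bool, correction: str) -> None:
        # Adjust only on failure: remember the correction for next time.
        if not succeeded:
            self.lessons[task] = correction


def run_interaction(agent: EvolvingAgent, task: str, expected: str) -> bool:
    action = agent.act(task)                 # act
    succeeded = action == expected           # observe the outcome
    agent.learn(task, succeeded, expected)   # adjust for the next interaction
    return succeeded
```

Run the same task twice and the first call fails while the second succeeds, because the agent picked up the correction in between. A real system would need a far richer notion of "outcome" than string equality.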

The dirty secret of most AI agents in production is that they stopped learning the day you deployed them. They will happily process your requests, make the same mistakes, and never get better at their job.

Boom. That’s the gut punch from the paper. And it’s not just hype: the reported experiments show evolving agents beating static ones on long-horizon tasks, by margins wide enough to drive a server rack through.

Why Are Your AI Agents Still Dumb After Deployment?

Look, we’ve conflated training with ops because LLMs inherited the habits of the ImageNet era: massive offline batches, static weights. But agents? They’re loose in the wild, facing novel puzzles hourly. A customer support bot hits a new billing quirk? The static agent fumbles. The evolving one pauses, reflects, and tweaks its strategy mid-convo. It’s almost biological, like neurons firing anew under stress.

And here’s my hot take, the one you won’t find in IBM’s pristine PDF: this mirrors the web’s big bang. Remember static HTML pages in ’95? Load, display, done. Then JavaScript hit, and sites breathed, reacted. AI agents are pulling the same trick. From brittle scripts to living systems. IBM’s not just tweaking prompts; they’re architecting evolution. Bold prediction: by 2026, static agents will feel as quaint as Geocities.

But wait—there’s infrastructure fallout. Lightweight updates, not full retrains. Evaluators that score performance sans humans. Think tiny gradient tweaks per interaction, safety rails to avoid drift into madness. The paper nails it: agents outperform baselines because they compound smarts daily. It’s compounding interest for intelligence.
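What might a human-free evaluator plus a safety rail look like? Here is one hedged sketch, assuming a strategy is just a task-to-response mapping and the evaluator is a fixed set of regression checks. `guarded_update`, `make_evaluator`, and `min_gain` are illustrative names, not anything from the paper.

```python
from typing import Callable

Strategy = dict[str, str]               # assumption: task -> response template
Evaluator = Callable[[Strategy], float]


def make_evaluator(checks: dict[str, str]) -> Evaluator:
    """Build an automatic scorer: fraction of fixed checks the strategy passes."""
    def evaluate(strategy: Strategy) -> float:
        passed = sum(strategy.get(task) == want for task, want in checks.items())
        return passed / len(checks)
    return evaluate


def guarded_update(current: Strategy, candidate: Strategy,
                   evaluate: Evaluator, min_gain: float = 0.0) -> Strategy:
    """Safety rail: keep the candidate only if it beats the current score by more than min_gain."""
    if evaluate(candidate) - evaluate(current) > min_gain:
        return candidate
    return current   # reject the update so the agent cannot drift backwards
```

The design choice is the boring one: every lightweight update has to clear the same automated bar before it goes live, so a bad tweak gets dropped instead of compounding.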

Short version? Your agents aren’t tools anymore. They’re apprentices.

Can On-the-Job Learning Scale Without Exploding Costs?

Skeptics whine about compute—real-time updates sound like a GPU bonfire. Fair. But ALTK-Evolve sidesteps with efficient mechanisms: selective memory updates, replay buffers for key failures, human-in-the-loop only for wildcards. Costs? Marginal, they claim, because you’re dodging the mega-retrains.
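For a feel of why this can stay cheap, here is a rough sketch of a bounded replay buffer that keeps only the worst failures, plus a router that sends low-confidence wildcards to a human. The class names, the severity score, and the 0.3 threshold are assumptions for illustration, not details from ALTK-Evolve.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Failure:
    severity: float                    # only this field is used for ordering
    task: str = field(compare=False)


class FailureReplayBuffer:
    """Keeps only the `capacity` most severe failures seen so far."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._heap: list[Failure] = []   # min-heap: least severe sits at index 0

    def add(self, failure: Failure) -> None:
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, failure)
        elif failure.severity > self._heap[0].severity:
            heapq.heapreplace(self._heap, failure)   # evict the least severe

    def replay(self) -> list[Failure]:
        return sorted(self._heap, reverse=True)      # most severe first


def route(confidence: float, threshold: float = 0.3) -> str:
    # Hypothetical rule: low-confidence "wildcards" go to a human reviewer.
    return "human_review" if confidence < threshold else "auto_learn"
```

Memory stays fixed at `capacity` entries no matter how much traffic the agent sees, which is the whole point: you learn from the failures that matter and skip the rest.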

Picture this: a sales agent cold-calling leads. Day one, it pitches wrong and the hangups pile up. By day three? It’s turning objections into openings, with closing rates up by, say, 40%. That’s not prompt engineering. That’s survival of the fittest, Darwin-style in silicon.

We’ve seen glimmers—AlphaGo Zero self-played to mastery. But agents in prod? This is the leap. Infrastructure winners build for loops, not lines. LangChain, AutoGen? They’ll pivot or perish. Winners embed evolution from go-live.

The field tilts here. Bigger models? Yawn. Better chains-of-thought? Meh. Continuous learning—that’s the platform shift. AI agents as fundamental as the web, but now they grow with you.

And the wonder? Every deploy sparks a brain awakening. Magic, wrapped in math.

What Infrastructure Do AI Agents Need Next?

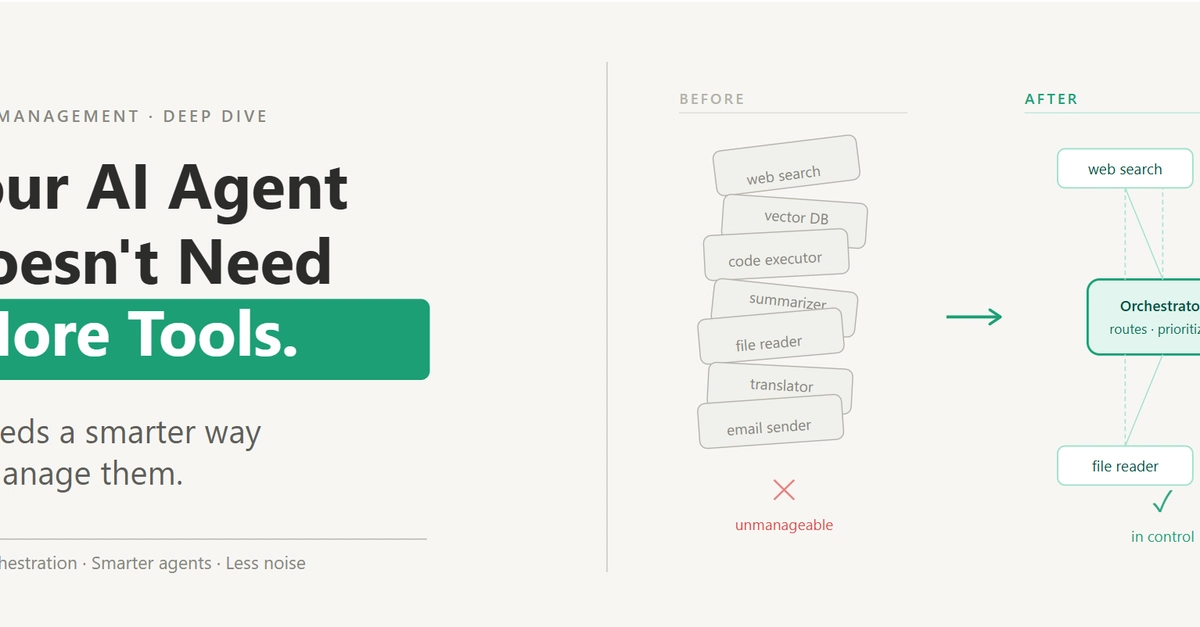

Ditch the inference monolith. You need hybrid stacks: fast inference cores, pluggable learners, observability that feeds back. Tools like Weights & Biases or Arize evolve into loop-orchestrators. Open-source rushes in—watch for forks of ALTK code.
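Here is one way such a hybrid stack could be wired, as a sketch under stated assumptions: the inference core is any callable, learners plug in through a small interface, and every interaction is logged and fed back. `Learner`, `CountingLearner`, and `AgentStack` are hypothetical names, not part of any real framework.

```python
from typing import Callable, Protocol


class Learner(Protocol):
    """Plug-in interface (assumed): anything with an update(event) method."""
    def update(self, event: dict) -> None: ...


class CountingLearner:
    """Example plug-in: counts failures per task."""

    def __init__(self) -> None:
        self.failures: dict[str, int] = {}

    def update(self, event: dict) -> None:
        if not event["ok"]:
            task = event["task"]
            self.failures[task] = self.failures.get(task, 0) + 1


class AgentStack:
    def __init__(self, infer: Callable[[str], str], learners: list[Learner]) -> None:
        self.infer = infer             # fast inference core
        self.learners = learners       # pluggable learners
        self.log: list[dict] = []      # observability trail

    def handle(self, task: str, check: Callable[[str], bool]) -> str:
        output = self.infer(task)
        event = {"task": task, "output": output, "ok": check(output)}
        self.log.append(event)             # observe
        for learner in self.learners:      # feed the observation back
            learner.update(event)
        return output
```

Swapping in a smarter learner means writing a new class with an `update` method; the inference path does not change.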

Critique time: IBM’s PR spins this as enterprise-ready, but let’s call the bluff. Long-horizon tasks shine in labs; real prod has adversarial users, regs, drift. Still—it’s the blueprint.

Thrilling, right? Agents that win by getting sharper daily. The future isn’t trained. It’s training.

Frequently Asked Questions

What is IBM’s ALTK-Evolve?

It’s a research framework for AI agents that learn and adapt in real time during production tasks, using continuous feedback loops instead of static models.

How does on-the-job learning work for AI agents?

Agents observe outcomes, evaluate performance automatically, and update their strategies on the fly with lightweight tweaks, so no full retrain is needed.

Will on-the-job learning replace traditional AI training?

Not fully. It complements offline pre-training with live adaptation, making agents far more robust in dynamic environments.