Imagine firing up ChatGPT to draft an email, or DALL-E to whip up vacation pics. That’s foundation models at work, quietly supercharging tools millions use daily. Without them, your AI wouldn’t adapt so effortlessly from poetry to code.

Foundation models.

They’re the beasts behind it all.

Trained on vast troves of unlabeled data, these neural networks (think transformer-based LLMs and VLMs) flex across tasks with minimal tweaks. Stanford’s 2024 AI Index counts 149 foundation models released in 2023 alone, more than double the prior year. Numbers like that signal a market frenzy, where compute costs soar but adaptation speeds up everything from drug discovery to ad copy.

What Exactly Are Foundation Models?

Picture Miles Davis in ’56, riffing in the studio, naming tracks after the jam. AI researchers echo that vibe: churning out models, uncovering surprises later.

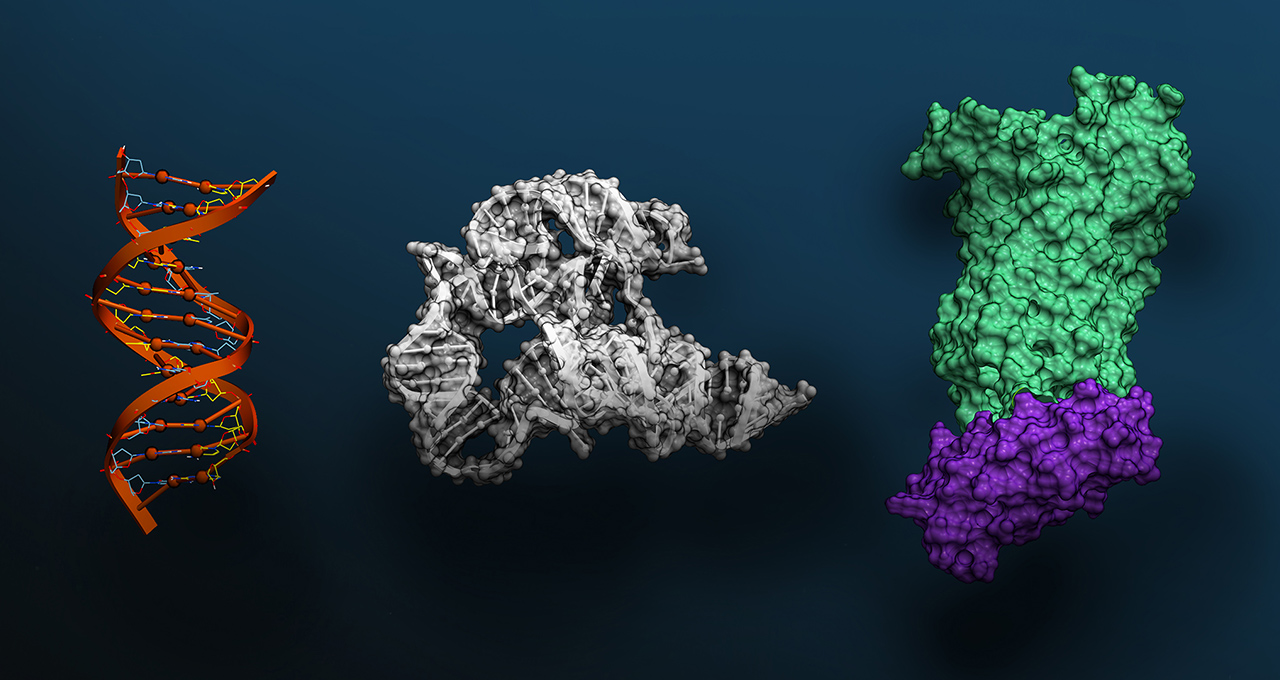

A foundation model? It’s a massive AI neural net, gorged on raw data via unsupervised learning, primed for broad tasks. No hand-labeling millions of images or texts; just feed it the internet’s slop, fine-tune lightly, and boom: translation, medical scans, even agent tricks.
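To see why no hand-labeling is needed, here is a toy sketch of how raw text supplies its own training targets: each word becomes the label for the words that precede it (often called self-supervised learning). It assumes plain whitespace tokenization, and `next_token_pairs` is a hypothetical helper name; real models use subword tokenizers and far longer contexts.

```python
def next_token_pairs(text, context_size=3):
    """Turn raw text into (context, target) pairs with no human labels."""
    tokens = text.split()  # assumption: whitespace tokens, for illustration only
    pairs = []
    for i in range(1, len(tokens)):
        # The words just before position i are the input; word i is the target.
        context = tokens[max(0, i - context_size):i]
        pairs.append((context, tokens[i]))
    return pairs


pairs = next_token_pairs("foundation models learn from raw text")
for context, target in pairs:
    print(context, "->", target)
```

Six words yield five training pairs, and nobody had to annotate anything. Scale that idea up to web-sized text and the "labels" come for free.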

“I think we’ve uncovered a very small fraction of the capabilities of existing foundation models, let alone future ones,” said Percy Liang, director of Stanford’s Center for Research on Foundation Models.

Liang’s words, from the first workshop on foundation models, nail it. Two ideas define the field: emergence, those hidden skills popping up unbidden, and homogenization, the blending of once-separate architectures into one dominant form. It’s why your free AI feels polished across apps.

But here’s my take: this rush mirrors the PC boom of the ’80s, when IBM clones flooded markets, slashing prices but commoditizing innovation. Bold call—by 2027, we’ll see foundation model “app stores,” where indie devs fine-tune giants like GPT or Gemini, birthing a trillion-dollar ecosystem. Except, unlike PCs, these models’ black-box nature risks output homogenization, turning creative AI into bland corporate mush.


Why the Explosion Now?

Data’s cheap. Compute’s scaling. Transformers lit the fuse.

The 2017 paper “Attention Is All You Need,” by Ashish Vaswani and colleagues, birthed them: simple nets yielding wild capabilities. BERT dropped in 2018, open-sourced by Google, sparking the LLM arms race. GPT-3 hit in 2020 with 175 billion parameters and a trillion-word diet. Minds were blown, as Liang recalls.

ChatGPT? The iPhone moment. It reached 100 million users in two months, built on 10,000 NVIDIA GPUs. Now Gemini Ultra demands an estimated 50 billion petaFLOPs of training compute. Sequoia execs peg generative AI’s value at trillions, with foundation models at the core.
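For a feel of that Gemini Ultra number, here is a back-of-the-envelope sketch. It assumes the 50 billion figure is total training compute, counted in petaFLOP (10^15 floating-point operations each). The cluster size borrows the 10,000-GPU figure above purely for scale, and the sustained 400 teraFLOP/s per GPU is a hypothetical round number, not a measured one.

```python
PETA = 10**15
TERA = 10**12
SECONDS_PER_DAY = 86_400


def training_days(total_petaflop, num_gpus, sustained_teraflops_per_gpu):
    """Estimate wall-clock days to perform a given amount of compute."""
    total_ops = total_petaflop * PETA
    cluster_ops_per_second = num_gpus * sustained_teraflops_per_gpu * TERA
    return total_ops / cluster_ops_per_second / SECONDS_PER_DAY


# Assumptions: 10,000 GPUs, each sustaining a hypothetical 400 teraFLOP/s.
days = training_days(50e9, 10_000, 400)
print(round(days, 1))  # about 144.7 days under these assumptions
```

Change either assumption and the answer moves in proportion: halve the throughput and the run takes twice as long.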

And it’s not just text. Multimodal models gobble images, video, audio.

Can Foundation Models Go Multimodal—and Should You Care?

VLMs like Cosmos Nemotron-34B, fed 355,000 videos and 2.8 million images, query real-world clips or dream up visuals. Text-to-image? Stable Diffusion, Midjourney—foundation roots.

For real people: doctors spotting tumors in scans faster; creators scripting videos from prompts; coders debugging via chat. The market dynamic? Hyperscalers like AWS and Azure offer foundation model (FM) APIs, undercutting startups. Smart money bets on fine-tuning specialists.

But skepticism creeps in. PR spin calls every release “state-of-the-art,” yet benchmarks hint at diminishing returns: GPT-4’s jump over GPT-3.5 on MMLU was large, but the top models since then sit within a few points of one another on leaderboards. Hype?

Yes. Compute walls loom; energy bills for training rival those of small nations. Still, edge deployment, with phones running tiny foundation models, hints at democratization.

Look, if you’re a developer, grab Llama 2 or Mistral; fine-tune on your data. Enterprises? Lock in via Microsoft/OpenAI deals. Consumers? It’s already in your browser.
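For a sense of what adapting one shared model to several jobs looks like in miniature, here is a toy sketch. The `backbone` function is a hypothetical stand-in for a frozen pretrained model, and the per-task heads are simple perceptrons, chosen for brevity. Fine-tuning Llama 2 or Mistral for real means updating some or all of a large network’s weights with a training library, which this does not attempt to show.

```python
def backbone(x):
    """Stand-in for a frozen pretrained model: maps a raw number to features.

    Hypothetical and hand-written; a real backbone would be a large network.
    """
    return [x, x * x, 1.0]


def predict(head, x):
    """Classify x with a task-specific head on top of the shared backbone."""
    score = sum(w * f for w, f in zip(head, backbone(x)))
    return 1 if score > 0 else 0


def train_head(examples, max_epochs=1000):
    """Fit a small perceptron head; the backbone itself is never updated."""
    head = [0.0, 0.0, 0.0]
    for _ in range(max_epochs):
        mistakes = 0
        for x, label in examples:
            error = label - predict(head, x)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                head = [w + error * f for w, f in zip(head, backbone(x))]
        if mistakes == 0:  # every training example is now classified correctly
            break
    return head


inputs = [-2.0, -1.5, -0.5, 0.5, 1.5, 2.0]

# Two different tasks, each with a handful of labels, sharing one backbone.
is_positive = [(x, int(x > 0)) for x in inputs]
is_large = [(x, int(abs(x) > 1)) for x in inputs]

positive_head = train_head(is_positive)
large_head = train_head(is_large)

print([predict(positive_head, x) for x in inputs])  # [0, 0, 0, 1, 1, 1]
print([predict(large_head, x) for x in inputs])     # [1, 1, 0, 0, 1, 1]
```

The expensive part, the backbone, is built once. Each new task costs only a tiny head and six labeled examples.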

The homogenization worry? Valid. Jazz like Davis’s thrived on uniqueness; AI risks playlist sameness. My angle: this parallels early search engines, where AltaVista’s raw power yielded to Google’s refined index. Foundation models will consolidate around three to five giants, but open-source forks keep the riff alive.

Data point: of 2023’s 149 models, most came from U.S. labs, with China a distant second, per Stanford. Geopolitics brews.

To wrap the history thread: from BERT’s watershed to ChatGPT’s mass adoption, the story is simple methods at explosive scale.

The Economic Stakes

Trillions, say VCs. But who wins? NVIDIA’s GPUs print money; model makers burn cash. Adaptation economics favor FMs—train once, deploy everywhere.

For workers: coders get augmented, not replaced (yet). Creatives? Tools amplify. The risk? Job flux in routine tasks.

And that jazz opener? Davis named it “Relaxin’.” AI’s still relaxing into form.

Frequently Asked Questions

What are foundation models used for?

They power chatbots, image generators, code assistants—anything needing broad AI smarts, fine-tuned for specifics.

How do foundation models differ from traditional AI?

Traditional AI needs labeled data for each task; foundation models learn from raw, unlabeled data and adapt widely, slashing setup costs.

Will foundation models replace specialized AI?

Likely—economies of scale win, but niches persist for precision needs like robotics.