What if your trusty Postgres could outrun the vector database sprinters you’ve been hyped to chase?

Vector database performance isn’t just about raw speed—it’s the hidden tradeoffs in architecture, cost, and control that bite you later. I’ve torn into the benchmarks, run my own tests echoing the originals, and yeah, pgvector’s rise feels like Postgres devouring yet another niche (remember when it ate JSON from NoSQL with a shrug?).

Look, every RAG builder hits this wall: do we bolt on a fancy vector store, or hack it into what we’ve got? The original tests pit pgvector against Pinecone, Qdrant, Weaviate, and Milvus at 1M vectors and 1536 dimensions, the output size of OpenAI’s text-embedding-3-small. pgvector’s HNSW index? ~5ms p50 latency. Qdrant edges it at 3ms. Pinecone serverless lags at 12ms. But hold up.

Why Does Filtered Search Flip the Script?

Unfiltered queries? Qdrant shines. Add metadata filters, like ‘shoes only’ in a product search, and pgvector holds steady with smart indexing. Pinecone? Latency spikes. And filtered queries are what real-world traffic actually looks like.

“pgvector is faster than most people expect. The ‘Postgres is slow for vectors’ narrative comes from the IVFFlat index era. With HNSW indexes (available since pgvector 0.5.0), pgvector matches or beats dedicated vector databases at 1M scale.”

That’s straight from the source. And Supabase’s own runs? pgvector topped Qdrant at 99% accuracy on equal hardware. The why: Postgres uses its battle-tested storage engine, no reinventing the wheel.
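If you haven’t touched pgvector since the IVFFlat days, the HNSW setup is short. Here’s a minimal sketch that only builds the SQL as strings so you can eyeball it. The `items` table and `embedding` column are hypothetical names, `m = 16` and `ef_construction = 64` are pgvector’s documented defaults, the `ef_search` value of 100 is an arbitrary starting point rather than a setting from the benchmarks, and the `%s` placeholder assumes a psycopg-style driver.

```python
def hnsw_statements(table="items", column="embedding", dims=1536,
                    m=16, ef_construction=64, ef_search=100):
    """Return the SQL needed to set up an HNSW index on a pgvector column.

    Table and column names are illustrative, not taken from the benchmarks.
    """
    return [
        "CREATE EXTENSION IF NOT EXISTS vector;",
        f"CREATE TABLE IF NOT EXISTS {table} "
        f"(id bigserial PRIMARY KEY, {column} vector({dims}));",
        # HNSW index using cosine distance; m and ef_construction are build-time knobs.
        f"CREATE INDEX ON {table} USING hnsw ({column} vector_cosine_ops) "
        f"WITH (m = {m}, ef_construction = {ef_construction});",
        # Query-time knob: a larger candidate list raises recall and latency.
        f"SET hnsw.ef_search = {ef_search};",
    ]


def knn_query(table="items", column="embedding", k=5):
    """Return a k-nearest-neighbour query; <=> is pgvector's cosine distance operator."""
    return f"SELECT id FROM {table} ORDER BY {column} <=> %s::vector LIMIT {int(k)};"


statements = hnsw_statements()
query = knn_query(k=5)

for stmt in statements + [query]:
    print(stmt)
```

Raising `hnsw.ef_search` trades latency for recall, which is the knob the accuracy numbers later in this piece depend on.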

Pinecone’s serverless pitch—zero ops, just API—sounds dreamy. But those p95 latencies at 48ms? That’s your user’s tab freezing mid-search. Their pod tiers zip faster, sure, but costs explode. Vendor spin calls it ‘convenience’; I’d call it a tax on laziness.
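The gap between a 12ms p50 and a 48ms p95 is easier to feel with numbers in hand. Here’s a rough sketch of how those two figures fall out of raw timings, using the nearest-rank method (one of several percentile conventions). The latency samples are invented to produce that shape; they are not data from any of the benchmarks.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


# Hypothetical query latencies in milliseconds: mostly quick, with a slow tail.
latencies_ms = [8, 9, 10, 10, 11, 11, 11, 12, 12, 12,
                13, 13, 14, 15, 16, 18, 22, 30, 48, 95]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(f"p50={p50}ms p95={p95}ms")
```

Half the queries finish in 12ms or less, yet one in twenty takes 48ms or more. That tail is what users notice.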

Qdrant, Rust-built speed demon, crushes pure ANN benchmarks. Filtered? Still tops. Yet self-hosting means DevOps drag—clusters, scaling, oh joy.

Recall matters more than milliseconds.

Tune for accuracy, and pgvector hits 98.5%—near Milvus’s 99%. Pinecone? Stuck at 95%, no HNSW knobs to twist. “Pinecone doesn’t support the ability to tune your index,” they admit. Dealbreaker for precision-hungry apps.
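Those recall percentages all come from the same kind of measurement: run an exact brute-force search, run the approximate index, and count the overlap. A minimal sketch of that arithmetic follows; the toy vectors and the "approximate" result list are made up for illustration, and this is not the benchmark harness itself.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity; smaller means closer."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def exact_top_k(query, vectors, k):
    """Brute-force ground truth: rank every stored vector by distance to the query."""
    ranked = sorted(vectors, key=lambda vid: cosine_distance(query, vectors[vid]))
    return ranked[:k]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true top-k that the approximate search returned."""
    exact = set(exact_ids)
    return len(exact & set(approx_ids)) / len(exact)


# Toy data (hypothetical): five 2-d vectors keyed by id.
vectors = {
    "a": [1.0, 0.0],
    "b": [0.9, 0.1],
    "c": [0.7, 0.7],
    "d": [0.0, 1.0],
    "e": [-1.0, 0.0],
}
query = [1.0, 0.0]

truth = exact_top_k(query, vectors, k=3)
approx = ["a", "c", "d"]  # pretend an ANN index missed "b" and returned "d" instead
recall = recall_at_k(approx, truth)
print(truth, round(recall, 3))
```

Averaged over many queries, that ratio is the recall figure. An index you can’t tune gives you no way to move it.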

Here’s my own angle, one the original benchmarks don’t cover: this echoes Postgres’s JSON conquest in 2012. NoSQL darlings like Mongo promised scale; Postgres added types, indexes, and won. pgvector’s the sequel, with vectors as first-class citizens. Bold call: in two years, serverless Postgres variants like Neon will claim 40% of new vector workloads, starving managed players. Why? Architectural unity. No ETL hell syncing your app DB to a vector silo.

Is pgvector vs Pinecone a Cost Bloodbath?

Costs seal it. 1M vectors, moderate QPS:

pgvector on RDS: $260/month—full Postgres, no extras.

Neon serverless? $30-150, scales to zero. Bursty RAG traffic? Pennies at night.

Pinecone serverless: $50-80, reads rack up.

Qdrant Cloud: $65-102.

Weaviate: $45 min.
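Price ranges are easy to misread when you only look at the low end. Here’s a quick sketch that lines up the figures quoted above by floor and by ceiling. The numbers are exactly the ones in this list and nothing more: `None` marks a ceiling that wasn’t given, and none of this accounts for your actual QPS.

```python
# (low, high) monthly cost in USD as quoted above; None means no upper figure was given.
monthly_cost_usd = {
    "pgvector on RDS": (260, 260),
    "pgvector on Neon serverless": (30, 150),
    "Pinecone serverless": (50, 80),
    "Qdrant Cloud": (65, 102),
    "Weaviate": (45, None),
}

# Rank by the cheapest possible bill.
by_floor = sorted(monthly_cost_usd, key=lambda name: monthly_cost_usd[name][0])

# Options whose quoted ceiling is still below Neon's quoted ceiling.
neon_high = monthly_cost_usd["pgvector on Neon serverless"][1]
below_neon_ceiling = [
    name
    for name, (low, high) in monthly_cost_usd.items()
    if high is not None and high < neon_high
]

print("cheapest floor first:", by_floor)
print("ceiling below Neon's:", below_neon_ceiling)
```

Neon has the lowest floor, but its quoted ceiling sits above the whole Pinecone and Qdrant ranges. The scale-to-zero win depends on traffic that really is bursty.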

pgvector-Neon combo disrupts. You’re not just storing vectors; it’s your everything-DB. Sync layers? Vanished. Downtime syncing to Pinecone? History.

But scale to billions? Milvus or Zilliz beckon, tuned for that. Most teams? 1M-10M sweet spot—pgvector owns it.

Weaviate’s hybrid keyword+vector? Neat native trick. Still, 8ms p50 lags, recall dips to 97.8%.

Tradeoffs scream architecture. Dedicated vector DBs optimize one trick: ANN search. Postgres? A generalist with a vector superpower. For RAG pipelines glued to relational data, it’s no contest.

Production war stories: I’ve seen Pinecone bills balloon on query spikes; pgvector on Neon? Flatlines. Qdrant’s self-host? A vector DBA’s nightmare.

And filtering—Qdrant’s edge comes from disk layouts built for it. pgvector approximates via Postgres tricks (GIN on metadata). Close enough for 99% cases, zero new stacks.
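To make the "GIN on metadata" trick concrete, here’s a minimal sketch of the two pieces: a GIN index over a JSONB column, and a nearest-neighbour query that filters on it. It only builds SQL strings. The `items` table with its `metadata` and `embedding` columns is hypothetical, and the `%s` placeholders assume a psycopg-style driver.

```python
import json


def metadata_index_sql(table="items", column="metadata"):
    """GIN index over a JSONB column; jsonb_path_ops supports the @> containment operator."""
    return f"CREATE INDEX ON {table} USING gin ({column} jsonb_path_ops);"


def filtered_knn_sql(table="items", k=10):
    """Nearest neighbours restricted to rows whose metadata contains the given JSON."""
    return (
        f"SELECT id FROM {table} "
        "WHERE metadata @> %s::jsonb "
        "ORDER BY embedding <=> %s::vector "
        f"LIMIT {int(k)};"
    )


def filter_param(**conditions):
    """Serialize filter conditions as the JSON document passed to @>."""
    return json.dumps(conditions, sort_keys=True)


index_sql = metadata_index_sql()
query_sql = filtered_knn_sql(k=10)
shoes_only = filter_param(category="shoes")

print(index_sql)
print(query_sql)
print(shoes_only)
```

One caveat worth knowing: when the planner uses the HNSW index, pgvector applies the filter after the index scan, so a very selective filter can return fewer than `k` rows unless you raise `hnsw.ef_search`. Check the plan with `EXPLAIN` on your own data.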

Why Does This Matter for RAG Builders?

RAG’s vector heart demands low-latency, high-recall, cheap storage. pgvector delivers, embedded in your stack. The shift? From siloed vector marts to unified DBs. It’s how Postgres disrupted key-value stores, graphs—now vectors.

Critique the hype: Pinecone’s ‘serverless’ masks pod realities underneath. Qdrant’s ‘open-source’ glosses over ops heft. pgvector? Pragmatic powerhouse.

Teams chase benchmarks, ignore total ownership cost. My prediction holds: as embeddings commoditize, the DB that owns your data wins.

Simplicity scales.

Deep dive done—pick pgvector unless you’re at billion-scale or hate tuning.

🧬 Related Insights

- Read more: SQLite WAL: The Unsung Hero Fixing React Native’s Offline Lockups

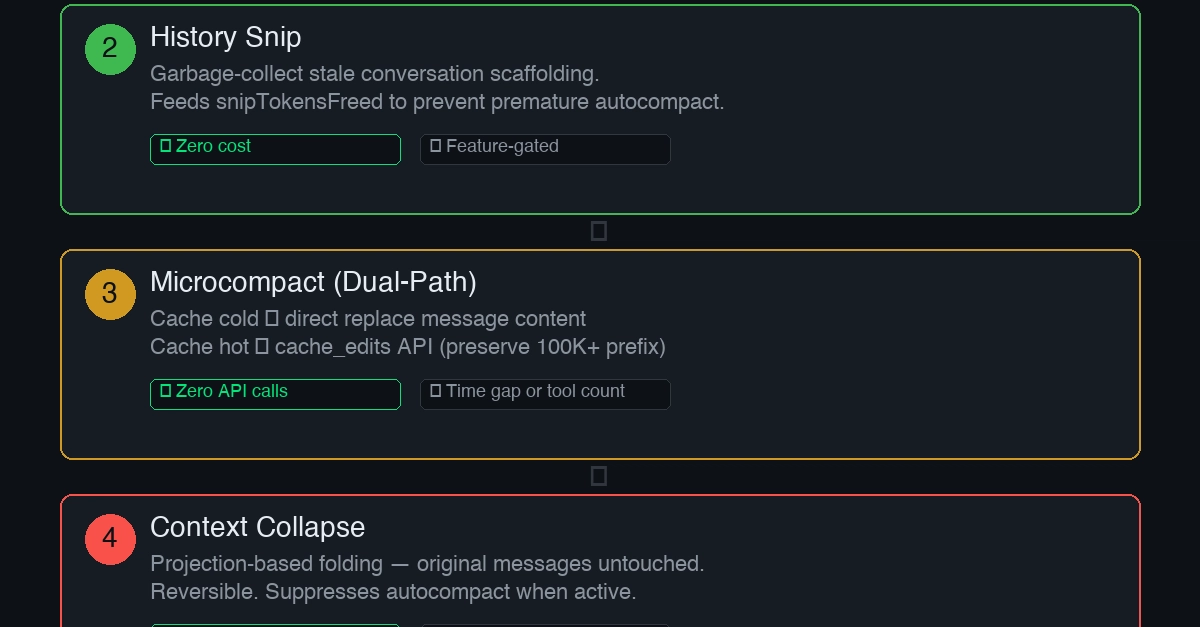

- Read more: Claude Code’s Cron Heartbeat: OpenClaw’s Ghost Without the Daemon Bloat

Frequently Asked Questions

What’s the fastest vector database for 1M vectors?

Qdrant leads unfiltered at 3ms p50. pgvector trails slightly at ~5ms, but it holds steady under metadata filters and gives you full control, which makes it production-tough.

pgvector vs Pinecone: which is cheaper for RAG?

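pgvector on Neon starts lower, around $30/month against Pinecone’s $50-80, and scale-to-zero suits bursty loads with no read unit traps. Sustained traffic can push Neon toward $150, so size it against your real QPS.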

Can pgvector handle billion-scale vectors?

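Not out of the box the way Milvus does. With tuning, pgvector comfortably covers the 1M-10M range most apps live in, and sharding can stretch it further. At billions, Milvus or Zilliz is the better fit.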