My terminal blinked. Claude Code, mid-session on a tangled Node.js repo, yanked user prefs from a Markdown file. No vectors. No databases. Just text.

Claude Code’s memory system isn’t chasing the AI hype train. It’s parked at the station, sipping coffee, reading your codebase live. Forget snapshots. Forget git history caches. The mantra? Don’t store what the code screams right now.

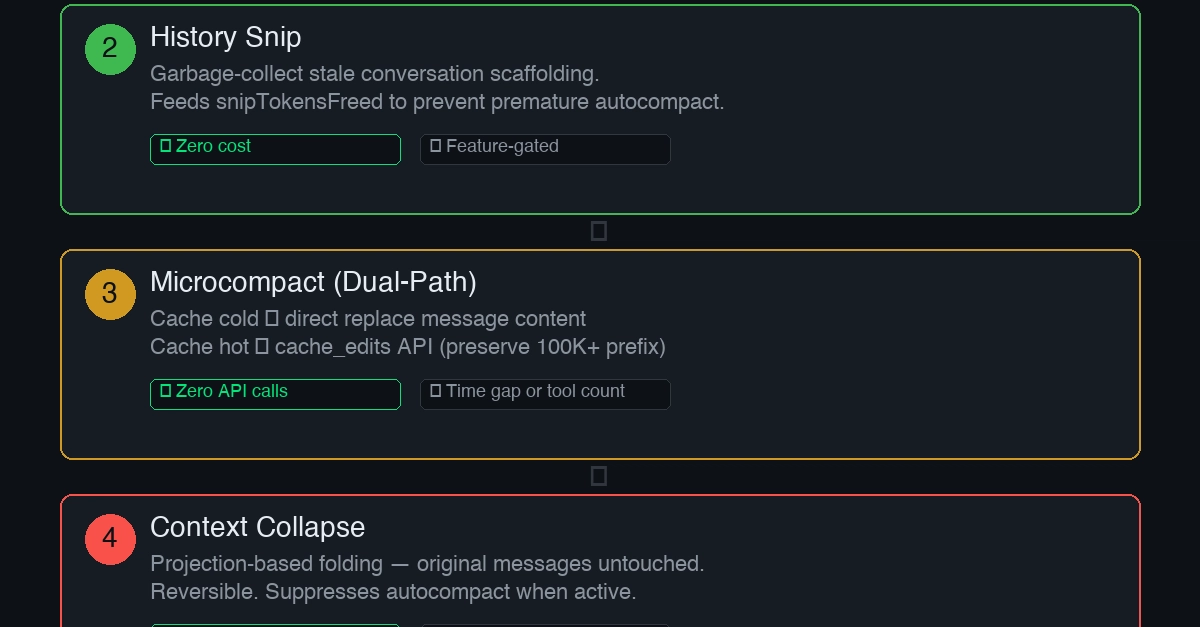

And here’s the kicker—a stale memory poisons everything. “A stale memory is worse than no memory at all, because the model acts on it with confidence.” That’s straight from the docs, and damn if it isn’t true. I’ve seen LLMs confidently spit garbage from outdated embeddings. Claude sidesteps that trap.

Why Claude Code Obsesses Over ‘Current State’

Code mutates. Refactors happen. Paths shift. Stash a fact like “auth in src/auth/” and boom—your AI’s lying to itself next week. But run a glob on the project? Fresh truth, every time.
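Here's a rough sketch of that idea in Python. It isn't Claude Code's implementation; `find_dirs` and the directory names are made up for illustration. The point: ask the filesystem at the moment of need instead of trusting a saved path.

```python
from pathlib import Path


def find_dirs(project_root: str, name: str) -> list[str]:
    """Return every directory called `name` under project_root, as of right now."""
    root = Path(project_root)
    return sorted(
        p.relative_to(root).as_posix()  # report paths relative to the project
        for p in root.rglob(name)       # walk the tree on every call, no cache
        if p.is_dir()
    )
```

Move `src/auth/` somewhere else and the next call reports the new location. Nothing to invalidate, because nothing was stored.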

This isn’t thrift. It’s paranoia about drift. Memories stick to meta-stuff: who you are (data scientist, observability nut), what ticks you off (no summaries after diffs), project deadlines (merge freeze 2026-03-05), external pointers (Linear for bugs). Four types. Hard stops. No fuzzy tags.

“If you only save corrections, you will avoid past mistakes but drift away from approaches the user has already validated, and may grow overly cautious.”

Smart. Feedback logs wins too—“keep this style”—so the model doesn’t “improve” away your faves. Models bland out without anchors. Seen it.

A closed taxonomy crushes open tags. No “code-style” vs “formatting-pref” sprawl. Each type is wired differently: feedback needs Why/How fields, and retrieval is tuned per type. Forces clarity. No label diarrhea.
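To make the closed taxonomy concrete, here's a hedged sketch. The four type names come from this article; the `Memory` fields, `parse_type`, and the exact validation rule are assumptions for illustration, not the actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class MemoryType(Enum):
    # The four types named in the article; nothing else is accepted.
    USER = "user"
    FEEDBACK = "feedback"
    PROJECT = "project"
    REFERENCE = "reference"


@dataclass(frozen=True)
class Memory:
    type: MemoryType
    description: str  # short line a selector could scan (hypothetical field)
    body: str
    why: str = ""
    how: str = ""

    def __post_init__(self) -> None:
        # Assumption: only feedback memories must carry Why/How context.
        if self.type is MemoryType.FEEDBACK and not (self.why and self.how):
            raise ValueError("feedback memories need both 'why' and 'how'")


def parse_type(label: str) -> MemoryType:
    """Reject any label outside the four types instead of inventing a tag."""
    try:
        return MemoryType(label.strip().lower())
    except ValueError:
        raise ValueError(f"unknown memory type: {label!r}") from None
```

A label like `"code-style"` fails loudly in `parse_type` rather than quietly becoming a fifth category.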

But dates? User says “Thursday.” The code mandates an absolute date: 2026-03-05. Obvious? Models parrot the relative phrase verbatim otherwise. Explicit rule. Good.
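A minimal sketch of that rule, assuming a weekday name should resolve to its next occurrence (today included) relative to the session date. `next_weekday` is a hypothetical helper, not something from the Claude Code source.

```python
from datetime import date, timedelta

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]


def next_weekday(name: str, today: date) -> str:
    """Resolve a weekday name to the next matching ISO date, today included."""
    target = WEEKDAYS.index(name.strip().lower())  # Monday is 0, like date.weekday()
    days_ahead = (target - today.weekday()) % 7    # 0 when today already matches
    return (today + timedelta(days=days_ahead)).isoformat()
```

With a session date of Monday 2026-03-02, `next_weekday("Thursday", date(2026, 3, 2))` gives `"2026-03-05"`. The stored memory stays true no matter when it is read back.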

Why Skip Vector DBs for a 250ms LLM Peek?

Critics howl here. A live Sonnet call scans the memory descriptions and picks 5 files. Cost: ~250ms, 256 tokens. Async prefetch hides it, so it feels instant.
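Here's roughly how that flow could look. Everything named here is hypothetical: `selector` stands in for the model call (no real API is invoked), and `handle_turn` only illustrates starting the lookup early so its latency overlaps other work.

```python
import asyncio
from typing import Awaitable, Callable

MAX_PICKS = 5  # the cap described in the article

# Stand-in for the model call: takes (query, manifest), returns file names.
Selector = Callable[[str, str], Awaitable[list[str]]]


def build_manifest(descriptions: dict[str, str]) -> str:
    """One line per memory file: its name plus its short description."""
    return "\n".join(f"{name}: {desc}" for name, desc in sorted(descriptions.items()))


async def pick_memories(query: str, descriptions: dict[str, str], selector: Selector) -> list[str]:
    """Ask the selector for relevant files, drop unknown names, enforce the cap."""
    picked = await selector(query, build_manifest(descriptions))
    known = [name for name in picked if name in descriptions]
    return known[:MAX_PICKS]


async def handle_turn(
    query: str,
    descriptions: dict[str, str],
    selector: Selector,
    do_other_work: Callable[[], Awaitable[None]],
) -> list[str]:
    # Kick off selection first so it runs while the other work proceeds.
    prefetch = asyncio.create_task(pick_memories(query, descriptions, selector))
    await do_other_work()
    return await prefetch
```

The cap lives in one place, and names the selector invents get filtered out before anything is loaded.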

Vectors? Embed, store, cosine-match. Scales huge, but shallow semantics. “Deployment” won’t snag “CI/CD” reliably. Opaque scores. Infra bloat.
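For comparison, the vector route boils down to something like this toy sketch. The vectors are assumed to come from some embedding model that isn't shown; `cosine` and `top_k` are illustrative names, and real vector stores typically use approximate indexes rather than a full scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], store: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Score every stored vector against the query and keep the k best."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in store.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]
```

Note what comes back: names and floats. Nothing in the output says why one memory outranked another.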

| Dimension | Sonnet Peek | Vector DB |
|---|---|---|
| Smarts | Deep lang match | Surface sim |
| Setup | Zip | Embed + store |
| Peek inside | Why this one? | ???? |
| Latency | 250ms hidden | Embed + hunt |
| Fits | 20-200 files | Millions |

For CLI sessions, 20-100 memories? Sonnet wins. No shard fleets. No Pinecone bills. Tradeoff screams first-principles: match your workload, don’t chase scale porn.

Limit to 5? Behavioral hack. Flood the context with 50 and users hoard junk. The cap nudges cleanup and sharper descriptions. Constraints shape humans better than servers.

Here’s my take, absent from the source: this echoes the Unix pipes of the ’70s. Small tools, composed smartly. Vector DBs? Modern bloatware, like shoving SQL at grep jobs. Claude Code revives that ethos for AI agents. Bold prediction: copycats dump vectors by 2026, chasing this lean memory vibe. Anthropic’s PR spins “simpler,” but it’s a stealth philosophy flex.

Does Capping Memories at 5 Files Kill Scalability?

Nah. It’s genius user psychology. Sessions hit 20-100 files tops. More? Prune. Write crisp summaries so Sonnet nails it.

It doesn’t lean on a /remember command alone. After each turn, an agent distills the exchange, auto-saves the meta, and enforces the four types. No drift.

Skeptical? I’ve battled vector RAG hell: hallucinations from stale embeds, infra nightmares. Claude’s Markdown pile feels… liberating. Primitive? Maybe. Effective? Runs circles ’round bloated stacks.

Dry laugh: Anthropic preaches this while hawking Opus. But code don’t lie—source shows obsession with now-truth.

Punchy win: transparency. Sonnet explains picks. Vectors? Magic numbers.

Tradeoff city. Scales poorly past 200? Fine for coders, not Netflix. It’s a CLI tool, not enterprise CRUD.

Wander a sec: reminds me of early Git—blobs, not DBs. Self-describing. Why complicate?

Why Does This Matter for Developers?

You’re refactoring at 2am. Claude recalls your “no lodash, native only” without hallucinating paths. Pulls deadline nudge. Skips vector tax.

Hype calls it limited. I call bullshit. Corporate stacks love vectors for grant-chasing papers. Real work? This wins.

Unique angle: PR spin says “tradeoffs.” Truth? Anthropic dodges VC-fueled over-engineering. Bet they laugh at OpenAI’s embedding farms.

Deep dive payoff—Claude Code isn’t “simple.” It’s surgically anti-fragile. Code evolves; memories adapt via live reads. Vectors fossilize.

One gripe: four types rigid? Future-proof? Meh. But beats chaos.

Frequently Asked Questions

Why does Claude Code use Markdown files instead of vector databases?

Markdown keeps it dead simple—no infra, live LLM picks semantically. Vectors scale but shallow-match and drift.

What are the four memory types in Claude Code?

User (prefs), feedback (wins/losses), project (decisions), reference (externals). Hard boundaries, no tag mess.

Does Claude Code’s 5-memory limit hurt usability?

Nope—forces sharp curation. Users with 200+ prune junk, write better descriptions. Behavioral win.