What happens when your core training library gets blindsided by a new paradigm every six months?

TRL v1.0 doesn’t pretend to have all the answers upfront. It’s a post-training library forged in the fire of real-world flux—six years of commits, 75+ methods, 3 million downloads a month. And here’s the kicker: it wasn’t designed in a vacuum. No grand blueprint. Just relentless iteration as PPO gave way to DPO, then RLVR-style tricks upended the stack again.

How Did Post-Training Even Get This Messy?

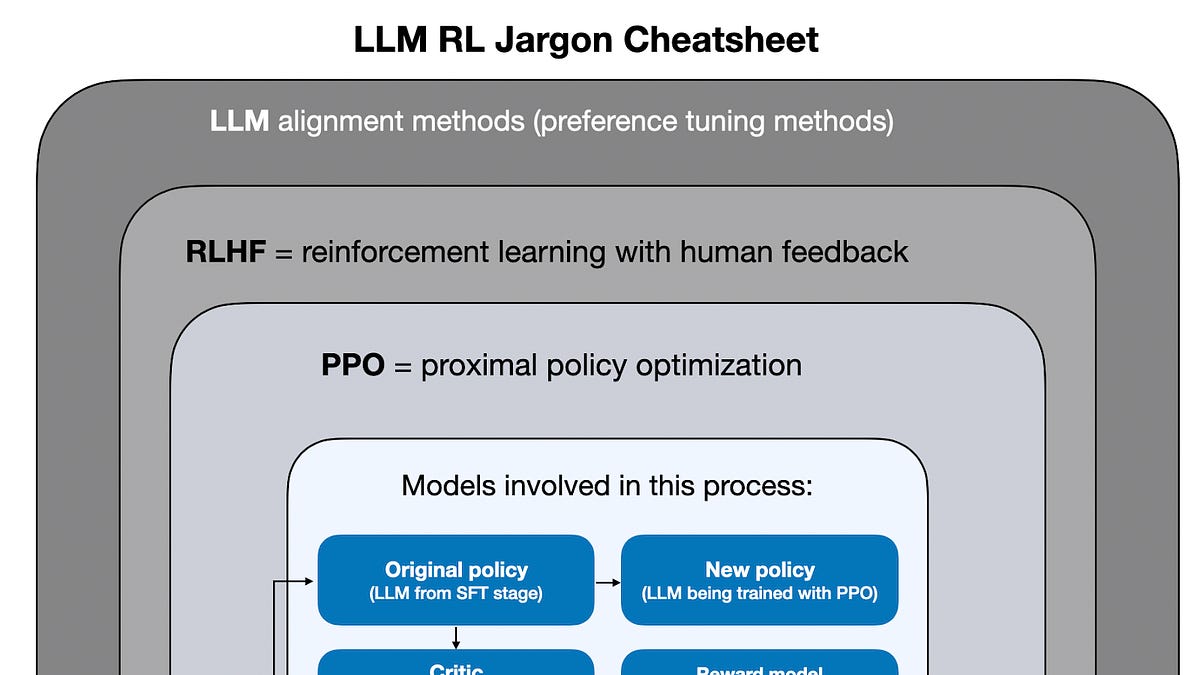

PPO ruled once. Picture it: policy networks, reference models, reward learners, rollout sampling, full RL loops. Seemed bulletproof. Schulman et al. in 2017, Ziegler in 2019—they set the canon.

But DPO smashed that. Rafailov and crew in 2023 said, screw the reward model, no value functions, no online sampling. Preferences alone could align models. ORPO, KTO followed suit—suddenly, the sacred stack looked like yesterday’s news.

Then RLVR hits, like GRPO from Shao et al. this year. Math problems? Code? Tools? Rewards from verifiers now—deterministic checks, not fuzzy learners. Rollouts return, but twisted. The core objects? Unrecognizable.

The lesson is not just that methods change. The definition of the core keeps changing with them. Strong assumptions here have a short half-life.

That’s straight from the TRL team’s manifesto. Spot on. No library nails this because the field’s a shape-shifter.

TRL survives by design—or anti-design. Don’t abstract the stable essence (it evaporates). Abstract the change itself. Rewards? Essential in PPO. Optional in DPO. Back as verifiers in GRPO. One-size-fits-all abstraction? Dead twice over.

Why Does TRL v1.0 Split Stable from Experimental?

Look. TRL woke up one day and realized it was infrastructure. Unsloth, Axolotl—thousands of users stacking on its trainers. Break an arg? Instant outages downstream.

v1.0 owns it. Stable core: semantic versioning, ironclad. SFTTrainer? Rock solid. Experimental? from trl.experimental.orpo import ORPOTrainer—no promises, pure velocity.

This dual life isn’t half-assed. It’s surgical. Field spits methods faster than stability tests. Stable-only? Irrelevant by fall. All-in? Breakage apocalypse.

Promotion? Usage + low maint cost. Community hammers SFT, DPO, Reward modeling, RLOO, GRPO—they graduate. Rest? Docs track the wild side.

And the architecture? Evolutionary scars make it weird, but wise. Parts scream “why?” until you see the paradigm pivots they endured.

Here’s my take, one you won’t find in the announcement: this mirrors the Linux kernel’s stable/longterm branches versus mainline. Linus Torvalds faced hardware tsunamis too—CPUs, GPUs, peripherals morphing. Kernel devs learned: vendor-lock the tested paths, let experimenters crash elsewhere. TRL’s doing kernel-grade stability for AI post-training. Bold call? In two years, every rival library copies this or fades.

But wait—does it really deliver? Downloads say yes. Major projects bet on it. Yet skeptics whisper: experimental layer’s a distraction, dilutes focus.

Nah. It’s the secret sauce. Stable without it would fossilize. TRL moves with the field because it invites the chaos—in a cage.

Think bigger. Post-training’s not refinement; it’s rupture. Each shift rewrites the stack’s shape. Libraries ignoring that? Roadkill. TRL bets on modularity over monoliths—rewards as pluggable, loops as configurable. Unusual? Sure. But it scales with the madness.

Users love it. “Stable and experimental coexist within the same package, with explicitly different contracts.” That’s the promise. Grab SFTTrainer for prod. Poke ORPOTrainer for tomorrow’s edge.

Is TRL v1.0 Actually Better for Developers?

Developers, you’re smart— you know hype when you smell it. TRL’s no vaporware pitch. It’s battle-tested, user-shaped.

Why care? Speed. Try DPO sans reward model hassle. GRPO for code/math without PPO baggage. Compare apples-to-apples across 75 methods.

Downstreams like Axolotl prove it: TRL breaks? They break. So v1.0 freezes the surface. No renamed args, shifted defaults. Incidents? Outsourced to experimental.

Critique time. The team’s PR spins evolution nicely, but glosses maintenance hell. Experimental bloat could creep—75 methods ain’t trivial. Yet design minimizes it: shared guts, thin wrappers.

Prediction: as verifiers dominate (think o1-style reasoning chains), TRL’s pluggable rewards shine. Others scramble.

Short version.

It works because it had to.

Ecosystem lock-in grows. Hugging Face’s TRL isn’t just a lib—it’s the glue holding post-training sane.

🧬 Related Insights

- Read more: Google’s Gemini API Splits into Flex and Priority: The Real Cost of Reliable AI

- Read more: Google’s February AI Onslaught: Summit Hype, Model Tweaks, and the Usual Suspects

Frequently Asked Questions

What is TRL v1.0?

TRL v1.0 is Hugging Face’s post-training library with 75+ methods, dual stable/experimental tracks to handle AI’s rapid changes.

Is TRL stable for production use?

Yes—the core trainers like SFT and DPO follow semantic versioning; experimental ones don’t, for cutting-edge stuff.

How does TRL differ from other training libraries?

It evolves with paradigm shifts (PPO to DPO to GRPO) by design, not fighting them—pluggable components over rigid abstractions.