Ever wonder why your AI sidekick forgets your quirks session after session, even as it ‘learns’ to code your dreams?

It’s not improving. You are.

I fired up a Claude Code session last Tuesday—same project I’ve nursed for weeks. Custom LangGraph agents tangled with FastAPI backends, Cloudflare tunnels snaking through. My CLAUDE.md file? A battle-tested bible of patterns, decisions, dead ends. Memory files crisp. Context docs sharpened over dozens of chats.

Claude slurped it all in seconds. Quoted my naming quirks from two weeks back. Nailed deployment prefs. Then I nudged: extend that agent routing we’d hammered out Friday.

Boom. First principles. It pitched the exact architecture we’d trashed—documented dead end. I corrected. It pivoted. End of session: back to square one. Or Friday’s square, anyway.

Next day? Fresh session. Re-read notes. Same dance.

I have done this enough times to notice something I should have noticed earlier. Claude is not getting better at working with me. I am getting better at briefing Claude. That CLAUDE.md? Beefier now, pre-empting its goofs. Memory files? Surgical. The glow-up’s yours, dev. Not the weights.

What ‘Self-Improving’ Really Means for Claude

Anthropic drops this gem: Claude “authors up to 90% of the code” on internal projects. Headlines erupt—recursive self-improvement! AI bootstraps better AI! Singularity via git commits!

Here’s the unglamorous truth. Claude spits code into Claude Code—a TypeScript CLI wrapper around the API. Tools, file I/O, perms, context juggling. Scaffolding. Model proposes tweaks. Engineer vets, tests, merges the winners. Ditches flops.

The core? Untouched. The model’s weights stay frozen post-training, whatever their undisclosed size. Harness tweaks don’t ripple back. It’s like souping up your car’s dash cluster while the V8 idles stock.

Autocomplete polishing its own textbox. Not evolution.
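Here is a minimal sketch of that propose, vet, merge loop, in Python for brevity even though the real harness is TypeScript. Everything in it (`Model`, `Harness`, `merge_reviewed`, the proposal strings, the review rule) is a made-up stand-in, not Anthropic's workflow or code. It only illustrates the shape of the claim: the harness mutates, the model object can't.

```python
from dataclasses import FrozenInstanceError, dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Model:
    """Hypothetical stand-in for the model; frozen, so it cannot be mutated."""
    weights_version: str


@dataclass
class Harness:
    """Hypothetical stand-in for the CLI wrapper: the only thing that changes."""
    features: list[str] = field(default_factory=list)


def merge_reviewed(
    harness: Harness,
    proposals: list[str],
    passes_review: Callable[[str], bool],
) -> list[str]:
    """Merge proposals the engineer accepts; return the ones that were ditched."""
    ditched = []
    for proposal in proposals:
        if passes_review(proposal):
            harness.features.append(proposal)
        else:
            ditched.append(proposal)
    return ditched


def harness_demo() -> tuple[list[str], list[str], bool]:
    model = Model(weights_version="as-shipped")
    harness = Harness()
    # Pretend model output; these proposal names are invented for the example.
    proposals = ["faster file search", "skip permission prompts"]
    # Pretend review policy: the engineer rejects anything touching permissions.
    ditched = merge_reviewed(harness, proposals, lambda p: "permission" not in p)
    try:
        model.weights_version = "improved"  # type: ignore[misc]
        weights_changed = True
    except FrozenInstanceError:
        weights_changed = False
    return harness.features, ditched, weights_changed


if __name__ == "__main__":
    print(harness_demo())
```

The accepted proposal lands in the harness, the rejected one is returned, and the attempt to touch the weights fails.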

Why Does ‘Learning’ Feel So Real—But Isn’t?

Words matter. Learning, in ML as in kids, means the system itself changes. Weights shift. Yesterday’s lesson carries over to tomorrow’s novel problem. Not here.

Claude’s ‘memory’? Text dump to file. Session kicks off: context window slurps it. Model skims like you eyeball a fridge magnet. Session dies—poof, context clears. Weights? Pristine. Identical to ship day.

ChatGPT’s the same. Periodic summary injections. Retrieval. Not adaptation.
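The lifecycle fits in a few lines. This is a toy sketch, assuming a plain text file as the memory store; `Session`, `FROZEN_WEIGHTS`, and the sample note are hypothetical names for illustration and say nothing about how any vendor implements it.

```python
import hashlib
import tempfile
from pathlib import Path

# Hypothetical stand-in for frozen model weights; real weights are not exposed like this.
FROZEN_WEIGHTS = b"pretend-these-are-the-shipped-weights"


def weights_fingerprint() -> str:
    """Hash of the (pretend) weights, to show they never change."""
    return hashlib.sha256(FROZEN_WEIGHTS).hexdigest()


class Session:
    """One chat session: the context lives only as long as the session does."""

    def __init__(self, memory_file: Path) -> None:
        # Session start: the notes are read from disk into the context window.
        self.context: list[str] = []
        if memory_file.exists():
            self.context.append(memory_file.read_text(encoding="utf-8"))

    def remember(self, memory_file: Path, note: str) -> None:
        # "Memory" is an append to a text file, not a weight update.
        with memory_file.open("a", encoding="utf-8") as fh:
            fh.write(note + "\n")
        self.context.append(note)

    def close(self) -> None:
        # Session end: the context is dropped; only the file survives.
        self.context.clear()


def memory_demo() -> tuple[bool, bool]:
    with tempfile.TemporaryDirectory() as tmp:
        memory = Path(tmp) / "CLAUDE.md"
        before = weights_fingerprint()

        first = Session(memory)
        first.remember(memory, "Rejected: approach A (documented dead end).")
        first.close()

        # A fresh session knows the note only because it re-read the file.
        second = Session(memory)
        recalled = any("Rejected" in chunk for chunk in second.context)
        unchanged = weights_fingerprint() == before
        return recalled, unchanged


if __name__ == "__main__":
    print(memory_demo())
```

`memory_demo()` returns `(True, True)`: the second session recalls the note because it re-read the file, and the fingerprint never moved.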

Research toys flirt with real change. MIT’s SEAL self-edits into finetunes. NVIDIA’s test-time training squishes context to temp weights. Meta’s HyperAgents rewrite their logic on feedback. Cool. None production-bound. Why? Forgetting cascades. Alignment wobbles. Per-user flops bankrupt the cloud.

No corp finetunes your pet model. Too dicey, too pricey. You get a notepad the AI re-scans daily, like a doctor who re-reads your chart each visit and calls it knowing you.

It’s all facade.

And here’s my hot take, absent from the hype: this mirrors the 1980s Lisp machine era. Hackers customized their systems on the fly, crafting ‘intelligent’ tools. Felt alive. But brittle, unscalable, and the market collapsed into an AI winter. Today’s prompt sorcery? Same vibe. You’ll boom as ‘AI whisperers,’ but without weight updates, it’s theater. Bold call: edge devices flip this in 3 years, with your phone finetuning locally, no server snitch. Anthropic’s PR spins ‘self-improvement’ to mask that they’re still shipping black boxes.

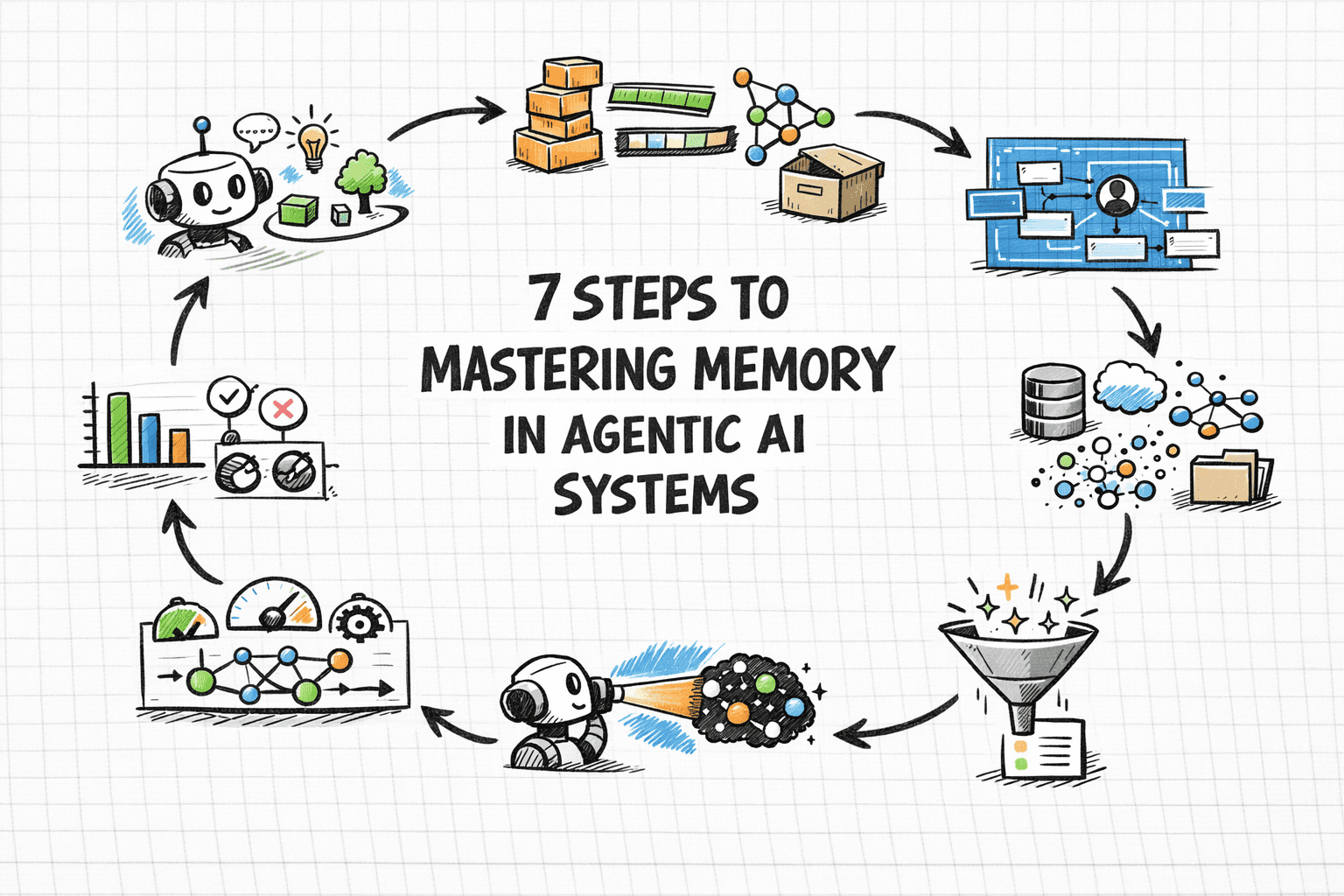

How Does AI Memory Actually Work?

Peel it back. Context windows ballooned—Claude 3.5 Sonnet’s 200K tokens gulp your CLAUDE.md feast. But it’s ephemeral. No gradient descent. No backprop etching scars into neurons.

Retrieval-augmented generation (RAG) on steroids. Your files? External brain. Model infers on-the-fly, spits gold—until reset.
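To make the "external brain" concrete, here is a deliberately crude retrieval sketch. Real setups typically use embeddings and a vector store; this one assumes plain word overlap as the relevance score, and the `NOTES` entries are invented sample data loosely modeled on the project described earlier, not the author's actual files.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, notes: list[str], k: int = 2) -> list[str]:
    """Return up to k notes sharing the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(note)), note) for note in notes]
    # Keep only notes with some overlap, best first; ties keep original order.
    hits = [pair for pair in scored if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [note for _, note in hits[:k]]


def build_prompt(query: str, notes: list[str]) -> str:
    """Paste retrieved notes above the task; the model itself is untouched."""
    context = "\n".join(f"- {note}" for note in retrieve(query, notes))
    return f"Project notes:\n{context}\n\nTask: {query}"


# Invented sample notes, for illustration only.
NOTES = [
    "Agent routing: approach A was rejected, see the dead-ends list.",
    "Deployment: FastAPI behind a Cloudflare tunnel.",
    "Naming: snake_case for graph nodes.",
]

if __name__ == "__main__":
    print(build_prompt("extend the agent routing", NOTES))
```

All the "knowledge" arrives as text pasted above the task. Drop the notes and the same model answers from first principles.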

Why build this way? Scale. One set of weights serves every user. Personal finetunes? Compute Armageddon. Plus, safety: a finetune per user invites models that drift toward jailbreaks or biases dialed to 11.

But it warps you. Devs hoard CLAUDE.mds like grimoires. Teams templatize prompts. Prompt engineering morphs from hack to craft. It’s birthing a new guild, prompt priests tuning silicon gods.

We spiral: better briefs yield better outputs, loop-tightening. Feels mutual. Isn’t.

You’re the trainer. AI’s the reluctant pup.

Is Claude’s ‘Self-Improvement’ Just Packaging Polish?

Back to Anthropic’s flex. Claude codes its harness. Engineer gatekeeps. Weights static.

Corporate sleight-of-hand. Headlines chase ‘AI builds AI’ dopamine. Reality: iterative devops with LLMs as junior devs.

Critique their spin—90% authorship? Cherry-picked internals, not wild west consumer chaos. My sessions? 20% usable first pass. Corrections galore.

Architectural shift? Harnesses evolve faster than models now. Claude Code iterates weekly. Sonnet? Quarterly cliff.

You’re not using AI. You’re co-piloting a static oracle with dynamic Post-Its.

Imagine scaling this to enterprises: a fleet of CLAUDE.mds per team, versioned like codebases, diffed for insights. That’s the real play. Not mythical self-upgrades, but human-AI symbiosis where we architect the memory layer. Barriers crumble with vector DBs embedding your lore at ludicrous speeds. But call the emperor naked: no production AI learns like a human because no one dares the weight roulette.
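"Diffed for insights" can start as small as this. A sketch using Python's standard `difflib`, assuming two versions of a CLAUDE.md held as strings; `added_rules` and the sample contents of `V1` and `V2` are hypothetical.

```python
import difflib


def added_rules(old: str, new: str) -> list[str]:
    """Lines present in the new version but not in the old one."""
    diff = difflib.ndiff(old.splitlines(), new.splitlines())
    # ndiff prefixes added lines with "+ "; strip that two-character marker.
    return [line[2:] for line in diff if line.startswith("+ ")]


# Invented sample versions, for illustration only.
V1 = "Conventions\n- snake_case node names\n"
V2 = (
    "Conventions\n"
    "- snake_case node names\n"
    "- Do not re-propose approach A (rejected)\n"
)

if __name__ == "__main__":
    for rule in added_rules(V1, V2):
        print("new rule:", rule)
```

Each added line is a lesson somebody paid for in a session. In practice the versions would more likely come from git history than from strings in a script.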

The User-Led Renaissance

Flip it. You’re improving—files honed, patterns distilled. That’s power.

My CLAUDE.md anticipates Claude’s first-principles fetish, flags rejected paths upfront. Sessions shrink from hours to minutes.
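One way to flag rejected paths upfront, sketched under my own assumptions: the `Briefing` class, its section titles, and the sample entries are hypothetical, not a format Claude Code requires.

```python
from dataclasses import dataclass, field


@dataclass
class Briefing:
    """Hypothetical structure for a CLAUDE.md; the section names are invented."""
    conventions: list[str] = field(default_factory=list)
    rejected: dict[str, str] = field(default_factory=dict)  # approach -> reason

    def render(self) -> str:
        # Dead ends go first so they are read before any design work starts.
        lines = ["# Rejected approaches (do not re-propose)"]
        lines += [f"- {name}: {why}" for name, why in self.rejected.items()]
        lines.append("")
        lines.append("# Conventions")
        lines += [f"- {rule}" for rule in self.conventions]
        return "\n".join(lines) + "\n"


if __name__ == "__main__":
    brief = Briefing(
        conventions=["snake_case node names"],
        rejected={"approach A": "tried, documented dead end"},
    )
    print(brief.render())
```

Putting the dead ends at the top is a judgment call rather than a rule. The idea is simply that the model meets "we already tried this" before it starts designing.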

Prediction: this births ‘context OS’—OSes where your digital shadow (files, chats, diffs) auto-synthesizes into hyper-prompts. AI stays dumb engine. You? The spark.

But hype-check: Anthropic’s not delivering singularity. They’re vending mirrors reflecting your grind back as ‘magic.’

Skeptical? Test it. Nuke your CLAUDE.md. Watch genius evaporate.


Frequently Asked Questions

What is self-improving AI in Claude?

It’s the headline label for Claude generating code that improves its own tooling, such as the Claude Code CLI, while the core model weights don’t change. It’s harness tweaks, not true learning.

Does AI memory mean the model learns from you?

No—it’s just reloading text files into context each session. Weights stay frozen; no permanent adaptation.

Will AI ever truly self-improve for users?

Research hints yes, with on-device finetuning as one plausible route, but production lags because of compute costs and safety risks. Expect enterprise first, consumers later.