So, are we ready to admit it? The buzz about AI models getting smarter was always a bit of a red herring, wasn’t it? For years, we’ve been treated to endless benchmarks and increasingly polished chat interfaces, all while the real meat (how this stuff actually gets used) simmered on the back burner. But this past week, something shifted. It felt less like another spec bump for the latest large language model and more like the ground beneath our digital feet was starting to buckle.

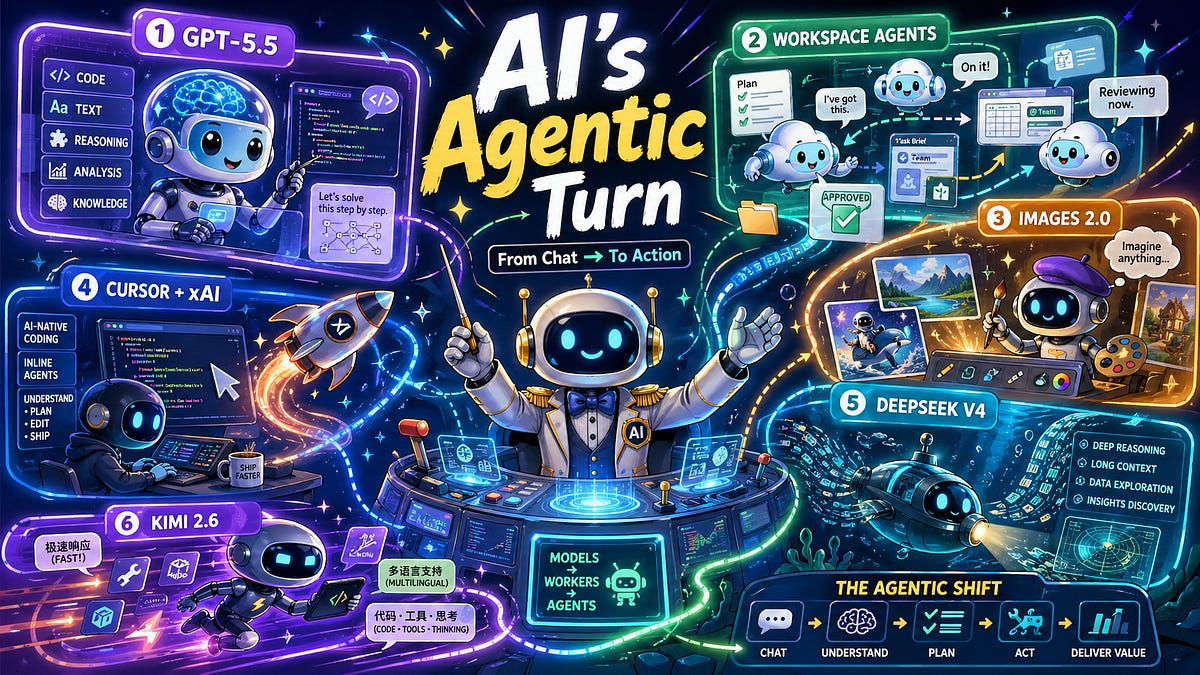

The big news, the undeniable gravitational center of the AI universe right now, is OpenAI’s apparent release of GPT-5.5. Now, the benchmarks and the improved reasoning? Sure, that’s expected. It’s the trajectory. But the real story, the one whispered in the hushed halls of dev teams and enterprise IT departments, is that these models are no longer just isolated smarts. They’re getting tangled, like a complex web, into the very systems where actual work happens: your code editor, your company’s clunky workflows, the chaotic cloud environment, the endless Slack threads, and yes, those so-called “agentic interfaces” everyone’s been hyping.

This isn’t just about a better chatbot. It’s about a computational engine that can, and will, coordinate action. Think about it. A frontier model isn’t just a model anymore. It’s a runtime. It’s the intelligence layer slotted into everything from research pipelines to those clunky enterprise assistants. The game has fundamentally changed from ‘smarter conversation’ to ‘actionable execution’.

The AI Enterprise Enters the Chat (Literally)

And OpenAI’s other moves this week just hammered that point home. Their “Workspace Agents” aren’t just jazzed-up custom GPTs for your business. This is the nascent stage of AI acting as a literal institutional process. Imagine agents that live inside your company, operating in the cloud, playing nicely with ChatGPT and Slack, respecting your permissions, remembering context (finally!), and — get this — running long workflows. That’s not just productivity; that’s AI being woven into the fabric of organizational life.

Then there’s ChatGPT Images 2.0. Suddenly, AI isn’t just about churning out text or code; it’s bleeding into visual production. Better text rendering, multilingual prowess, actual visual reasoning, and something they’re calling “images with thinking” — where the AI apparently takes its sweet time planning and refining. It’s like they’re trying to turn ChatGPT from a single-purpose app into a multimodal work environment. Text, code, images, tools, memory, approvals, agents… all converging.

Why the xAI/Cursor Deal Matters (Hint: It’s About Control)

The xAI deal with Cursor? It’s not just a tech acquisition; it’s a perfect illustration of this larger shift. Cursor has emerged as one of the clearest examples of AI-native software development moving from a quirky novelty to something foundational. And code? Code is the absolute ideal playground for AI agents. Why? Because it’s explicit. It’s testable. It’s composable. And most importantly, it’s economically vital. A coding agent can draft, edit, execute, debug, and verify. It operates in a loop where progress can actually be measured. And in AI, whoever owns that loop — whoever controls that fundamental workflow — controls a significant chunk of the future. Elon’s playing the long game here.
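To make that "measurable loop" concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `TESTS` table, the `pass_rate` and `agent_loop` helpers, and the hard-coded `drafts` list that stands in for successive model outputs. It is not how Cursor or any real coding agent works; it only shows why code is such a friendly target: each draft can be executed and scored.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical test cases for a function that should add two integers.
TESTS: List[Tuple[Tuple[int, int], int]] = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0)]


def pass_rate(candidate: Callable[[int, int], int]) -> float:
    """Execute the candidate against every test and return the fraction passed."""
    passed = 0
    for args, expected in TESTS:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crash simply counts as a failed test
    return passed / len(TESTS)


def agent_loop(drafts: Iterable[Callable[[int, int], int]], max_iters: int = 5):
    """Score drafts in order, keep the best one, stop once every test passes."""
    best, best_score, history = None, -1.0, []
    for i, draft in enumerate(drafts):
        if i >= max_iters:
            break
        score = pass_rate(draft)       # execute + verify
        history.append(score)          # progress is a number we can track
        if score > best_score:
            best, best_score = draft, score
        if score == 1.0:
            break
    return best, best_score, history


# Stand-ins for successive model outputs (assumption: a real agent would
# generate these from the failing test results rather than from a fixed list).
drafts = [lambda a, b: a - b, lambda a, b: a * b, lambda a, b: a + b]
best, score, history = agent_loop(drafts)
```

The `history` list is the point: it turns "is the agent getting anywhere?" into something you can plot, which is much harder to do for open-ended conversation.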

Meanwhile, you can’t ignore DeepSeek V4 and Kimi 2.6. These aren’t just playing catch-up; they’re rapidly compressing the frontier from the open and semi-open side. The competition isn’t about who has the prettiest chatbot anymore. It’s about long context windows, raw coding power, tool integration, latency that doesn’t make you want to throw your laptop, and reliable agentic behavior. The battlefield has definitively moved.

It’s not about intelligence as conversation anymore. It’s about intelligence as execution. This is the operational revolution.

What Does This Mean for Your Job?

The models themselves aren’t the product anymore. The product is the model plus the harness, the tools, the memory, the permissions, the environment, and the feedback loop. We’re moving from systems that answer questions to systems that perform work. And if you’re not thinking about how your role interfaces with — or is replaced by — an AI that can perform tasks autonomously, you’re already behind.
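What does "model plus harness" look like in practice? A rough sketch, assuming a toy setup: the `Harness` class, its `tools`, `allowed`, and `memory` fields, and the `echo`/`shout` tools are all invented for illustration and don't correspond to any vendor's API. The model's job would be to decide which tool to call; the harness decides whether that call is permitted and keeps a record of it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Harness:
    """Hypothetical wrapper: tools the agent can use, permissions, and a memory log."""
    tools: Dict[str, Callable[[str], str]]
    allowed: Set[str]
    memory: List[str] = field(default_factory=list)

    def call(self, tool: str, arg: str) -> str:
        if tool not in self.tools:
            result = f"error: unknown tool {tool!r}"
        elif tool not in self.allowed:
            result = f"denied: {tool!r} not permitted"
        else:
            result = self.tools[tool](arg)
        # Every attempt, allowed or not, is recorded so later steps can see it.
        self.memory.append(f"{tool}({arg!r}) -> {result}")
        return result


harness = Harness(
    tools={"echo": lambda s: s, "shout": str.upper},
    allowed={"echo"},  # "shout" exists but this agent may not use it
)
ok = harness.call("echo", "hi")
blocked = harness.call("shout", "hi")
```

Swap the model behind it and the permissions, tools, and log stay put, which is roughly why the harness starts to look like the product.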

AI Research Breakdown

On the research front, Google DeepMind and Google Research have cooked up “Decoupled DiLoCo.” The idea is to make training large language models more resilient to hardware meltdowns and network hiccups. By splitting the compute into independent “learners” that communicate asynchronously, they’re apparently boosting training efficiency while keeping performance decent, even when they intentionally inject chaos into the system. Smart, given how much money is on the line with these massive training runs.
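For intuition only, here is a toy simulation of the general idea: independent learners take a few local steps, and a shared set of parameters is updated from whichever learners report back. To be clear about the assumptions, this is a simplified, round-based sketch on a one-dimensional quadratic, not the asynchronous scheme from the paper, and the names `local_steps`, `train`, and `fail_prob` are made up for the example.

```python
import random


def local_steps(x: float, target: float, lr: float = 0.1, steps: int = 5) -> float:
    """A learner runs a few gradient steps on f(x) = (x - target)^2 / 2."""
    for _ in range(steps):
        x -= lr * (x - target)
    return x


def train(rounds: int = 50, learners: int = 4, fail_prob: float = 0.3, seed: int = 0) -> float:
    rng = random.Random(seed)
    target = 3.0
    global_x = 0.0
    for _ in range(rounds):
        deltas = []
        for _ in range(learners):
            if rng.random() < fail_prob:
                continue  # simulated hardware or network failure: no update from this learner
            deltas.append(local_steps(global_x, target) - global_x)
        if deltas:
            # Merge only the updates that actually arrived.
            global_x += sum(deltas) / len(deltas)
    return global_x


healthy = train(fail_prob=0.0)
flaky = train(fail_prob=0.3)
```

In this toy, a round only stalls when every learner fails at once, so the shared parameter still drifts toward the target with a 30% per-learner failure rate. Real training has noisy, differing data per learner, which this sketch deliberately ignores.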

Then there’s LLaDA2.0-Uni from Inclusion AI and Ant Group. This is a unified discrete diffusion model that’s supposed to handle both understanding and generating multimodal stuff (think text and images) within a single framework. They discretize visual inputs into semantic tokens and use block-level diffusion. Apparently, it can keep up with specialized vision-language models and even handle interleaved generation and reasoning. Sounds complex, but the goal is clear: one model to rule them all.

Finally, Carnegie Mellon University and Amazon AGI have dropped SkillLearnBench. This is pitched as the first benchmark specifically designed to test how well AI agents can learn new skills continuously across 20 real-world tasks. The initial findings suggest that while continual learning methods do improve things, there’s still a long way to go before agents can smoothly acquire and adapt new abilities like a seasoned pro.

My unique insight here? We’re witnessing a replay of the early internet days. Remember when the web was just static pages, and then suddenly, we had e-commerce, social media, and the app explosion? AI is going through its own Cambrian explosion, moving from raw information processing to complex, system-integrated task execution. The companies that can build the stable, reliable, and accessible environments for these AI agents to operate within will be the new giants, not necessarily the ones with the biggest models.

And who is actually making money here? Right now, it’s the platforms that can embed these models into lucrative workflows. OpenAI is positioning itself as the operating system for AI work. xAI, by acquiring Cursor, is buying its way into the critical developer workflow. The companies that sell infrastructure and tools for AI development and deployment will also do well. It’s a land grab, and the map is still being drawn with very expensive ink.