Agents are taking over.

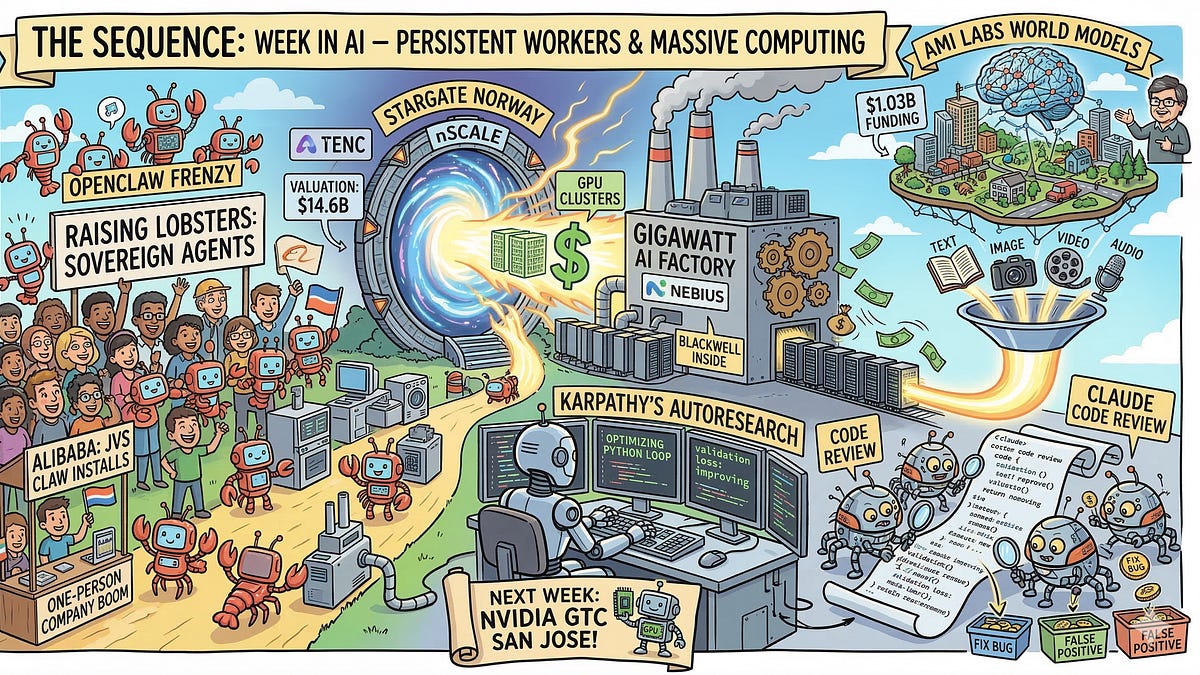

Last week in AI hammered that home — with Karpathy unleashing self-improving code agents, Chinese devs swarming ‘sovereign lobsters,’ and billions pouring into gigawatt-scale compute. Forget polite chit-chat; these systems grind autonomously, tweaking their own scripts, commandeering OSes, demanding power plants’ worth of chips. Market valuations exploded: nScale hit $14.6 billion on a $2B round, LeCun’s AMI Labs snagged Europe’s fattest seed at $1B+. It’s not hype. It’s infrastructure locking in.

Here’s the data: OpenClaw, the open-source agent framework nicknamed ‘raising lobsters’ in China (crustacean mascot, get it?), saw its user base explode overnight. Alibaba rushed out its JVS Claw app for noobs. Baidu, Tencent? Cloud hosts galore. Result? A ‘one-person company’ surge: solo operators running persistent agents that control desktops and execute workflows, no cloud overlords. Beijing’s sweating security; agents with OS root access scream cyber roulette. But growth? ARR for Nebius rocketed 700%, with Blackwell chips incoming for its Dutch gigafactories.

Why Karpathy’s Autoresearch Terrifies — and Thrills — Big Tech

Andrej Karpathy didn’t just drop code. He open-sourced a machine-speed scientific method.

Operating with a strict five-minute compute budget per experiment, the agent generates hypotheses, edits code, runs the training, and evaluates validation loss. If the change improves performance, the agent commits the code.

That’s Autoresearch: PyTorch scripts mutating themselves in loops. Hypothesis. Edit. Train. Validate. Commit if loss drops. Humans nap; AI iterates. Karpathy’s framing it as ‘AI researcher’ — but valuation math screams threat to labs burning millions on manual tuning. We’ve seen this before: remember TensorFlow’s AutoML precursors? Those were toys. This? Persistent, cheap, open. Prediction: By Q2 2025, 20% of indie ML experiments run agent-optimized, gutting low-end researcher jobs.
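The hypothesize, edit, train, validate, commit cycle can be sketched in a few lines. This is a toy illustration, not Karpathy’s code: `train_and_validate`, `propose`, and `research_loop` are hypothetical names, and the "training run" is a made-up formula standing in for a real PyTorch job so the sketch runs anywhere.

```python
import random
import time


def train_and_validate(config, budget_s):
    """Stand-in for a real training run; returns a validation loss.

    Assumption: a real agent would launch a training script here and stop it
    when the budget expires. This toy objective is lowest at lr=0.01, width=64.
    """
    deadline = time.monotonic() + budget_s
    loss = (config["lr"] - 0.01) ** 2 * 1e4 + ((config["width"] - 64) / 64) ** 2
    if time.monotonic() > deadline:
        raise TimeoutError("experiment exceeded its compute budget")
    return loss


def propose(config, rng):
    """Hypothesis step: mutate one setting of the current best config."""
    candidate = dict(config)
    if rng.random() < 0.5:
        candidate["lr"] = config["lr"] * rng.choice([0.5, 2.0])
    else:
        candidate["width"] = max(8, config["width"] + rng.choice([-16, 16]))
    return candidate


def research_loop(initial, n_experiments, budget_s=300.0, seed=0):
    """Run experiments; keep ("commit") a change only if the loss drops."""
    rng = random.Random(seed)
    best = dict(initial)
    best_loss = train_and_validate(best, budget_s)
    history = [best_loss]
    for _ in range(n_experiments):
        candidate = propose(best, rng)
        loss = train_and_validate(candidate, budget_s)
        if loss < best_loss:
            best, best_loss = candidate, loss
        history.append(best_loss)
    return best, best_loss, history


best, best_loss, history = research_loop({"lr": 0.08, "width": 128}, 50)
```

Because a candidate is accepted only when it beats the current best, `history` can never go up. The 300-second default mirrors the five-minute budget; everything else here is an assumption made for illustration.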

Anthropic’s not sleeping. Claude Code Review deploys agent swarms on GitHub PRs — logic checks, bug ranking, false positives nuked. Internal adoption’s sky-high; enterprise coders swear by it. Multi-agent? New code hygiene gold standard.

Agents win.

Is China’s Lobster Mania a Sovereign AI Revolution or Hackers’ Paradise?

China’s OpenClaw boom isn’t cute. It’s a market signal.

Locals call it ‘raising lobsters’: persistent, local agents running OS-deep. No API tolls, full control. Alibaba’s app? Minutes to deploy. Tech giants pivot to infra hosts. Boom: Gig economy 2.0, one-dev firms everywhere. But root access makes these agents malware magnets. Beijing’s regulatory scramble? Too late. Parallels? Early Android sideloading: innovation flood, then app store clampdown. My take: This sparks 10x more solo AI ventures globally by 2026, but expect nation-state cyber ops on agent exploits to spike 300%.

Yann LeCun’s $1.03B seed for AMI Labs? Europe’s record. Not LLMs — world models. Abstract physics reps, causal reasoning, hallucination-proof. Nvidia, Bezos, Temasek back it. Shift from word-salad predictors to grounded thinkers. Smart money fleeing autoregressive dead-ends.

Google’s Gemini Embedding 2? Multimodal RAG beast — text/images/video/audio/docs in one vector space. Single API. Enterprise indexing? Transformed.
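What "one vector space" buys you is easiest to see in a retrieval sketch. The example below does not call any Google API: the three-dimensional vectors are hand-written placeholders standing in for whatever a multimodal embedding model would return, and `Item`, `cosine`, and `search` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass
class Item:
    modality: str   # "text", "image", "audio", ...
    ref: str        # where the original asset lives
    vector: list    # placeholder embedding; a real one has many more dimensions


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query_vec, index, k=2):
    """Rank every item by similarity to the query, regardless of modality."""
    ranked = sorted(index, key=lambda it: cosine(query_vec, it.vector), reverse=True)
    return ranked[:k]


index = [
    Item("text", "q3-report.txt", [0.9, 0.1, 0.0]),
    Item("image", "revenue-chart.png", [0.8, 0.2, 0.1]),
    Item("audio", "standup.wav", [0.0, 0.1, 0.9]),
]

top = search([1.0, 0.0, 0.0], index)
print([(it.modality, it.ref) for it in top])
```

With these placeholder vectors, one query pulls back a text file and an image from the same index. That is the practical appeal of a shared space: one index and one similarity function instead of a separate pipeline per modality.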

Gigawatt Factories: The Real AI Bottleneck Crusher

Compute’s the moat.

nScale’s $2B C-round, $14.6B val, Stargate Norway clusters scaling. Nebius: 700% ARR, Blackwell gigafactories. Physical plants rival nations’ power grids. Capital flood: $3B+ this week alone. Dynamics? Supply crunches easing — but Blackwell delays loom. Bold call: By 2027, AI eats 10% global electricity; Norway/Amsterdam hubs dominate Europe.

LeCun snubbed the LLM wars for world models. And his Meta exit? PR spin says visionary; data says Meta’s Llama cash cow funds rivals now. AMI’s bet: Causal AI unlocks robotics, not just text.

Look, this stack’s maturing. Agents + world models + embeddings + compute = persistent workers, not assistants. Market’s pricing it: Valuations up 5x YoY in infra. Skeptical? Security holes gape — OpenClaw’s wild west proves it. But dynamics favor builders. Watch China; they’re sprinting.

Anthropic’s low-false-positive PR reviews cross-check logic across repos, filtering noise humans miss in a crunch. Adoption metrics (internal benchmarks reportedly leaked at 92% preference) signal an enterprise shift, where multi-agent verification slashes review cycles by 40%, per early pilots. Yet scaling to million-line monoliths? Unproven, and agent consensus risks groupthink.
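One plausible way multi-agent review suppresses false positives is a simple quorum: keep a finding only if enough independent reviewers flag it. The sketch below is an assumption for illustration, not Anthropic’s actual method; `consensus_findings` and the sample findings are hypothetical.

```python
from collections import Counter


def consensus_findings(reviews, quorum=2):
    """Keep findings flagged by at least `quorum` reviewers, most-voted first."""
    votes = Counter()
    for findings in reviews:
        votes.update(set(findings))  # one vote per reviewer per finding
    kept = [(finding, n) for finding, n in votes.items() if n >= quorum]
    # Sort by vote count (descending), then alphabetically for stable output.
    return sorted(kept, key=lambda pair: (-pair[1], pair[0]))


reviews = [
    ["null-deref in parse()", "unused import"],
    ["null-deref in parse()", "off-by-one in paginate()"],
    ["null-deref in parse()", "off-by-one in paginate()", "style: long line"],
]

ranked = consensus_findings(reviews)
print(ranked)
# [('null-deref in parse()', 3), ('off-by-one in paginate()', 2)]
```

The same filter shows where the groupthink risk comes from: a real bug that only one reviewer catches is dropped along with the noise, and agents that share the same blind spot will agree with each other confidently.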

Boom incoming.

Why Does This Matter for AI Investors?

Valuations scream bubble? Nah. nScale/Nebius rounds match hyperscaler capex trajectories — AWS alone pledged $100B infra ‘25. LeCun’s $3.5B post-money? Backers betting world models 10x robotics ROI. Karpathy solo? Ex-Tesla/OpenAI cred pulls talent. China agents? 1B+ users potential.
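For a sense of what these rounds actually bought, the implied stake is the round size divided by the post-money valuation. The sketch below assumes the $14.6B nScale figure is post-money, which the reporting does not state; the helper names are hypothetical.

```python
def implied_stake(round_b, post_money_b):
    """Fraction of the company sold in the round (all figures in $B)."""
    return round_b / post_money_b


def implied_pre_money(round_b, post_money_b):
    """Valuation before the new money came in (all figures in $B)."""
    return post_money_b - round_b


# Figures from the article; nScale's post-money status is an assumption.
deals = {"nScale": (2.0, 14.6), "AMI Labs": (1.03, 3.5)}

for name, (round_b, post_b) in deals.items():
    stake = implied_stake(round_b, post_b)
    pre = implied_pre_money(round_b, post_b)
    print(f"{name}: {stake:.1%} sold, ${pre:.2f}B pre-money")
```

On those assumptions, nScale’s investors took roughly 14% of the company while AMI’s took close to 30%, a much bigger slice for a seed-stage bet.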

Agents self-code, but compute caps experiments: five-minute budgets hide the exaflop needs underneath. OpenClaw localizes sovereignty, dodging US export controls on chips.

Finally, Meta/Yale paper on reasoning labs? Teaser for agent efficacy tests.

Frequently Asked Questions

What is OpenClaw and sovereign lobsters?

OpenClaw is an open-source framework for local, persistent AI agents. ‘Lobsters’ is China’s fun nickname for it, taken from its mascot. It lets one-person ops run OS-controlling workflows sans clouds.

How does Karpathy’s Autoresearch work?

AI agent loops: hypothesize code changes, train PyTorch in 5-min bursts, commit winners based on validation loss — self-improving ML at machine pace.

Are gigawatt AI factories the future?

Yes — nScale, Nebius scaling to power-plant levels with Blackwell GPUs; they’re the backbone as agent demands explode compute 10x.