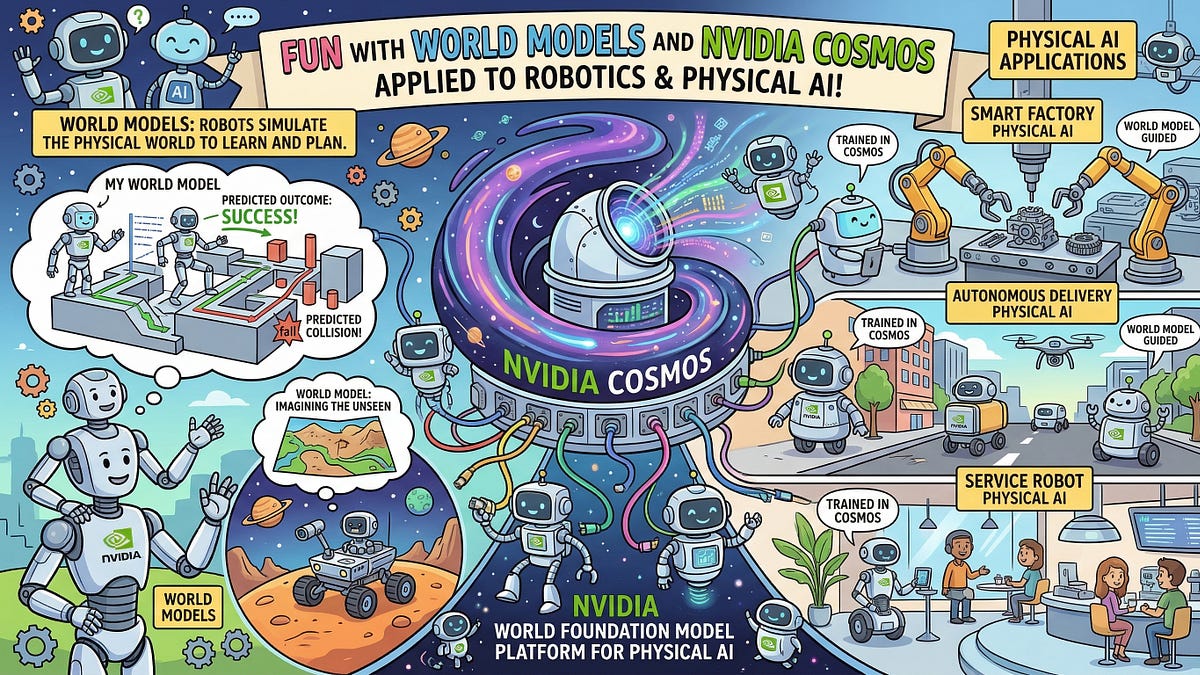

Picture this: your home robot doesn’t just dodge the coffee table anymore — it maps the entire room in 3D, predicts where the cat will leap next, and reroutes itself without a glitch. That’s not sci-fi. That’s what World Labs’ Marble model promises for everyday folks, starting with safer self-driving cars and smarter warehouse bots that won’t crush your packages.

Fei-Fei Li — the vision queen behind ImageNet — just dropped this bomb through her startup. And it’s hitting right as we’re drowning in flat video generators like Sora. But here’s the thing: Marble isn’t chasing viral clips. It’s building worlds.

How Does World Labs Marble Actually Work?

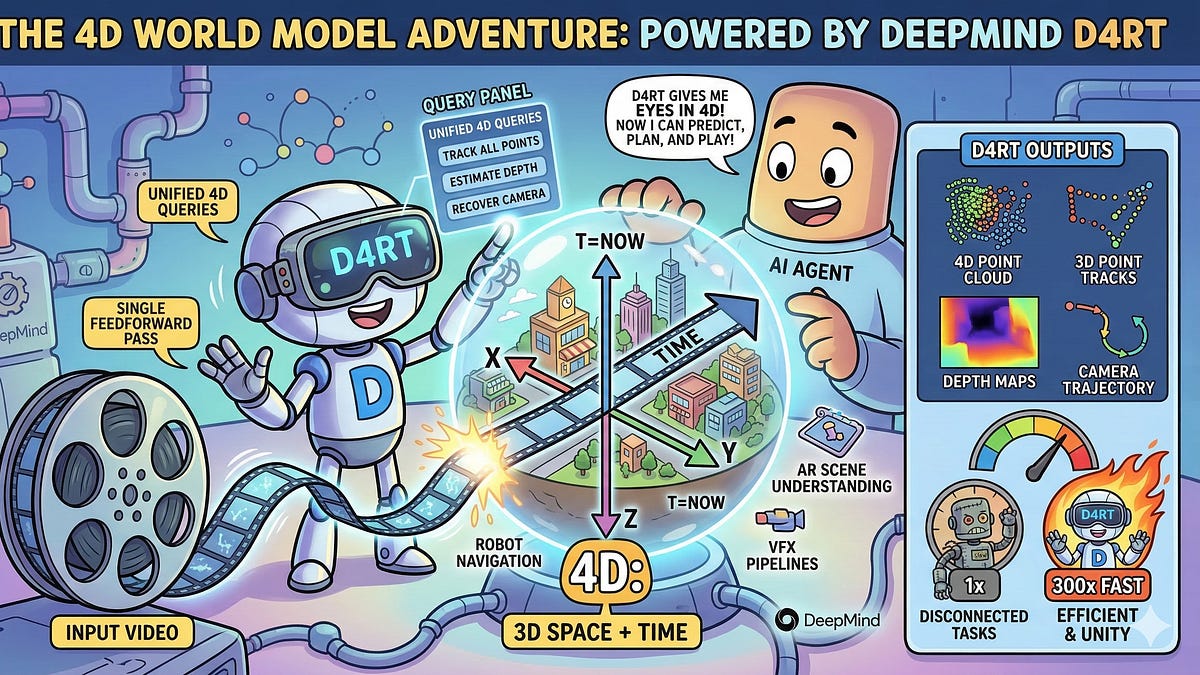

Short answer? Magic. Longer one: it “lifts” 2D video into 4D spacetime.

Start with a grainy phone clip of a street scene. Most AIs would hallucinate the next frame — pixels twitching like a bad acid trip. Marble? It reconstructs the whole damn scene as a persistent 3D model, complete with depth, lighting shifts, and object permanence. Drop a virtual ball in there; it’ll bounce realistically off that parked car.
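Why does a persistent scene matter for that bouncing ball? Once the car exists as geometry rather than pixels, physics becomes a loop over state. Here's a minimal sketch of the idea, not Marble's engine: the `Box` standing in for the parked car, its dimensions, and the restitution value are all made-up assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned stand-in for the parked car (hypothetical size, in meters)."""
    x_min: float
    x_max: float
    height: float


def floor_height(x: float, car: Box) -> float:
    """Surface height under x: the car roof if x is over the car, else the road."""
    return car.height if car.x_min <= x <= car.x_max else 0.0


def simulate_drop(x, y0, car, gravity=9.81, restitution=0.6,
                  radius=0.1, steps=300, dt=0.01):
    """Drop a ball straight down at horizontal position x; return its height per step."""
    y, vy = y0, 0.0
    rest = floor_height(x, car) + radius  # lowest height the ball's center may reach
    heights = []
    for _ in range(steps):
        vy -= gravity * dt   # gravity changes velocity
        y += vy * dt         # velocity changes position
        if y < rest:         # contact: snap to the surface and bounce back up
            y = rest
            vy = -vy * restitution
        heights.append(y)
    return heights


car = Box(x_min=2.0, x_max=6.0, height=1.5)
on_car = simulate_drop(x=4.0, y0=5.0, car=car)                 # lands on the roof
on_road = simulate_drop(x=0.0, y0=5.0, car=car)                # misses the car
low_g = simulate_drop(x=4.0, y0=5.0, car=car, gravity=1.62)    # same drop, weaker gravity
```

The ball over the car stops at roof height, the one beside it falls to the road, and changing gravity is one keyword argument. A frame predictor has no such knob to turn.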

“While modern world models often focus on the ‘temporal prediction’ of pixels, essentially hallucinating the next frame in a video, World Labs’ Marble represents a fundamental shift toward spatial intelligence.”

That’s straight from the insiders. They’re calling it a Large World Model (LWM), trained on massive video datasets but wired for geometry, not just time.

But here's where it gets juicy: the engine reportedly chews through multi-view diffusion. Imagine Gaussian splats (a scene stored as a cloud of soft, semi-transparent 3D blobs) fused with transformer magic. Input a monocular video; output a volumetric world you can orbit, slice, and simulate.
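To make "a world you can orbit" concrete, here's a toy sketch of a splat scene viewed from a moving camera. It is not World Labs' code: the `Splat` fields, the orbit setup, and the pinhole projection are simplifying assumptions, and real Gaussian splatting also projects each blob's shape, not just its center.

```python
import math
from dataclasses import dataclass


@dataclass
class Splat:
    """One 3D Gaussian: where it sits, how big it is, and how it looks."""
    center: tuple[float, float, float]
    scale: tuple[float, float, float]
    color: tuple[float, float, float]
    opacity: float


def to_camera(point, angle_deg, radius):
    """Express a world point in the frame of a camera orbiting the origin.

    The camera circles the y axis at the given radius and always faces the origin.
    Returns (right, up, forward) coordinates.
    """
    a = math.radians(angle_deg)
    cam = (radius * math.sin(a), 0.0, -radius * math.cos(a))
    dx, dy, dz = (p - c for p, c in zip(point, cam))
    right = dx * math.cos(a) + dz * math.sin(a)
    forward = -dx * math.sin(a) + dz * math.cos(a)
    return (right, dy, forward)


def project(splat, angle_deg, radius, focal=1.0):
    """Pinhole projection of a splat center: (u, v, depth), or None if behind the camera."""
    x, y, z = to_camera(splat.center, angle_deg, radius)
    if z <= 0:
        return None
    return (focal * x / z, focal * y / z, z)


def draw_order(splats, angle_deg, radius):
    """Visible splats sorted farthest first, one valid order for alpha blending."""
    seen = [(project(s, angle_deg, radius), s) for s in splats]
    seen = [(p, s) for p, s in seen if p is not None]
    return [s for p, s in sorted(seen, key=lambda ps: ps[0][2], reverse=True)]


red = Splat((1.0, 0.0, 0.0), (0.2, 0.2, 0.2), (1.0, 0.0, 0.0), 0.8)
blue = Splat((-1.0, 0.0, 0.0), (0.2, 0.2, 0.2), (0.0, 0.0, 1.0), 0.8)
scene = [red, blue]

from_east = draw_order(scene, angle_deg=90, radius=4.0)   # blue is farther, drawn first
from_west = draw_order(scene, angle_deg=-90, radius=4.0)  # red is farther, drawn first
```

Same two blobs, two viewpoints, two different draw orders. The scene never changes; only the camera does, which is the whole point of storing geometry instead of frames.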

One punchy demo: feed it parkour footage. Marble doesn’t just extend the clip. It lets you rewind, fast-forward from new angles, even tweak gravity. Wild.

Why Bet on Spatial Over Temporal — When Videos Pay the Bills?

Li’s no dummy. She saw video gen hype crest with OpenAI’s Sora — pretty, sure, but useless for agents needing real understanding. Robots flop in novel spaces because they treat the world as a 2D cartoon.

Marble flips that. By prioritizing spatial intelligence, it nails consistency across views. A chair stays a chair, not morphing into a blob when you circle it. That’s huge for AR glasses overlaying digital junk on your messy kitchen without glitching.

Look, we’ve been here before. Remember 1996? Quake ditched Doom’s 2D sprites for fully textured 3D polygons, and gaming exploded because worlds felt alive. Marble’s that pivot for AI. My bold call: it’ll crush video diffusion models in robotics benchmarks by 2026. Video gen? Cute parlor trick. Persistent worlds? The infrastructure for agentic AI.

And yeah, World Labs isn’t spilling full specs — classic startup tease. But leaks hint at a 7B-parameter beast, fine-tuned on synthetic 3D data. Compute hogs? Absolutely. Worth it.

Fei-Fei Li founded World Labs in 2024 and came out of stealth with $230 million in funding, backed by a16z, no less. Her pitch: humans learn worlds through vision first. Babies don’t predict pixels; they grasp objects in space. Marble mimics that.

Skeptical? Me too, at first. Video models already fake 3D decently. But Marble’s persistence lets it run interventions, like “what if that truck swerves?”, and that’s the edge. Test it on Habitat sims, and the bet is that it laps Sora-style baselines.
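What does an "intervention" look like in practice? Roughly: copy the scene state, change one thing, roll both versions forward, compare. The sketch below does that with two constant-velocity vehicles on a flat plane. Every number in it (positions, speeds, lane offset) is invented for illustration, and a real world model would roll out far richer state.

```python
import math


def rollout(start, velocity, steps, dt=0.1):
    """Constant-velocity 2D positions at t = 0, dt, 2*dt, ..."""
    return [(start[0] + velocity[0] * dt * i, start[1] + velocity[1] * dt * i)
            for i in range(steps + 1)]


def min_gap(path_a, path_b):
    """Smallest distance between two paths sampled at the same time steps."""
    return min(math.dist(a, b) for a, b in zip(path_a, path_b))


# Our car drives along y = 0; an oncoming truck starts in the next lane at y = 3.5.
ego = rollout((0.0, 0.0), (10.0, 0.0), steps=30)

# What was observed: the truck stays in its lane.
truck_observed = rollout((40.0, 3.5), (-10.0, 0.0), steps=30)

# The intervention: same truck, same start, but drifting toward our lane at 2 m/s.
truck_swerves = rollout((40.0, 3.5), (-10.0, -2.0), steps=30)

gap_observed = min_gap(ego, truck_observed)
gap_swerve = min_gap(ego, truck_swerves)
```

In the observed rollout the vehicles pass a full lane apart; in the counterfactual the gap shrinks to about half a meter. A planner can ask that question only if the truck is an object with a position, not a patch of pixels.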

Is Marble Ready to Power Your Next Robot Vacuum?

Not tomorrow. Training’s brutal; inference? Still GPU-thirsty.

But zoom out. Autonomous vehicles chew terabytes of dashcam footage daily. Marble could compress that into queryable 3D maps — slashing storage, boosting planning. Tesla’s FSD? Eat your heart out.
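A "queryable 3D map" can be as simple as a sparse voxel grid: bucket every reconstructed point into a cube, keep only the occupied cubes, and answer questions against those. This is a generic technique sketched under assumed names (`VoxelMap`, `path_is_clear`) and an assumed 0.5 m voxel size, not a description of how Marble stores scenes.

```python
import math


class VoxelMap:
    """Sparse occupancy grid: only voxels that contain points are stored."""

    def __init__(self, voxel_size=0.5):
        self.voxel_size = voxel_size
        self.occupied = {}  # voxel index -> number of points that landed there

    def _key(self, point):
        return tuple(math.floor(c / self.voxel_size) for c in point)

    def add_points(self, points):
        for p in points:
            k = self._key(p)
            self.occupied[k] = self.occupied.get(k, 0) + 1

    def is_occupied(self, point):
        return self._key(point) in self.occupied

    def path_is_clear(self, start, end):
        """Sample the straight segment at half-voxel spacing and check each sample."""
        length = math.dist(start, end)
        samples = max(1, math.ceil(length / (self.voxel_size * 0.5)))
        for i in range(samples + 1):
            t = i / samples
            p = tuple(s + t * (e - s) for s, e in zip(start, end))
            if self.is_occupied(p):
                return False
        return True


# 1,000 synthetic points filling a roughly 1 m cube about 10 m down the road.
points = [(10 + (i % 10) * 0.1, (i // 10 % 10) * 0.1, (i // 100) * 0.1)
          for i in range(1000)]

world = VoxelMap(voxel_size=0.5)
world.add_points(points)

blocked = not world.path_is_clear((0.0, 0.2, 0.2), (20.0, 0.2, 0.2))
detour_ok = world.path_is_clear((0.0, 5.0, 0.2), (20.0, 5.0, 0.2))
```

In this toy case a thousand points collapse into eight voxels, and "is my path blocked?" becomes a handful of dictionary lookups. The trade-off is resolution: anything smaller than a voxel gets rounded up to one.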

Warehouses next. Amazon bots navigating dynamic shelves, predicting human coworkers’ paths. Or your living room: eldercare droids that “see” trip hazards in 3D before grandma stubs her toe.

Critique time. World Labs spins Marble as “amazing,” but where’s the open-source love? Li’s academic roots scream collaboration, yet this feels buttoned-up. PR gloss? Maybe. Still, demos scream legitimacy.

Deeper why: architecture’s the shift. Traditional world models = RNNs unrolling time. Marble? Reportedly a hybrid of 3D convolutions and diffusion latents, built to keep scenes spatially coherent. It’s like upgrading from raytracing fakes to true radiance fields.

Edge case: occlusions. A person blocks a sign? Marble infers the full geometry underneath, thanks to priors from billions of frames. Humans do this intuitively; AIs heretofore, not so much.

Prediction: pair Marble with multimodal LLMs, and you’ve got agents that reason spatially. “Move the blue vase left” becomes pixel-perfect execution.
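Here's the shape of that hand-off, heavily simplified. Assume the LLM has already parsed “Move the blue vase left” into an object name, a color, and a direction; the spatial side then only has to find the object and shift it. The `SceneObject` type, the direction table, the 0.25 m default step, and the convention that "left" means negative x in the scene frame are all hypothetical choices for this sketch.

```python
from dataclasses import dataclass


@dataclass
class SceneObject:
    name: str
    color: str
    position: tuple[float, float, float]  # meters, in the scene's own frame


# Assumed convention: x points right, y points up, z points away from the viewer.
DIRECTIONS = {
    "left": (-1.0, 0.0, 0.0),
    "right": (1.0, 0.0, 0.0),
    "up": (0.0, 1.0, 0.0),
    "down": (0.0, -1.0, 0.0),
    "forward": (0.0, 0.0, 1.0),
    "back": (0.0, 0.0, -1.0),
}


def apply_move(scene, name, color, direction, distance=0.25):
    """Move the single object matching name and color; refuse if ambiguous or missing."""
    matches = [obj for obj in scene if obj.name == name and obj.color == color]
    if len(matches) != 1:
        raise LookupError(f"expected one {color} {name}, found {len(matches)}")
    step = DIRECTIONS[direction]
    target = matches[0]
    target.position = tuple(p + distance * s for p, s in zip(target.position, step))
    return target


scene = [
    SceneObject("vase", "blue", (1.0, 0.8, 2.0)),
    SceneObject("vase", "red", (1.5, 0.8, 2.0)),
    SceneObject("lamp", "blue", (0.0, 1.2, 2.0)),
]

# Parsed form of "Move the blue vase left" (the parsing itself is out of scope here).
moved = apply_move(scene, name="vase", color="blue", direction="left")
```

The red vase and the blue lamp stay put because the lookup is over named objects with coordinates. That disambiguation is trivial in a 3D scene and close to impossible in a stream of frames.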

Hurdles remain. Data moats — proprietary videos? Scaling to interactive sims at 60fps? Non-trivial. But Li’s track record — from ImageNet to Stanford Vision — says bet yes.

This isn’t incremental. It’s the volumetric unlock AI’s craved since DARPA’s early sims.

Frequently Asked Questions

What is World Labs Marble?

Marble’s a Large World Model that turns 2D videos into interactive 3D environments, focusing on spatial smarts over mere frame prediction.

How does Marble differ from OpenAI’s Sora?

Sora hallucinates video sequences; Marble reconstructs persistent 3D worlds you can navigate and simulate inside.

Will World Labs Marble replace robot vision systems?

Not yet — but it’ll supercharge them, enabling true spatial reasoning for homes, cars, and factories.