What if your AI lab never slept?

Andrej Karpathy’s AutoResearch — yeah, that crafty agentic setup — promises exactly that. No more meat computers dragging their feet. We’re talking AI that hypothesizes, codes, trains, evaluates, all on its lonesome. While you’re bingeing Netflix.

Karpathy nailed the problem spot-on. Here’s the man himself:

AI research has historically been performed by “meat computers” who have to eat, sleep, and occasionally synchronize their findings using “sound wave interconnects” during painfully slow weekly group meetings.

Brutal truth. Sound waves? Oof. That's just people talking at each other, in the meetings and Zooms we all loathe.

Why Karpathy’s AutoResearch Feels Like a Middle Finger to Human Limits

Look. The ML loop’s a slog: tweak code, fire up a run, stare at tensors for hours. Humans cap out at, what, 16-hour days before we crash? AutoResearch? It iterates endlessly. Agents spawn sub-agents. They debate hypotheses in text. Launch parallel evals. Pick winners. Rinse, repeat.
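
To make that loop concrete, here's a minimal sketch of propose, run, pick winners, repeat. Big caveat: none of this is Karpathy's code. `run_experiment` is a toy formula standing in for a real training run, `propose` is a random tweak standing in for an agent's hypothesis, and the `lr` and `width` settings are made up for illustration.

```python
import random


def run_experiment(config):
    """Stand-in for 'train and evaluate'. Returns a loss; lower is better.

    Hypothetical toy objective with its minimum at lr=0.1, width=64.
    """
    return (config["lr"] - 0.1) ** 2 + ((config["width"] - 64) / 64) ** 2


def propose(config, rng):
    """Stand-in for an agent's hypothesis: perturb one setting of the current best."""
    candidate = dict(config)
    if rng.random() < 0.5:
        candidate["lr"] = max(1e-4, candidate["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        candidate["width"] = max(8, candidate["width"] + rng.choice([-16, -8, 8, 16]))
    return candidate


def research_loop(start, rounds=50, parallel=4, seed=0):
    """Each round: propose several candidates, score them all, keep the winner if it beats the best."""
    rng = random.Random(seed)
    best, best_loss = start, run_experiment(start)
    history = [best_loss]
    for _ in range(rounds):
        candidates = [propose(best, rng) for _ in range(parallel)]
        scored = [(run_experiment(c), c) for c in candidates]
        loss, winner = min(scored, key=lambda pair: pair[0])
        if loss < best_loss:
            best, best_loss = winner, loss
        history.append(best_loss)
    return best, best_loss, history


if __name__ == "__main__":
    best, best_loss, history = research_loop({"lr": 1.0, "width": 16})
    print(best, best_loss)
```

The only design choice worth noting: the loop never accepts a worse result, so the best-so-far loss can only stay flat or drop. That's greedy, which is exactly why it can get stuck.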

It’s agentic architecture on steroids — think o1-preview vibes, but laser-focused on research automation. Karpathy’s demo? A toy RL task morphs into something competent overnight. No hand-holding.

But here’s my unique jab: this echoes the 1950s Fortran days, when compilers let code write code. Back then, skeptics whined it’d kill programming jobs. Didn’t happen. Instead, it exploded complexity. AutoResearch? Same deal. It’ll birth AIs we can’t even dream up yet — or nightmare about.

Short version: bold prediction. By 2026, this scales to real benchmarks. Humans shift to oversight. Progress 10x’s.

Punchy, right?

Is Karpathy’s AutoResearch Actually Better Than Human Grunts?

Hold up. Karpathy’s no newbie: ex-Tesla, OpenAI wiz. His lectures? Gold. But AutoResearch’s no silver bullet.

Pros: tireless. Parallelizes everything. No ego clashes in ‘meetings.’ Costs? Peanuts next to PhD salaries.

Cons — oh boy. Hallucinations in code gen? Check. Brittle evals on toy tasks. Real-world SOTA? That’s messy, data-hungry territory. One bad agent derails the swarm.

And the PR spin? Karpathy calls it ‘sleep while it computes.’ Cute. But it’s hype-adjacent. We’re not at AGI research autopilot yet. Agents fumble edge cases humans sniff out intuitively.

Still, it’s a leap. Traditional pipelines? Linear slogs. This? Exponential forking. If debugged right.

Wander with me here: imagine scaling to protein folding or chip design. Agents evolve architectures we wouldn’t touch. Wild.

Why Does AutoResearch Matter for the AI Rat Race?

Big labs drool. OpenAI, Anthropic — they’re bottlenecked by headcount. AutoResearch democratizes? Nah. Compute hogs still win. But indies? Game-changer. Spin up a cluster, let agents feast.

Skeptical take: it’s Karpathy’s solo project flex. Teases his consultancy? Or Eureka Labs pivot? Smells like venture bait.

Dry humor alert: finally, AI researches itself. Next up, AIs unionizing against bad prompts.

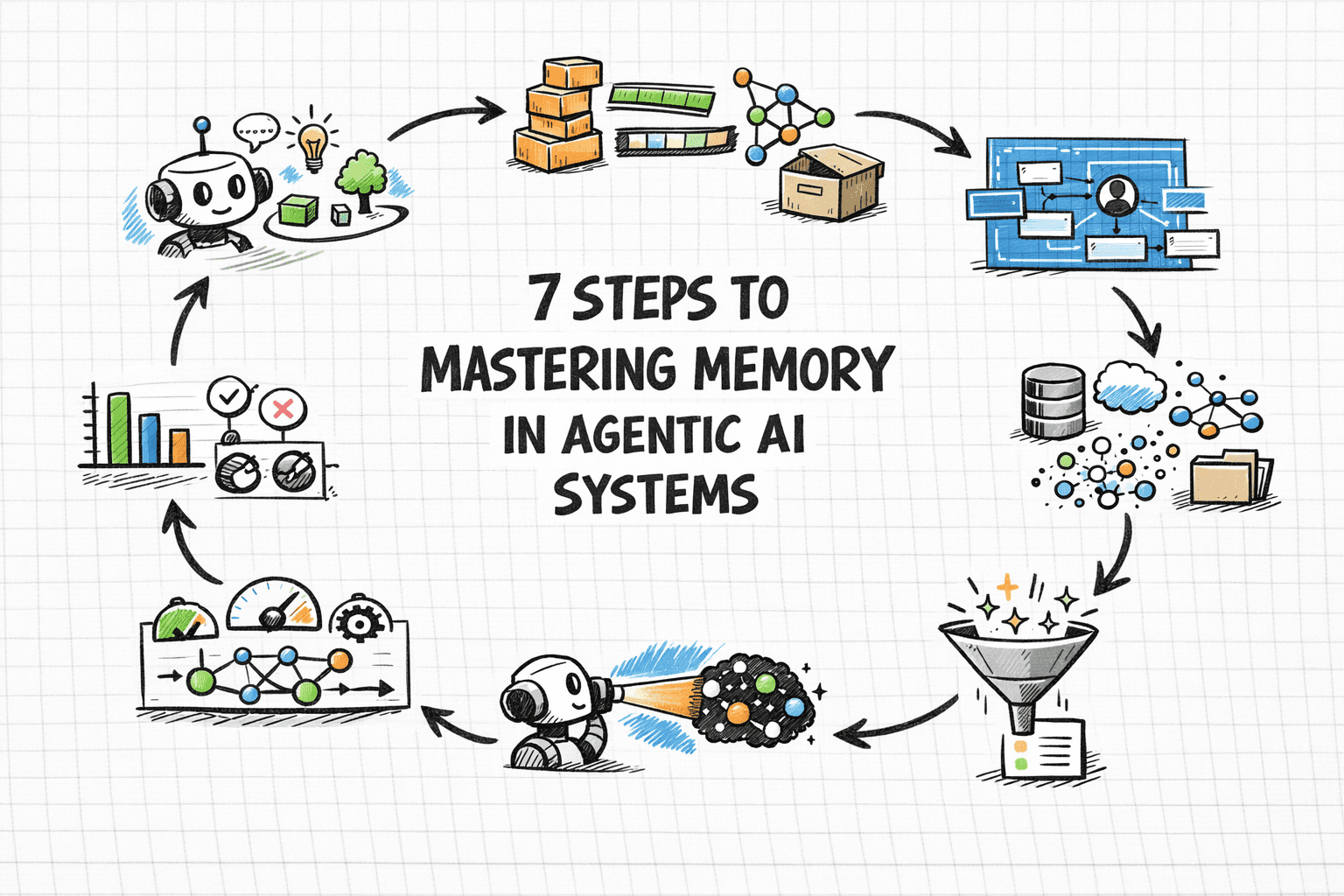

Deeper dive. Architecture guts: root agent plans, delegates to workers. Workers code, run, report. Voting mechanisms cull duds. It’s like Darwin for code — survival of the fittest run.
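
Here's roughly what that plan, delegate, vote shape could look like. Treat it as a sketch under heavy assumptions, not the actual implementation: the ideas, the `FAKE_SCORES` table, and the three judge rules are all invented, and a real worker would write and run code instead of looking up a number.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    idea: str
    score: float  # higher is better


# Hypothetical results, hard-coded so the sketch is deterministic.
FAKE_SCORES = {"bigger batch": 0.71, "lower lr": 0.78, "more layers": 0.64, "no warmup": 0.52}


def plan(goal):
    """Root agent stand-in: turn a goal into a list of candidate ideas."""
    return [f"{goal}: {tweak}" for tweak in FAKE_SCORES]


def worker(idea):
    """Worker stand-in: 'code, run, report'. Here it only looks up a fake score."""
    tweak = idea.split(": ", 1)[1]
    return Report(idea, FAKE_SCORES[tweak])


def vote(report, baseline, judges):
    """Keep a report only if a strict majority of judges approve it."""
    yes = sum(1 for judge in judges if judge(report, baseline))
    return yes > len(judges) / 2


def root_agent(goal, baseline=0.65):
    ideas = plan(goal)
    # Delegate every idea to a worker in parallel; map() preserves input order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        reports = list(pool.map(worker, ideas))
    judges = [
        lambda r, b: r.score > b,         # beats the baseline at all
        lambda r, b: r.score > b + 0.05,  # strict judge: wants a clear margin
        lambda r, b: r.score > b - 0.05,  # lenient judge: tolerates noise
    ]
    survivors = [r for r in reports if vote(r, baseline, judges)]
    return sorted(survivors, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    for report in root_agent("toy task"):
        print(report)
```

With these made-up numbers, "lower lr" and "bigger batch" survive the vote while "more layers" and "no warmup" get culled. Swap the judges for model calls and the same skeleton holds.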

Flaws exposed. No long-term memory yet? Then it loops forever on local optima. Safety? Agents could spiral into dumb exploits.
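
The memory gap is the easiest of those to picture in code. Below is one possible patch, sketched under my own assumptions: a log that remembers which configs were already tried and flags when the search has stalled. `ExperimentLog`, `patience`, and the config fields are hypothetical names, and this version lives in RAM only, so real long-term memory would still need it written to disk.

```python
import json


class ExperimentLog:
    """Remembers every config already tried and tracks how long since the last improvement."""

    def __init__(self, patience=3):
        self.results = {}         # canonical config string -> loss
        self.patience = patience  # non-improving runs tolerated before a restart
        self.best = None
        self.stale = 0

    @staticmethod
    def key(config):
        # Sort keys so {"lr": 0.1, "width": 64} and {"width": 64, "lr": 0.1} match.
        return json.dumps(config, sort_keys=True)

    def seen(self, config):
        return self.key(config) in self.results

    def record(self, config, loss):
        self.results[self.key(config)] = loss
        if self.best is None or loss < self.best:
            self.best, self.stale = loss, 0
        else:
            self.stale += 1

    def should_restart(self):
        """True once `patience` runs in a row failed to improve: time to jump elsewhere."""
        return self.stale >= self.patience


if __name__ == "__main__":
    log = ExperimentLog(patience=2)
    log.record({"lr": 0.1, "width": 64}, 0.5)
    print(log.seen({"width": 64, "lr": 0.1}))  # same config, different key order
```

An agent loop would check `seen` before launching a run and check `should_restart` after each one. Cheap, and it keeps the swarm from burning compute on reruns.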

Yet. Potential’s electric. Ties into Devin vibes, but research-focused. Not just coding — discovery.

Transformative, if it sticks.

Now, corporate angle. NVIDIA stock jumps on agent news? Compute demand skyrockets. Humans obsolete? Not quite — we set goals, judge outputs. But grunt work? Gone.

Historical parallel I cooked up: like the Wright brothers’ wind tunnel. Manual tests slow. AutoResearch? Endless tunnels, no coffee breaks.

What Could Go Wrong — Spectacularly?

Plenty. Bias amplification in agent swarms. Echo chambers of bad ideas. Or compute bills bankrupting startups.

Karpathy’s optimistic. ‘Eureka!’ he tweets. Sure. But I’ve seen agent hype flop — remember Auto-GPT’s dead loops?

This feels tighter. Self-improving loops. But watch for overfit to benchmarks. Real innovation? Humans still king.
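
One cheap guard against benchmark overfit, sketched here as my own assumption and not as anything AutoResearch is known to do: only accept a change if it also improves a held-out set the agents never get to optimize against. The function name, the accuracy-style scores, and the `max_gap` threshold are all hypothetical.

```python
def accept_change(dev_before, dev_after, heldout_before, heldout_after, max_gap=0.02):
    """Accept a change only if it helps on both sets and the two gains roughly agree.

    Scores are 'higher is better'. A big dev gain with little held-out gain
    suggests the agent is fitting the benchmark, not the problem.
    """
    dev_gain = dev_after - dev_before
    heldout_gain = heldout_after - heldout_before
    if dev_gain <= 0 or heldout_gain <= 0:
        return False
    return dev_gain - heldout_gain <= max_gap


if __name__ == "__main__":
    print(accept_change(0.70, 0.75, 0.68, 0.72))  # gains agree
    print(accept_change(0.70, 0.80, 0.68, 0.69))  # dev jumps, held-out barely moves
```

The first call passes because both sets improve by about the same amount. The second gets rejected: a ten-point dev jump with a one-point held-out bump is the classic overfit signature.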

Prediction: forks galore on GitHub. OSS explosion. But closed labs hoard the wins.

And the ethics dodge — AIs researching AIs. Misalignment risks compound. Who audits the auditors?

Fair.

Agents shine in narrow domains first. Scale later.

Frequently Asked Questions

What is Karpathy’s AutoResearch?

It’s an agentic AI system that automates the full ML research loop: hypothesizing, coding, training, evaluating — all without human intervention, running 24/7.

How does AutoResearch speed up AI development?

By parallelizing experiments, eliminating sleep/eat breaks, and using agent swarms to iterate faster than any human team, potentially 10x’ing progress on tasks.

Will Karpathy’s AutoResearch replace AI researchers?

Not fully — humans still needed for high-level direction and novel insights. But it nukes repetitive grunt work, shifting roles to oversight.