What if the next big leap in AI wasn’t a fatter neural net, but a smarter way to orchestrate one?

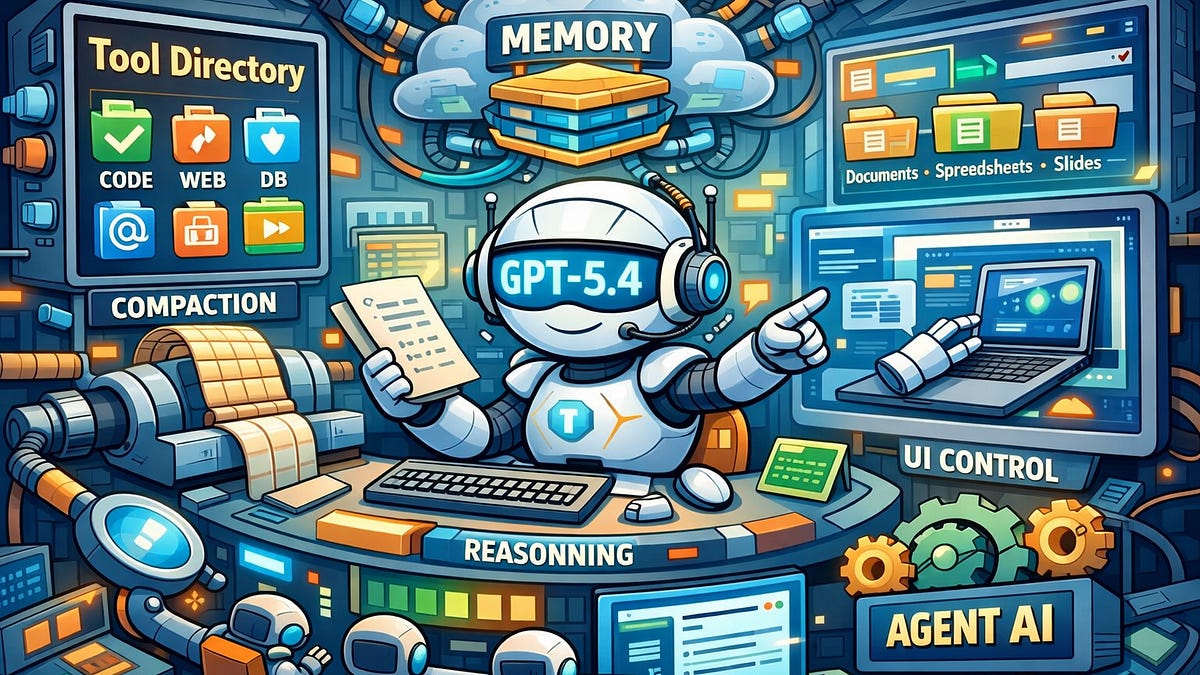

GPT-5.4 drops that bomb. OpenAI’s latest isn’t tweaking transformers under the hood—it’s wrapping the whole damn thing in an execution layer that mimics an operating system. We’re talking reasoning loops, persistent memory, tool orchestration, multimodal inputs—all baked into a runtime that feels alive, not just responsive.

Look, for years we’ve obsessed over parameter counts. GPT-3: 175 billion. GPT-4: who knows, but massive. GPT-5.4? Numbers still matter, but they’re secondary now. The real juice is in the system-centric architecture, where the model sits as the brain in a body of agents, caches, and APIs. It’s like upgrading from a calculator to a full computer.

Why Does GPT-5.4 Suddenly Act Like an OS?

Picture this: traditional LLMs spit out text. Done. GPT-5.4? It manages its own state. Loads tools on the fly. Chains thoughts across sessions. Handles vision, voice, code execution without breaking a sweat. OpenAI’s folding these capabilities into one unified stack: neural core plus runtime environment. No more bolting on LangChain hacks; it’s native.
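To make "neural core plus runtime environment" concrete, here is a minimal sketch of that shape: a loop that owns the state and the tools, with the model as a pluggable function inside it. Every name here (`Runtime`, `toy_model`, the `call <tool> <arg>` convention) is made up for illustration and is not OpenAI's API; the "model" is a scripted stand-in.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical names throughout: an illustration of "model as engine inside
# a runtime loop", not a real vendor API.


@dataclass
class Runtime:
    model: Callable[[List[str]], str]                  # stand-in for the neural core
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    state: List[str] = field(default_factory=list)     # state the runtime keeps between steps

    def run(self, task: str, max_steps: int = 5) -> str:
        self.state.append(f"task: {task}")
        for _ in range(max_steps):
            decision = self.model(self.state)
            if decision.startswith("call "):
                # Assumed convention: "call <tool> <argument>"
                _, name, arg = decision.split(" ", 2)
                result = self.tools[name](arg)
                self.state.append(f"{name}({arg}) -> {result}")
            else:
                self.state.append(f"answer: {decision}")
                return decision
        return "step limit reached"


def toy_model(state: List[str]) -> str:
    """Scripted stand-in: requests the 'add' tool once, then reports its result."""
    last = state[-1]
    if last.startswith("task: "):
        return "call add 2,3"
    return last.split(" -> ")[1]


def add(arg: str) -> str:
    a, b = arg.split(",")
    return str(int(a) + int(b))


runtime = Runtime(model=toy_model, tools={"add": add})
answer = runtime.run("what is 2 + 3?")
print(answer)
print(runtime.state)
```

The point of the sketch is the division of labor: the model only decides, while the loop around it executes tools and carries state forward.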

And here’s the data point that hooked me: leaked early benchmarks reportedly show GPT-5.4 beating its predecessors by 40-60% on multi-step agentic planning tasks. That’s not hype; it’s execution velocity. Markets love velocity.

But wait—OpenAI’s not spilling full specs. Mixture-of-experts? Probably scaled up. Attention tweaks? Sure. The innovation’s outside: that cognitive runtime.

“GPT-5.4 represents a shift from a model-centric architecture to a system-centric architecture. The neural network is still the core intelligence, but it increasingly functions as the cognitive engine inside a much larger execution environment.”

Spot on. Yet OpenAI’s PR glosses over the messy part: this demands massive infra. Think Azure bills spiking 10x for enterprises.

It’s not flawless. Latency spikes on complex chains. Hallucinations? Still there, just better sandboxed. But damn, it’s a step.

Is GPT-5.4 OpenAI’s Unix Moment?

Here’s my take: this echoes Unix in the ’70s. Back then, hardware was king and mainframes ruled. Unix flipped it: a portable OS that could move from machine to machine made the software layer, not the box, the thing that mattered. Billions in value unlocked.

GPT-5.4 does the same for AI. Models were the “hardware”—expensive, siloed. Now? A portable cognitive OS anyone can run agents on. Devs build apps atop it, not inside it. OpenAI captures the platform tax, à la Microsoft.

Market dynamics scream bullish. Anthropic’s Claude plays catch-up with tools, but no full runtime. Google? Gemini’s multimodal, yet fragmented. xAI? Grok’s fun, but agent-weak. GPT-5.4 positions OpenAI as the iOS of AI—ecosystem lock-in via app store vibes (hello, custom GPTs 2.0).

Prediction: by Q4 2025, 60% of enterprise AI pilots shift to system APIs over raw models. That’s $50B TAM expansion.

Skeptical? Fair. OpenAI’s burned us on timelines before. GPT-5 was “soon” forever. But leaks align: internal demos show OS-like persistence, where models “remember” across reboots via vector stores.
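What "remembering across reboots via vector stores" could look like, in miniature: notes are written to disk, and a fresh process finds the relevant one by similarity. This is a toy sketch under loud assumptions. The bag-of-words `embed` function stands in for a learned embedding model, the JSON file stands in for a real vector database, and `MemoryStore` is a hypothetical name.

```python
import json
import math
import os
import tempfile
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Dict[str, float]:
    """Toy bag-of-words 'embedding'; a real system would use a learned model."""
    return dict(Counter(text.lower().split()))


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    dot = sum(a[k] * b.get(k, 0.0) for k in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """Hypothetical persistent memory: a JSON file plus similarity search."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.items: List[str] = []
        if os.path.exists(path):
            with open(path) as f:
                self.items = json.load(f)

    def remember(self, text: str) -> None:
        self.items.append(text)
        with open(self.path, "w") as f:
            json.dump(self.items, f)

    def recall(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda t: cosine(q, embed(t)), reverse=True)
        return ranked[:k]


path = os.path.join(tempfile.mkdtemp(), "memory.json")
MemoryStore(path).remember("user prefers answers in French")
MemoryStore(path).remember("deploy target is eu-west")

# A fresh instance simulates a "reboot": nothing is held in process memory.
rebooted = MemoryStore(path)
recalled = rebooted.recall("user language preference")
print(recalled)
```

Nothing about the model changes between sessions here; the persistence lives entirely in the store around it.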

Billions at stake.

How Does This Reshape AI Markets—and Your Stack?

Enterprises salivate. No more stitching Zapier + Pinecone + LlamaIndex. GPT-5.4’s stack handles memory management natively: think virtual memory for thoughts. Tool usage? Integrated, zero-shot. Multimodal? It smoothly parses image, text, and code together.
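The "virtual memory for thoughts" analogy can be sketched in a few lines: a small working set plays the context window, a larger backing store plays long-term memory, and the least recently used note gets paged out when space runs short. This is only an illustration of the analogy, not a description of how GPT-5.4 works; `PagedContext` and its fields are hypothetical.

```python
from collections import OrderedDict
from typing import Dict


class PagedContext:
    """Small working set of notes plus a larger backing store.

    Hypothetical illustration: `capacity` stands in for a context window,
    `backing` for long-term storage.
    """

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.resident: "OrderedDict[str, str]" = OrderedDict()
        self.backing: Dict[str, str] = {}
        self.faults = 0

    def write(self, key: str, note: str) -> None:
        self.backing.pop(key, None)        # drop any stale paged-out copy
        self.resident[key] = note
        self.resident.move_to_end(key)     # mark as most recently used
        self._evict()

    def read(self, key: str) -> str:
        if key not in self.resident:
            # "Page fault": pull the note back in from the backing store.
            self.faults += 1
            self.resident[key] = self.backing.pop(key)
        self.resident.move_to_end(key)
        self._evict()
        return self.resident[key]

    def _evict(self) -> None:
        # Page out least recently used notes until the working set fits.
        while len(self.resident) > self.capacity:
            old_key, old_note = self.resident.popitem(last=False)
            self.backing[old_key] = old_note


ctx = PagedContext(capacity=2)
ctx.write("goal", "ship the report")
ctx.write("draft", "intro done")
ctx.write("todo", "add charts")      # working set is full, so "goal" is paged out
note = ctx.read("goal")              # fault: "goal" comes back, "draft" is paged out
print(note, ctx.faults, list(ctx.resident))
```

LRU is just one eviction policy picked for brevity; a real runtime might rank notes by relevance to the current task instead.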

But here’s the sharp edge: it’s proprietary. Open-source fans rage—Llama 3.1’s great, but lacks this glue. OpenAI’s betting closed wins; history says maybe (Windows) or nah (Android).

For devs: refactor now. Agent frameworks like CrewAI? Obsolete fast. Build for GPT-5.4’s runtime—expose plugins, it’ll orchestrate.

Investors: expect OpenAI’s valuation to hit $300B on this. Microsoft? A 20% tailwind. Compute giants like Nvidia are indifferent to who wins: either way, more cycles are needed.

Critique the spin: OpenAI calls it “general-purpose cognitive runtime.” Cute, but it’s OS-lite. No kernel-level security yet. No true parallelism across models. Still, closer than rivals.

Wander a sec: remember Siri? Meant to be OS-level. Fizzled. GPT-5.4 integrates deeper and could embed in Windows, macOS. Microsoft is already whispering about that collab.

The Risks No One’s Shouting About

Overreliance. If GPT-5.4’s your OS, one outage = blackout. Recall Knight Capital’s algo glitch? $440M gone in minutes. Scale to cognition—trillions exposed.
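One common hedge against the single-outage blackout is to keep a second provider wired in and stop hammering the first once it keeps failing. Here is a minimal sketch of that failover idea, assuming two interchangeable text providers; `Failover`, `primary`, and `backup` are hypothetical stand-ins, and the outage is simulated.

```python
from typing import Callable, List


class Failover:
    """Try providers in order; skip one after repeated failures."""

    def __init__(self, providers: List[Callable[[str], str]], max_failures: int = 2) -> None:
        self.providers = providers
        self.max_failures = max_failures
        self.failures = [0] * len(providers)

    def ask(self, prompt: str) -> str:
        for i, provider in enumerate(self.providers):
            if self.failures[i] >= self.max_failures:
                continue  # circuit open: stop calling a provider that looks down
            try:
                reply = provider(prompt)
            except Exception:
                self.failures[i] += 1
                continue
            self.failures[i] = 0  # a success resets the failure count
            return reply
        raise RuntimeError("all providers unavailable")


def primary(prompt: str) -> str:
    # Simulated outage of the main runtime.
    raise ConnectionError("simulated outage")


def backup(prompt: str) -> str:
    return f"backup handled: {prompt}"


client = Failover([primary, backup])
replies = [client.ask("summarize Q3") for _ in range(3)]
print(replies)
print(client.failures)
```

The sketch never re-tests a provider once its circuit opens; a production version would retry after a cooldown.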

Energy hog. OS + model = datacenter furnace. CO2 spikes unless fusion pans out.

Regulatory noose. EU’s AI Act eyes “high-risk systems.” GPT-5.4? Prime target.

Yet the upside dwarfs the risks. Think productivity boom, like the internet in ’95.

Frequently Asked Questions

What is GPT-5.4 exactly?

OpenAI’s latest model evolving into a system with OS-like features: reasoning, memory, tools, all integrated.

How does GPT-5.4 differ from GPT-4?

GPT-4 chats; GPT-5.4 runs persistent agents, manages state, and orchestrates tools natively, with a reported 40-60% gain on multi-step planning tasks.

Will GPT-5.4 replace developers?

Nah—amplifies them. It handles grunt work; humans design the runtime extensions.