Greg Abbott stared at his phone screen Saturday night, thumb hovering, then tapped ‘like’ on what looked like divine intervention.

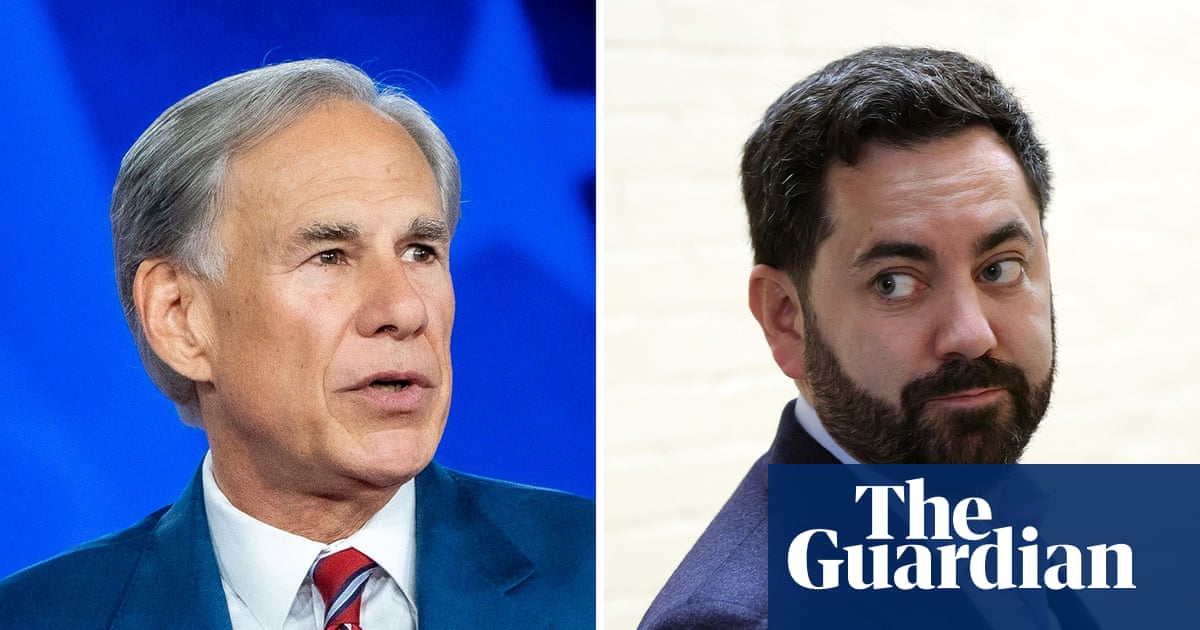

That image — a US airman, safe amid grinning special forces, flag across his lap — screamed victory over Iran. But it was pure AI fabrication, cooked up by some pro-Trump account on X, and it snagged not just the Texas governor but Ken Paxton and Mike Lawler too. Abbott gushed to his 1.4 million followers: “This is so awesome… God is sending a message to our enemies!” (He deleted it later, of course.)

How Did a Pixel-Perfect Fake Slip Past Seasoned Politicians?

Look, these aren’t rookies. Abbott’s been in the game decades, Paxton’s a lawyer-turned-Senate hopeful, Lawler’s a congressman. Yet here’s this AI-generated image, reshared 21,000 times before X slapped a warning on it: “This photo probable AI generated.” Probable? That’s the problem — it’s not screaming ‘fake’ at first glance.

The ‘how’ lies in the architecture of these tools. Modern image generators like Midjourney or Stable Diffusion don’t just slap together blobs anymore; they’ve got diffusion models trained on billions of real photos, nailing details like fabric folds, skin textures, even the subtle camaraderie in soldiers’ smiles. Why’d it work? Because it fed a narrative — Easter weekend heroics — perfectly timed after vague reports of a downed plane. No wild distortions, just plausible fill for a news vacuum.

Abbott fell for it hard. Remember March? He posted War Thunder gameplay as a US warship blasting an Iranian jet. Or 2023’s Garth Brooks hoax. Pattern here: rush to amplify, verify later (if ever).

But Republicans aren’t solo victims.

Democrats play too — Keith Edwards’ walker-Trump image hit 13.5 million views. Gavin Newsom’s cuffed-Trump fantasies. Post-border shootings, fakes of nurses at gunpoint flooded feeds. It’s bipartisan slop.

“Details can get mistaken or altered in a way that is dangerous in these very volatile situations,” Hany Farid, a University of California digital forensics expert, told the Minneapolis Star Tribune. “In the fog of war and in conflict, it is just really messy, and we are simply adding noise to an already complicated and difficult situation.”

Farid nails it. AI doesn’t invent from thin air; it remixes reality, tweaking just enough to evade quick eyes.

Why Is AI Slop Exploding Around Breaking News?

Blame the speed. Maduro’s January grab? Boom — AI images of him in cuffs, missiles over Caracas, Venezuelans cheering. No photos? No problem. Generators churn variants in seconds.

NewsGuard’s Sofia Rubinson called it: these fakes “do not drastically distort the facts on the ground,” but they “fill gaps in real-time reporting.” That’s the genius (or curse) of latent diffusion: models learn the statistical look of real photos, so a ‘rescued pilot’ prompt draws on patterns absorbed from the likes of war photography, special-forces pics, flag ceremonies. It isn’t retrieving any single archive shot; it’s sampling something that resembles all of them. Output? Eerily convincing.
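For the curious, here is a toy sketch of the "noising" half of a diffusion model, the part that turns training photos into static so the model can learn to reverse the process. It is not any vendor's pipeline: the linear schedule, the step count, and the names `alpha_bar_schedule` and `noise_sample` are illustrative assumptions, and a single number stands in for a whole image.

```python
import math
import random


def alpha_bar_schedule(steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for an assumed linear noise schedule."""
    alpha_bars = []
    running = 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_sample(x0, t, alpha_bars, rng):
    """Forward process: blend a clean value with Gaussian noise at step t."""
    signal = math.sqrt(alpha_bars[t])       # how much of the original survives
    noise = math.sqrt(1.0 - alpha_bars[t])  # how much static is mixed in
    return signal * x0 + noise * rng.gauss(0.0, 1.0)


alpha_bars = alpha_bar_schedule()
rng = random.Random(0)
pixel = 0.8  # hypothetical stand-in for one pixel of a real photo

for t in (0, 250, 999):
    kept = math.sqrt(alpha_bars[t])
    print(f"step {t}: signal kept = {kept:.4f}, noisy value = {noise_sample(pixel, t, alpha_bars, rng):.3f}")
```

By the last step almost none of the original value survives. A generator is trained to walk that path backwards, from static to something photo-shaped, which is why the result reads as plausible rather than copied.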

X’s algorithm loves it. Emotional spikes — joy, rage — drive engagement. That pro-Trump post? Viral before scrutiny. Platforms add labels post-facto, but damage done: Trump was set for a Monday presser on the ‘rescue.’ Imagine the spin.

This echoes the 1898 Hearst yellow journalism wars, where sketched fakes of Spanish atrocities fueled the Spanish-American War. Back then, printing presses took days; now, AI democratizes deception in seconds. Prediction? By the 2026 midterms, we’ll see watermark mandates: not optional, but enforced via federal API hooks on generators. Tech giants’ll fight it, crying censorship, but public outrage (like Billy Binion’s “crash course in media literacy” plea) will force hands.

It’s bleak.

Abbott didn’t comment Monday. His team? Silent. But the why runs deeper than one gaffe — it’s architectural. Social feeds prioritize novelty over truth; humans crave confirmation. AI exploits both, architecting trust erosion one like at a time.

Take the image itself. Airman’s blank face (no ID, smart), soldiers’ generic joy, flag’s perfect drape. Detectors often flag mangled hands, but this one’s clean; newer pipelines can tidy those giveaways with tools like ControlNet conditioning and inpainting.

Paxton liked it raw. Lawler captioned: “God Bless America!” Deleted too. Pattern: delete, deny, move on.

What Happens When Trump Weighs In?

Monday’s presser loomed. If he’d echoed the fake? Amplification to Mar-a-Lago levels. We’ve seen it — his crowd-surfing Pope hoax, election deepfakes. But this? Ties to Iran tensions, Easter symbolism. PR gold if real; mud if not.

Critique the spin: Abbott’s “God is sending a message” isn’t just hype — it’s theological weaponization, blending faith with unverified pixels. Dangerous in red states where skepticism’s sin.

Broader shift: real-time forensics needed. The tools exist, like Hive Moderation for detection and Truepic for provenance, but adoption lags. Why? Users want unfiltered feeds. Platforms profit from chaos.

Fix this, or democracy drowns in diffusion models.

Billy Binion got it right — we need discernment bootcamp. Not top-down literacy classes, but UI overhauls: hover-for-source, AI-probability badges pre-share. X tried warnings; too late.
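What a pre-share badge might look like in code, as a rough sketch only: it assumes the platform already has some detector score between 0 and 1 and knows whether a source was found. The function `pre_share_check`, the `ShareDecision` type, the badge text, and both thresholds are hypothetical, not anything X or another platform is known to ship.

```python
from dataclasses import dataclass


@dataclass
class ShareDecision:
    badge: str                  # text shown on the post before sharing ("" = none)
    require_confirmation: bool  # whether to add a "share anyway?" step


def pre_share_check(ai_probability: float, has_source: bool,
                    warn_at: float = 0.5, likely_at: float = 0.9) -> ShareDecision:
    """Decide what to show a user before a reshare (thresholds are assumptions)."""
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0 and 1")
    if ai_probability >= likely_at:
        return ShareDecision("Likely AI-generated", True)
    if ai_probability >= warn_at:
        return ShareDecision("Possibly AI-generated", True)
    if not has_source:
        return ShareDecision("No source found", True)
    return ShareDecision("", False)


# Example: a mid-confidence detector score on an image with no traceable source.
print(pre_share_check(0.72, has_source=False))
```

The point of the design is timing: the friction lands before the reshare, not 21,000 reshares later.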

Historical parallel? 1917’s Creel Committee faked atrocity posters to sell WWI. Statecraft via image. Today? Bottom-up, anyone with a prompt.

Will AI Misinfo Kill Media Literacy—or Evolve It?

Evolve, if we’re lucky. Detectors race generators — adversarial training loops. But arms race favors creators; open-source models leapfrog closed ones.

Politicos adapt slow. Abbott’s repeat dupes scream complacency. Or strategy? Share first, scrub later — virality wins.

Frequently Asked Questions

What was the AI image that fooled Republicans?

It showed a US airman rescued from Iran, surrounded by smiling special forces with a flag on his lap — shared on X, liked by Abbott, Paxton, Lawler.

How do you spot AI-generated images like this?

Check hands/fingers, lighting inconsistencies, generic faces; use tools like Illuminarty or Google’s reverse image search, and train your eye to catch emotional overkill.

Is this just Republicans or everyone getting fooled by AI fakes?

Everyone — Dems share Trump walker pics, Newsom’s handcuff fantasies; it’s partisan fuel for all sides in the misinformation wars.

And that’s the deep dive. Pixels over people, for now.