Reinforcement Learning from Human Feedback (RLHF) is arguably the most consequential technique in modern AI development. It is the process that transformed large language models from impressive but unreliable text generators into the useful, aligned AI assistants that millions of people interact with daily. Without RLHF, models like ChatGPT, Claude, and Gemini would produce technically fluent text that frequently ignores instructions, generates harmful content, and fails to provide genuinely helpful responses. Understanding RLHF is essential for anyone seeking to comprehend how modern AI systems are built and why they behave the way they do.

The Alignment Problem

Large language models are trained on vast corpora of internet text using a simple objective: predict the next token. This training procedure produces models with remarkable language capabilities, but it does not teach them what humans actually want. A model trained purely on next-token prediction has learned to generate text that is statistically likely given the input, which is not the same as text that is helpful, accurate, harmless, or aligned with user intent.

A base language model asked "How do I pick a lock?" will typically just continue with instructions, because such content exists in its training data. It has no concept that some requests should be declined or redirected. It will confidently state incorrect facts because it has no training signal that distinguishes accuracy from plausibility. It may ramble, repeat itself, or drift off topic because nothing in its objective rewards a focused answer. RLHF addresses these gaps by training the model to optimize for human preferences rather than mere statistical likelihood.

The Three Phases of RLHF

Phase 1: Supervised Fine-Tuning (SFT)

The first phase creates a foundation of desired behavior through supervised learning. Human annotators write high-quality responses to a diverse set of prompts, demonstrating the style, format, accuracy, and helpfulness expected from an AI assistant. The base language model is fine-tuned on this demonstration data, learning to produce responses that resemble the human-written examples.

This phase is critical because it shifts the model's output distribution from "text that sounds like the internet" to "text that sounds like a helpful assistant." However, supervised fine-tuning alone is insufficient because it can only teach the model to imitate specific examples. It does not provide a general signal for what makes responses better or worse.
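The fine-tuning step described above is usually implemented as ordinary next-token cross-entropy on the demonstration data. The following is a minimal sketch in plain Python, not a production training loop. The function names, the toy three-token vocabulary, and the convention of masking out prompt tokens so that only response tokens contribute to the loss are illustrative assumptions.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over one list of vocabulary logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]

def sft_loss(logits_per_step, target_ids, loss_mask):
    """Mean negative log-likelihood of the demonstration tokens.

    logits_per_step: one list of vocabulary logits per position
    target_ids:      the token the human demonstration contains at each position
    loss_mask:       1 for response tokens, 0 for prompt tokens (assumed convention)
    """
    total, count = 0.0, 0
    for logits, target, keep in zip(logits_per_step, target_ids, loss_mask):
        if keep:
            total -= log_softmax(logits)[target]
            count += 1
    return total / max(count, 1)

# Toy example: vocabulary of 3 tokens, 3 positions, the first position is prompt.
logits = [[2.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
targets = [0, 1, 2]
mask = [0, 1, 1]
loss = sft_loss(logits, targets, mask)
```

Because the loss only rewards matching the demonstrated tokens, it says nothing about how two non-demonstrated responses compare, which is the gap the next phase addresses.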

Phase 2: Reward Model Training

The second phase builds an automated system for evaluating response quality. Human annotators are shown a prompt and multiple candidate responses generated by the SFT model, then asked to rank the responses from best to worst. These preference rankings are used to train a separate neural network — the reward model — that learns to predict which responses humans prefer.

The reward model essentially learns to assign a scalar score to any prompt-response pair, with higher scores indicating responses that humans would prefer. This model captures nuanced human preferences that are difficult to specify through explicit rules: it learns that concise, accurate answers are preferred over verbose, hedging ones; that responses acknowledging uncertainty are preferred over confident confabulations; that helpful engagement is preferred over refusal when requests are benign.

The quality of the reward model is crucial because it will guide all subsequent training. If the reward model has blind spots or systematic errors, these will be amplified during the reinforcement learning phase.
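One common way to turn rankings into a training signal is to split each ranking into pairs and apply a pairwise (Bradley-Terry style) loss, which pushes the score of the preferred response above the score of the other. The sketch below assumes that formulation; the text above does not commit to a specific loss, and the function names are hypothetical.

```python
import math

def pairwise_preference_loss(score_chosen, score_rejected):
    """-log(sigmoid(score_chosen - score_rejected)), computed stably."""
    margin = score_chosen - score_rejected
    # -log(sigmoid(margin)) equals log(1 + exp(-margin)); branch to avoid overflow.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

def ranking_to_pairs(ranked_responses):
    """Expand a best-to-worst ranking into (preferred, dispreferred) pairs."""
    return [
        (ranked_responses[i], ranked_responses[j])
        for i in range(len(ranked_responses))
        for j in range(i + 1, len(ranked_responses))
    ]

# A ranking of 4 responses yields 6 training pairs.
pairs = ranking_to_pairs(["best", "good", "fair", "worst"])
```

The loss is small when the reward model already scores the preferred response higher and grows roughly linearly when it gets the order wrong.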

Phase 3: Reinforcement Learning Optimization

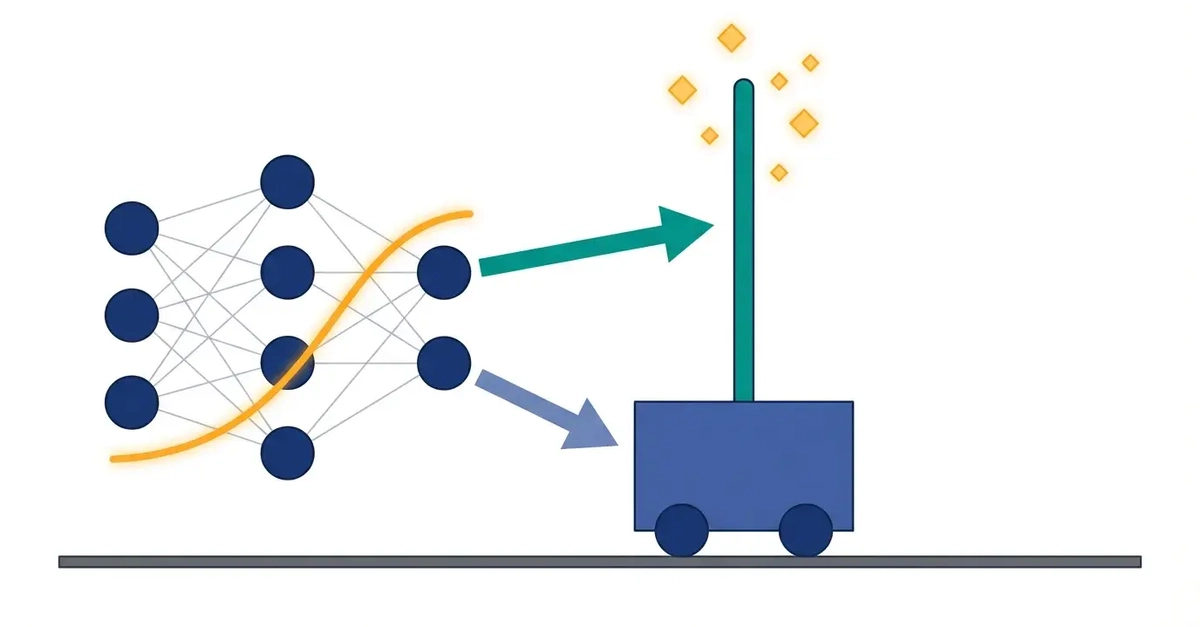

The final phase uses the reward model to further train the language model through reinforcement learning. The language model generates responses to prompts, the reward model scores these responses, and the scores are used as reward signals to update the language model's parameters. The optimization algorithm — typically Proximal Policy Optimization (PPO) — adjusts the model to produce responses that receive higher reward scores.
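For readers unfamiliar with PPO, its core is a clipped surrogate objective that limits how much a single update can change the probability of a sampled response. The sketch below shows that objective for one sample. The function name is hypothetical, `clip_eps=0.2` is a commonly used default rather than a value from this article, and the `advantage` argument is assumed to have been computed elsewhere from the reward scores.

```python
import math

def ppo_clipped_objective(logp_new, logp_old, advantage, clip_eps=0.2):
    """Clipped surrogate objective for one sampled action (to be maximized).

    logp_new:  log-probability under the policy being updated
    logp_old:  log-probability under the policy that generated the sample
    advantage: how much better the sample was than expected
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    # Taking the minimum removes any incentive to move the ratio outside the clip range.
    return min(ratio * advantage, clipped_ratio * advantage)
```

When the new and old policies agree, the ratio is 1 and the objective reduces to the advantage itself.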

A critical component of this phase is the KL divergence penalty, which constrains how far the model can drift from its SFT starting point. Without this constraint, the model would quickly learn to exploit quirks in the reward model — generating responses that receive high reward scores but are not genuinely helpful. This phenomenon, known as reward hacking, is one of the central challenges of RLHF.
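A simple way to picture the KL penalty is as a deduction from the reward-model score: the more probable the response is under the current policy relative to the SFT reference model, the larger the deduction. The sketch below assumes a per-response, sample-based KL estimate and a coefficient `beta=0.1`. Both are illustrative choices, and the function name is hypothetical.

```python
def kl_penalized_reward(reward_score, logp_policy, logp_reference, beta=0.1):
    """Reward-model score minus beta times a sample-based KL estimate.

    logp_policy:    per-token log-probabilities of the sampled response
                    under the policy being trained
    logp_reference: per-token log-probabilities of the same tokens
                    under the frozen SFT model
    """
    kl_estimate = sum(p - r for p, r in zip(logp_policy, logp_reference))
    return reward_score - beta * kl_estimate

# If the policy has not moved from the reference, the reward is unchanged.
unchanged = kl_penalized_reward(1.0, [-0.5, -0.7], [-0.5, -0.7])
```

A larger `beta` keeps the model closer to its SFT behavior at the cost of slower reward improvement; a smaller one allows more drift and more room for reward hacking.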

Why RLHF Works

RLHF's effectiveness stems from a fundamental asymmetry: it is much easier for humans to evaluate and compare responses than to write optimal responses from scratch. A human evaluator can quickly determine that response A is more helpful, accurate, and well-structured than response B, even if that same human could not have written response A from a blank page. RLHF exploits this asymmetry, using comparative human judgment to guide the model toward outputs that satisfy human preferences.

This approach also allows the model to discover strategies for helpfulness that human demonstrators might not have explicitly shown. During RL training, the model explores different response strategies and learns which ones receive higher rewards, potentially finding approaches that are better than any individual human demonstration.

Limitations and Challenges

Reward Hacking

Despite the KL penalty, models can learn to produce responses that score well on the reward model without being genuinely better. Common failure modes include generating longer responses (which reward models often prefer regardless of content quality), using confident-sounding language regardless of actual certainty, and catering to annotators' stylistic tastes or opinions rather than to correctness.

Annotator Bias and Disagreement

Human preferences are not uniform. Annotators bring their own biases, cultural perspectives, and quality standards. Preferences can conflict: some annotators prefer concise responses while others prefer comprehensive ones. The reward model must reconcile these disagreements, typically learning an average preference that may not serve any individual user optimally.
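The "average preference" claim can be made concrete with a short worked derivation. It assumes the reward model is trained with a pairwise logistic (Bradley-Terry style) loss, which is a common choice but not one this article specifies. Here $p$ is the fraction of annotators who prefer response A to response B, $\Delta = r(A) - r(B)$ is the reward margin, and $\sigma$ is the logistic sigmoid.

```latex
\begin{aligned}
\mathcal{L}(\Delta) &= -\,p \log \sigma(\Delta) \;-\; (1 - p)\log \sigma(-\Delta) \\
\frac{d\mathcal{L}}{d\Delta} &= -\,p\,\bigl(1 - \sigma(\Delta)\bigr) + (1 - p)\,\sigma(\Delta) \;=\; \sigma(\Delta) - p \\
\frac{d\mathcal{L}}{d\Delta} = 0 \;&\Longrightarrow\; \sigma(\Delta^{*}) = p
\;\Longrightarrow\; \Delta^{*} = \log\frac{p}{1 - p}
\end{aligned}
```

With $p = 0.7$, the optimal margin is $\Delta^{*} = \log(7/3) \approx 0.85$. Under this assumption the reward model encodes the 70/30 split as a moderate preference for A, and the 30% of annotators who preferred B are represented only as reduced confidence.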

Scalability of Human Feedback

Collecting high-quality human preference data is expensive and time-consuming. As models improve, the differences between responses become more subtle, and distinguishing quality requires annotators with greater expertise. This creates a bottleneck that limits how quickly RLHF can be iterated.

Alternatives and Extensions

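The limitations of RLHF have motivated several alternative approaches. Direct Preference Optimization (DPO) eliminates the separately trained reward model, optimizing the language model directly on preference pairs with a simple classification-style loss instead of a reinforcement learning loop. DPO is simpler to implement and removes the separate reward model as a target for exploitation, though it can still overfit the preference data. Because it learns from a fixed set of comparisons rather than from freshly sampled responses, it may also generalize less well than full RLHF.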
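The DPO objective for a single preference pair can be sketched in a few lines. The function name and the default `beta=0.1` are illustrative assumptions; the inputs are the summed log-probabilities of the preferred ("chosen") and dispreferred ("rejected") responses under the model being trained and under a frozen reference model.

```python
import math

def dpo_loss(logp_chosen, logp_rejected, ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO loss for one pair: -log(sigmoid(beta * difference of log-ratios))."""
    chosen_logratio = logp_chosen - ref_logp_chosen
    rejected_logratio = logp_rejected - ref_logp_rejected
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(margin)) equals log(1 + exp(-margin)); branch to avoid overflow.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Before any training the policy equals the reference, so the loss is log(2).
initial_loss = dpo_loss(-5.0, -5.0, -5.0, -5.0)
```

The loss falls as the model raises the probability of the chosen response, relative to the reference, by more than it raises the probability of the rejected one. The reference terms play a role similar to the KL penalty in PPO-based training.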

Constitutional AI (CAI), developed by Anthropic, uses the model itself to critique and compare responses against a written set of principles, reducing dependence on human annotators while maintaining alignment with specified values. More generally, RLAIF (Reinforcement Learning from AI Feedback) replaces human preference labels with labels produced by an AI model, enabling faster iteration at the cost of potentially compounding that model's biases.

The Broader Significance

RLHF represents more than a training technique — it embodies a philosophical approach to AI alignment. Rather than trying to specify desired AI behavior through explicit rules (which is intractable for complex behavior), RLHF uses human judgment to shape AI behavior empirically. The model learns what humans want not from a rulebook but from observing and optimizing for human preferences.

This approach has limitations: it assumes human evaluators can reliably identify good outputs, it risks encoding majority preferences at the expense of minority perspectives, and it may not scale to superhuman AI systems where humans can no longer evaluate output quality. But for the current generation of AI assistants, RLHF has proven remarkably effective at bridging the gap between raw language model capability and genuine utility.