The faint hum of servers in a data center feels a universe away from the jarring screech of metal on asphalt. But somewhere between those two realities, a new kind of AI is being forged.

Applied Intuition, a company that’s quietly morphed from YC-era autonomy tooling into a $15 billion behemoth, is on a mission to build this “physical AI.” Qasar Younis and Peter Ludwig, its co-founders, aren’t talking about chatbots that can hallucinate movie plots; they’re focused on getting AI to reliably operate heavy machinery, drive trucks through bustling Japanese cities, and navigate the unforgiving terrain of construction sites and defense systems. Their journey, spanning a decade, offers a granular look at the often-overlooked chasm between intelligent algorithms and tangible, real-world deployment.

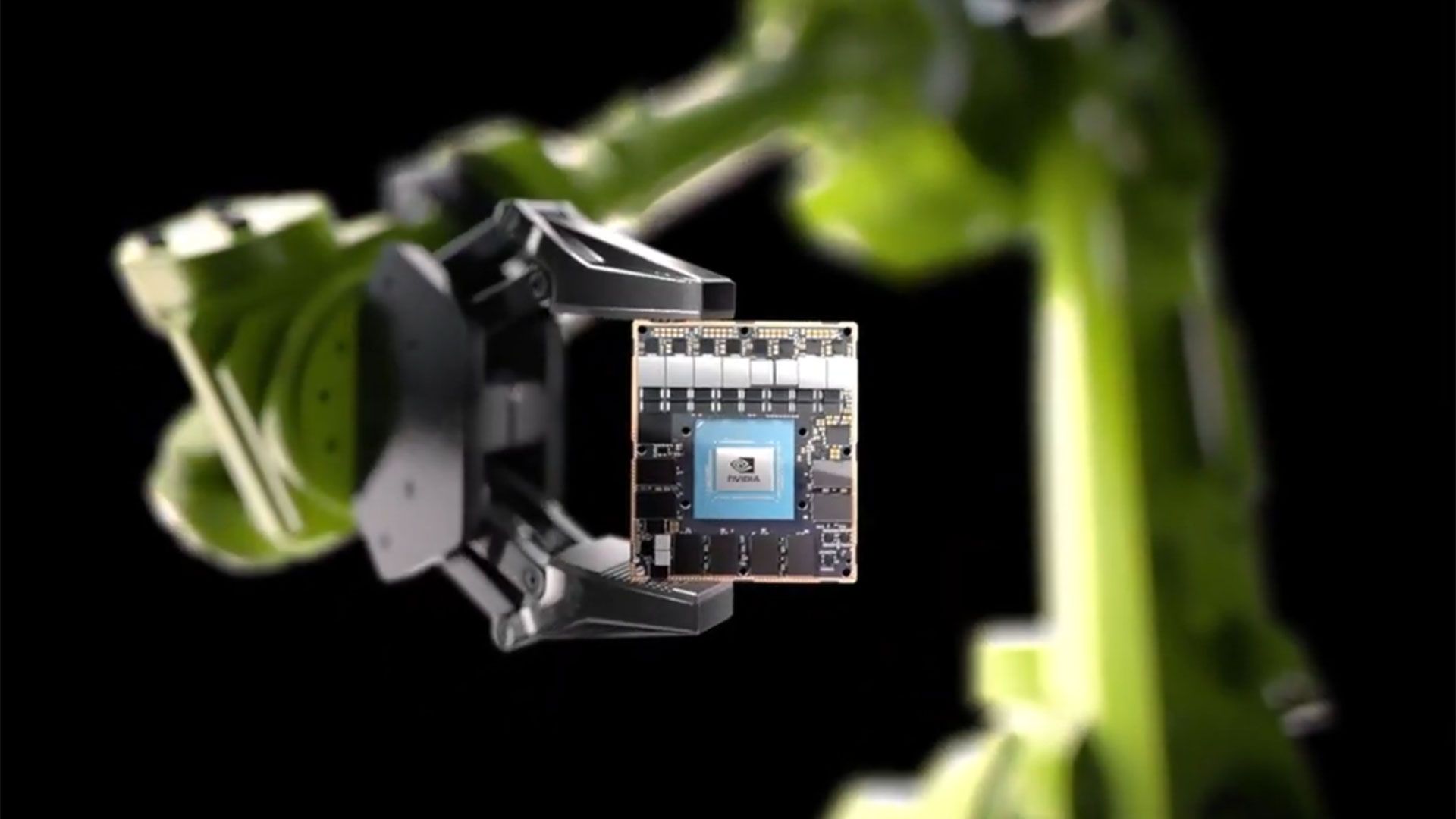

Here’s the thing: everyone’s excited about the models. OpenAI’s latest release, Google’s advances – they grab headlines. But Younis and Ludwig are quick to point out that model intelligence, while crucial, is no longer the primary bottleneck. The real head-scratcher, the genuine engineering challenge of our time, is getting these sophisticated AI systems to run safely and effectively on constrained hardware, with all the real-time deadlines, power limits, and physical constraints that entails.

Why Physical AI is Fundamentally Different

Think about it. An LLM can misinterpret a prompt, generate nonsensical text, or even invent facts. Annoying? Absolutely. But it doesn’t typically result in a catastrophic failure that causes physical harm. For safety-critical machines – driverless trucks, autonomous vehicles, industrial robots – the stakes are far higher. A mistake isn’t just a glitch; it’s a potential disaster. This demands a level of reliability and determinism that’s orders of magnitude beyond what we expect from our digital assistants.

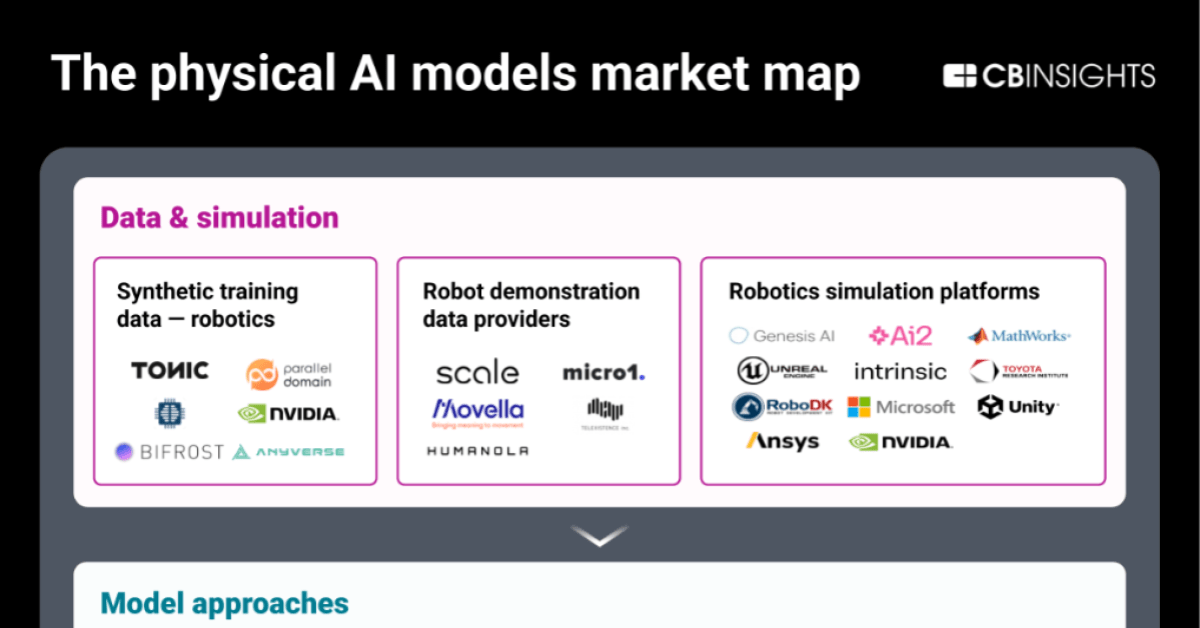

Applied Intuition’s evolution mirrors this complexity. They began by building simulation and data infrastructure, essentially the digital proving grounds for robotaxi companies. But as they dug deeper, they realized the need for a more holistic approach. It wasn’t enough to test in simulation; you needed robust operating systems designed for the unique demands of moving machines, and foundation AI models that understood the nuances of the physical world.

Their current platform, spanning more than 30 products, covers simulation, operating systems, and AI models. It’s a testament to the idea that physical AI isn’t just one thing; it’s a complex ecosystem where software, hardware, and real-world physics must harmonize.

Is Hardware the New Frontier?

Ludwig draws a compelling parallel: today’s complex vehicle and machine software stacks are like mobile phones before the advent of Android and iOS. Fragmented, proprietary, and difficult to manage. Applied Intuition aims to be the operating system layer, the foundational platform that allows for more standardized, reliable, and scalable development of physical AI applications. This isn’t about replicating an iPad’s functionality; it’s about building an OS capable of handling real-time sensor streams, managing latency in milliseconds, ensuring fail-safes, and enabling reliable over-the-air updates without the terrifying risk of “bricking” a multi-ton vehicle.
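The article doesn’t describe how Applied Intuition handles over-the-air updates. One widely used way to avoid bricking a device is an A/B (dual-slot) scheme, the same idea behind Android’s seamless updates: the new image is written to the slot the machine is not running from, booted on a trial basis, and committed only after it passes a health check; otherwise the machine keeps booting the known-good slot. The sketch below models that logic in Python. The class `ABSlotUpdater`, its methods, and the three-attempt limit are hypothetical illustrations, not any real product’s API.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ABSlotUpdater:
    """Hypothetical A/B (dual-slot) update controller, for illustration only."""
    active: str = "A"                     # slot the machine currently boots from
    images: Dict[str, Optional[str]] = field(
        default_factory=lambda: {"A": "v1", "B": None})
    pending: Optional[str] = None         # slot holding a not-yet-trusted image
    attempts_left: int = 0                # trial boots remaining for that image

    def inactive_slot(self) -> str:
        return "B" if self.active == "A" else "A"

    def stage(self, image: str, max_attempts: int = 3) -> None:
        # Write only to the slot we are NOT running from, so the
        # known-good image is never modified during the update.
        slot = self.inactive_slot()
        self.images[slot] = image
        self.pending = slot
        self.attempts_left = max_attempts

    def trial_boot(self, health_check_passed: bool) -> str:
        """Try the pending image once; return the slot that stays active."""
        if self.pending is None:
            return self.active
        self.attempts_left -= 1
        if health_check_passed:
            # Commit: the new image becomes the default boot slot.
            self.active = self.pending
            self.pending = None
        elif self.attempts_left <= 0:
            # Out of attempts: abandon the new image, keep the old one.
            self.pending = None
        return self.active

    def running_image(self) -> Optional[str]:
        return self.images[self.active]


if __name__ == "__main__":
    updater = ABSlotUpdater()
    updater.stage("v2")
    for _ in range(3):
        updater.trial_boot(health_check_passed=False)
    print(updater.active, updater.running_image())  # A v1
```

The key design choice is that the running image is never overwritten: a failed or interrupted update costs a few trial reboots, not the machine. A production system would add signature checks, bootloader support, and persistent boot counters, all of which this sketch leaves out.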

This shift from pure model development to a focus on deployment and infrastructure is why tooling companies, which felt a bit unfashionable a few years back, are back in vogue. The AI boom hasn’t just created smarter models; it’s highlighted the critical importance of the workflows and tools that allow those models to be effectively integrated into real-world systems.

“The hard part is deploying models onto real hardware, under safety, latency, power, cost, and reliability constraints.”

And even within Applied Intuition, AI isn’t just the product; it’s becoming a tool for their own engineers. Coding agents like Cursor and Claude Code, along with internal adoption leaderboards, are changing how they write software, even for the most safety-critical embedded systems. It’s a fascinating microcosm of how AI is permeating every layer of technological development.

The Unending Quest for Validation

One of the most significant challenges Younis and Ludwig highlight is verification and validation. As AI models become more intelligent, evaluating their performance becomes, paradoxically, harder. Traditional deterministic tests start to break down. End-to-end autonomy requires simulation that’s not just accurate but also fast and cheap enough to make reinforcement learning practical. The goal shifts from binary pass/fail to quantifying reliability as a number of “nines” (99.9%, 99.99%, and so on), a statistical approach to safety.
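The article doesn’t spell out the arithmetic behind “nines,” so here is a minimal sketch under stated assumptions. It treats each trial (a mile, a drive, or a simulated scenario; the unit is an assumption) as an independent pass/fail event. The number of nines in a success probability r is -log10(1 - r). If n trials all succeed, a per-trial failure probability of p or more can be rejected at confidence C once (1 - p)^n <= 1 - C; at 95% confidence this reduces to the familiar statistical “rule of three,” n ≈ 3/p. The function names are hypothetical.

```python
import math


def nines(reliability: float) -> float:
    """Count the 'nines' in a success probability: 0.999 -> ~3, 0.99999 -> ~5."""
    if not 0.0 <= reliability < 1.0:
        raise ValueError("reliability must be in [0, 1)")
    return -math.log10(1.0 - reliability)


def failure_free_trials_needed(max_failure_prob: float,
                               confidence: float = 0.95) -> int:
    """Smallest n such that, if n independent trials all succeed, a per-trial
    failure probability of max_failure_prob or more can be rejected at the
    given confidence. Solves (1 - p)^n <= 1 - confidence for n."""
    if not (0.0 < max_failure_prob < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("probabilities must be in (0, 1)")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - max_failure_prob))


if __name__ == "__main__":
    for p in (1e-3, 1e-5, 1e-7):
        n = failure_free_trials_needed(p)
        print(f"{nines(1 - p):.1f} nines: {n:,} failure-free trials (3/p = {3 / p:,.0f})")
```

Each additional nine multiplies the failure-free evidence required by roughly ten, which helps explain why simulation has to be fast and cheap, and why real-world miles alone cannot carry the whole burden.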

This is why real-world testing will never disappear. No simulator, however advanced, can perfectly replicate the chaos and unpredictability of reality. High-profile incidents involving companies like Cruise and Waymo underscore this point. Public trust isn’t built solely on technical prowess; it’s built on consistent, reliable performance and on how companies engage with regulators and the public when things inevitably go wrong. Waymo, they suggest, has set a high bar for the industry through its measured approach.

World models are another area of intense focus. Understanding not just visual cues but also cause and effect – how hydroplaning affects a vehicle, how the dynamics of construction equipment change with load – is crucial. But these world models need to be highly efficient for onboard systems, which have strict limits on latency, power consumption, and size. That calls for distillation-like techniques that shrink massive data-center models down to their essential, performant core.
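The article mentions “distillation-like techniques” without detail. As one concrete, textbook example, the sketch below implements the soft-target knowledge-distillation loss from Hinton, Vinyals and Dean (2015): a small student model is trained to match the temperature-softened output distribution of a large teacher. This is a swapped-in standard technique, not Applied Intuition’s method. It is written in plain Python for readability; a real pipeline would compute the same quantity inside a deep-learning framework and usually combine it with an ordinary task loss. The logits and the brake/coast/accelerate labels are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() stable."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: Sequence[float],
                      teacher_logits: Sequence[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2
    so gradient magnitudes stay comparable across temperatures."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * (math.log(pt) - math.log(ps))
             for pt, ps in zip(p_teacher, p_student) if pt > 0.0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [4.0, 1.0, -2.0]          # hypothetical scores: brake / coast / accelerate
    close_student = [3.8, 1.1, -1.9]
    poor_student = [0.0, 2.0, 1.0]
    print(distillation_loss(close_student, teacher))  # small
    print(distillation_loss(poor_student, teacher))   # much larger
```

Raising the temperature spreads probability onto the teacher’s lower-ranked choices, so the student learns how the teacher ranks every option rather than only its top answer.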

None of this shows up in a polished robotics demo, where the brittle last 1% is easy to hide. Younis’s advice to founders is sharp: constrain the commercial problem, avoid early mimicry of mature companies, and remember that technological compounding is only valuable if you survive long enough to see it. The landscape, they suggest, has changed: YC advice from 2014 might not cut it in 2026, given shifts in capital markets and the dynamics of AI startups.

Applied Intuition’s decade-long grind has given them rare foresight: they can look at a robotics demo and predict the next 20 problems a company will face. It’s this hard-earned wisdom, forged in the trenches of physical AI deployment, that truly sets them apart. They’re not just building smart machines; they’re building the intelligence infrastructure for a world that moves.