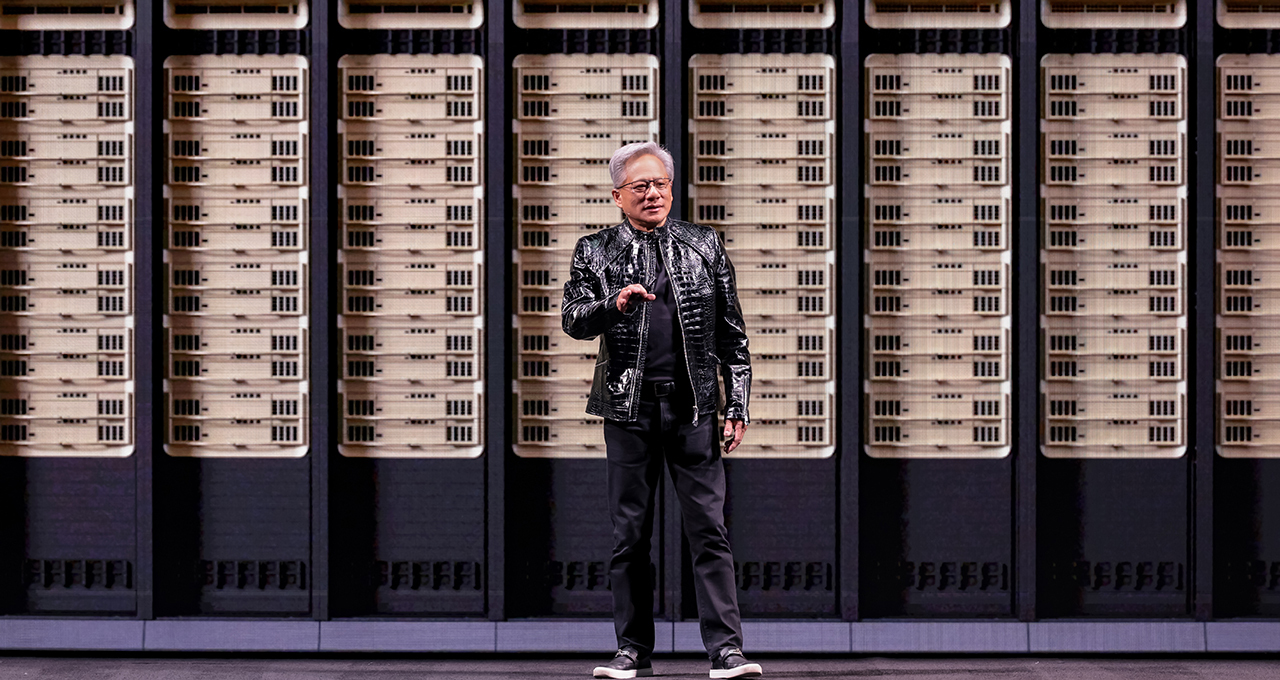

NVIDIA Rubin platform. That’s the phrase buzzing through CES 2026 halls right now, after Jensen Huang’s keynote flipped expectations on their head.

Analysts, myself included, walked into the Fontainebleau expecting Blackwell tweaks, maybe some agentic demos to hype enterprise deals. Not this. Huang announced that Rubin is in full production: a six-chip monster codesigned from GPUs to networking, promising AI tokens at one-tenth the cost of its predecessors. Market cap implications? An immediate 5% pre-market pop for NVDA, signaling Wall Street’s quick math on cheaper inference flooding data centers.

And here’s the shift: AI’s ballooning costs were the silent killer for hyperscalers. Rubin doesn’t just iterate; it rewires the economics. Huang put roughly $10 trillion of the last decade’s computing in line to be modernized onto accelerated, AI-driven infrastructure. Expect capex guidance from MSFT and AMZN to swell, though a lower TCO makes that palatable.

What Everyone Expected vs. Rubin’s Reality

Blackwell was the bar. Record-breaking, sure, but Rubin — named for Vera Rubin, the dark matter pioneer — leaps ahead with 50 petaflops NVFP4 inference per GPU, Vera CPUs for agentic crunching, NVLink 6, Spectrum-X photonics. Extreme codesign, Huang calls it. All components tuned together to kill bottlenecks.

“Computing has been fundamentally reshaped as a result of accelerated computing, as a result of artificial intelligence,” Huang said. “What that means is some $10 trillion or so of the last decade of computing is now being modernized to this new way of doing computing.”

Spot on. But my take? This echoes CUDA’s 2006 debut — when NVIDIA pivoted GPUs from graphics to general compute, devouring CPU workloads. Rubin does that for AI scale: gigascale clusters without the power bill Armageddon. Prediction: By 2028, 70% of new AI infra quotes Rubin-era gear, per my back-of-envelope from prior Blackwell ramps.

Inference Context Memory Storage? AI-native KV-cache that hits 5x tokens/sec, 5x TCO efficiency. Huang: “The faster you train AI models, the faster you can get the next frontier out to the world.” Time-to-market edge for leaders like OpenAI, Anthropic.

Short version: Costs plummet. Deployment explodes.

Does Rubin Really Deliver 10x Cheaper Tokens?

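Huang claims it. The numbers back him, sort of. Prior platforms chewed through watts and dollars on inference; Rubin’s integration slashes that. But drill into the data: Blackwell hit 20 petaflops; Rubin hits 50 per GPU, tightly networked. That is a 2.5x jump in raw per-GPU compute, so the rest of any 10x gain has to come from the system around the GPU: BlueField-4 DPUs, ConnectX-9 SuperNICs, and the rest of the codesigned stack.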
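To make that gap concrete, here is a back-of-envelope sketch using only the figures quoted above. It assumes, purely for illustration, that cost per token falls in proportion to per-GPU throughput at equal cost per GPU; that assumption is mine, not NVIDIA’s, and the function names are hypothetical.

```python
# Figures quoted in the article (vendor claims, not independent measurements).
BLACKWELL_PFLOPS = 20.0         # per-GPU inference figure cited for Blackwell
RUBIN_PFLOPS = 50.0             # per-GPU NVFP4 inference figure cited for Rubin
CLAIMED_COST_REDUCTION = 10.0   # "one-tenth the cost" per token


def compute_ratio(new_pflops: float, old_pflops: float) -> float:
    """Raw per-GPU compute uplift from one generation to the next."""
    return new_pflops / old_pflops


def residual_factor(claimed: float, explained: float) -> float:
    """Share of the claimed gain that raw compute alone does not explain.

    ASSUMPTION: gains multiply, and cost per token scales inversely with
    throughput at equal cost per GPU. Real pricing will not be this clean.
    """
    return claimed / explained


raw_uplift = compute_ratio(RUBIN_PFLOPS, BLACKWELL_PFLOPS)
remaining = residual_factor(CLAIMED_COST_REDUCTION, raw_uplift)

print(f"Raw per-GPU compute uplift: {raw_uplift:.1f}x")
print(f"Factor left for networking, memory, and power: {remaining:.1f}x")
```

Under those assumptions, most of the claimed saving would have to come from outside the GPU itself, which is why the system-level parts matter as much as the headline petaflops.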

Skepticism creeps in on the PR spin. NVIDIA’s roadmaps tend to overdeliver, yet real-world racks lag announcements by quarters. Remember Hopper? It promised the moon and delivered stars. Still, Rubin being in production now, rather than 2027 vaporware, changes the game. Enterprises like Palantir and Snowflake are already hooking in agentic stacks.

Market dynamics scream buy. Competitors? AMD’s MI400 series trails on interconnects; Intel’s Gaudi 3 is still fighting for ecosystem support. NVIDIA’s moat is its software stack, from CUDA to open models. Rubin fortifies it.

Look, if you’re a CTO eyeing 2026 budgets, pencil this in. Token gen at 1/10th? That’s not hype; it’s arithmetic forcing adoption.
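For anyone who wants to pencil it in literally, a minimal budgeting sketch follows. Only the one-tenth cost factor comes from Huang’s claim; the baseline price and the monthly token volume are hypothetical placeholders, so swap in your own numbers.

```python
# Cost factor from the keynote claim; everything else is a HYPOTHETICAL input.
COST_FACTOR = 0.1                 # "one-tenth the cost" per token (vendor claim)
BASELINE_USD_PER_MILLION = 2.00   # hypothetical current price per 1M tokens
MONTHLY_TOKENS_MILLIONS = 5_000   # hypothetical workload: 5B tokens per month


def monthly_spend(tokens_millions: float, usd_per_million: float) -> float:
    """Monthly inference spend in USD for a given volume and unit price."""
    return tokens_millions * usd_per_million


new_price = BASELINE_USD_PER_MILLION * COST_FACTOR

# Option 1: same workload, smaller bill.
spend_before = monthly_spend(MONTHLY_TOKENS_MILLIONS, BASELINE_USD_PER_MILLION)
spend_after = monthly_spend(MONTHLY_TOKENS_MILLIONS, new_price)

# Option 2: same bill, bigger workload.
tokens_at_same_budget = spend_before / new_price

print(f"Same workload: ${spend_before:,.0f} -> ${spend_after:,.0f} per month")
print(f"Same budget:   {MONTHLY_TOKENS_MILLIONS:,}M -> {tokens_at_same_budget:,.0f}M tokens")
```

The second option is the one that drives adoption: teams rarely bank the savings, they spend them on more tokens.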

Open models steal the show too. Alpamayo for autonomous driving — trained on NVIDIA supercomputers, fully open. Huang: “Every single six months, a new model is emerging, and these models are getting smarter and smarter.” Downloads exploding, leaderboards topped.

Why Bet on NVIDIA’s Open Models for Autos?

Autonomous driving. Tesla’s FSD flails; Waymo scales slowly. Enter Alpamayo, part of a six-domain portfolio: Clara (health), Earth-2 (climate), Nemotron (reasoning), Cosmos (robotics), GR00T (embodied), and this.

“You can create the model, evaluate it, guardrail it and deploy it,” Huang boasts. Open to every company, industry, country. Smart play — sidesteps closed-shop wars with xAI, OpenAI. Builds ecosystem lock-in.

But critique: Open doesn’t mean free lunch. Training on NVIDIA iron means you’re buying their GPUs anyway. It’s velvet glove over steel fist. Historical parallel? Linux kernel — open, but runs best on Intel/AMD. NVIDIA pulls the same here, owning the stack.

Demo time: DGX Spark desktop supercomputer runs personal agents locally, embodied via Reachy Mini robot. Hugging Face models, model routing — trivial now, Huang says, unimaginable two years back.

RTX push too: AI on every desk. Enterprises like ServiceNow, CrowdStrike integrate. “The agentic system is the interface.” Spot on. Personal AI shifts from cloud dreams to edge reality.

CES Demos: From Hype to Hardware

Huang’s stagecraft shines. Rubin blueprints future racks; open models seed apps. DGX Spark? 2.6x LLM perf, LTX-2, FLUX support incoming.

Unique angle: This isn’t just tech; it’s a blueprint for physical AI. Agents don’t chat — they act, via robots, cars. Rubin’s efficiency enables that swarm. Bold call — by 2030, embodied AI market hits $500B, NVIDIA claiming 60% via GR00T/Alpamayo. Undervalued in today’s $3T AI hype.

Wall Street yawns at demos, but data rules. NVIDIA’s Q4 guide? Beat on Blackwell demand. Rubin accelerates that curve.

So, strategy verdict: Makes total sense. Locks dominance, undercuts rivals on cost, opens dev floodgates. Hype? Minimal. This is execution.

Frequently Asked Questions

What is NVIDIA Rubin platform?

NVIDIA’s next-gen six-chip AI system, now in production, with 50 petaflops inference per GPU and 10x cheaper tokens.

Does Rubin replace Blackwell?

Yes. It is Blackwell’s successor, built from the data center out, with extreme codesign meant to eliminate bottlenecks for gigascale AI.

Why open models like Alpamayo for self-driving cars?

They are trained on NVIDIA supercomputers and fully open, so developers can customize them and deploy them in vehicles. NVIDIA’s open models are already topping leaderboards.