Fingers frozen over ‘terraform destroy,’ I nuked my Mac Studio’s MicroK8s playground. Six ARM64 nodes, humming quietly — cute, sure, but about as production-ready as a screen door on a submarine.

Zoom out. This wasn’t some weekend whim. After 20 years chasing Silicon Valley’s cloud promises, I’ve seen enough vendor lock-in to last a lifetime. And here was this guy — wait, me, in spirit — calling his homelab HA Kubernetes cluster a ‘shrine.’ Love it. Or hate it. Depends on whether you’ve ever debugged etcd flakes at 3 a.m.

“No cloud vendor lock-in. No magic. Just a rack full of metal, a bunch of cables, and a lot of terminal time.”

That’s the raw truth from the builder himself. No AWS fluff. Just Proxmox, Terraform, Ansible, FluxCD. Three datacenters (fancy talk for beefy nodes), kubeadm bootstrapped, Calico BGP for networking. Traffic flows outbound only — GitOps pulls, no inbound firewall roulette. Smart. Real datacenters don’t phone home to mommy.

Why Ditch the Mac Studio for Bare-Metal Madness?

Look, MicroK8s is Canonical’s little secret weapon for devs who want Kubernetes without the sweat. Fast on M-series chips. Near-zero setup. But lonely? Yeah. Single point of failure in a snowstorm of hype about ‘multi-DC production-grade.’

Here’s the cynical bit: We’ve been here before. Remember 2005? Every sysadmin built Beowulf clusters on spare Pentium boxes, convinced they’d outscale Google. Then AWS dropped, and poof, homelabs became beer coasters. My unique callout? This shrine isn’t nostalgia; it’s rebellion. With cloud bills ballooning (looking at you, managed Kubernetes at $0.10/hour per cluster before you’ve paid for a single node), bare-metal homelabs are the underground economy training the next gen of ops wizards. Who profits? Not hyperscalers. You do, if you survive the learning curve.

But — and it’s a big but — is anyone actually making money here? The builder’s not selling shrines. He’s geeking out. Devs like him keep open source alive, sure. Yet most readers? You’ll spin up EKS and call it a day.

Overkill? Absolutely.

The Architecture: Firewall First, Flux Forever

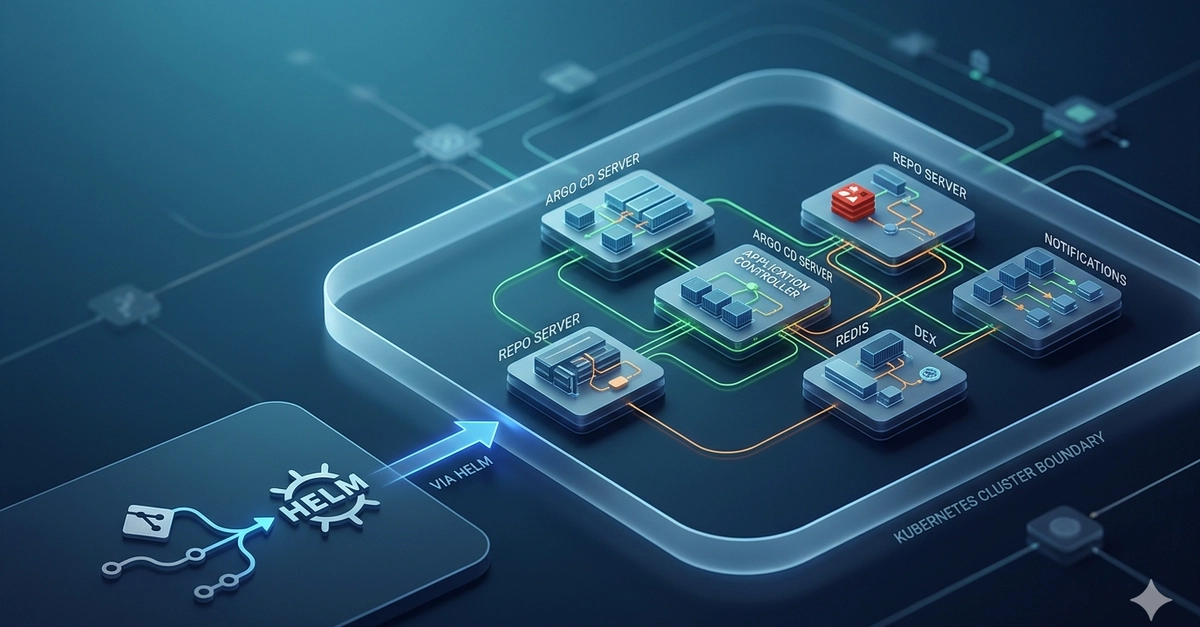

Picture this sprawl. OPNsense firewall (10.0.1.1) as gatekeeper — DHCP, static pinning, WireGuard VPN. Split-horizon DNS faking public domains to LAN IPs. Zyxel switch with 2.5GbE star topology feeding three Proxmox nodes (10.0.1.10-12). Each with ZFS, NFS backups, vmbr0 bridges.

Terraform hits Proxmox API, spins Ubuntu VMs via Packer cloud-init. Ansible kubeadms the HA cluster: three control planes (k8s-cp-1 to -3, etcd stacked, kube-vip load balancing), three workers. Calico CNI on BGP — no VXLAN tax. MetalLB for services (10.0.1.200-210). Pod CIDR 10.244.0.0/16, services 10.245.
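An address plan like this is easy to get subtly wrong, so here is a minimal sanity check using Python's standard `ipaddress` module. It is a sketch, not the builder's tooling: the /24 LAN mask is an assumption (the article only shows individual 10.0.1.x addresses), and the service CIDR is left out because the source does not give it in full.

```python
import ipaddress

# Assumption: the LAN is a /24. The article only lists individual 10.0.1.x hosts.
LAN = ipaddress.ip_network("10.0.1.0/24")
POD_CIDR = ipaddress.ip_network("10.244.0.0/16")  # pod CIDR quoted in the article

FIREWALL = ipaddress.ip_address("10.0.1.1")
PROXMOX_NODES = [ipaddress.ip_address(f"10.0.1.{n}") for n in range(10, 13)]
METALLB_POOL = [ipaddress.ip_address(f"10.0.1.{n}") for n in range(200, 211)]


def check_plan():
    """Return a list of human-readable problems; an empty list means no conflicts."""
    problems = []
    fixed_hosts = [FIREWALL, *PROXMOX_NODES]

    # Every statically planned address must live inside the LAN.
    for addr in fixed_hosts + METALLB_POOL:
        if addr not in LAN:
            problems.append(f"{addr} is outside {LAN}")

    # MetalLB must never hand out an address a fixed host already owns.
    clash = set(fixed_hosts) & set(METALLB_POOL)
    if clash:
        problems.append(f"MetalLB pool collides with fixed hosts: {sorted(clash)}")

    # Pod traffic is routed, so the pod CIDR must not overlap the LAN.
    if POD_CIDR.overlaps(LAN):
        problems.append(f"pod CIDR {POD_CIDR} overlaps the LAN {LAN}")

    return problems


if __name__ == "__main__":
    print(check_plan() or "address plan looks consistent")
```

In practice you would also want the MetalLB range excluded from the DHCP pool on the firewall, which this sketch cannot see.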

FluxCD? The holy grail. Pulls from GitHub, no webhooks begging for ports. Your Mac Studio — the ‘pulpit’ — SSHes, kubectl’s, ansibles from afar. No holes punched.

It wanders a bit, this setup, like a real engineer’s fever dream. Predictable IPs via cloud-init DHCP reservations. But tweak one cable, and BGP flaps. Welcome to the cult.

Is a Homelab HA Kubernetes Cluster Worth Your Weekend?

Hell no, if you’re chasing TikTok likes. Yes, if you want to grok what ‘high availability’ really means — not Google’s 99.99% SLA, but your own etcd quorum dancing on faulty NICs.
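If "etcd quorum" sounds abstract, the arithmetic behind it is short. The sketch below uses the standard majority rule for Raft-style clusters (more than half the members must agree); the function names are mine, not anything from etcd itself.

```python
def quorum(members: int) -> int:
    """Smallest number of members that forms a majority."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can be lost while a majority still remains."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in (1, 2, 3, 4, 5):
        print(f"{n} members: quorum {quorum(n)}, survives {tolerated_failures(n)} failure(s)")
```

Three stacked control planes survive one loss. A fourth member buys nothing extra, which is why odd cluster sizes are the usual recommendation.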

Costs? Three nodes: say $500 each in hardware (EPYC minis or whatever). Switch $300. Cables? Pray. Time? Weeks of ‘why is kubelet segfaulting?’
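For a rough feel of the money side, here is the hardware sum from the figures above plus a break-even calculation against an hourly cloud rate. The 10 cents per hour used in the example is only an illustrative input, and the sketch ignores electricity, cables, and your time, so treat the result as a floor.

```python
NODE_COUNT = 3
NODE_PRICE_USD = 500      # per-node guess from the article
SWITCH_PRICE_USD = 300    # switch guess from the article


def hardware_total_usd() -> int:
    """One-off hardware spend for the nodes and the switch."""
    return NODE_COUNT * NODE_PRICE_USD + SWITCH_PRICE_USD


def break_even_hours(hardware_usd: int, hourly_rate_cents: int) -> int:
    """Hours of cloud billing (rounded up) that add up to the hardware spend."""
    # Work in integer cents to avoid floating-point surprises.
    return -(-hardware_usd * 100 // hourly_rate_cents)


if __name__ == "__main__":
    total = hardware_total_usd()
    hours = break_even_hours(total, hourly_rate_cents=10)  # illustrative rate
    print(f"hardware: ${total}, break-even after {hours} h (~{hours // 24} days)")
```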

My bold prediction: By 2026, tools like this shrine will birth a homelab PaaS market. Think Talos Linux on steroids, Flux v2 with AI drift detection. Big Tech ignores it now — their loss. You’re training on real metal while they simulate in sandboxes.

Skeptical aside (because I must): PR spin calls this ‘production-grade.’ Cute. Try failover testing with real downtime. Still, beats Minikube roulette.

Impressive. Replicable? Barely.

Now, the meaty how-to — because you came for it.

First, Proxmox trifecta. Install on bare metal, cluster ‘em loosely. Terraform with the Telmate/proxmox provider: vars for DCs, VM specs. Packer bakes Ubuntu 22.04 cloud images with cloud-init, so each VM picks up its reserved DHCP address on first boot.

Ansible playbook: kubeadm init on cp-1 with the kube-vip VIP (10.0.1.100?) as the control-plane endpoint, then join tokens for the others. Calico manifest with BGP peering (announce pod routes to your router). MetalLB in L2 mode for load balancing.
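To make the init-then-join sequence concrete, here is a small sketch that assembles the kubeadm command lines an Ansible task might run. The flags are real kubeadm options; the helper names are hypothetical, the VIP is the tentative 10.0.1.100 from the text, and the token, hash, and certificate key are placeholders you would take from the `kubeadm init` output. The builder's actual playbook may differ.

```python
import shlex

VIP = "10.0.1.100"          # tentative kube-vip address from the article
API_PORT = 6443
POD_CIDR = "10.244.0.0/16"  # pod CIDR from the article


def init_command(vip=VIP, pod_cidr=POD_CIDR):
    """kubeadm init for the first control plane, fronted by the VIP."""
    return [
        "kubeadm", "init",
        "--control-plane-endpoint", f"{vip}:{API_PORT}",
        "--pod-network-cidr", pod_cidr,
        "--upload-certs",  # lets the other control planes fetch the certs
    ]


def join_command(token, ca_cert_hash, certificate_key=None, vip=VIP):
    """kubeadm join; pass certificate_key to join as a control plane."""
    cmd = [
        "kubeadm", "join", f"{vip}:{API_PORT}",
        "--token", token,
        "--discovery-token-ca-cert-hash", ca_cert_hash,
    ]
    if certificate_key is not None:
        cmd += ["--control-plane", "--certificate-key", certificate_key]
    return cmd


if __name__ == "__main__":
    print(shlex.join(init_command()))
    # Placeholder values only; real ones are printed by `kubeadm init`.
    print(shlex.join(join_command("<token>", "sha256:<hash>", "<cert-key>")))
    print(shlex.join(join_command("<token>", "sha256:<hash>")))
```

Workers use the short form of the join command; control planes add `--control-plane` and the certificate key.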

Flux bootstrap: flux bootstrap github --owner=you --repository=clusters --branch=main --path=./clusters/prod. Bootstrap installs the Flux controllers and commits their manifests to the repo, so a separate flux install step is not needed. Done. Git commit, watch it reconcile.
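Copy-pasting that command from a web page is a good way to end up with typographic dashes instead of `--`, so here is a hedged sketch that builds it programmatically. The flags are the ones quoted above; the helper names are hypothetical, and `you` and `clusters` are the article's placeholders. The Flux docs have you export a GitHub personal access token as `GITHUB_TOKEN` before bootstrapping, which the second helper checks.

```python
import os
import shlex


def bootstrap_command(owner, repository, branch="main", path="./clusters/prod"):
    """Build the `flux bootstrap github` argv with plain double-hyphen flags."""
    return [
        "flux", "bootstrap", "github",
        f"--owner={owner}",
        f"--repository={repository}",
        f"--branch={branch}",
        f"--path={path}",
    ]


def missing_prerequisites(env=None):
    """Return the names of required environment variables that are unset."""
    env = os.environ if env is None else env
    return [] if env.get("GITHUB_TOKEN") else ["GITHUB_TOKEN"]


if __name__ == "__main__":
    missing = missing_prerequisites()
    if missing:
        print("export these first:", ", ".join(missing))
    print(shlex.join(bootstrap_command("you", "clusters")))
```

The sketch only prints the command; handing the list to `subprocess.run` is the obvious next step once the token is in place.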

Trouble spots? ZFS snapshots for rollbacks. OPNsense rules: allow 6443 API from pulpit IP only. WireGuard for remote kubectl.
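A quick way to confirm the 6443 rule does what you think: probe the port from the pulpit and again from any other machine. The sketch below is a plain TCP connect test from the standard library; it says nothing about TLS or authentication, and the 10.0.1.100 target is the tentative VIP from earlier, so swap in whatever address your API server actually answers on.

```python
import socket


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    target = ("10.0.1.100", 6443)  # tentative VIP from the article
    state = "reachable" if port_open(*target) else "blocked or down"
    print(f"{target[0]}:{target[1]} is {state} from this machine")
```

Expect "reachable" from the pulpit and "blocked or down" from everywhere else; anything different means the firewall rule needs another look.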

Why Does This Matter for DevOps Skeptics?

Cloud’s a trap. EKS/GKE? Fine for payroll apps. But learning HA on your dime? Priceless. No egress fees. No compliance audits. Just you, YAML, and victory.

Critique the hype: Builder’s ‘shrine’ emoji-fest screams man-cave pride. Reality? 90% uptime if you’re lucky, without monitoring (add Prometheus, duh).

We’ve normalized $10k/month clusters for ‘dev.’ This flips it: scale teaching before scaling teams. Historical parallel: ARPANET nerds wiring garages pre-NSFNET. Today’s homelabs? Same vibe, fueling Web3, edge AI without Nvidia gouging.

Frequently Asked Questions

What is a homelab HA Kubernetes cluster?

It’s three or more control-plane nodes bootstrapped with kubeadm, keeping an etcd quorum behind a load-balanced API endpoint and managed through GitOps, all on your hardware, no cloud.

How do I build HA Kubernetes on Proxmox?

Terraform for the VMs, Ansible for the kubeadm bootstrap, FluxCD for sync. Start with a single DC, then add nodes.

Is FluxCD necessary for homelab K8s?

Not ‘necessary,’ but it beats manual kubectl apply forever. Pull-based, secure.