Picture this: the robotics world buzzing with Vision-Language-Action (VLA) models—those brainy systems that see, chat, and grab like a sci-fi butler. We all expected them chained to beefy GPUs in labs, sipping cloud power like vampires. But NXP flips the script. Their new guide on bringing robotics AI to embedded platforms? It’s a wake-up jolt. Suddenly, your home robot arm—powered by a chip the size of a matchbox—nails precise tasks without phoning home for help.

This changes everything. No more laggy inference delays turning smooth pours into spills. Real robots, real-time, right on the edge.

Why Was Everyone Skeptical About Edge Robotics AI?

Look, vision-language models (VLMs) ate the world first: spotting cats in photos, describing chaos. Then VLAs leveled up: not just seeing and talking, but acting. Grab that mug. Stir the soup. But deploy on a robot’s tiny brain? Ha. Compute is tight, memory is cramped, and the power budget is a sip. Plus, robots can’t wait: the arm freezes mid-swing while the model ponders, and you get wobbles like a drunk ballerina.

NXP calls it out sharp: “In synchronous control pipelines, while the VLA is running inference, the arm is idle awaiting commands leading to oscillatory behavior and delayed corrections.”

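Bingo. That’s the pain. Async inference fixes it: think of it as the model whispering the next moves while the arm keeps dancing through the current ones. The catch is latency. Inference has to finish before the arm runs out of actions to execute, or the whole thing flops.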
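To make the async idea concrete, here is a minimal sketch of a control loop that keeps executing queued actions while the next chunk is being inferred in the background. It is not NXP's implementation: `fake_policy`, `run_async`, the chunk size, the timings, and the `refill_at` threshold are all hypothetical stand-ins chosen for illustration.

```python
import queue
import threading
import time


def fake_policy(observation, chunk_size=5):
    """Hypothetical stand-in for a VLA: returns a chunk of future actions."""
    time.sleep(0.02)  # pretend inference takes 20 ms
    return [observation + i for i in range(chunk_size)]


def run_async(steps=20, step_period=0.01, refill_at=2):
    """Execute `steps` actions while inference runs in a background thread."""
    actions = queue.Queue()
    executed = []
    state = {"obs": 0, "busy": False}
    lock = threading.Lock()

    def infer():
        # Runs off the control thread, so the arm keeps moving meanwhile.
        for action in fake_policy(state["obs"]):
            actions.put(action)
        with lock:
            state["busy"] = False

    for _ in range(steps):
        # Request a new chunk early, before the queue runs dry.
        with lock:
            need_chunk = actions.qsize() <= refill_at and not state["busy"]
            if need_chunk:
                state["busy"] = True
        if need_chunk:
            threading.Thread(target=infer, daemon=True).start()

        try:
            # Blocks only if inference has not kept up with execution.
            executed.append(actions.get(timeout=1.0))
        except queue.Empty:
            break
        state["obs"] += 1          # pretend the scene changed
        time.sleep(step_period)    # pretend the arm is executing the action
    return executed


if __name__ == "__main__":
    print(len(run_async()), "actions executed")
```

The design choice that matters is the early refill: the loop asks for the next chunk while a few actions are still queued, so the arm only stalls when inference takes longer than those remaining actions last.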

Here’s my hot take, absent from their post: this echoes the early days of handheld computing. Back then, folks laughed at cramming full operating systems into pocket-sized gadgets. Cut to today: your phone’s a supercomputer. NXP’s doing that for robot smarts. Swarms of $100 home helpers? Coming faster than you think.

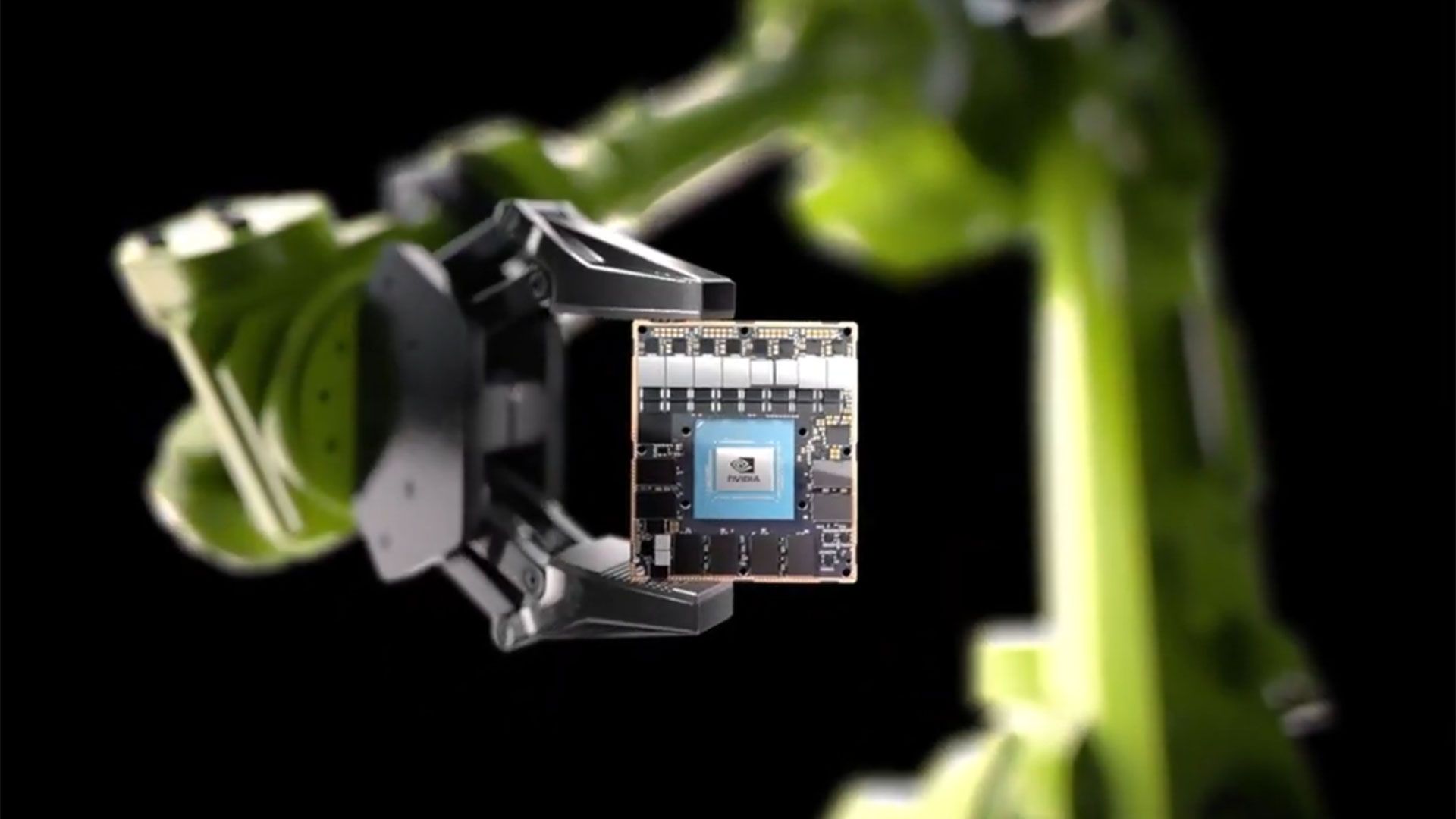

And it’s no hype. They fine-tuned ACT and SmolVLA and got them running in real time on the i.MX 95 SoC.

Data first. Garbage in, garbage out—but worse for robots. “High‑quality, consistent data beats ‘more but messy’ data.”

They recorded for “Put the tea bag in the mug.” Simple? Brutal to perfect.

Fixed cameras on rigid mounts, so nothing drifts from vibrations or resets. Controlled lighting, no sunny whims. Max contrast: no pale objects vanishing into the background. Back up your calibrations, because a code crash shouldn’t nuke your work. And crucially: no cheating. The operator sees only what the robot’s cameras feed. Pure.

Three cams nailed it: top for scene, gripper for precision (game-changer—enforces clean data), left for depth. Gripper cam? Velcro that cable, or it flops.

Hardware hacks shine: heat-shrink on claws ups grip, cuts slips, stabilizes learning. Genius.

Recording playbook: split the workspace into clusters (11 zones of 10 cm squares, 10 episodes each). Vary poses. Hold out one cluster for validation (bye, cluster 6). Max out the variety of motions, because small VLAs crave motion vocab.
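The cluster scheme above can be sketched as a small recording plan. This is an illustration only: the function name, the record layout, and the 1-to-11 cluster numbering are assumptions, and only the counts (11 clusters, 10 episodes each, cluster 6 held out) come from the playbook.

```python
import random


def plan_recordings(num_clusters=11, episodes_per_cluster=10, held_out=6, seed=0):
    """Build train/validation episode lists, holding out one whole cluster."""
    rng = random.Random(seed)
    train, val = [], []
    for cluster in range(1, num_clusters + 1):  # numbering from 1 is assumed
        for episode in range(episodes_per_cluster):
            record = {"cluster": cluster, "episode": episode}
            # The held-out cluster never appears in training data.
            (val if cluster == held_out else train).append(record)
    rng.shuffle(train)  # avoid recording or training cluster by cluster
    return train, val


if __name__ == "__main__":
    train, val = plan_recordings()
    print(len(train), "training episodes,", len(val), "validation episodes")
```

Holding out a whole cluster, rather than random episodes, means validation measures how the policy copes with object positions it has never seen.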

How Do You Fine-Tune VLAs Without Melting the Chip?

Fine-tuning’s the alchemy. NXP shares schemas and checklists. But it’s systems engineering, not just squashing the model: decompose the architecture, schedule smart, align execution with the hardware.

Async pipelines decouple think from do. Keep inference latency under the action execution window and, boom, fluid motion.
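The latency condition is simple arithmetic, so a back-of-the-envelope check helps. The sketch below assumes the policy emits a fixed number of actions per inference and the arm consumes them at a fixed control rate. The function names and every number in it are hypothetical, not measurements from the i.MX 95.

```python
def execution_window_s(actions_per_chunk: int, control_hz: float) -> float:
    """Time the arm needs to execute one chunk of actions, in seconds."""
    return actions_per_chunk / control_hz


def is_fluid(inference_latency_s: float, actions_per_chunk: int, control_hz: float) -> bool:
    """True if the next chunk can be ready before the current one runs out."""
    return inference_latency_s < execution_window_s(actions_per_chunk, control_hz)


if __name__ == "__main__":
    # Illustrative numbers only: 50 actions per chunk at a 30 Hz control rate.
    window = execution_window_s(50, 30.0)
    print(f"execution window: {window:.2f} s")
    print("0.5 s inference keeps up:", is_fluid(0.5, 50, 30.0))
    print("2.0 s inference keeps up:", is_fluid(2.0, 50, 30.0))
```

If the check fails, the levers are a longer chunk, a slower control rate, or a faster model.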

They hit real-time on i.MX 95. That’s NXP’s beast: AI muscle in embedded form. Tea bag task? Nailed.

But wait—my prediction: this sparks a robot gold rush. Forget Boston Dynamics showponies. Think IKEA-assembly bots in every garage, fine-tuned on your data. Platform shift, baby—like iOS for arms.

Vary viewpoints smart: more cams boost accuracy, spike latency. Goldilocks at three.

Gripper cam enforces discipline—operator blind to extras, data pure as snow.

Is NXP’s i.MX 95 the Edge Robotics King?

Short answer: damn close. They prove VLAs aren’t cloud-only divas. Embedded? Yes, with tweaks.

“Bringing VLA models to embedded platforms is not a matter of model compression, but a complex systems engineering problem requiring architectural decomposition, latency-aware scheduling, and hardware-aligned execution.”

Spot on. Compression alone? Nah. Full-stack rethink.

Unique angle: NXP’s not just selling chips—they’re gifting the playbook. Open-ish, practical. Contrasts closed-shop giants hoarding tricks. This democratizes edge robots.

What if your Roomba learns to fold socks? Dataset from your messy floor, fine-tune local. No upload.

Challenges linger. Power walls. Heat. But i.MX 95 laughs them off for now.

Bold call: by 2026, 80% of consumer robots run edge VLAs. Home aides, warehouses: explosion.

Recording Datasets That Don’t Suck: The Checklists

NXP’s gold: concrete steps.

- Rigid mounts.

- Light control.

- Contrast max.

- Cal backups.

- No-cheat rule.

Workspace clusters. Diversity. Unseen val sets. Motion extremes.

Tweaks like friction tubing? Turns “almosts” to wins, steady training.

It’s bursty wisdom—hard lessons distilled.

This isn’t theory. They did it. Tea bag dunked.

Why Does This Matter for Robot Builders?

Dev dream: no cloud bills. Privacy. Real-time. Offline ops.

Async? Smooth as butter.

But pick cams wisely. A gripper cam is mandatory for fiddly stuff.

NXP’s guide? Bible for now.

My insight redux: like Arm chips conquering phones, i.MX and its peers conquer robots. Ecosystem blooms.

Frequently Asked Questions

What is VLA fine-tuning for robotics?

VLA (Vision-Language-Action) fine-tuning teaches models to see scenes, understand language prompts, and output robot actions—like grabbing objects—using your custom datasets on edge hardware.

How to record high-quality datasets for robot AI?

Use fixed cameras, controlled lighting, max contrast, no cheating with extra views, cluster workspaces for diversity, and gripper cams for precision. NXP recorded 10 episodes per zone.

Can embedded chips like i.MX 95 run advanced AI models?

Yes—NXP shows real-time VLA inference on i.MX 95 with async pipelines, beating latency hurdles for smooth robot control, no cloud needed.