Developers, picture this: you’re knee-deep in debugging with an AI agent that has spun up a full Node.js app, dependencies installed, git history pristine. Lunch hits. The session times out. Back at your desk? Total reset. Twenty minutes of compute down the drain before the agent even recalls what it was working on. Amazon Bedrock AgentCore Runtime persistent session storage ends that grind for good.

That’s the quiet revolution here: not some flashy benchmark, but a fix for the filesystem hell that has plagued agentic AI from day one. Agents use the filesystem as working memory, storing far more than fits in their token limits. But ephemerality kills it. Now, with managed session storage in public preview, your agent’s /mnt/workspace survives microVM restarts. Shell commands? Fire them directly, with no LLM middleman bloating cost or latency.

Why Does Persistent Storage Feel Like a Unix Revolution for AI?

Back in the ’90s, sysadmins cursed vanishing NFS mounts and /tmp dirs wiped on reboot. They hacked around it with cron jobs dumping state to floppies (yeah, floppies). Sound familiar? AI agents on Amazon Bedrock AgentCore hit the same pain at scale, because they “think” via file writes: code gen, npm installs, git commits. Without persistence, every resume is a Groundhog Day rerun.

Here’s the architecture: each session spins up a dedicated microVM (its own kernel, memory, and clean filesystem) for isolation. Secure? Ironclad. Productive? Not when an idle timeout nukes your node_modules. Managed session storage adds a durable directory, configured when you create the agent runtime and mounted into each session’s microVM. Write to /mnt/workspace? It sticks, even as compute swaps out.

The filesystem is ephemeral. When your agent’s session stops, everything that it created, like the installed dependencies, the generated code, or the local git history disappears.

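That’s straight from the AWS docs, and it’s the raw truth. Their fix? A Boto3 tweak: pass filesystemConfiguration with a sessionStorage block (sizeInGiB and mountPath) when you create the runtime. The control plane (the bedrock-agentcore-control client) handles lifecycle; the data plane (the bedrock-agentcore client) handles invocations. Agent writes, runtime persists. Boom.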
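A minimal sketch of that control/data plane split, assuming Boto3's `bedrock-agentcore-control` and `bedrock-agentcore` service names. The `factory` parameter is a hypothetical hook added here so the wiring can be exercised without AWS credentials; in real use it falls back to `boto3.client`.

```python
from typing import Any, Callable, Optional, Tuple


def make_agentcore_clients(
    region: str,
    factory: Optional[Callable[..., Any]] = None,
) -> Tuple[Any, Any]:
    """Return (control_client, data_client) for AgentCore.

    `factory` is a hypothetical injection point for testing; by default it is
    boto3.client, imported lazily so this module loads without boto3 installed.
    """
    if factory is None:
        import boto3  # only needed when creating real clients

        factory = boto3.client
    # Control plane: create/update/delete agent runtimes (lifecycle, storage config)
    control = factory("bedrock-agentcore-control", region_name=region)
    # Data plane: invoke the agent inside a session
    data = factory("bedrock-agentcore", region_name=region)
    return control, data
```

Keeping the storage settings on the control-plane call and everything session-scoped on the data-plane client mirrors the split the docs describe.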

Stop the session, and the microVM dies. Resume with the same runtime session ID, and a fresh VM mounts the old state. The agent blinks, sees its git history intact, and picks up like you never left. No S3 hacks, no keep-alive pings draining your wallet. Pure filesystem fidelity.
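To make those stop/resume semantics concrete, here is a toy, in-memory model (not AgentCore code): each `MicroVM` boots with a fresh ephemeral filesystem, while files under the mount path live in a store keyed by session ID, so a new VM resumed with the same ID sees them again. All class and method names are hypothetical.

```python
from typing import Dict


class SessionStore:
    """Stands in for managed session storage: survives microVM teardown."""

    def __init__(self) -> None:
        self._volumes: Dict[str, Dict[str, str]] = {}

    def volume(self, session_id: str) -> Dict[str, str]:
        # One durable volume per session ID, created on first use
        return self._volumes.setdefault(session_id, {})


class MicroVM:
    """Toy microVM: ephemeral files vanish on stop; mounted files persist."""

    def __init__(self, store: SessionStore, session_id: str,
                 mount_path: str = "/mnt/workspace") -> None:
        self.mount_path = mount_path.rstrip("/") + "/"
        self._ephemeral: Dict[str, str] = {}        # clean filesystem on every boot
        self._durable = store.volume(session_id)    # remounted on resume

    def _target(self, path: str) -> Dict[str, str]:
        # Paths under the mount go to durable storage, everything else is ephemeral
        return self._durable if path.startswith(self.mount_path) else self._ephemeral

    def write(self, path: str, data: str) -> None:
        self._target(path)[path] = data

    def read(self, path: str) -> str:
        return self._target(path)[path]  # raises KeyError if the file is gone

    def exists(self, path: str) -> bool:
        return path in self._target(path)

    def stop(self) -> None:
        # Compute is torn down: only the ephemeral filesystem is lost
        self._ephemeral.clear()
```

Write `/mnt/workspace/.git/HEAD` and `/tmp/build.log`, call `stop()`, then build a new `MicroVM` with the same session ID: the git file is still there, the build log is not, and a different session ID sees neither.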

But what about deterministic ops like npm test? Old way: pipe them through an LLM tool call. Latency spikes, tokens burn, and flakiness creeps in (ever seen an LLM hallucinate bash?). New way: InvokeAgentRuntimeCommand runs the shell command straight in the VM, with no LLM detour.

Can Bedrock’s Shell Commands Finally Ditch LLM Latency for Good?

Think about testing flows. The agent patches code, and you need npm test green before merge. Route that through the LLM? It adds 5-10 s per call and a non-zero failure rate. Build a custom orchestrator outside the VM? Now you’re syncing filesystem access, fighting auth, and writing more code.

AgentCore’s command invocation sidesteps all of that. Call InvokeAgentRuntimeCommand on the data plane client (bedrock-agentcore) with your bash snippet as the payload. It runs in-session and captures stdout, stderr, and the return code, which the agent receives as an observation. It’s as deterministic as a native shell, with zero token tax.
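This article doesn't spell out InvokeAgentRuntimeCommand's request or response fields, so the sketch below is only a local analogue of what it does: run a command directly with Python's `subprocess`, capture stdout, stderr, and the return code, and package them as an observation for the agent. The `CommandObservation` shape and `run_command` helper are hypothetical.

```python
import subprocess
from dataclasses import dataclass
from typing import List


@dataclass
class CommandObservation:
    """Hypothetical observation handed back to the agent after a shell command."""
    command: List[str]
    returncode: int
    stdout: str
    stderr: str

    @property
    def ok(self) -> bool:
        return self.returncode == 0


def run_command(command: List[str], cwd: str = ".",
                timeout_s: float = 300.0) -> CommandObservation:
    # Run the command directly (no LLM in the loop) and capture all output;
    # subprocess.TimeoutExpired is raised if it runs longer than timeout_s
    proc = subprocess.run(
        command,
        cwd=cwd,
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    return CommandObservation(command, proc.returncode, proc.stdout, proc.stderr)
```

Inside a session the equivalent call would be something like `run_command(["npm", "test"], cwd="/mnt/workspace")`; the agent only has to read `ok`, `stdout`, and `stderr`, and no tokens are spent deciding how to run the test.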

And here’s my take, absent from the AWS spin: this combo gives birth to overnight agents. Kick one off before bed to run scaffold, build, and test cycles autonomously. Wake up to a PR ready and pushed. Not hype: it’s the microVM plus persistence that unlocks cron-job agents. It echoes AWS Lambda going stateful via EFS, but for brains, not functions. Bold call? Within a year, dev teams will run “agent fleets” on this, cutting human toil on boilerplate workflows by 30%.
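An overnight run like that boils down to a bounded test-and-fix cycle. A minimal sketch with both steps injected as callables: `run_tests` stands in for a direct shell command such as `npm test`, and `ask_agent_to_fix` for an LLM invocation. Both names are hypothetical, and the attempt cap is an assumption meant to stop an unattended agent from looping forever.

```python
from typing import Callable, List, Tuple


def run_fix_loop(
    run_tests: Callable[[], Tuple[int, str]],
    ask_agent_to_fix: Callable[[str], None],
    max_attempts: int = 5,
) -> Tuple[bool, List[int]]:
    """Alternate deterministic test runs with agent fix attempts.

    Returns (passed, history of test return codes). Tests run directly,
    so only the fix step spends LLM tokens.
    """
    history: List[int] = []
    for attempt in range(1, max_attempts + 1):
        code, output = run_tests()      # deterministic: no LLM involved
        history.append(code)
        if code == 0:
            return True, history        # green: ready for a PR
        if attempt < max_attempts:
            ask_agent_to_fix(output)    # reasoning step: the LLM reads the failures
    return False, history
```

Because the workspace persists, each fix lands on the same files the next test run sees, even if the session stops and resumes between attempts.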

Critique time: AWS calls it “unlocking workflows that weren’t possible before.” Fair, but the PR glosses over microVM overhead: cold starts still sting, since persistence saves your files, not the time it takes to boot a fresh microVM on resume. And public preview means rough edges, such as quota limits and a staggered regional rollout. Don’t ditch Cursor yet.

Configuring’s dead simple. SDK snippet:

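```python
import boto3

# Control plane client: manages the agent runtime's lifecycle
control_client = boto3.client('bedrock-agentcore-control')

response = control_client.create_agent_runtime(
    # ... (other runtime parameters elided)
    filesystemConfiguration={
        'sessionStorage': {
            'sizeInGiB': 10,                # provisioned capacity
            'mountPath': '/mnt/workspace',  # persists across stop/resume
        }
    },
)
```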

Invoke, work, stop, resume. The agent prompt stays the same; filesystem memory does the heavy lifting. Shell? Same data plane client, via InvokeAgentRuntimeCommand with a command payload. Outputs feed back smoothly.
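A sketch of the invoke side, assuming the data-plane `invoke_agent_runtime` call takes `agentRuntimeArn`, `runtimeSessionId`, and a bytes `payload`. The `{"prompt": ...}` payload shape is an assumption that depends on your agent's entrypoint, and any ARN you pass in is yours to supply. The point: resuming just means reusing the same session ID.

```python
import json
import uuid
from typing import Any, Dict


def new_session_id() -> str:
    # Random UUID string; save it somewhere so you can resume the session later
    return str(uuid.uuid4())


def build_invoke_kwargs(agent_runtime_arn: str, session_id: str,
                        prompt: str) -> Dict[str, Any]:
    """Keyword arguments for data_client.invoke_agent_runtime(**kwargs).

    The payload schema ({"prompt": ...}) is an assumption; match it to
    whatever your agent's entrypoint expects.
    """
    return {
        "agentRuntimeArn": agent_runtime_arn,
        "runtimeSessionId": session_id,  # same ID on resume -> same mounted workspace
        "payload": json.dumps({"prompt": prompt}).encode("utf-8"),
    }
```

Invoke with `build_invoke_kwargs(arn, sid, "scaffold the app")` before lunch, then again with the same `sid` and `"run the tests"` after the session has timed out; the second call lands on a fresh microVM that remounts the first call's files.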

Real-world? Coding agents mature here. No more “reinstall everything” loops killing UX. Data agents hoarding CSVs in /mnt/data? Persist. Security? VM isolation holds; storage’s managed, encrypted, yours alone.

How Do You Actually Set Up Bedrock AgentCore Persistence?

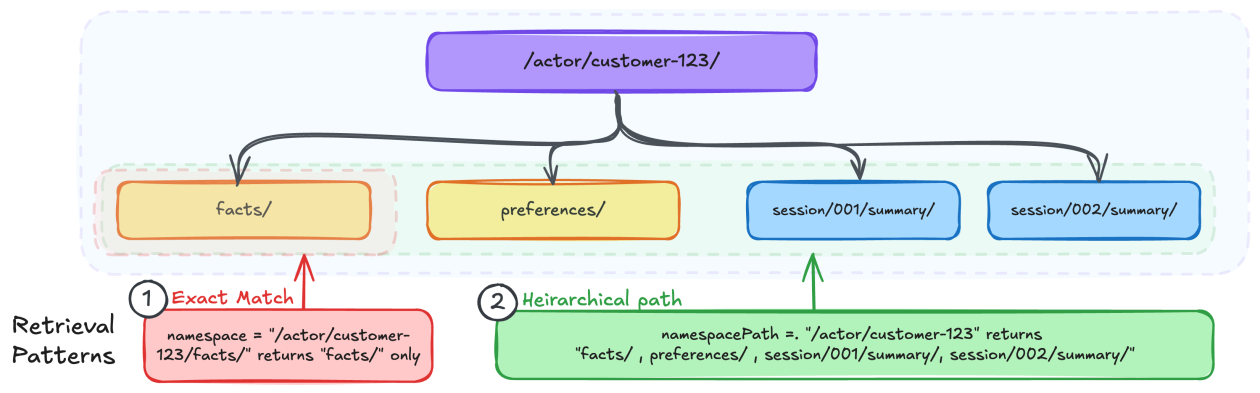

Start by creating the agent runtime with the control plane client. A filesystemConfiguration block is required to enable storage. The mount path must sit under /mnt/. Size? 1-100 GiB, billed on provisioned capacity. Then invoke the agent through the data plane client for chat or commands.
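A small guard that builds the `filesystemConfiguration` value and checks the constraints listed above before the API call. It's a sketch: the 1-100 GiB range and the "under /mnt/" rule are taken from this article, the path check is an approximation of that rule rather than the service's exact validation, and `session_storage_config` is a hypothetical helper name.

```python
from typing import Any, Dict

# Range as stated in this article; verify against the current AgentCore docs
MIN_GIB, MAX_GIB = 1, 100


def session_storage_config(size_gib: int,
                           mount_path: str = "/mnt/workspace") -> Dict[str, Any]:
    """Build filesystemConfiguration for create_agent_runtime, failing fast on
    sizes or mount paths outside the documented constraints."""
    if not (MIN_GIB <= size_gib <= MAX_GIB):
        raise ValueError(f"sizeInGiB must be {MIN_GIB}-{MAX_GIB}, got {size_gib}")
    parts = mount_path.rstrip("/").split("/")
    # Require an absolute path with at least one clean segment below /mnt
    if (len(parts) < 3 or parts[0] != "" or parts[1] != "mnt"
            or "" in parts[2:] or ".." in parts):
        raise ValueError(f"mountPath must be a directory under /mnt/, got {mount_path!r}")
    return {"sessionStorage": {"sizeInGiB": size_gib, "mountPath": mount_path}}
```

Pass the result as `filesystemConfiguration=session_storage_config(10)` so a typo like `/tmp/workspace` fails locally instead of at runtime creation.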

Git ops shine here. The agent clones, commits, and pushes; because state survives, a push still works after resume. No more clean-slate git amnesia.

Limits apply: a maximum number of sessions per runtime, and idle timeouts that are configurable but not infinite. Cost? Per GiB-month of storage plus compute. Worth it? For prod agents, hell yes.
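Since storage is billed per provisioned GiB-month on top of compute, a back-of-the-envelope monthly estimate is a two-term sum. The prices are parameters, not real AWS rates (check the AgentCore pricing page), and folding compute into a single hourly rate is a simplifying assumption.

```python
def estimate_monthly_cost(provisioned_gib: float,
                          storage_price_per_gib_month: float,
                          compute_hours: float,
                          compute_price_per_hour: float) -> float:
    """Rough monthly estimate: provisioned storage plus compute.

    Storage is charged on the provisioned size, not the bytes actually used,
    so a half-empty 10 GiB volume costs the same as a full one.
    """
    storage = provisioned_gib * storage_price_per_gib_month
    compute = compute_hours * compute_price_per_hour
    return round(storage + compute, 2)
```

Because the storage term scales with what you provision, right-sizing `sizeInGiB` is the main lever on the storage side of the bill.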

This isn’t toy AI. It’s infrastructure for agents that act like devs—stateful, shell-savvy, relentless.

**Frequently Asked Questions**

What is Amazon Bedrock AgentCore persistent storage?

A managed directory under /mnt/ that survives microVM stop and resume, letting agents keep files, dependencies, and git history across sessions with no re-downloads.

Does Bedrock AgentCore shell execution replace LLM tools?

For deterministic commands like npm test or git push, yes: direct execution in the VM cuts latency and token use. LLMs are still for reasoning.

How to enable persistent filesystem in Bedrock AgentCore?

Add sessionStorage to filesystemConfiguration in your CreateAgentRuntime call, and set mountPath to a directory under /mnt/, such as /mnt/workspace.